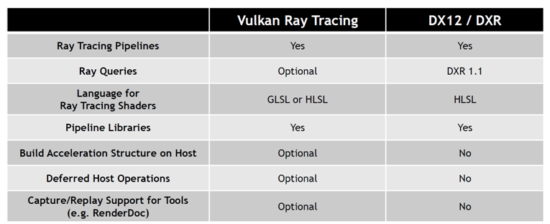

A familiar ray tracing API comes to Vulkan, with some new features compared to DXR.

Microsoft announced the development of a ray tracing extension to DirectX 12 called DXR in March 2018, at the same time that Nvidia introduced its RTX hardware ray tracing architecture. Cards using the RTX architecture shipped in the summer of 2018, when Nvidia introduced its revolutionary Turing series of GPUs with dedicated ray tracing acceleration processors.

However, good as the proprietary DXR API is, it is only available for DX12 running on Windows 10. To become ubiquitous, ray tracing needs to be available through an open-standard API that can ship on any platform, giving developers portability and wider market reach for the first time. Enter Vulkan Ray Tracing.

Generating industry consensus on a ray tracing framework that meets the needs of many platforms and hardware vendors is more complex than designing a proprietary API for a single platform. But that is exactly Khronos’s charter: to provide robust, open, and contemporary APIs under multi-company governance. So it set about the task.

Needless to say, you wouldn’t be reading this if they hadn’t succeeded. Vulkan Ray Tracing launches with a design that both eases porting for developers already using DXR and introduces new functionality to relieve some typical ray tracing bottlenecks, such as building acceleration structures and compiling large numbers of ray tracing shaders, by allowing that work to be offloaded onto multi-core CPUs.

The project got started in early 2018 when the Vulkan Working Group formed the Vulkan Ray Tracing Task Sub Group (TSG in Khronos parlance) to enable a focused design effort. The TSG received multiple design contributions from hardware vendors and listened to requirements from diverse ISVs and IHVs. Realtime techniques for ray tracing are still being actively researched and so this first version of Vulkan Ray Tracing is designed to provide not only a working framework but also an extensible stage for future developments.

One key design goal was to provide a single, coherent cross-platform and multi-vendor framework for ray tracing acceleration that could be easily used together with existing Vulkan functionality. And for this first version, Khronos is primarily aiming to expose the full functionality of modern desktop PC hardware.

Vulkan Ray Tracing consists of a number of Vulkan, SPIR-V, and GLSL extensions, some of which are optional. The primary VK_KHR_ray_tracing extension provides acceleration structure building and management, ray tracing shader stages and pipelines, and ray query intrinsics for all shader stages. VK_KHR_pipeline_library lets an application supply a set of shaders that can be efficiently linked into ray tracing pipelines. VK_KHR_deferred_host_operations enables intensive driver operations, such as ray tracing pipeline compilation or CPU-based acceleration structure construction, to be offloaded to application-managed CPU thread pools.

Vulkan ray tracing shaders are SPIR-V binaries, and SPIR-V has two new extensions: SPV_KHR_ray_tracing adds support for ray tracing shader stages and instructions, and SPV_KHR_ray_query adds support for ray query shader instructions. Developers can generate those binaries from GLSL using two new GLSL extensions, GLSL_EXT_ray_tracing and GLSL_EXT_ray_query, which are supported in the open-source Glslang compiler. Nvidia added SPIR-V support to Microsoft’s open-source DXC HLSL compiler for Nvidia’s precursor ray tracing vendor extension, and support for Vulkan Ray Tracing SPIR-V shaders is on the way. That will let ray tracing shaders authored in HLSL be used with both DXR and Vulkan Ray Tracing with minimal modifications.

Let’s take a look under the covers of these extensions in more detail:

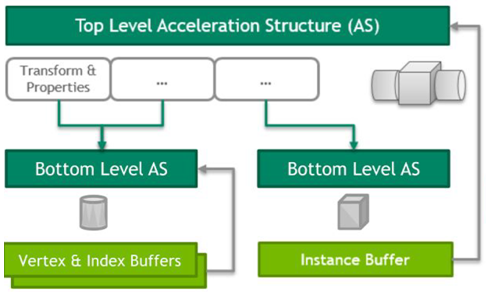

Acceleration structures. To achieve high performance in complex scenes, ray tracing looks for intersections within an optimized data structure built over the scene information, called an acceleration structure (AS). The acceleration structure is divided into a two-level hierarchy, as shown in Figure 1. The lower level, the bottom-level acceleration structure, contains the triangles (vertex buffers) or the axis-aligned bounding boxes (AABBs) of custom geometry that make up the scene. Since each bottom-level acceleration structure may correspond to what would be multiple draw calls in the rasterization pipeline, each bottom-level build can take multiple sets of geometry of a given type. The upper level, the top-level acceleration structure, contains references to a set of bottom-level acceleration structures, each reference including shading and transform information for that reference.
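To make the two-level hierarchy concrete, here is a minimal Python sketch of the data an application hands to each level. All class and field names are hypothetical, and a real implementation turns this input into an opaque, implementation-defined structure. The 3x4 row-major per-instance transform is an assumption, modeled loosely on the affine transform Vulkan instances carry.

```python
from dataclasses import dataclass, field
from typing import List, Tuple, Union

Vec3 = Tuple[float, float, float]
Matrix3x4 = Tuple[Tuple[float, float, float, float], ...]  # row-major affine transform

@dataclass
class TriangleGeometry:
    vertices: List[Vec3]  # three vertices per triangle, like a vertex buffer

@dataclass
class AabbGeometry:
    boxes: List[Tuple[Vec3, Vec3]]  # (min, max) corners of custom primitives

@dataclass
class BottomLevelAS:
    # One build may take several geometries (e.g. one per former draw call),
    # but they must all be of the same type.
    geometries: List[Union[TriangleGeometry, AabbGeometry]]

    def __post_init__(self) -> None:
        if len({type(g) for g in self.geometries}) > 1:
            raise ValueError("a bottom-level build takes one geometry type")

@dataclass
class Instance:
    blas: BottomLevelAS      # reference to a bottom-level structure
    transform: Matrix3x4     # places this reference in the scene
    shading_offset: int = 0  # selects the shading used for this reference

@dataclass
class TopLevelAS:
    instances: List[Instance] = field(default_factory=list)

# One bottom-level structure, referenced twice with different transforms.
triangle = TriangleGeometry([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)])
blas = BottomLevelAS([triangle])
identity = ((1.0, 0.0, 0.0, 0.0), (0.0, 1.0, 0.0, 0.0), (0.0, 0.0, 1.0, 0.0))
shifted = ((1.0, 0.0, 0.0, 5.0), (0.0, 1.0, 0.0, 0.0), (0.0, 0.0, 1.0, 0.0))
tlas = TopLevelAS([Instance(blas, identity, 0), Instance(blas, shifted, 1)])
```

Instancing is the point of the split: the triangle data exists once, while each top-level reference adds only a transform and a shading selector.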

Building either type of acceleration structure results in an opaque, implementation-defined format in memory. The bottom-level acceleration structure is only used by reference from the top-level acceleration structure. The top-level acceleration structure is accessed from the shader as a descriptor binding.

Acceleration structure creation. Like other objects in Vulkan, creating an acceleration structure only defines its “shape”; memory must be allocated and bound to the acceleration structure before further use. There is no support for sparse or dedicated allocations. In addition to returning the memory requirements for the acceleration structure allocation itself, the query returns the sizes of the auxiliary buffers required during the build and update processes.
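The sizes returned by that query feed directly into allocation. The sketch below is a hypothetical planner, not Vulkan API code: the `BuildSizes` fields, the 256-byte alignment, and the choice to share one scratch buffer between builds and updates (safe only if they never run concurrently) are all assumptions.

```python
from dataclasses import dataclass

@dataclass
class BuildSizes:
    # Hypothetical stand-in for the sizes a memory-requirements query reports.
    acceleration_structure: int
    build_scratch: int
    update_scratch: int

def align_up(value: int, alignment: int) -> int:
    """Round value up to a multiple of alignment, which must be a power of two."""
    return (value + alignment - 1) & ~(alignment - 1)

def plan_allocation(sizes: BuildSizes, alignment: int = 256) -> dict:
    # Persistent memory bound to the acceleration structure itself.
    structure = align_up(sizes.acceleration_structure, alignment)
    # Assumption: builds and updates of this structure never overlap, so one
    # scratch buffer sized for the larger requirement can serve both.
    scratch = align_up(max(sizes.build_scratch, sizes.update_scratch), alignment)
    return {"structure": structure, "scratch": scratch, "total": structure + scratch}

plan = plan_allocation(BuildSizes(acceleration_structure=1000,
                                  build_scratch=5000, update_scratch=300))
# plan == {"structure": 1024, "scratch": 5120, "total": 6144}
```

If a structure is never updated, its scratch memory can be released or reused for other builds once the build has completed.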

Host acceleration structure builds. Acceleration structures are very large resources and managing them requires significant processing effort. Scheduling this work on a GPU device alongside other rendering work can be tricky, particularly when host intervention is required. Vulkan provides both host and device variants of acceleration structure operations, allowing applications to better schedule these workloads. Device variants are queued into command buffers and executed on the device timeline, and the host variants are executed directly on the host timeline.

Deferred host operations. Performing acceleration structure builds and updates on the CPU is a workload that is relatively easy to parallelize, and Khronos wanted to be able to take advantage of that in Vulkan. An application can execute independent commands on independent threads, but that approach requires there be enough commands available to fully utilize the machine. It can also lead to imbalanced loads since some commands might take significantly longer than others.

In order to avoid these snags, Khronos added deferred operations to enable intra-command parallelism: spreading work for a single command across multiple CPU cores. A driver-managed thread pool is one way to achieve this but is not in keeping with the low-level explicit philosophy of Vulkan. Also, applications often run their own thread pools, and it is preferable to enable those threads to perform the work so that the application can manage the execution of the driver work together with the rest of its load.
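Intra-command parallelism can be sketched in Python. This is a hypothetical model, loosely inspired by `vkDeferredOperationJoinKHR` but not the Vulkan API: a `DeferredOperation` holds one command’s work as chunks, and any thread from the application’s own pool can call `join()` to help finish it. The real join call reports richer status codes than the boolean used here, and the sum-of-squares chunks are placeholders for pieces of an acceleration structure build.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

class DeferredOperation:
    """Hypothetical model of one command whose work is split into chunks that
    any application thread may help execute."""

    def __init__(self, chunks: List[Callable[[], None]]) -> None:
        self._chunks = list(chunks)
        self._next = 0                        # index of the next unclaimed chunk
        self._remaining = len(self._chunks)   # chunks not yet finished
        self._lock = threading.Lock()
        self._done = threading.Event()
        if not self._chunks:
            self._done.set()

    def join(self) -> bool:
        """Execute chunks until none are left to claim. Returns True if the
        whole operation had completed when this thread stopped."""
        while True:
            with self._lock:
                if self._next == len(self._chunks):
                    return self._done.is_set()
                chunk = self._chunks[self._next]
                self._next += 1
            chunk()                           # run the work outside the lock
            with self._lock:
                self._remaining -= 1
                if self._remaining == 0:
                    self._done.set()

    def wait(self) -> None:
        self._done.wait()

# Usage: the application's own pool lends four threads to a single command.
results = [0] * 8

def make_chunk(i: int) -> Callable[[], None]:
    def run() -> None:  # placeholder for one slice of a build
        results[i] = sum(x * x for x in range(i * 1000, (i + 1) * 1000))
    return run

op = DeferredOperation([make_chunk(i) for i in range(8)])
with ThreadPoolExecutor(max_workers=4) as pool:
    for _ in range(4):
        pool.submit(op.join)
op.wait()
```

Because the threads belong to the application, it decides how many to lend and when, which is the point of avoiding a driver-managed pool.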

Simplified compaction. Compaction is a very important optimization for reducing the memory used in ray tracing acceleration structures. Acceleration structure construction with compaction traditionally looks like this:

1. Determine the worst-case memory requirement for an acceleration structure
2. Allocate device memory
3. Build the acceleration structure
4. Determine the compacted size
5. Synchronize with the GPU
6. Allocate device memory
7. Perform a compacting copy

To allocate memory for a compacted acceleration structure, an application needs to know its size. To determine the size, it needs to submit a command buffer for steps 3 and 4 and wait for it to finish.

This detail causes alarm for experienced engine developers. If done naively, this sort of host/device handshaking can seriously degrade performance. If done well, it is a significant source of complexity and can cause spikes in an application’s device memory use, because the uncompacted acceleration structures need to reside in device memory for at least one frame.

Host builds allow Vulkan to remove both of those drawbacks. With host builds, compaction can be implemented by performing the initial build on the host and then performing a compacting copy from host memory to device memory. That copy still requires monitoring so the app can recover the host memory, but it is a more familiar pattern, one that many graphics engines already implement for uploading texture and geometry data to the device.
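A small accounting model makes the memory argument concrete. The sketch below is hypothetical: `Heap` is a toy allocator, the sizes are made-up units, and scratch buffers are ignored. It compares peak device memory for the traditional device-side flow, in which the uncompacted structure and its compacted copy coexist on the device, with a host build, in which only the compacted result is ever allocated on the device.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass
class Heap:
    """Toy allocator that records current and peak usage (hypothetical)."""
    in_use: int = 0
    peak: int = 0

    def alloc(self, size: int) -> None:
        self.in_use += size
        self.peak = max(self.peak, self.in_use)

    def free(self, size: int) -> None:
        self.in_use -= size

def device_build_then_compact(worst_case: int, compacted: int) -> Heap:
    device = Heap()
    device.alloc(worst_case)  # worst-case allocation for the initial build
    # ... build, query the compacted size, and wait for the GPU ...
    device.alloc(compacted)   # second allocation, sized from the query
    # ... compacting copy on the device ...
    device.free(worst_case)   # original freed only after the copy completes
    return device

def host_build_then_compact(worst_case: int, compacted: int) -> Tuple[Heap, Heap]:
    host, device = Heap(), Heap()
    host.alloc(worst_case)    # initial build runs in host memory
    device.alloc(compacted)   # in this model the size is queried on the host
    # ... compacting copy from host memory to device memory ...
    host.free(worst_case)     # recovered once the copy is known to be finished
    return host, device

# Made-up sizes: the uncompacted structure is 2.5x its compacted form.
device_only = device_build_then_compact(worst_case=100, compacted=40)
host, device = host_build_then_compact(worst_case=100, compacted=40)
```

In this model the device-side flow peaks at 140 units of device memory, while the host flow never exceeds the 40-unit compacted size on the device.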

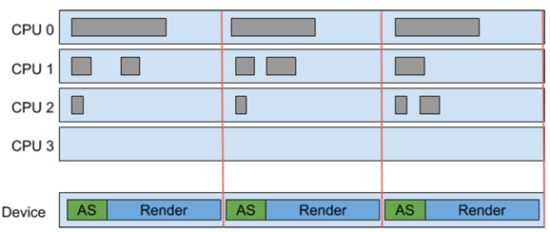

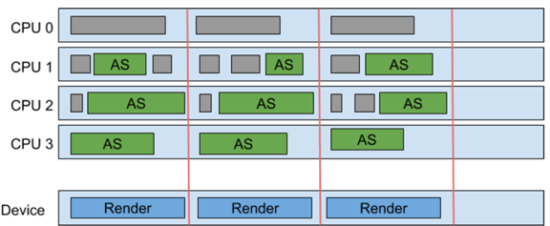

Load balancing. Host acceleration structure builds combined with deferred host operations provide opportunities to improve performance by leveraging otherwise idle CPUs.

Khronos offers a hypothetical profile from a game:

Figure 2: Building an acceleration structure and rendering on GPU.

In Figure 2, acceleration structure construction and updates are implemented on the device, but the application has considerable CPU time to spare. Moving these operations to the host allows the CPU to execute the next frame’s acceleration structure work in parallel with the previous frame’s rendering. This can improve throughput, even if the CPU requires more wall-clock time to perform the same task, as shown in Figure 3.
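A back-of-the-envelope model shows why moving the work can help even when the CPU is slower at it. All timings here are invented for illustration. The model assumes steady-state pipelining, which adds one frame of latency to acceleration structure updates, and it ignores transfer costs.

```python
def serialized_frame_ms(as_build_gpu_ms: float, render_gpu_ms: float) -> float:
    # Device builds share the GPU timeline with rendering, so the two add up.
    return as_build_gpu_ms + render_gpu_ms

def pipelined_frame_ms(as_build_cpu_ms: float, other_cpu_ms: float,
                       render_gpu_ms: float) -> float:
    # Host builds for frame N+1 overlap GPU rendering of frame N, so in steady
    # state the frame time is set by whichever timeline is busier.
    return max(as_build_cpu_ms + other_cpu_ms, render_gpu_ms)

# Hypothetical profile: the CPU takes twice as long as the GPU to build,
# yet the frame gets shorter because the CPU had time to spare.
gpu_only = serialized_frame_ms(as_build_gpu_ms=3.0, render_gpu_ms=12.0)   # 15.0
overlapped = pipelined_frame_ms(as_build_cpu_ms=6.0, other_cpu_ms=4.0,
                                render_gpu_ms=12.0)                       # 12.0
```

The win disappears once the CPU becomes the busier timeline: with a 10 ms host build, the same profile gives 14 ms.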

Ray traversal. Tracing a ray against an acceleration structure in Vulkan goes through several logical phases, giving significant flexibility in how rays are traced. Intersection candidates are initially found based purely on their geometric properties, by testing whether the ray intersects the geometric objects described in the acceleration structure.
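For the AABB primitives mentioned earlier, a purely geometric candidate test can be as simple as the classic slab test, sketched here in Python. This textbook technique is chosen for illustration only; how a given implementation tests rays against its acceleration structure is implementation-defined. The `t_min`/`t_max` interval parameters are assumptions of this sketch.

```python
import math
from typing import Optional, Sequence

def ray_aabb(origin: Sequence[float], direction: Sequence[float],
             box_min: Sequence[float], box_max: Sequence[float],
             t_min: float = 0.0, t_max: float = math.inf) -> Optional[float]:
    """Slab test: return the entry distance t if the ray hits the box within
    [t_min, t_max], otherwise None."""
    for axis in range(3):
        o, d = origin[axis], direction[axis]
        lo, hi = box_min[axis], box_max[axis]
        if d == 0.0:
            # Ray parallel to this pair of planes: it must start between them.
            if o < lo or o > hi:
                return None
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        # Shrink the interval to where the ray is inside every slab so far.
        t_min, t_max = max(t_min, t0), min(t_max, t1)
        if t_min > t_max:
            return None
    return t_min

hit_t = ray_aabb((0.0, 0.0, -5.0), (0.0, 0.0, 1.0),
                 (-1.0, -1.0, -1.0), (1.0, 1.0, 1.0))  # 4.0
```

A hit here only makes the box a candidate; whether it becomes a reported intersection is decided in the later phases described next.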

Tracing rays and getting traversal results can be done via one of two mechanisms in Vulkan: ray tracing pipelines and ray queries.

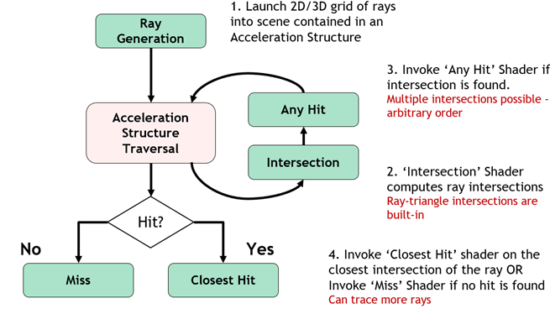

Ray tracing pipelines. Applications can associate specific shaders with objects in a scene, defining things like material parameters and intersection logic for those objects. As traversal progresses, when a ray intersects an object, the associated shaders are automatically executed by the implementation (see Figure 4). A ray tracing pipeline is similar to a graphics pipeline in Vulkan, but with added functionality to manage significantly more shaders and to reference specific shaders in memory.
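How a hit finds its shaders in memory is worth spelling out. In Vulkan, as in DXR, shaders are referenced through a shader binding table, a buffer of shader records, and the record for a hit is located with simple index arithmetic: the instance’s record offset, plus the offset passed with the trace call, plus the geometry index times the stride passed with the trace call. The Python sketch below reproduces that arithmetic as we read the Vulkan specification; the function and parameter names are illustrative, not API names.

```python
def hit_record_address(table_base: int, record_stride_bytes: int,
                       instance_record_offset: int, trace_record_offset: int,
                       trace_record_stride: int, geometry_index: int) -> int:
    """Address of the hit-group record used for one ray/geometry hit."""
    index = (instance_record_offset                   # set per top-level instance
             + trace_record_offset                     # chosen per trace, e.g. ray type
             + geometry_index * trace_record_stride)   # geometry in the bottom level
    return table_base + record_stride_bytes * index

def miss_record_address(table_base: int, record_stride_bytes: int,
                        miss_index: int) -> int:
    """Address of the miss record selected by the trace call."""
    return table_base + record_stride_bytes * miss_index

# Two ray types (primary = 0, shadow = 1), so each geometry owns two records.
shadow_hit = hit_record_address(0x1000, 64, instance_record_offset=2,
                                trace_record_offset=1, trace_record_stride=2,
                                geometry_index=1)   # 0x1140
```

Setting the stride to the number of ray types is a common layout choice: each geometry then owns one record per ray type, and the per-trace offset picks among them.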

Ray tracing pipeline work is launched with a bound ray tracing pipeline. During traversal, if required by the trace and the acceleration structure, application shader code in intersection and any-hit shaders can control how traversal proceeds. After traversal completes, either a miss shader or a closest-hit shader is invoked.

Callable shaders may be invoked using the same shader selection mechanism, but outside of the direct traversal context. The different shader stages communicate parameters and results using ray payload structures, shared between all traversal stages, and ray attribute structures, passed from the traversal control shaders.
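The control flow of a single trace can be modeled in a few lines. This Python sketch is hypothetical and simplified: candidates stand in for hits reported by built-in triangle tests or intersection shaders, only non-opaque hits consult the any-hit callback, ray flags such as terminate-on-first-hit are not modeled, and the payload is a plain dict.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional

@dataclass
class Candidate:
    t: float             # distance along the ray
    geometry_id: int
    opaque: bool = True

def trace_ray(candidates: Iterable[Candidate],
              any_hit: Callable[[Candidate, dict], bool],
              closest_hit: Callable[[Candidate, dict], None],
              miss: Callable[[dict], None],
              payload: dict,
              t_max: float = float("inf")) -> dict:
    closest: Optional[Candidate] = None
    for cand in candidates:              # visiting order is implementation-defined
        if cand.t >= t_max:
            continue                      # farther than the closest accepted hit
        if not cand.opaque and not any_hit(cand, payload):
            continue                      # any-hit shader ignored this candidate
        closest, t_max = cand, cand.t     # accept it and shorten the ray
    if closest is None:
        miss(payload)
    else:
        closest_hit(closest, payload)
    return payload

# Usage: geometry 2 is alpha-tested, and its any-hit shader rejects the hit.
def reject_cutout(cand: Candidate, payload: dict) -> bool:
    return cand.geometry_id != 2

def record_hit(cand: Candidate, payload: dict) -> None:
    payload["hit"] = cand.geometry_id

def record_miss(payload: dict) -> None:
    payload["hit"] = None

result = trace_ray([Candidate(5.0, 1, opaque=False), Candidate(3.0, 2, opaque=False),
                    Candidate(7.0, 3)], reject_cutout, record_hit, record_miss, {})
```

Here geometry 1 is accepted at t = 5, geometry 2 is closer but rejected by the any-hit callback, and geometry 3 lies beyond the shortened ray, so the closest-hit callback records geometry 1.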

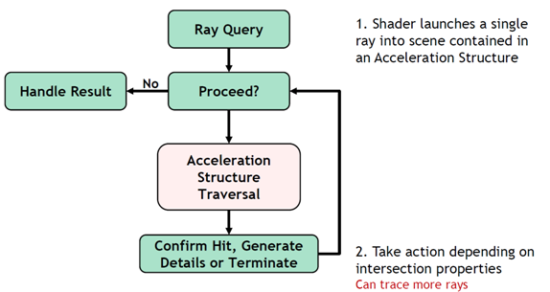

Ray queries. Ray queries can be used to perform ray traversal and get a result back in any shader stage. Other than requiring acceleration structures, ray queries are performed using only a set of new shader instructions.

Ray queries are initialized with an acceleration structure to query against, ray flags determining properties of the traversal, a cull mask, and a geometric description of the ray being traced. Properties of potential and committed intersections, and of the ray query itself, are accessible to the shader during traversal, enabling complex decision making based on what geometry is being intersected, how it is being intersected, and where.
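Ray queries invert that control: the shader itself drives traversal in a loop. In GLSL the loop is built from intrinsics such as `rayQueryInitializeEXT`, `rayQueryProceedEXT`, and `rayQueryConfirmIntersectionEXT`. The Python class below is only a hypothetical stand-in for that pattern. Candidates are given as `(t, instance_mask, opaque, label)` tuples, opaque hits are committed automatically, and the visiting order is simply list order.

```python
from typing import Callable, List, Optional, Tuple

Hit = Tuple[float, int, bool, str]  # (t, instance_mask, opaque, label)

class RayQuery:
    """Hypothetical model of a ray query: the calling shader drives traversal
    with proceed() and decides which non-opaque candidates to confirm."""

    def __init__(self, hits: List[Hit], cull_mask: int = 0xFF,
                 t_min: float = 0.0, t_max: float = float("inf")) -> None:
        # Instances whose mask shares no bits with the cull mask are skipped.
        self._pending = [h for h in hits if h[1] & cull_mask]
        self.t_min, self.t_max = t_min, t_max
        self.candidate: Optional[Hit] = None
        self.committed: Optional[Hit] = None

    def proceed(self) -> bool:
        """Advance to the next candidate needing a decision; False when done."""
        while self._pending:
            hit = self._pending.pop(0)
            t, _, opaque, _ = hit
            if not self.t_min <= t < self.t_max:
                continue
            if opaque:
                self._commit(hit)  # opaque hits commit without asking the shader
                continue
            self.candidate = hit
            return True
        self.candidate = None
        return False

    def confirm(self) -> None:
        """Commit the current candidate (the shader accepted it)."""
        if self.candidate is not None:
            self._commit(self.candidate)

    def _commit(self, hit: Hit) -> None:
        self.committed = hit
        self.t_max = hit[0]        # later candidates must be closer

def closest_visible(hits: List[Hit], cull_mask: int,
                    is_visible: Callable[[Hit], bool]) -> Optional[Hit]:
    # The shader-side loop: proceed, inspect the candidate, optionally confirm.
    query = RayQuery(hits, cull_mask)
    while query.proceed():
        if is_visible(query.candidate):
            query.confirm()
    return query.committed
```

Because the decision logic is ordinary code in the calling shader, ray queries fit naturally into stages such as fragment or compute shaders that have no ray tracing pipeline behind them.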

Industry support. As you might expect, there is a lot of industry support for this new API, and it can be shipped on GPUs using either specialized ray tracing hardware or standard GPU compute. The companies quoted in the Khronos press release are an interesting mix of hardware and software companies, including AMD, EA, Epic Games, Imagination Technologies, Intel, Nvidia, and OTOY.

One of the first games to show realtime ray tracing was EA’s Battlefield V by Swedish game developer Dice. Dice began working with Microsoft in early 2017, and as a result of that early work it was able to come out at the same time as Nvidia’s introduction of the Turing GPU. Game developer 4A Games also released its acclaimed Metro Exodus, which uses ray tracing throughout much of the game. Nvidia’s path tracing upgrade to Quake II and Bethesda’s Wolfenstein: Youngblood have both shipped using Nvidia’s vendor extension for ray tracing in Vulkan, which ended up being the precursor to much of what is now in the multi-vendor Vulkan Ray Tracing open standard.

Because Vulkan Ray Tracing and DXR use similar acceleration structure and ray tracing shader architectures, ray tracing functionality can be ported between them in a straightforward way, including re-use of ray tracing shaders written in HLSL.

What do we think?

This new API will influence the existing ecosystem in a positive way, but not immediately. UL introduced its ray tracing benchmark in September 2018, and we expect it to update the benchmark to use the Khronos API. UL/Futuremark started development in November 2017, about 6 months before its GDC demo in 2018. There had been talks about doing RT work even before that, but interest rose when Microsoft told them that there would be a DirectX API for it, making ray tracing common to all vendors rather than a vendor-specific thing.

Crytek also introduced a ray tracing benchmark, but we don’t expect them to be in a big rush to use this new API. Crytek is a member of the Vulkan Advisory Panel—so they have input to the specification process. In any case, benchmarks are important—but not as important to adoption as applications.

Timing-wise, Khronos still has one step to go before they can get widespread adoption—they have to finalize the specifications—and for that, they need developer feedback—so all you developers out there, let Khronos know what you think, ASAP!

One critical use case for the Vulkan ray tracing API is realtime ray tracing in games—typically using a hybrid combination of a rasterized scene with some ray traced aspects. Vulkan Ray Tracing enables that.

In addition, there are hybrid uses such as rasterization post-processing after tracing primary rays, using ray tracing for shadow map generation, and dynamic light baking performed asynchronously with other system tasks.

However, for pure post-processing operations that are limited to screen-space information and don’t use hardware acceleration, the API probably won’t have much impact. For example, the impressive post-processing shading and reflections work being done by Pascal Gilcher, the author of widely used ReShade shaders, will not benefit from the API.

You don’t need ReShade-style post-processing for a ray traced application, and light map pre-baking isn’t needed if lighting is computed accurately through ray tracing. Hybrid solutions will be used, but the long-term idea is to make these ‘kludges’ unnecessary.

ReShade is very cool, but it applies (very smart) screen-space post-processing using a Z-buffer. It is just an approximation to improve visual results if you haven’t used ray tracing to calculate accurate ray intersections with the scene geometry in the first place. (Gilcher does confirm that ReShade’s screen-space ray tracing can’t use ray tracing hardware acceleration, as it does not process geometry.)

The big picture is that screen-space ray tracing may be a great interim, approximate solution for many games and applications, especially during this transition period. But as true geometry-based ray tracing (as in Vulkan Ray Tracing) becomes more pervasive, the need for interim screen-based solutions will decrease.

Also, there are two reasons why games are not as graphically impressive as prerendered movies. The first is lighting, and the second is resolution.

Lighting is affected by material and everything else in the scene due to the light bouncing and being influenced (colored) by the things it encounters. Global illumination is used to manage such reflections and shadows.

Therefore, RTX is not a full ray tracing solution, because it is not doing full global illumination, only reflections and shadows. Also, a typical path-tracing engine has perfect anti-aliasing. As far as I’ve been able to find out, the main camera rays aren’t ray traced; only secondary rays are.

RTX, DXR, and Vulkan Ray Tracing can be used for path tracing—in fact, the Nvidia Quake II demo with Vulkan was using path tracing.

Path tracing uses the same atomic operation as ray tracing—firing a ray and computing intersections. Path tracing simply fires a bunch more rays with multiple bounces to (for example) find contributions to scene lighting from all surfaces for global illumination.

Pragmatically, it is not possible to send infinite bounces into a scene, so path tracing applications use stochastic sampling to generate sufficient image quality at a reasonable number of rays to process.
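Stochastic sampling is Monte Carlo integration. As a minimal illustration (not a path tracer), the Python sketch below estimates the integral of cos(theta) over the hemisphere, the geometric term that appears in diffuse lighting, whose exact value is pi. It draws uniformly distributed directions and divides each sample by its probability density of 1/(2 pi). A path tracer applies the same kind of estimator at every bounce, and the error shrinks roughly as 1/sqrt(N) with N samples, which is why more rays buy more quality.

```python
import math
import random

def estimate_cosine_integral(samples: int, rng: random.Random) -> float:
    """Monte Carlo estimate of the integral of cos(theta) over the hemisphere
    (exact value: pi) using uniform hemisphere sampling."""
    pdf = 1.0 / (2.0 * math.pi)      # uniform density over 2*pi steradians
    total = 0.0
    for _ in range(samples):
        # For directions uniform on the hemisphere, cos(theta) is uniform in [0, 1).
        cos_theta = rng.random()
        total += cos_theta / pdf     # weight each sample by 1 / pdf
    return total / samples

estimate = estimate_cosine_integral(100_000, random.Random(42))  # close to pi
```

Importance sampling, for example drawing directions with density proportional to cos(theta), would reduce the noise for the same number of rays; uniform sampling is used here only because it is the simplest correct estimator.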

Vulkan Ray Tracing can be used to accelerate the stochastic, multi-bounce ray calculations in path tracing—and access to hardware acceleration enables more rays to be fired for higher quality and performance—making it more accessible to realtime rendering applications such as games.

With regard to resolution, while rasterization doesn’t scale well with increased polygon counts, ray tracing is not as affected. That means ray tracing will allow higher polygon counts which will improve realism tremendously. However, path-tracing complexity increases linearly with the number of pixels. So, ray tracing will not scale as well from HD to 4K.

Ray tracing and path tracing are affected by geometric complexity as the setup and intersection calculation loads increase with denser models—they scale better with larger geometry than rasterization.

Ray tracing, path tracing, and rasterization loads all increase with screen resolution, and ray/path tracing is more linearly affected. But path tracing’s stochastic sampling, and machine learning-based filtering of the stochastic results, can alleviate that extra load (e.g., Nvidia DLSS).
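The scaling claims above reduce to simple arithmetic. This Python sketch assumes that ray count is proportional to pixels × samples per pixel × bounces, and it uses the depth of a balanced binary BVH, ceil(log2 N), as a rough proxy for per-ray traversal cost versus triangle count. Both are simplifications for illustration, not measurements.

```python
import math

RESOLUTIONS = {"1080p": (1920, 1080), "1440p": (2560, 1440), "4K": (3840, 2160)}

def rays_per_frame(resolution: str, samples_per_pixel: int = 1, bounces: int = 1) -> int:
    # Assumed model: cost grows linearly with pixels, samples, and path length.
    width, height = RESOLUTIONS[resolution]
    return width * height * samples_per_pixel * bounces

def bvh_depth(triangles: int) -> int:
    # Levels in a balanced binary BVH: a rough proxy for per-ray traversal work.
    return math.ceil(math.log2(triangles))

hd_to_4k = rays_per_frame("4K") / rays_per_frame("1080p")      # 4.0
upscaled = rays_per_frame("1440p") / rays_per_frame("4K")      # 4/9, about 0.444
depth_growth = bvh_depth(4_000_000) - bvh_depth(1_000_000)     # 2 extra levels
```

Under this model, going from HD to 4K quadruples the ray count, while quadrupling the triangle count adds only about two levels of traversal. Rendering at 1440p and upscaling to 4K traces fewer than half the rays.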

The exciting big jump in realism from ray/path tracing is the accurate material/lighting calculations that they enable—and providing hardware acceleration in an API such as Vulkan is making this technology more accessible to realtime and ProViz applications alike.

However, the restrictions of an RT implementation, whether in the hardware acceleration or in the application, are not influenced by the API. The hardware architecture and the API used to access it have to be in alignment, even while enabling healthy competition in implementation details.