Cutting-edge research replaced movie magic as the largest draw. JPR’s Kathleen Maher reports from Hong Kong.

By Kathleen Maher

Attendance at Siggraph Asia has been growing, not by leaps and bounds but by nice, reasonable increments. There is still a paucity of big booths but a plethora of eager students attending the papers, and that might continue to be the role of the conference: an incubator for new ideas and new development. After all, the main Siggraph conference held every summer in the U.S. tends to be dominated by the movie industry, maybe too much so.

At Siggraph Asia the interest is in robots, content capture, 3D output, stereographics, and animation. Appropriately, Microsoft Principal Researcher Bill Buxton gave the keynote. Buxton’s ideas include continuous work on interfaces and how we can make the mechanics of content creation play less of a role while increasing the role of the imagination and creativity. The time has come with the increase in touch technologies and ever-improving interfaces—but oh Lord, isn’t there a long way to go on that front?

One of the papers getting a lot of notice came from the University of Illinois at Urbana-Champaign. Kevin Karsch, Varsha Hedau, David Forsyth, and Derek Hoiem have developed imaging software that enables objects to be inserted into an image. The result, they told the audience, is undetectable. The technology is able to recreate the lighting in the host photograph. The team says their results have tricked experts who specialize in detecting doctored photos. Presenter Karsch told the audience that the technology his team has developed recreates 3D geometry from a 2D picture. The software then uses that information to correctly place objects with the right lighting. To build the required information, the program asks the user to select light sources in the picture. The 3D algorithm then goes to work to create a 3D environment and add appropriate shadows and highlights before converting the scene back to 2D.

The robots of Asia made several appearances. Dzmitry Tsetserukou of Toyohashi University of Technology presented his telepresence robot. The robot is remote-controlled by a user wearing 3D glasses and a sensor belt around the waist. The user steers the robot by moving his or her trunk: bending forward, turning, etc. In return, the user sees what the robot sees, and sensors in the belt buzz when objects are nearby. The study is still in its early stages, but Tsetserukou is investigating ways for robots to share more about their environment beyond the visible.

Jonas Pfeil of the Technical University Berlin showed up with his spherical, multi-sensor ball camera designed to be tossed in the air to collect visual information about the surrounding area. The Ball Camera has 36 mobile-phone-quality sensors, which fire simultaneously. They are triggered by an accelerometer that calculates when the ball will reach its highest point. Pfeil developed software that enables the resulting images to be stitched together later on the computer. Pfeil says the advantage of the ball camera compared to similar panorama cameras such as the Google Street View camera is that the ball camera can capture the photographer on the ground and the ground itself. Also, because the sensors fire simultaneously, it creates a consistent image that’s not confused by moving elements such as people, cars, windblown waves of corn, etc. Pfeil’s team includes Kristian Hildebrand, Carsten Gremzow, Bernd Bickel, and Marc Alexa. Pfeil is hoping to commercialize the product as a low-cost toy that will sell for less than a hundred euros.
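How an accelerometer could predict the top of the throw can be sketched with basic projectile physics. The following is only an illustration under stated assumptions, not Pfeil’s actual implementation: it assumes the launch velocity is obtained by summing the net upward acceleration measured during the throw, and that air resistance is ignored. The function names and the sampling scheme are hypothetical.

```python
G = 9.81  # gravitational acceleration in m/s^2


def launch_velocity(accel_samples, dt):
    """Estimate upward launch velocity (m/s).

    accel_samples: net upward acceleration readings in m/s^2 taken
    during the throw, with gravity already subtracted (an assumption).
    dt: time between samples in seconds.
    """
    # Simple rectangular integration of acceleration over time.
    return sum(a * dt for a in accel_samples)


def time_to_apex(v0, g=G):
    """Seconds from release until the ball stops rising (drag ignored)."""
    if v0 <= 0:
        return 0.0  # the ball was not thrown upward
    return v0 / g


def apex_height(v0, g=G):
    """Height gained above the release point in meters (drag ignored)."""
    return v0 * v0 / (2 * g)


if __name__ == "__main__":
    # Hypothetical throw: 0.1 s of hand acceleration sampled at 100 Hz.
    samples = [49.05] * 10
    v0 = launch_velocity(samples, dt=0.01)
    print(f"launch velocity: {v0:.3f} m/s")
    print(f"fire cameras after: {time_to_apex(v0):.3f} s")
    print(f"height gained: {apex_height(v0):.3f} m")
```

Firing at the apex makes sense because the ball is momentarily at its slowest there, which should reduce motion blur.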

The movie industry did make an appearance, of course, and there were recruiters on hand at the conference. Special effects house Imagineer of Guildford, UK, showed off its post tools, Mocha Planar Tracking. Sold as stand-alone tools or plug-ins for After Effects and Final Cut Pro, Mocha is a roto product that enables artists to track shots to a degree better than point trackers, says the company. Examples include shots with a lot of noise or motion blur, shots that include obscured points, and shots that go off screen.

Emerging technologies

At Siggraph, the biggest buzz on the show floor is usually generated by the Emerging Technologies section. It is often relegated to the inner bowels of the expansive LA Convention Center when the show is in LA. This year in Hong Kong, the energy came from the back of the show room, where there was a steady stream of visitors back and forth between the show floor and the back galleries.

Many ingenious ideas and fresh new toys were on display this year, but here are a few highlights:

Marco Marchesi from the University of Bologna was displaying his doctoral project, called the Mobie Project. Marco has built upon NeuroSky’s MindWave headset to come up with a new way to play video games and watch movies. Here is how it works: a player is seated and the MindWave is attached, then a “benchmark” of the player’s brainwaves is recorded and registered in Mobie. From there the game or movie begins. As the brainwaves of the subject are recorded, they are put through the Mobie algorithms and, depending on what the person is feeling and how he or she is reacting, Mobie takes the subject through preloaded sequences of actions. If a player is feeling stressed, a certain story will play out; if relaxed or excited, another. At first the video sequences are chosen unconsciously; however, with some training and a little help from The Force, the player can actively manipulate and affect the plot.

Mobie can track the user’s account and keep a memory of past calibrations and chosen actions, thereby enhancing the user’s experience the next time around. It is very peculiar to be the subject at the public booth with your brainwaves exposed for all to see. For now they are displayed only as red and yellow bars, which represent your attention level and your meditation level respectively, but somehow you still feel a bit exposed. I had no chance at actually manipulating the plot. Marco says it can take quite a bit of training, but you could see how it could be very entertaining. You can read more about the Mobie project and check in with Marco Marchesi at www.mobieproject.org

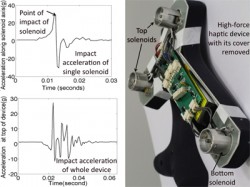

New movement in the world of virtual tennis! It has always seemed that the world of virtual tennis is far more popular than actually playing tennis. Virtua Tennis, Top Spin, Kinect tennis, and of course the all-time great Wii tennis have always generated a disproportionate amount of interest compared to the real thing. We are not going to buck the trend here. Our friends at A*Star down in Singapore have come up with a 60-g Haptic Renderer that, once incorporated into the handle of a racquet, can simulate the feeling and reverb of hitting an actual ball. And not just a soft, low impact but the feeling of really ripping one cross-court with top spin.

Louis Fong and his team have placed multiple solenoids in the top and bottom of their haptic prototype and, by manipulating the charge in the handle, can generate a true feeling of impact. Fong is able to control the waveform of the accelerations with a frequency response between 40 Hz and 80 Hz. The fiberglass-based vibrating element is designed so that its natural frequency mimics that of a racquet. Holding the racquet and swinging it through impact gave an astonishing feeling; it was about as true to life as a simulation could get in a kinetic sense. Louis and A*Star seemed more than happy to have visitors down to their labs in Singapore, so if you get a chance you should swing on by and try out their haptic renderer.

There were all sorts of other great demonstrations. Inria was there showing off its Joyman human-scale joystick. Joyman uses the sense of balance as a form of locomotion through a virtual world. The user stands on what amounts to a small trampoline with the help of a few balancing bars and moves through a world by doing nothing but leaning to and fro, which gives one the sense of traveling around on a Segway. Joyman has teamed up with the European company Immersion for further development.

JPR Siggraph Asia luncheon

Jon Peddie Research launched its inaugural and now annual Siggraph press luncheon at Siggraph Asia in Hong Kong last week, and by all reports it was well received and a success. The topic was Making it Real, and on hand to discuss the issues were Justin Boitano from Nvidia, David Forrester of Lightworks, Geomagic’s Ping Fu, and Rob Hoffmann from Autodesk. The discussion ranged across the new ways to work with a model and, as you might suspect, one size doesn’t fit all.

This year 3D capture is happening in a variety of ways including photogrammetry, scanning, and good old-fashioned 3D modeling. Also, the models are getting plenty of work. They’re increasingly being used in advertising, and they’re integral to movie making and game development. Jon Peddie said that no one photographs cars anymore. David Forrester of Lightworks agreed. Making it real isn’t just about movies; it includes advertising, games, and TV. They all have their own issues and requirements, and different genres within them have different requirements as well.

With such diversity of model requirements revealed, the question arose of where the artists, engineers, and programmers will come from to fill the needs of the various industries. It turns out that’s not as great a problem as it might seem, at least not for art. The art schools and colleges are producing tens of thousands of graduates every year now with training in the modern programs and techniques for such work.

The other aspect that quickly came into the discussion was the shortened time frame studios have to get the project out. That’s being helped with lower costs for hardware and software (the democratization of CG), and the geographic dispersion and collaboration that’s been enabled by the now ubiquitous cloud (seems you can’t have any discussion about computers now without invoking the “cloud”).

But there’s good news and bad news in the movie industry. Financing for movies has dried up. Movies have turned into less-than-great investments. In an article published by CNBC, investor Clark Hallren of Clear Scope Partners estimates that 80% of transactions disappointed investors. That means filmmakers have to do more with less. They are relying on digital effects and fixing it in post more than ever.

The situation is not expected to get better. Rather, the film industry is changing forever. Considering that game development has also had its economic challenges, we might be seeing the dominoes start to fall on traditional production methods. Like we said, good and bad news.

According to the panelists there has been a huge increase in interest in content creation on mobile, 3D capture techniques, 3D output, and an increased role for rendering in all fields.

A unique identity

Siggraph Asia is established. It has its own character and feel. The days of big, giant tradeshows seem to be over, but Siggraph remains an important teaching forum and a site for the exchange of new ideas and techniques. The show floor has been steadily growing for Siggraph Asia, and we find it refreshing that most of the booths are for smaller companies from Asia and not the mega-world powers. As a result, the show is a better resource for finding new and interesting companies and products.