Knowing how you stack up against the competition is critical.

Last December, we told you about Nebula, a ray tracing startup in Montreal, and its founder, Yann Clotioloman Yéo. The company has developed a physically based, unbiased, stand-alone renderer, written in C++, with a realtime DirectX 12 preview. It runs on 64-bit Windows 10 and requires a CPU with SSE4 support. The program uses AMD Radeon Rays and, since version 2.0, Intel’s Embree.

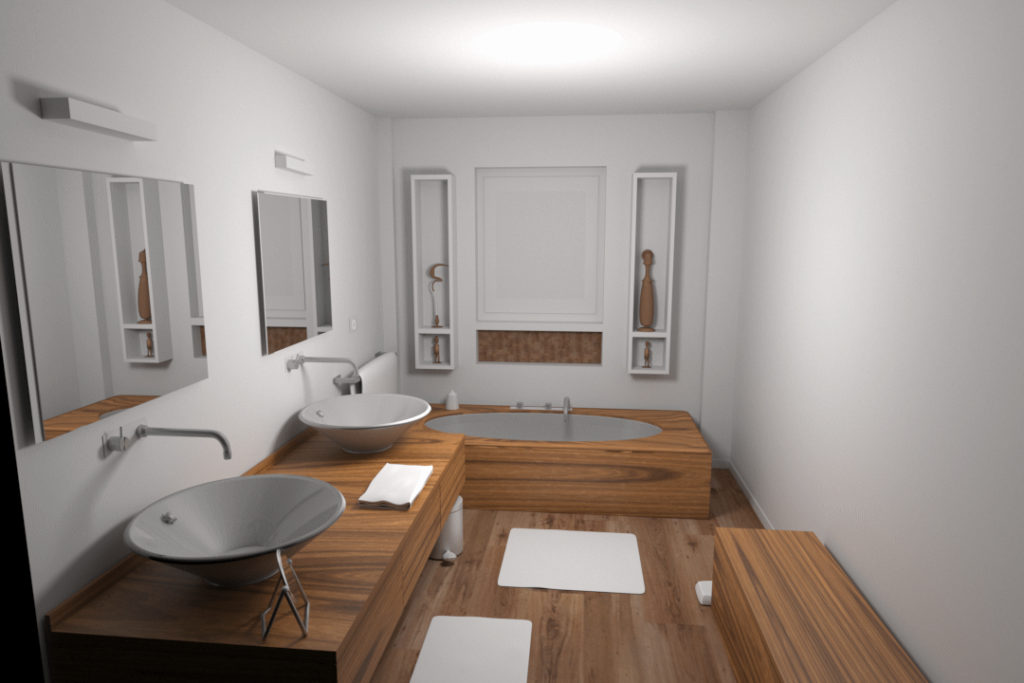

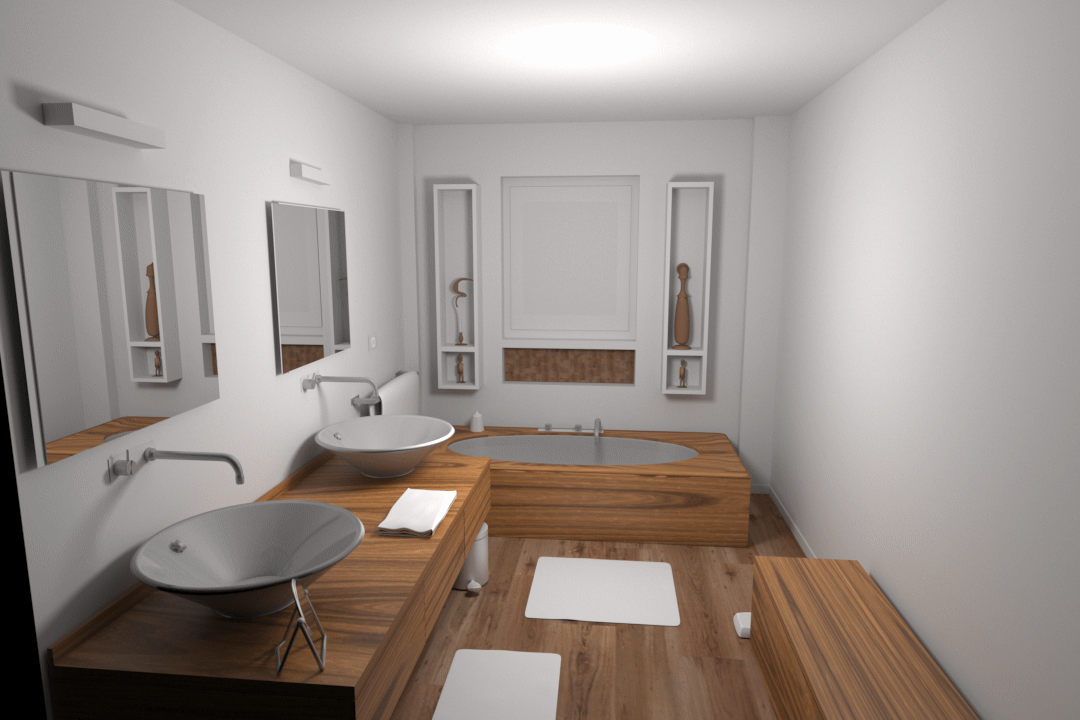

While preparing the release of the latest version of Nebula Render, the company built a benchmark to see how its software performed against competitors. Nebula made a test scene using a bathroom model by artist Nacimus (see above). The scene features glossy reflections, mirror reflections, and soft shadows from a single spherical light.

Nebula has two rendering modes: CPU and CPU + GPU. For these tests, Nebula evaluated both CPU and GPU solutions. GPUs are generally faster than CPUs on simple scenes, while the opposite may be true for complex scenes. The test model is of average complexity (986K polygons), so both types of hardware can perform well. Nebula chose to run its own renderer in CPU mode because that was slightly faster on the test machine.

Only unbiased solutions were considered. Although the biased solutions the developers tried were very fast (realtime), they felt the quality differences compared to pure ray tracers were too noticeable.

Nebula ran two test scenarios, with render times of 4 and 20 minutes.

They rendered the scene at 1080 × 720. If a program did not support that resolution, perhaps because of licensing restrictions, they scaled the render time linearly to the resolution available.
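As a rough illustration of that adjustment, the sketch below scales a time budget in proportion to pixel count. The article does not give Nebula's exact formula, so reading "linearly" as "proportional to the number of pixels" is an assumption, and the function name `scaled_time_budget` is hypothetical.

```python
def scaled_time_budget(budget_seconds, available_res, reference_res=(1080, 720)):
    """Scale a render-time budget linearly with pixel count.

    Assumption: "linearly" means proportional to width * height.
    The article does not state the exact formula Nebula used.
    """
    avail_w, avail_h = available_res
    ref_w, ref_h = reference_res
    if min(avail_w, avail_h, ref_w, ref_h) <= 0:
        raise ValueError("resolutions must be positive")
    # Fewer pixels than the reference means a proportionally shorter budget.
    return budget_seconds * (avail_w * avail_h) / (ref_w * ref_h)


# Hypothetical case: a renderer limited to 540 x 360 in the 4-minute scenario.
print(scaled_time_budget(4 * 60, (540, 360)))  # 60.0
```

Under this assumption, a renderer limited to a quarter of the reference pixel count would get a quarter of the time, so every program spends roughly the same time per pixel.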

The maximum ray tracing depth was set to 4 bounces when the program offered such a setting.

With regard to CPU or GPU, the fastest mode for the test hardware was chosen.

Soft shadows and glossy reflections were produced using one area light. When the program being tested did not support spherical lights, an emitter material was used instead.

Materials, lights, and the camera were tweaked for the benchmark so that the different renders would have a similar look. When possible, the light falloff was edited.

No ambient occlusion was employed because the settings varied too much between programs.

All tests were run unbiased and at maximum quality. No light caches were used, and Russian roulette was disabled when possible.
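For readers unfamiliar with the term, Russian roulette is a standard path tracing technique that randomly terminates light paths early and reweights the survivors so the average result is unchanged. The toy sketch below is not taken from any of the tested renderers. The function `path_throughput`, the constant albedo, and the fixed survival probability are all illustrative assumptions.

```python
import random


def path_throughput(albedo, max_bounces, survival_prob=None, rng=random):
    """Toy path weight after up to max_bounces bounces.

    survival_prob=None models Russian roulette being disabled: every path
    runs to the depth limit. Otherwise each bounce survives with the given
    probability and the weight is divided by it to keep the mean unchanged.
    """
    throughput = 1.0
    for _ in range(max_bounces):
        throughput *= albedo  # energy kept at each bounce (illustrative)
        if survival_prob is not None:
            if rng.random() >= survival_prob:
                return 0.0  # path terminated early
            throughput /= survival_prob  # compensate the survivors
    return throughput


# Disabled: deterministic result at the 4-bounce depth limit.
print(path_throughput(0.5, 4))  # 0.0625

# Enabled: individual samples are noisier, but the average stays close.
rng = random.Random(0)
samples = [path_throughput(0.5, 4, survival_prob=0.8, rng=rng) for _ in range(200_000)]
print(sum(samples) / len(samples))
```

The technique trades extra noise per sample for less work per path, and different programs tune it differently, which is one plausible reason to switch it off when comparing renderers over a fixed time budget.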

The ray tracing programs tested were:

- Arnold 3.2.65 (CPU, 3ds Max)

- Corona Renderer 5 (CPU, 3ds Max)

- Cycles (CPU, Blender 2.8)

- Nebula Render 2.1 (CPU)

- Maverick Studio 400.420 (GPU)

- Maxwell Render 5 (GPU, 3ds Max)

- Octane 4 (GPU, 3ds Max)

- Owlet 1.7.1 (CPU)

- ProRender 2.0 (GPU, Blender 2.8)

Notable programs could be missing due to either licensing or outdated plugins.

The hardware platform used for the testing was chosen to be balanced between GPU and CPU. It consisted of an Intel Core i7-6700HQ at 2.60 GHz with 4 cores, 16 GB of RAM, and an Nvidia GTX 960M at 1096 MHz with 640 CUDA cores and 4 GB of RAM. The machine's integrated Intel HD Graphics 530 went unused, since the renderers supporting multi-GPU were CUDA based.

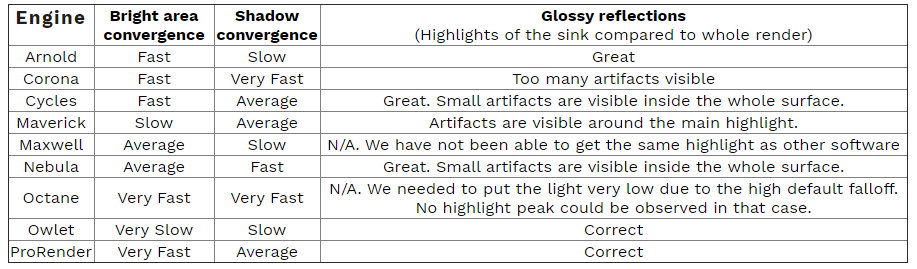

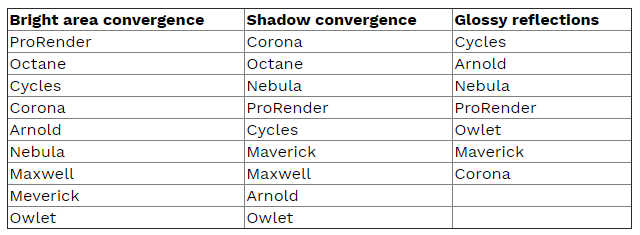

A high-level snapshot of the overall results is shown in the following table, ranked from best at the top to worst at the bottom.

Nebula didn’t make quality/beauty rankings public because that kind of judgment is so subjective, but it won’t come as a surprise that Nebula believes its strength lies in delivering beautiful images. In addition, the company believes it scores points on performance, ease of use, and affordability. The Nebula renderer costs $199.

Conclusion

Not all renderers are created equal. For Nebula, the purpose of these tests is to put itself on the map. The results put the company’s renderer comfortably in the running with more expensive and better-known renderers. Nebula also invites readers to see for themselves, using the links provided below. Developers and other interested parties are invited to send updated results for a particular engine (until January 30) if they think its result can be improved, provided the render still has a common look with the other engines and respects the metrics used. The company also invites readers to send their own results for any of the software tested, as long as their hardware is not customized to favor the CPU or the GPU.

Here are the links for the image and software files:

http://www.nebularender.com/Benchmark.zip

https://www.dropbox.com/s/6hmytvj6fb1kdd0/Benchmark_Software_Files.zip?dl=0