Siemens’ new STAR-CCM+ 2022.1 becomes the latest GPU-accelerated tool.

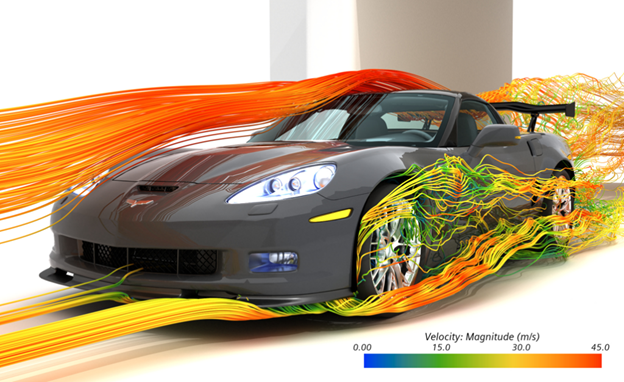

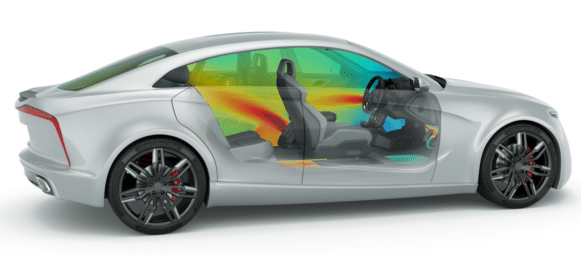

Computational fluid dynamics (CFD) simulation is a powerful tool for design and is used extensively for automotive, aeronautic, and marine design where air and water flows are vital to the performance of cars, planes, and boats. It has much broader applications as well. CFD comes in handy in content creation for simulating fire, smoke, lava flows, and even crowd movements. Being able to put CFD to work is a superpower, but it ain’t easy. It’s a matter of predicting the behavior of millions of particles that are always changing in 4D with complex interdependencies.

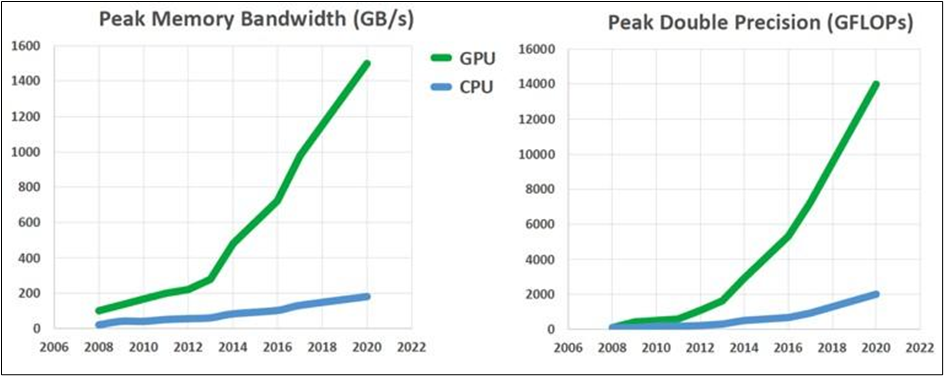

Digital analysis of CFD arrived in the early ’80s, and the work was done by CPUs that chewed on problems for days. Today, advances in semiconductors, memory, and interconnects are letting CFD applications hand work to GPUs where they can make the biggest difference, cutting down the time it takes to get to answers. That work has been catching fire in the last decade.

CUDA was developed by Nvidia as a springboard to help developers take advantage of GPUs; it’s a collection of tools including libraries, SDKs, and profiling and optimization tools. Nvidia has been putting considerable effort into CFD and nurturing young scientists and programmers who are interested in the field. A big part of the problem is tearing into established tools developed on CPUs, opening them up to parallel processing, and giving the appropriate parts to the GPU. Nvidia introduced AmgX in 2014. It is based on one of the core algorithms for CFD: algebraic multigrid (AMG), a strategy for scaling the calculations in CFD to keep them manageable when dealing with a large number of unknowns. Nvidia’s Joe Eaton said in an introductory blog post that AMG is designed for highly refined and unstructured numerical meshes used for arbitrary geometries. AMG keeps solution times in check because its cost scales linearly with the number of unknowns in the model, and it can run in parallel, making it a natural fit for GPUs.
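To make the multigrid idea concrete, here is a minimal sketch in plain Python. It is not AmgX and does not use its API. It is also a geometric multigrid V-cycle on a simple 1D Poisson problem rather than an algebraic one: AMG builds its coarse levels from the matrix entries instead of from a regular grid, but the principle of smoothing on the fine level and correcting from a coarser, cheaper level is the same. The function names, grid size, and smoother settings are all illustrative assumptions.

```python
import math

def residual(u, f, h):
    """r = f - A u, where A = tridiag(-1, 2, -1) / h^2 (1D Poisson, zero boundaries)."""
    n = len(u)
    r = [0.0] * n
    for i in range(n):
        left = u[i - 1] if i > 0 else 0.0
        right = u[i + 1] if i < n - 1 else 0.0
        r[i] = f[i] - (2.0 * u[i] - left - right) / (h * h)
    return r

def smooth(u, f, h, sweeps=2, omega=2.0 / 3.0):
    """Weighted Jacobi sweeps: cheap, and good at damping oscillatory error."""
    for _ in range(sweeps):
        r = residual(u, f, h)
        u = [u[i] + omega * (h * h / 2.0) * r[i] for i in range(len(u))]
    return u

def restrict(r):
    """Full weighting: fine grid with 2m+1 points -> coarse grid with m points."""
    m = (len(r) - 1) // 2
    return [0.25 * r[2 * j] + 0.5 * r[2 * j + 1] + 0.25 * r[2 * j + 2]
            for j in range(m)]

def prolong(e, n_fine):
    """Linear interpolation: coarse-grid correction -> fine grid."""
    out = [0.0] * n_fine
    for j, v in enumerate(e):
        out[2 * j + 1] += v
        out[2 * j] += 0.5 * v
        out[2 * j + 2] += 0.5 * v
    return out

def v_cycle(u, f, h):
    """One V-cycle; len(u) must be 2**k - 1 so the grids nest cleanly."""
    n = len(u)
    if n == 1:
        return [f[0] * h * h / 2.0]           # coarsest level: solve exactly
    u = smooth(u, f, h)                        # pre-smoothing
    coarse_rhs = restrict(residual(u, f, h))   # move the residual down a level
    e = v_cycle([0.0] * len(coarse_rhs), coarse_rhs, 2.0 * h)
    u = [a + b for a, b in zip(u, prolong(e, n))]  # coarse-grid correction
    return smooth(u, f, h)                     # post-smoothing

def demo(n=63, cycles=10):
    """Solve -u'' = pi^2 sin(pi x) on (0, 1); the exact solution is sin(pi x)."""
    h = 1.0 / (n + 1)
    xs = [(i + 1) * h for i in range(n)]
    f = [math.pi ** 2 * math.sin(math.pi * x) for x in xs]
    u = [0.0] * n
    r0 = max(abs(v) for v in residual(u, f, h))
    for _ in range(cycles):
        u = v_cycle(u, f, h)
    r = max(abs(v) for v in residual(u, f, h))
    err = max(abs(u[i] - math.sin(math.pi * xs[i])) for i in range(n))
    return r0, r, err
```

Each level in this sketch does work proportional to its number of points, and each coarser level has about half as many, so a full cycle costs a small constant multiple of the fine-grid work. That is the intuition behind the linear scaling mentioned above.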

The AmgX library for CUDA was developed in conjunction with Ansys for its Fluent CFD technology. Developed in the early ’80s, Fluent is one of the first CFD tools. As an Ansys product, it is now widely used in the automotive industry. The incorporation of AmgX into Fluent’s collection of solvers means that it’s available as the default solver when Fluent detects the presence of a CUDA-enabled GPU. AmgX is also capable of using MPI (Message Passing Interface), a standard API that enables applications to take advantage of multi-node computer clusters, because CFD is just that kind of processor-hungry application. Nvidia and Ansys announced CUDA-enabled Fluent in 2014. Most recently, Ansys has introduced Ansys 2022 R1, a collection of analysis tools and technologies optimized to take advantage of modern semiconductor capabilities. The Fluent 2022 R1 tools include a new workspace dedicated to external aeronautic simulations, with built-in best-practices guidance, optimized solver settings, and parametric capabilities.

Siemens is also a repository of CFD wisdom and high-power tools. Its STAR-CCM+ is descended from CD-adapco tools, which, like Fluent, were developed in the 1980s. In 2004, CD-adapco’s engineers fundamentally updated the STAR-CD tools for CFD, and the product was renamed STAR-CCM+. CCM stands for computational continuum mechanics. The product solves for fluid flow and heat transfer. Since then, CD-adapco has been optimizing its code for HPC and for ever-larger processor counts. For instance, in 2015, the National Center for Supercomputing Applications (NCSA) announced a successful collaboration with CD-adapco to scale STAR-CCM+ to 102,000 cores on the Blue Waters supercomputer. Work like that has provided a foundation for moving to GPUs, whether they are located in the cloud, in an HPC supercomputer, in a workstation, or all of the above.

Siemens acquired CD-adapco in 2016, and its tools became part of Simcenter, which is included in the Xcelerator portfolio. Siemens and Nvidia then collaborated on STAR-CCM+ 2022.1, the most recent version of Siemens’ software, which relies more heavily on GPU acceleration. AmgX also came into play for that work.

Memory is key

Stamatina Petropoulou, Siemens’ physics manager, technical product management, has written a blog post celebrating the work Siemens and Nvidia have done on the new STAR-CCM+. From her point of view, the race between CPUs and GPUs has just begun in advanced computing.

GPUs are less expensive than CPUs per processing unit, and because much of the work of simulation is running calculations on changing meshes of points, the more processors on the problem the better. Siemens says the use of GPUs enables customers to reduce their hardware investments by up to 40% and reduce power consumption by 10% when compared to the CPU-based version of the program.

Also critical is the movement of data to and from memory. It’s a numbers game, but it’s not a zero-sum game. More processors can chew through more CFD calculations, but only if data reaches them fast enough. Petropoulou says the addition of high-bandwidth memory to Nvidia’s new boards has enabled the STAR-CCM+ update to take advantage of GPUs. Graphics boards with lots of high-bandwidth memory keep the GPUs at work. Petropoulou recommends HBM2 over GDDR6 (Graphics Double Data Rate 6).
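The memory argument can be put into back-of-the-envelope arithmetic. The sketch below is a simple roofline-style estimate, not a benchmark and not anything from Siemens or Nvidia: it assumes a kernel takes as long as the slower of its compute time and its memory-transfer time. The workload (a sparse matrix-vector product at roughly 12 bytes and 2 floating-point operations per nonzero) and the device figures in the usage note are hypothetical round numbers chosen for illustration, not the specifications of any real board.

```python
def kernel_time_s(flops, bytes_moved, peak_flops_per_s, bandwidth_bytes_per_s):
    """Roofline-style estimate: the slower of compute and memory traffic wins."""
    compute_time = flops / peak_flops_per_s
    memory_time = bytes_moved / bandwidth_bytes_per_s
    return max(compute_time, memory_time)

def spmv_cost(nonzeros):
    """Rough cost of a sparse matrix-vector product (illustrative assumption).

    Per nonzero: one multiply and one add (2 flops), plus an 8-byte value
    and a 4-byte column index read from memory (12 bytes). Vector traffic
    is ignored to keep the arithmetic simple.
    """
    return 2 * nonzeros, 12 * nonzeros

def is_memory_bound(flops, bytes_moved, peak_flops_per_s, bandwidth_bytes_per_s):
    """True when moving the data takes longer than doing the arithmetic."""
    return (bytes_moved / bandwidth_bytes_per_s) > (flops / peak_flops_per_s)
```

With one billion nonzeros on a hypothetical 10 TFLOP/s device, `kernel_time_s(*spmv_cost(10**9), 10e12, 500e9)` is bound by memory traffic, and doubling the bandwidth argument to `1000e9` halves the estimate, while doubling the compute peak changes nothing. That is the sense in which faster memory, not more processors, keeps the GPU at work on this kind of calculation.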

Siemens contends this is just the first step. “We plan to extend GPU-enabled acceleration across all relevant core solvers, bringing GPU-enabled acceleration to CFD simulation engineers across all industries and applications,” Petropoulou says.

As stated earlier, Nvidia is seeking out all the opportunities it can find to optimize code for GPUs, and CFD represents a big opportunity. The company has been using AmgX to work with the open-source CFD tools in OpenFOAM, and that work has been picked up by organizations using OpenFOAM for CFD, including Argonne National Lab, Boeing, and General Motors.

The company also is working with Altair to accelerate its software for solving and rendering with enhanced support for Nvidia GPUs. The company has announced new optimizations for Altair AcuSolve and its Thea Render to get compelling visualizations faster.

Altair says that, in addition to GPU support, its engineers have worked with Nvidia on RTX Server validation of Altair ultraFluidX, aerodynamics CFD software, as well as Altair nanoFluidX, a particle-based CFD software. Nvidia’s reference design, RTX Server, allows engineers to use high-performance computing for simulating physics and iterating designs, with GPU acceleration for both rendering and computer-aided engineering simulation.

Nvidia’s annual developer conference, GTC, is coming up later in March, and CFD will be on the program.

What do we think?

By now, you get the idea but maybe not the whole picture. AMD has also been working on CFD, but the company has a more universalist point of view because it has big multi-core CPUs as well as GPUs. AMD CTO Mark Papermaster has stressed the importance of building balanced high-performance systems that take advantage of Epyc CPUs with high-bandwidth access to memory and GPUs. And Intel’s determined effort to field a powerful GPU is also aimed at HPC applications, including CFD. Both giants see opportunities for a company with multiple semiconductor resources: CPU, GPU, and FPGA.

In addition to Nvidia’s upcoming GTC, keep an eye on AMD and Intel over the coming year as HPC applications come down to the workstation and to GPUs, and as HPC applications get jet-propelled by the cloud. Big problems are getting faster solutions.