It is time to rethink how we view the future of graphics-based technologies. We are moving from the era of “what can we do with what we draw?” to the era of “what can we do with what we see or imagine?”

By Randall S. Newton

“This is the only wish I ever wished for myself. Peter, this is the biggest feeling I’ve ever felt. This is the biggest feeling I’ve ever had and this is the first time I’ve been big enough to have it.” — Tinkerbell, as played by Julia Roberts in Hook.

The New Year has begun. The secret technology pundit rulebook says I’m supposed to write about what happened in the year just ended and speculate about what new stuff we’ll see. Having long followed the life motto “it is better to seek forgiveness than permission,” I’m going to deviate from the plan. Instead of speculating, I’m going to smash my old technology paradigm and let you do the speculating instead.

For years I have been guided by the desktop metaphor. I looked at what was being done in graphics software as the automation of desktop (or drawing board) processes, and tried to see what had not yet been automated. Progress has been incremental, mere tweaks around the edges of the big picture. CAD geometry became CAD objects with assigned attribute data, which is sliced and diced in ever more complex and useful ways (think PLM and BIM). Images became 3D models, and animation moved from 2D to 3D. Stereoscopic displays are on the market and eventually we will have holographic displays, but such advances are still echoes of the original idea of moving art from the drawing board or film to the computer.

I now realize I need to completely throw out the desktop metaphor. I need a new metaphor big enough to hold the universe. The question is no longer, “what desk-based tasks can be automated” but instead “what aspects of life, the universe, and everything can be made digital, and what can we do once we make the conversion?” I know this is not a new idea; the concept of “from atoms to bits” has been around for a long time. But I believe I have been thinking too small, and I’m pretty sure I am not the only one.

I started to write “physical universe” in the preceding paragraph, but removed “physical” as too limiting (and yes, the line is a reference to The Hitchhiker’s Guide to the Galaxy). Thinking “from atoms to bits” is not enough. Every creative thought, every wild idea, every bit of movement, every rock and leaf and manufactured object … all are now fair game for the next stage of the revolution.

The new graphics paradigm is Mashup 2.0

In graphics software specifically, we are moving from a paradigm that asks “What can we do with what we draw?” to a paradigm of “What can we do with what we see or imagine?” Bits and pieces of the new paradigm have been available for a while, and more are coming into play. Prices are dropping and accessibility is rising.

There are some fundamental building blocks in place; we’ve been covering them regularly in GraphicSpeak:

- 3D printing

- Simulation

- 3D terrestrial scanning

- General-purpose graphics processing units (GP-GPUs)

- The migration of graphics software to mobile

- Kinect and related gesture input

- Cloud computing

… to name a few. But the creation of these building blocks is not the end goal; the real innovation is what we can accomplish with them. Consider it Mashup 2.0. Mashup 1.0 created new web pages by combining data, images, maps, and so on. Mashup 2.0 brings new inventions that come from the novel juxtaposition of two or more technologies rooted in graphics.

Consider this brief list of Mashup 2.0 innovations from 2011 as a guide to what might be coming in 2012:

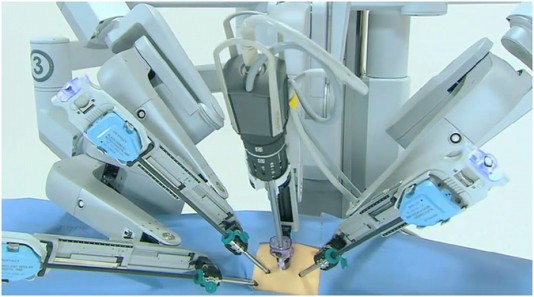

Robot surgery on a beating heart: A French team used a 3D model of a beating heart to train a robot to operate directly on the heart without stopping it first. The risk of stroke goes way up among those who have undergone conventional open-heart surgery; robotic surgery on a beating heart will save lives both immediately and in the long run. The Mashup: robotics + 3D imagery + real-time bio simulation + GP-GPU processing.

A plane you can drive on the road: Terrafugia will deliver its first roadable aircraft in 2012, an airplane you can drive home from the airport. Terrafugia did almost all its product testing in a virtual environment. The Mashup: automotive and aeronautical engineering + virtual product simulation + virtual composite modeling.

Light field photography: The new Lytro camera uses a CMOS sensor to capture the paths of the light rays entering the camera. Lytro calls this “megaray” data capture, as opposed to megapixel capture, and it allows “shoot first, focus second” photography. Refinements to the software in 2012 will allow 3D capture from a single image. The Mashup: image capture + light field analysis + HTML5.

Game engines for CAD and GIS: Several projects are in the works, including CityEngine, Abyssal Engine, Screampoint, and the Studio Clouds work from the Alice Labs team recently acquired by Autodesk. All of them seek to combine the rapid visualization of game engines with the dense visual data created by CAD and GIS applications and 3D acquisition technologies. The Mashup: render engine + design data + geophysical data.

Perhaps predicting what will come in 2012 is no more difficult than brainstorming in Scrabble. What do you predict will come from these potential mashups in 2012?

- 3D printing and simulation

- CAD and digital content creation

- Simulation and Lytro light field photography

- Augmented reality and game engines

- Motion capture and computational fluid dynamics

We invite your comments below.