Hyperion, Universal Scene Description, and more are moving content creation in an open direction.

By Kathleen Maher

We’re still crawling from the wreckage after Siggraph, and we’re finding interesting stuff in the rubble. A lot of brilliant realizations have come after repeated bludgeoning from digital content creation (DCC) companies and semiconductor vendors. One thing is for sure: GPUs are becoming central to modern digital content creation. Yes, GPUs have been critically important for a while, and yes, I know we’ve been seeing the use of GPUs crawl deeper and deeper into pipelines, but with the information coming out of GDC, FMX, and Siggraph, we’re starting to understand that real time is now.

Last year, Pixar declared its intention to make its Universal Scene Description (USD) technology open source in order to facilitate better exchange of data and enable collaboration (see “Pixar releases Universal Scene Description language to open source”). USD is designed for any digital content creation application, including filmmaking, animation, and game development. Standing on the shoulders of Alembic, which enabled data exchange for assets, USD enables the interchange of assets and animations, but it also enables the assembly of multiple (often very many) assets into virtual sets, scenes, and shots, which can then be exchanged between applications and edited non-destructively within a single scene graph. It is thread friendly, which helps it take advantage of hardware acceleration. It organizes work into layers, analogous to files, so groups and individuals can work on the same content through overrides without stepping on each other.
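To make the layer idea concrete, here is a toy model in plain Python. It is not the USD API; the layer names, prim paths, and the `compose` helper are all invented for illustration. It only shows the principle that each contributor writes overrides in a separate layer and the strongest opinion wins, while the weaker layers stay untouched.

```python
from collections import ChainMap

# Toy model only: this is NOT the USD API. Each "layer" is a dict of
# opinions keyed by (prim path, attribute name). All names are hypothetical.
layout_layer = {
    ("/Set/Chair", "translate"): (0.0, 0.0, 0.0),
    ("/Set/Chair", "color"): "brown",
}
animation_layer = {
    ("/Set/Chair", "translate"): (2.0, 0.0, 1.0),  # animator moves the chair
}
lighting_layer = {
    ("/Set/Lamp", "intensity"): 800.0,  # lighter adds a lamp
}


def compose(*layers_strongest_first):
    """Resolve opinions: the first (strongest) layer holding a value wins."""
    return dict(ChainMap(*layers_strongest_first))


# The composed view merges everyone's work without editing any layer.
stage = compose(lighting_layer, animation_layer, layout_layer)
```

In this sketch the animator's translate value overrides the layout value, the chair keeps its layout color, and the layout layer itself is never modified, which is the sense in which the edit is non-destructive.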

Rendering in real time

Another interesting aspect of USD is the development and use of the Hydra renderer architecture for real-time feedback. Hydra is designed to be an interim renderer. It supports Pixar’s OpenSubdiv, which is also included in the USD distribution. As its name implies, Hydra is multi-headed. Pixar has developed a deferred-draw OpenGL back end that supports pre-packaged and programmable GLSL shaders, but Hydra can support multiple back ends and front ends. Pixar notes that they have used an OptiX-based back end for path tracing.

For USD, Hydra’s “head” enables geometry rendering of scenes to enable fast preview and animation streaming so artists can get a fast read on the progress of their work. It supports third-party plug-ins that are included in the USD distribution. One of the benefits of ray tracing is that it can very quickly produce a descriptive, if noisy, version of a rendered scene. If necessary, it can be stopped, updated, and then restarted, and everyone can see exactly what has changed without waiting for the complete render.
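The stop-and-restart behavior follows from how sampling-based renderers accumulate their result. A minimal sketch, assuming a single hypothetical pixel whose true brightness is 0.5 and a made-up `noisy_sample` function, shows that a usable (if noisy) estimate exists after every pass:

```python
import random


def progressive_estimate(sample_fn, passes, seed=0):
    """Yield the running mean after each pass so a viewer can show it at once."""
    rng = random.Random(seed)
    total = 0.0
    for n in range(1, passes + 1):
        total += sample_fn(rng)
        yield total / n


def noisy_sample(rng):
    """Hypothetical pixel sample: uniform in [0, 1), so the mean is 0.5."""
    return rng.random()


# Every element is a displayable estimate; later ones are less noisy.
estimates = list(progressive_estimate(noisy_sample, passes=4096))
```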

In other words, Pixar is assembling its technology to reach the Holy Grail of real-time work and feedback. Pixar sent OpenSubdiv out into the wild several years ago in order to encourage the groups building content creation products like Maya, Max, Cinema4D, Blender, and Houdini to add support for OpenSubdiv. Pixar says they’re on their fifth generation of OpenSubdiv and will release all subsequent updates to the open source community at the same time the updates are released to Pixar’s teams.

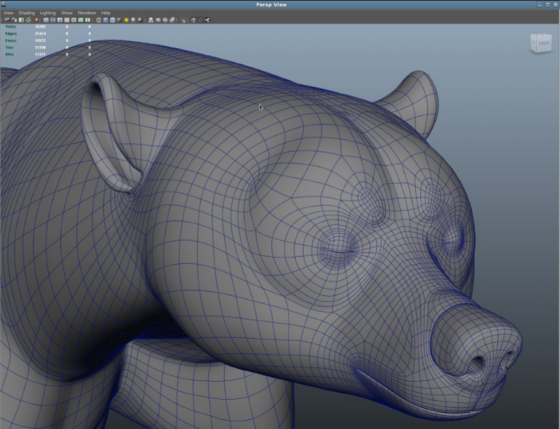

OpenSubdiv enables polygons to be subdivided for smooth models that don’t break when animated. When Pixar introduced OpenSubdiv to the public several years ago, they said animation tools like Maya and Pixar’s Presto took 100 ms to subdivide a character of 30,000 polygons to the second level of subdivision—500,000 polygons. Pixar said their goal was to get the process down to 3 ms so users could interact with smooth, accurate limit surface content at all times. The technology was developed in collaboration with Microsoft.
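The numbers hang together if we assume an all-quad mesh, where each level of Catmull-Clark subdivision splits every face into four. The helper below is our own back-of-the-envelope arithmetic, not anything from OpenSubdiv itself:

```python
def faces_after_subdivision(base_quads, levels):
    """Each level of Catmull-Clark subdivision splits every quad into four."""
    return base_quads * 4 ** levels


base = 30_000
level2 = faces_after_subdivision(base, 2)  # 30,000 * 16 = 480,000

# Going from 100 ms to the 3 ms goal is roughly a 33x speedup.
speedup_needed = 100 / 3
```

That gives 480,000 faces at the second level, which rounds to the 500,000 figure Pixar quoted.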

OpenSubdiv enables fast subdivision accelerated by GPUs. The process is invisible to the modeling and animation applications, which are primarily CPU driven. OpenSubdiv has enabled the DCC companies to update their technology and support GPU acceleration without tearing apart their engines. Hydra with its support for OpenSubdiv enables fast visualization. Open USD is bringing it all together in a framework that can be reused, exchanged, and adapted.

Working the GPU

Pixar parent Disney has also developed an in-house production renderer based on ray tracing called Hyperion. The limitation of ray tracing has been the need to control the number of bounces to balance the desire for realism against the barrier of time. Hyperion has been developed to handle large (no, huge) models and to handle several million light rays at a time. It sorts and bundles the light rays according to their directions. The rays can then be sorted again to gather those that will hit the same object in the same region of space. The renderer waits until the sorting and grouping process is complete before it does the shading work. As a result, the computer can optimize the calculations for the rays hitting objects in the scene without caching and therefore more efficiently use the available memory.
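The sort-then-shade idea can be sketched in a few lines. This is an illustration of the general technique only, not Disney's implementation: the six direction bins, the dictionary ray records, and the hit list are all assumptions made for the example.

```python
from collections import defaultdict


def dominant_direction(d):
    """Return one of six bins such as '+x' or '-z' for direction vector d."""
    axis = max(range(3), key=lambda i: abs(d[i]))
    sign = "+" if d[axis] >= 0 else "-"
    return sign + "xyz"[axis]


def bundle_by_direction(rays):
    """Group rays that travel in roughly the same direction."""
    bundles = defaultdict(list)
    for ray in rays:
        bundles[dominant_direction(ray["dir"])].append(ray)
    return bundles


def group_hits_by_object(hits):
    """Sort hit records so all hits on one object are shaded together."""
    grouped = defaultdict(list)
    for hit in sorted(hits, key=lambda h: h["object"]):
        grouped[hit["object"]].append(hit)
    return grouped


rays = [
    {"id": 0, "dir": (0.9, 0.1, 0.0)},
    {"id": 1, "dir": (-0.2, 0.0, -0.95)},
    {"id": 2, "dir": (0.8, -0.3, 0.1)},
]
bundles = bundle_by_direction(rays)

# Hypothetical hit records, as a tracing step might return them.
hits = [
    {"ray": 0, "object": "teapot"},
    {"ray": 1, "object": "floor"},
    {"ray": 2, "object": "teapot"},
]
shading_batches = group_hits_by_object(hits)
```

Shading one batch at a time means the data for an object only needs to be touched once per batch rather than once per ray, which is where the memory efficiency comes from.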

Wait, what about RenderMan?

RenderMan is still a part of Disney’s arsenal, and it too has been updated to take advantage of ray-tracing technology. In fact, as Jon Peddie has been insisting, ray tracing is the future. In 2014, Pixar announced a new development strategy for its renderers and added RIS (Rix Integration Subsystem), a rendering mode for RenderMan that takes advantage of ray tracing. Pixar says RIS is based on integrators, which “take the camera ray from the projection and return shaded results to the renderer.” RenderMan supports plug-in integrators, and Pixar encourages the development of integrators to complement its own main integrators: PxrPathTracer and PxrVCM. PxrPathTracer is a unidirectional path tracer. It uses information gathered where the light rays hit materials and combines it with light samples to create direct lighting and shading. It spawns additional rays for indirect lighting. PxrVCM adds bidirectional path tracing so that, in addition to the paths from the camera, it also traces paths from the light sources. The additional information provides compensating calculations for complicated paths. Pixar says it is primarily designed to handle specular caustics.

RIS does not yet take advantage of GPU acceleration, but Pixar’s head of business development, Chris Ford, says it is something the RenderMan group is working on. Pixar says the RIS approach is already faster than Reyes. The latest release, RenderMan 21, has GPU-accelerated denoising. It’s reasonable to expect more work to be done on this front. (See the story below re: Nvidia’s rendering technology and Pixar.)

In 2014, Pixar and Disney further integrated their development to enable more sharing among the development teams. There are regular sessions when the groups come together to talk about what they’ve been working on and to explore work that might be used to further the efforts of different groups. Disney as a whole wanted to fully take advantage of and distribute the technology that came with the Lucasfilm acquisition and ILM. At the time, Ed Catmull, President of Pixar and Walt Disney Animation Studios, said that he also didn’t want to lose the competitive spirit among different teams.

This has led to increased modularization, enabling technologies to be better shared. In general, the studios are showing an interest in open technologies to invite and share innovation. What we’re seeing now is the fruit of that integration as the various technologies come together and Disney invites the content creation world to take advantage of that work and build on it.