Machine learning, deep learning, artificial intelligence: call it what you will, it is the handle for big data, and Intel intends to get a firm grip.

Intel has signed a definitive agreement to acquire Nervana Systems. Nervana, founded in 2014 and headquartered in San Diego, California, has developed software and hardware for deep learning. Intel says the acquisition will give it the IP and expertise in accelerated deep learning to advance Intel’s capabilities. Diane Bryant, Intel EVP and general manager of the Data Center Group, says “we will apply Nervana’s software expertise to further optimize the Intel Math Kernel Library and its integration into industry standard frameworks.” In addition, she says Intel will use Nervana’s Engine and silicon expertise to improve the deep learning capabilities of Intel’s Xeon and Xeon Phi processors.

AI holds huge promise for innovation on just about every front of human endeavor. And corporations, governments, and universities are waking up to that discovery. Aided by Moore’s Law and semiconductor advances, neural nets are better and faster today. The searching algorithms are more efficient and accurate, and the cost of implementation is dropping.

The GPU, or more specifically GPU compute, has driven much of the current development in AI, but because some algorithms don’t map perfectly onto a general-purpose SIMD structure like a GPU, a few brave souls have built specialized processors using FPGAs and dedicated ASICs. Normally there is a simple cost-performance relationship: custom chips are faster and more expensive, while general-purpose programmable devices are not as fast but a lot less expensive.

But there are always adventurers, and so despite the economy of scale of GPUs, a group of brave souls with equally brave funding forged ahead and developed an AI chip they called the Nervana Engine. The Nervana Engine has separate pipelines for computation and data management, so new data is always available for computation. That pipeline isolation, combined with plenty of local memory, led the developers to suggest that the Nervana Engine could run near its theoretical maximum throughput much of the time. We’ll probably never know.
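The idea of keeping data movement and computation in separate pipelines can be illustrated in software. What follows is only a loose analogy, not a description of the Nervana Engine's actual design: a loader thread stages batches into a small buffer while the main thread computes on whatever is already staged. All names here (`load_batches`, `run_pipeline`, `buffer_depth`) are hypothetical and chosen for illustration.

```python
import queue
import threading


def load_batches(num_batches, batch_size, staged):
    """Data-management stage (hypothetical): stage batches into a buffer."""
    for i in range(num_batches):
        batch = [i * batch_size + j for j in range(batch_size)]
        staged.put(batch)  # blocks only when the staging buffer is full
    staged.put(None)       # sentinel: no more data


def run_pipeline(num_batches=4, batch_size=3, buffer_depth=2):
    """Compute stage: consume staged batches while the loader keeps filling."""
    staged = queue.Queue(maxsize=buffer_depth)
    loader = threading.Thread(
        target=load_batches, args=(num_batches, batch_size, staged)
    )
    loader.start()

    results = []
    while True:
        batch = staged.get()
        if batch is None:
            break
        # Stand-in for real work: sum of squares of the batch.
        results.append(sum(x * x for x in batch))

    loader.join()
    return results


if __name__ == "__main__":
    print(run_pipeline())
```

As long as the loader stays ahead of the consumer, the compute loop never waits for data, which is the property the Nervana developers were claiming for their hardware.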

To exploit the engine, as well as many other processor types, Nervana developed a deep learning framework it calls Neon. Neon is an open-source, Python-based language and set of libraries for developing deep learning models, and the company said it would run on processors from AMD, Intel, Nvidia, and Qualcomm, a broad range of platforms.

Nervana says Neon is more than twice as fast as other deep learning frameworks such as Caffe and Theano, thanks to assembler-level optimization, multi-GPU support, optimized data loading, and use of the Winograd algorithm for computing convolutions.
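For readers curious why the Winograd algorithm helps, here is a minimal sketch of its smallest one-dimensional case, usually written F(2,3): two outputs of a 3-tap filter computed with four multiplications instead of six. This is a textbook illustration in plain Python, not Neon's implementation, and the function names are made up for this example.

```python
def direct_f23(d, g):
    """Two outputs of a 3-tap filter, computed directly (6 multiplications)."""
    y0 = d[0] * g[0] + d[1] * g[1] + d[2] * g[2]
    y1 = d[1] * g[0] + d[2] * g[1] + d[3] * g[2]
    return y0, y1


def winograd_f23(d, g):
    """Same two outputs using Winograd's F(2,3): 4 data-by-filter multiplications.

    The filter-side terms (g0 + g1 + g2) / 2 and (g0 - g1 + g2) / 2 depend only
    on the filter, so in practice they are computed once and reused.
    """
    g_sum = (g[0] + g[1] + g[2]) / 2
    g_alt = (g[0] - g[1] + g[2]) / 2

    m1 = (d[0] - d[2]) * g[0]
    m2 = (d[1] + d[2]) * g_sum
    m3 = (d[2] - d[1]) * g_alt
    m4 = (d[1] - d[3]) * g[2]

    return m1 + m2 + m3, m2 - m3 - m4


if __name__ == "__main__":
    data = [1.0, 2.0, 3.0, 4.0]
    taps = [1.0, 0.0, -1.0]
    print(direct_f23(data, taps))
    print(winograd_f23(data, taps))
```

The trade is fewer multiplications for more additions. The saving grows when the same trick is nested for the small two-dimensional filters common in convolutional networks.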

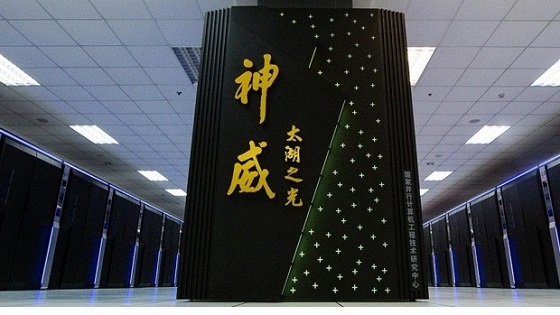

Intel has never liked the inroads GPUs have made in HPC and supercomputers, and it particularly doesn’t like those GPUs having an Nvidia brand. Intel tried thwarting GPUs in supercomputers with its Xeon Phi boards, and had some success, especially in the giant showcase system in China, the Tianhe-2. That moment of glory didn’t last long, however, because China then built the Sunway TaihuLight using about 41,000 custom Chinese-designed processors with 260 cores each. Custom chips in supercomputers are immune to the economics of cost-effectiveness and economy of scale and are paid for with national pride, if only for a year or two.

Aside from supercomputers, however, AI using GPUs was getting up Intel’s snout, and something had to be done to reposition Intel in its god-given role as industry leader and superstar. Since the company had learned that you can’t be a silicon alchemist and turn a CISC into a GPU, it had to find an alternative to the GPU to establish itself as the leader in AI machines. Bring on Nervana. And so last week, to the delight of the VCs who backed the company, Intel bought Nervana. Although Intel has not revealed how much it is paying for the company, the rumor mill says it is around $408 million, which is not “significant” at Intel’s scale, so Intel doesn’t have to declare the amount to the SEC. Of course, with so many people involved, and big paychecks coming to the shareholders of the 48-person Nervana, the secret will leak out. Just visit the expensive restaurants in San Diego (Nervana’s headquarters) or Silicon Valley.

Intel sees AI as driving the data center like never before. Intel vice president Jason Waxman told Fortune magazine, “We’re on the precipice of the next big wave, artificial intelligence,” and he told the Recode website that the shift to artificial intelligence could dwarf the move to cloud computing. Machine learning, he said, is needed as we move from a world in which people control a couple of devices that connect to the Internet to one in which billions of devices are connecting and talking to one another. “There is far more data being given off by these machines than people can possibly sift through,” Waxman added.

Intel says it will build the Nervana chips, which puts those chips at the head of the class in terms of fabrication, and will initially offer them to cloud data centers, where big companies are making extensive use of machine learning. Diane Bryant, EVP and GM of the Data Center Group at Intel, will be leading that charge. Eventually Intel hopes to take the technology into smart devices such as self-driving cars and wearables to fuel the growth of the Internet of Things. If you’re going to IDF, be prepared to hear a lot about this.

What do we think?

No doubt about it, AI is the next big thing, following VR and IoT (wow, so many big things). One big thing that isn’t going to let Intel or any 48-person startup in San Diego edge it out is IBM. As of now IBM owns the AI biz, and is deploying Watson into all kinds of applications and even making it available for free to promising startups. Other startups like DeepMind, Osaro, and Skymind are also going to give Intel-Nervana a run for the money. And if you think AMD and Nvidia are going to throw in the towel and walk away from an industry they helped invent, think again.

And then there’s Intel’s history. OK, pop quiz: name one (just one) non-x86 chip, of all the processors Intel has bought, that Intel makes today. Take your time, I’ll wait . . .

Right: none. Given Intel’s perfect record of religiously killing any internal competitor to its beloved x86, what do you think the half-life of Nervana’s Engine is? Two years? Three max?

But remember, Nervana’s Neon software runs on x86s, and that’s where we expect to see Intel put the push. It’s also highly likely the multi-pipeline architecture of Nervana’s engines will find its way into the next-generation (the “Tock”) architecture, and with the economy of scale that would bring, combined with what will probably be 10- or 7-nm manufacturing, a stand-alone chip really won’t be cost-effective to build, program, or install.

So the shareholders of Intel, who haven’t been the most delighted folks of late, need to decide if the current management has their best interests at heart. Management is taking $400 million out of dividends and making a gang of folks in San Diego rich in order to get an architectural design and software framework that may increase sales of the margin-rich x86s Intel sells to data centers. We think they will nod approvingly. This looks like a hecka smart move to us. Let’s just hope Intel doesn’t screw it up.

Epilog

Nvidia has posted a well-done story, “What’s the Difference Between Artificial Intelligence, Machine Learning, and Deep Learning?”, at https://blogs.nvidia.com/blog/2016/07/29/whats-difference-artificial-intelligence-machine-learning-deep-learning-ai/ and it is recommended reading.