As the need for content continues, it is more important than ever to share time-saving techniques to advance the industry.

“You break the rules and you become the hero. I do it and I become the enemy. That doesn’t seem fair.” —Wanda Maximoff, Doctor Strange in the Multiverse of Madness, Disney, 2022

Growing up, our dad used to tell us, “You can’t put 10 gallons of stuff in a 5-gallon bucket, so sometimes you just have to leave some stuff behind.” That’s been impossible for the studio and network content folks over the past three years because every ounce of stuff was needed for pre-production, production, and post-production work to meet the growing, insatiable demand for new, mind-blowing content for theatrical and streaming audiences.

Earlier this month, IBC was a perfect time for some to take a well-earned breather, and attendees had a chance to learn what worked (and what didn’t) to keep content flowing economically. Michael Crimp, IBC’s CEO, and his team did a great job of updating folks on how global teams met their time/financial budgets in almost every area. But for us, the most important areas to catch up on, and the best places to see what tomorrow looks like, were virtual/remote production and post: the throughput engines.

“More advances have been made in the past two years than in the previous decade,” Crimp said. “Now it’s time to spread the word across the entire industry.”

During the conference, Allan McLennan, CEP of media, head of M&E Americas for Atos, told us that no one is thinking about slowing down the process or downsizing to meet the demand. “The focus for the past two years has been mostly survival. In the foreseeable future, it will require working around all the identified challenges that have been presented,” he noted. “Costs have doubled/tripled, delivery schedules have gone from a few weeks to months, and executive producers are weighing the tangible/intangible ROI on remote locations, higher-capacity production, and cloud centralized vs. remote specialized post-production work.”

McLennan added that in some ways, the pandemic helped drive nascent technologies and processes “to be refined and robustized” in almost real time. “Develop, test, fail, adjust, enhance, repeat. Virtual production and remote post are not exotic work-arounds. Today, they’ve become first choices,” he said.

The build-out of studios in key locations around the globe (Georgia, New Mexico, Toronto, London, Vancouver, Berlin, Ireland, and other major centers) and the rapid advancement and deployment of LED walls, such as ILM’s cutting-edge StageCraft LED technology, along with motion-capture and game-engine pipelines, can be partially attributed to the pandemic. So can the willingness of production crews to test/refine new creative techniques while still meeting deadlines.

The Mandalorian was obviously the proving ground for virtual production, and the availability of tax-incentivized remote production shifted the financial and creative needle toward a totally new world of content development opportunities.

The idea of flying the entire crew to exotic locations won’t disappear, but the ability to create any location—especially those you can’t touch or see—without a lot of expensive set construction not only saves time and money, but it also looks great. And it works for a large range of project types and sizes.

With the increased use of virtual environments, production facilities have not only reduced the need for crews to travel around the world and for one-off disposable sets to be built, but they have also reduced project carbon footprints and location budgets (time/money). The growing use of virtual digital environments for projects large and small actually helps save our real environment.

It’s difficult to fathom anything as gorgeous or as immersive as the breathtaking, spellbinding images that NASA’s Webb telescope has been sending back from deep space. However, studio facilities with large LED walls and VR/AR software such as Epic Games’ Unreal Engine, Unity, and Brainstorm have enabled creatives to rapidly and economically produce environments that match or exceed physical settings or locations, without being concerned about time of day or weather.

The upside/downside of LED volume production is that the “it will be fixed in post” mindset is significantly reduced: much of the VFX work is done in real time on set and in camera, so projects can be completed more rapidly. It’s little wonder that production studios with LED walls are booked for years and that, despite increased construction costs, new facilities are being built as rapidly as possible in key tax-incentivized locations.

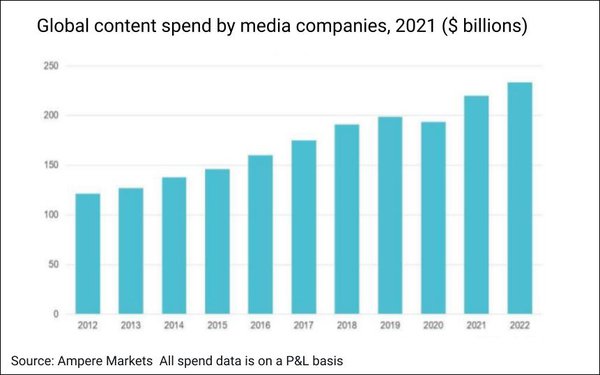

Despite the global economic pressures, studios are continuing to invest in producing new, unique content as rapidly as possible. Disney is on track to spend $33 billion this year as WBD invests $23 billion and Netflix between $17 billion and $18 billion.

While everything was seemingly on life support for the past two years, IBC spotlighted new production tools and workflows that are increasingly scalable, expandable, and efficient for projects of almost any size and complexity. VP (virtual production) merges techniques and technologies from the film and video game industries. As VR (virtual reality) has evolved, so have the cost and production savings.

In-film assets such as props and backgrounds are increasingly being conceptualized and created during pre-production, saving time during the production process. VP and LED screens have made it possible to eliminate the need for actors to perform in front of an array of greenscreens while responding to things they can’t really see, and the animated backgrounds can respond to camera movement with accurate parallax. The result is completed visual effects shots on set that the entire crew can see, with perfectly matched lighting and camera movements, thus reducing reshoots and months of post-production. The Mandalorian is indeed proof that high-quality content can be economically produced in a virtual environment, and filmmakers everywhere are eager to take advantage of the new creative platform.

VP has also enabled content owners/creators to take advantage of reliable, more economical 8K camera technology and cloud-based workflows, which are becoming the foundation for meeting unbending project deadlines. With many restrictions lifted, projects like Netflix’s recently released The Gray Man were produced with a judicious mix of VP and location production shot with multiple 8K camera rigs. Netflix also shot the project in the highest resolution possible to future-proof it, so it could be streamed in a wide range of frame rates to meet network performance limitations around the globe for optimum consumer enjoyment.

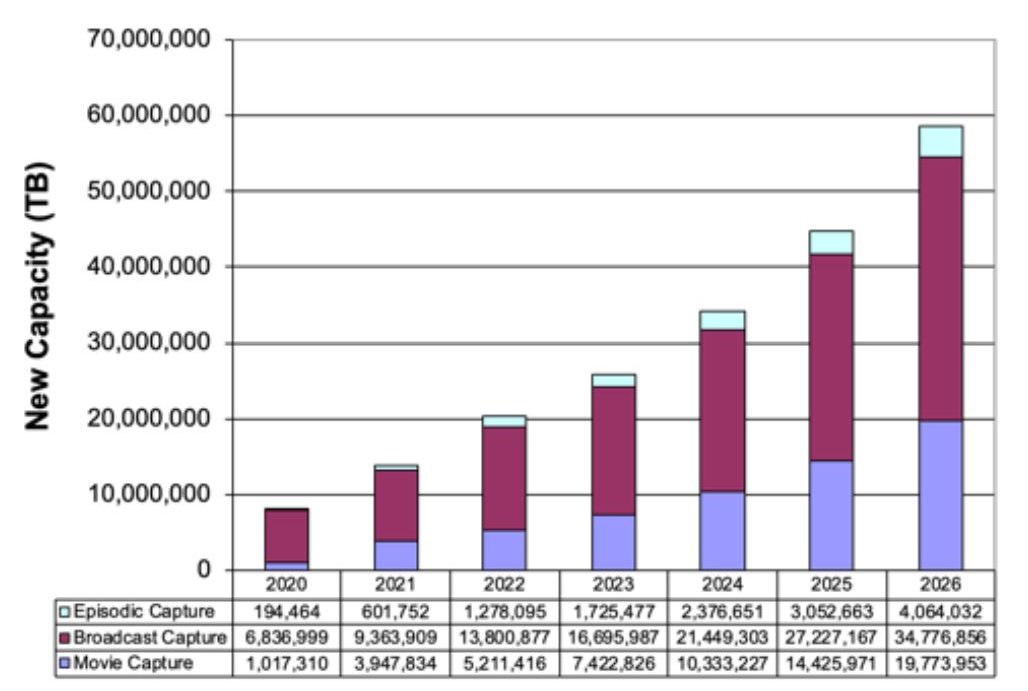

Tom Coughlin of Coughlin Associates noted that content creators are using the highest-quality digital cameras at the highest resolution possible to future-proof their projects. Even when the film or show will not be delivered in 8K, they want to capture it in the highest quality, highest resolution possible.

Resolution is the number of pixels per image; the higher it goes, the finer the image detail and the more immersive the content.

To further future-proof their work, production teams are using high-performance cameras, whether Red Digital Cinema’s RED or Blackmagic Design’s URSA models, at the highest frame rate possible, from 60 fps to 120 fps. The best film/show cameras also incorporate HDR (high dynamic range) for greater detail across the complete lighting range, greater color depth or color precision, enhanced color gamut/color range, and RAW image capture to ensure the creative team has all of the content possible for now and in the future.

Top Gun: Maverick and soon-to-be-released (December) Avatar: The Way of Water were shot at 60 fps and 8K for today… and tomorrow.

The result has been a dramatic increase in the data captured and stored on set and in the cloud. For an increasing number of films/shows, video capture is rapidly approaching hundreds of petabytes of digital content that must be reliably, safely, and economically stored on set and throughout the production process.
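To get a feel for how quickly those numbers add up, here is a rough back-of-the-envelope sketch. The figures are our own illustrative assumptions, not any camera maker's specifications: an 8K UHD frame of 7680 x 4320 photosites, 12 bits per photosite of uncompressed RAW data, and 60 fps. Real cameras apply RAW compression, so actual files are smaller.

```python
def raw_bytes_per_second(width, height, bits_per_photosite, fps):
    """Uncompressed sensor data rate in bytes per second.

    All inputs are illustrative assumptions; real cameras compress RAW data.
    """
    bits_per_frame = width * height * bits_per_photosite
    return bits_per_frame * fps / 8


def terabytes_per_hour(bytes_per_second):
    """Convert a bytes-per-second rate to decimal terabytes per hour."""
    return bytes_per_second * 3600 / 1e12


# Hypothetical single camera: 8K UHD, 12-bit RAW, 60 fps.
rate = raw_bytes_per_second(7680, 4320, 12, 60)
print(f"{rate / 1e9:.2f} GB/s, {terabytes_per_hour(rate):.2f} TB per hour")
```

Under those assumptions, a single camera generates roughly 3 GB every second, or about 10.7 TB for each hour it rolls, before any compression. Multiply that by several cameras and weeks of shooting and the storage challenge becomes obvious.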

Capturing, managing, and tracking camera output has become a vital task for today’s DITs and data wranglers. Solutions such as Frame.io have become a critical component in the production process by providing a fast, easy, secure way to get footage from the cameras on set to collaborators, eliminating delays throughout the production and post-production processes.

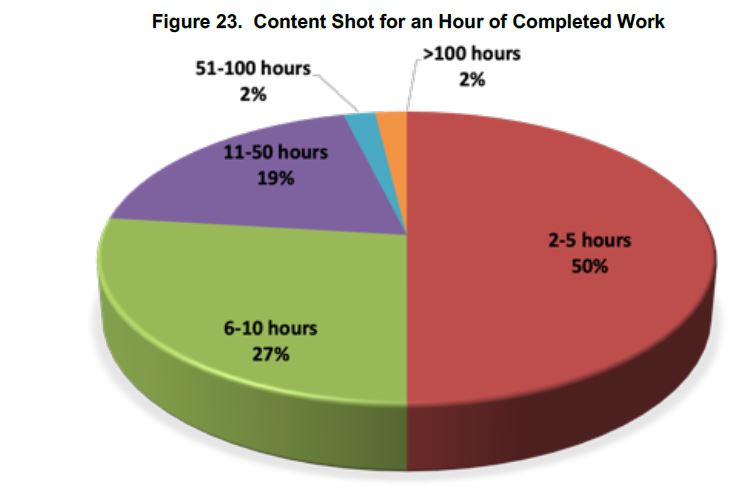

Most of the cameras used for today’s film/episodic projects use flash memory, and to meet tight production schedules, filmmakers tend to keep cameras rolling and capture more data than may ultimately be needed… again, just in case.

It may appear to be overkill at first blush, but with the majority of today’s content born digital, the cost of reshooting scenes (in both time and money) is far higher. In addition, while flash media can be reliably used multiple times, most filmmakers will reuse it three to four times before retiring it to the archives. While this is being done, Frame.io and similar workflow solutions also capture/store insurance copies (a complete copy of the digital data that is filed and never touched) of the project.
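The idea behind an insurance copy can be sketched in a few lines. This is a generic illustration using Python's standard library, not a description of how Frame.io or any other product works; the function names `sha256_of` and `make_insurance_copy` are hypothetical. The sketch copies a clip to an archive folder and confirms, by comparing checksums, that the copy is bit-for-bit identical before the camera card is reused.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, read in chunks to limit memory use."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def make_insurance_copy(source, archive_dir):
    """Copy a clip into archive_dir and verify the copy matches the original.

    Hypothetical helper for illustration only. Raises IOError on a mismatch.
    """
    source = Path(source)
    archive_dir = Path(archive_dir)
    archive_dir.mkdir(parents=True, exist_ok=True)
    target = archive_dir / source.name
    shutil.copy2(source, target)  # copies file contents plus timestamps
    if sha256_of(source) != sha256_of(target):
        raise IOError(f"Checksum mismatch for {source.name}; do not reuse the card")
    return target
```

Only after a call such as `make_insurance_copy("A001_C003.mov", "/archive/day_12")` returns without error (both paths are made up for this example) would the media be considered safe to wipe and reuse.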

Remote post-production (editing, sound mixing, color correction/grading, visual effects, etc.) was already being widely used by film/episodic producers prior to 2020. It grew up quickly in the past two years and has proven its viability and value, as we can see with the rush of audience-appealing theatrical tentpoles and the constant flow of unique content for streaming services around the globe.

We admit we weren’t major supporters of cloud storage and collaborative post workflows in the past (we always liked the idea of seeing someone doing stuff across the room, until that became impossible). Today, we wonder how projects ever got completed before cloud-based work.

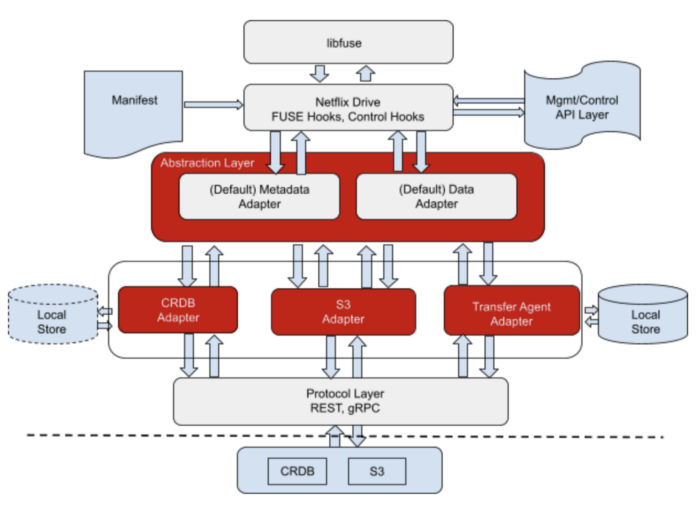

Workflow/production tools from Adobe, Avid, EditShare, Blackmagic (DaVinci Resolve), Atos (BNCS+), and others have proven invaluable in allowing broadcasters and filmmakers to work with the best post-production specialists possible, regardless of their location. We were a little taken aback, though, a month before IBC at FMS (Flash Memory Summit) when we saw Netflix on the program advancing the idea of a better cloud-based, edge workflow solution. It turns out the tech-born streaming service wanted to ensure their content providers’ petabytes of data and media assets could be processed anywhere on the globe. They produce and serve content in more than 190 countries, so moving project assets quickly, accurately, and economically is rather important.

They survived/thrived the past two years in part by developing what Netflix’s Tejas Chopra called Netflix Drive for cloud-based video production. And yes, most of the workflow runs on flash memory, hence a subject that should be of interest to the storage folks as well as the content creation industry. It probably should gain even more interest/excitement because Chopra noted that the company, after proving the technology for their own projects, plans to make it available using open-source code, so it can be used by any business (including their streaming competitors) at no cost… a helluva deal!

Surprisingly, Chopra and the Netflix team haven’t been shy about explaining how Netflix Drive kept them producing a steady stream of content over the past two years and how any content creator/producer can use it as well. They’ve published several in-depth discussions/explanations of Netflix Drive, so any production/post team can speed their workflow while saving/protecting every bit/frame.

Sure, Netflix developed the solution for all of their content—regardless of who or where it was created—but it’s a cloud drive solution that works for any studio/media application. The company developed Netflix Drive to meet the global workflow requirements of today’s content teams to create, share, and work on media assets regardless of the location and, more interestingly, across any project.

Despite his abbreviated time during the FMS edge computing panel, Chopra pulled back the curtain to show how Netflix and its content partners have been able to keep projects on track, despite all the chaos we’ve all been through.

If we’re lucky, we’ll be able to get more updates at next year’s SMPTE (Society of Motion Picture and Television Engineers) conference and the HPA (Hollywood Professional Association) Tech Retreat.

Sure, the M&E industry is a tough business where everyone seems to be willing to do whatever it takes to get to the top, but sharing fundamental technology and production/post experience helps everyone and lifts all boats.

And as Charles Xavier said in Doctor Strange in the Multiverse of Madness, “Just because someone stumbles and loses their way doesn’t mean they’re lost forever.”

There’s still a lot of great content to be created for entertainment-hungry theatrical, appointment TV, and streaming viewers around the globe.

Is this a great industry or what?