What and who is new.

AR is like that science fiction movie you saw and keep waiting to see materialize in real life. It’s almost there at your fingertips; you can almost taste it. Almost.

The concept still excites and attracts developers, investors, conference organizers and attendees, and the press. AR will be as important and as integrated into our lives as AI, and as with AI, we’ll wonder how we ever got along without it. And, obviously, AI will enhance AR, while AR will help improve AI, a symbiotic relationship that will make our lives better, safer, and more efficient. The real irony is that, like AI, AR will become such a part of our lives that we won’t even think about it. It will just be there, and the idea of a conference for AR won’t make sense. What kind of AR? Head-up displays? Industrial AR? Mobile phone travel AR applications? In fact, segmentation is well on its way, which is a major phase in a technology’s acceptance.

Leap Motion

Leap Motion is a company that was founded to deliver human–computer interfaces. The company believes the fundamental limit in technology is not size, cost, or speed; it’s how we interact with it. “These interactions,” says David Holz, co-founder and CTO at Leap Motion, “define what we create, how we learn, how we communicate with each other. It would be no stretch of the imagination to say that the way we interact with the world around us is perhaps the very fabric of the human experience.”

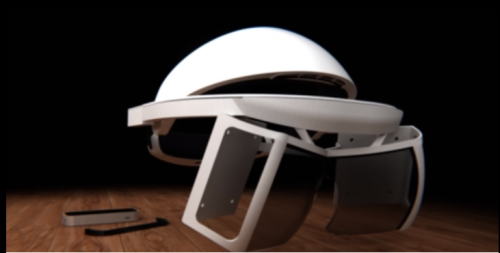

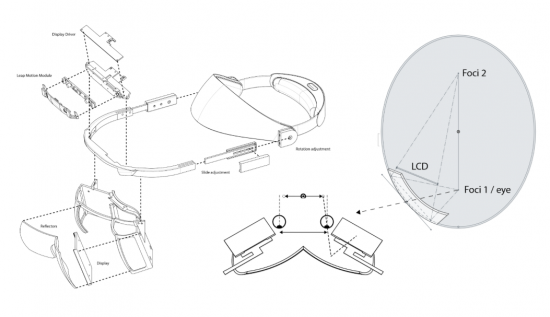

Leap Motion thinks this human experience is on the precipice of a great change. In preparation for that change, Leap Motion has built an HMD it calls “Project North Star.”

North Star is an augmented reality platform that Leap Motion thinks will allow us to chart and sail the waters of a new world, where the digital and physical substrates exist as a single fluid experience.

The first step of this endeavor was to create an augmented reality HMD with two low-persistence 1600 × 1440 displays capable of 120 fps and an FOV of over 100 degrees. It is coupled with the company’s famous 180-degree hand tracking sensor.

The team accomplished something else too: the design of the North Star headset is fundamentally simple and will cost under one hundred dollars to produce at scale.

Furthermore, Leap Motion will make the hardware and related software open source, with the goal that the discoveries from these early endeavors be available and accessible to everyone.

Leap Motion says they hope that these designs will inspire a new generation of experimental AR systems that will shift the conversation from what an AR system should look like, to what an AR experience should feel like.

The company will be disclosing the details of the hardware itself but emphasizes that it’s the experience that matters most. Leap Motion hopes its platform will let people forget the limitations of today’s systems and enable users and developers to focus on the experience, the software, and the interface, which is the core of what Leap Motion is about.

The story of the development of North Star can be seen here.

“The fundamental limit in technology is not its size or its cost or its speed, but how we interact with it,” added Holz.

Daqri sees 3D

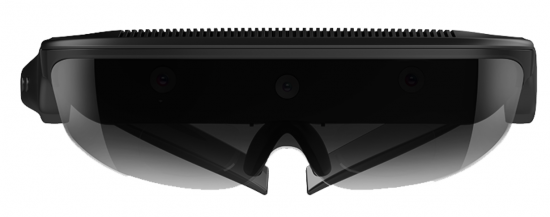

Daqri, developer of the hard-hat AR helmet, has expanded its technology and know-how and developed the Daqri smart glasses.

The glasses offer a 44-degree FOV, a 6th generation Intel Core m7 Processor, and a suite of sensors to capture valuable information about the surrounding environment.

It’s the sensor array that is most interesting. The headset includes an Intel R200 depth-sensing camera, plus a dedicated 120-degree grayscale tracking camera and a Lepton-3 thermal camera. Daqri has also designed and built a 6DOF positional tracker. A depth-sensing camera makes total sense when you think about the challenge of placing computer-generated images on top of what the wearer is seeing, having them register correctly, and making them stick while the wearer moves around.

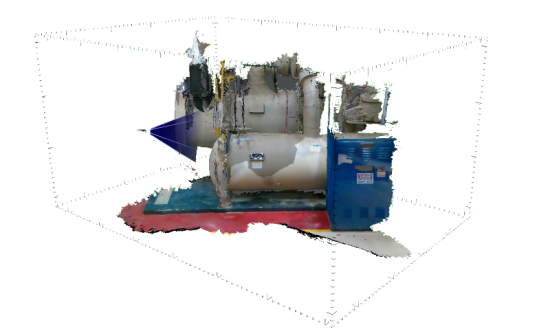

But if you’re sensing the environment, why not capture and measure it too? And so Daqri developed, and is now introducing, Daqri Scan. Designed to let anyone critically assess an actual facility or piece of equipment, Daqri Scan captures environments as photorealistic, shareable 3D models.

Daqri Scan is ideal for retrofits, installations, and change detection. Daqri Scan models can be easily used by platforms from software vendors such as Autodesk or Siemens as well as popular 3D tools and engines like SketchUp or Unity.

The glasses are available now for $4,995, and the software suite is being released this month.

AntVR

AntVR was founded in Beijing in 2014. The company is dedicated to the development of VR and AR products. After its first successful crowdfunding campaign on Kickstarter, the company received investment from Sequoia Capital. Over the past few years, the company has produced several VR products—over 1 million of which have been sold in Europe, Asia, and North America. The company has also established close co-brand collaboration with well-known companies including Lenovo, Motorola, OnePlus, and AOC.

Now, the company is stepping into the AR market with another Kickstarter campaign to fund its AR product, the AntVR MIX. The glasses will have a 96-degree FOV, two 1200 × 1200 displays with a 90-Hz refresh rate, and 6DOF inside-out tracking with SLAM, and will be compatible with SteamVR. There is a video of the headset shot through its lenses with an iPhone X here.

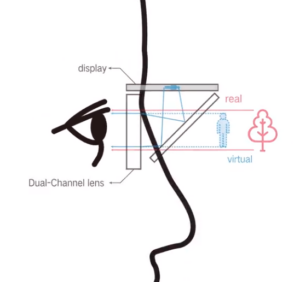

The display is above the wearer’s eyes and reflected via a half-silvered mirror to the viewer’s eyes.

The dual-channel mixed optical system has a compound structure with multiple layers. The key component is a set of dual-channel lenses with two different optical channels for enlarging the display light and bypassing natural light to present AR vision with a large FOV.

Content is key for any AR glasses, which is why AntVR has joined the SteamVR community to tap into its libraries. MIX connects to a PC to run games. Games with a dark background can be used on MIX, which will show the real environment instead of the dark area. It’s a new approach to AR with existing content. A demo and explanation can be seen here.
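Why dark game backgrounds give way to the real environment can be illustrated with a minimal sketch. It assumes a simplified additive model, in which the half-silvered mirror just adds display light to the light coming from the real scene; that model, the function name `perceived_pixel`, and the color values are illustrative assumptions, not AntVR's published optics.

```python
# Simplified additive see-through model (an assumption for illustration):
# the eye receives real-world light plus display light, per color channel.

def perceived_pixel(real, display):
    """Combine a real-world color and a display color, each an (r, g, b)
    tuple with channels in the range 0.0-1.0, clamping each sum at 1.0."""
    return tuple(min(1.0, r + d) for r, d in zip(real, display))

room = (0.25, 0.5, 0.25)  # hypothetical real-world color behind the lens

# A black game pixel adds no light, so the wearer sees the room unchanged.
black_result = perceived_pixel(room, (0.0, 0.0, 0.0))

# A bright game pixel adds its light on top of the room.
bright_result = perceived_pixel(room, (0.5, 0.0, 1.0))

print(black_result)
print(bright_result)
```

Under this model, black is effectively the transparent color, which is why content with dark backgrounds suits an optical see-through headset without modification.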

The MIX HMD weighs 130 g (4.59 oz) without the cable. It will sell for around $500, and AntVR will ship the units to backers by the end of 2018.

Black Rainbow’s Ghost

Black Rainbow, LLC is a design studio based in Marina Del Rey, California, and was founded in August 2015 by Jean Helfenstein and Iva Ilieva. Helfenstein had been at Tesla as a creative technologist, and at Apple in the same capacity before that. Ilieva was the design lead at Nokia.

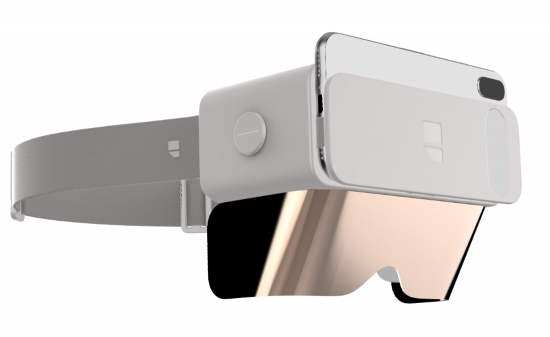

The Ghost HMD mounts a smartphone in the front like the Samsung Gear VR (and its many imitators), but unlike the Gear VR, the phone is above the viewer’s eyes, and its image is reflected down to a semi-transparent reflective screen. The magnetically attached front screen/visor can be replaced with an opaque screen, reflective on the inside, to make the device an immersive VR HMD. This is a similar approach to that of Phase Space’s Smoke VR/AR HMD (see: AR glasses for normal people).

Black Rainbow says the Ghost has an FOV of 70 degrees through the use of its circular lenses. Image quality is a function of the phone: up to 538 ppi and a display resolution of up to 2880 × 1440 with phones such as the Pixel XL and Pixel 2 XL.

The phone camera remains exposed to enable inside-out tracking powered by ARKit on iOS and ARCore on Android.

The company says building apps for Ghost is easy. Since it is powered by a smartphone, the company and developers can leverage some of the best libraries out there, such as ARKit/SceneKit, ARCore, and Google VR. When Black Rainbow starts shipping, it will provide sample code to get started with iOS, Android, Unity, and WebXR.

Available in two colors, Ghost white and Onyx black, the Ghost has an SRP of $99, currently reduced to $79 as an Indiegogo special. The company doesn’t have accurate weight data yet because it has only 3D-printed prototypes at this point. Black Rainbow will be showing its prototype at the Augmented World Expo at the end of the month.

Shadow Creator

Shadow Creator is a smart glasses developer out of Jinqiao, Shanghai that was founded in 2014 and has so far received nearly 100 million yuan (~$16 million) of financing. They introduced the Halo Mini AR headset last year, which had a limited 40-degree FOV. It was an early prototype and got criticized because the view from the tracking camera and the real-world view didn’t line up well. The tracking camera is located above the wearer’s eyes and thus has a slightly different view of the world. Targeted at gaming, the Halo Mini didn’t have multiple infrared sensors, which Microsoft’s HoloLens and Google’s Project Tango use to gain a sense of depth. The company claimed one could see a 140-inch screen 5 meters away through the 2-mm lenses.

The 1080p imaging system uses high brightness OLED chips, optical resin aspheric eyepieces (high definition, low distortion, big field of vision) and polycarbonate beam splitter lenses (high strength). The company says its Prism Optical path-correction algorithm ensures that images can be enlarged without distortion, thus restoring the true color of images.

It has built-in earphones, and a touch pad on the right side, plus an external gamepad hand controller.

The HMD uses a MediaTek MT6735 (64-bit, 4-core) or MT6797 (64-bit, 10-core, 2.0 GHz) SoC and has an 8MP/12MP camera with a Sony light-sensitive sensor, plus a Bosch nine-axis gyro and G-sensor. It has Wi-Fi, GPS, Bluetooth, and micro USB 2.0, and runs on a 3200-mAh battery that supports fast charging (5 V/2 A). The unit weighs 370 g and sells for $1,180.

This year the company introduced the Action One AR Smart glasses.

The Action One glasses have 6DOF tracking, a 45-degree FOV, and a 720p display; they are driven by a Qualcomm Snapdragon 835 and powered by a 4000-mAh battery.

The company’s next offering, the New Air, will have a 60-degree FOV.

The company will disclose more of the details about the New Air at the AWE conference.

Emteq is watching you

Most AR head-mounted devices look at the world and report to the wearer. Emteq’s glasses will look at the wearer and report to the world, or at least to someone or something external to the wearer. Emteq, based in Brighton and founded in 2015, uses artificial intelligence and tiny sensors to read the electrical signals generated by muscle movement.

Understanding emotion is the key to unlocking improvements in performance, behavior, and mood, as emotion drives behavior and behavior drives action.

Research by psychologist Paul Ekman concluded that facial expressions give the most reliable information about our emotions, culminating in the categorization of facial gestures and their emotional intent.

OCOsense is a wearable platform for measuring facial muscle activity and emotional responses through glasses and VR and AR headsets. Using a range of multi-modal, patent-pending sensor technologies, proprietary algorithms, and live data streaming, the company says it has enabled the collection and interpretation of human emotional response in real time in the real world.

The platform uses a range of facial sensing techniques, including electrical muscle activity, eye movement detection, heart rate and heart rate variability, stress response, and head position, to identify and recognize what your face is doing 1,000 times per second. The device’s AI engine tracks that information and translates it into your physical expression and emotional state in near real time.

The company will provide an API for developers and an iOS app to analyze data captured via the OCOsense glasses. The company hopes to have the glasses available by the end of the year; no price has been suggested yet.

What do we think?

AR, like VR, is subdivided into consumer and professional/commercial. However, AR has many more implementations, including HUDs, first-responder and hard-hat helmets, the famous F-35 fighter jet helmet, and someday maybe contact lenses. Virtually everyone is a potential user of AR glasses. Now, let’s assume that if someone doesn’t have access to a mobile phone, they’re not likely to be an AR user (ignoring the inconvenient fact that there are plenty of other ways to encounter AR, including digital signage, computers, standalone devices, fighter jet pilot helmets…). The UN estimates that 6 billion people have access to a mobile phone. Various analysts estimate just under 50% of the world’s population uses smartphones, which again seems a reasonable stat to further segment potential users. So let’s put the Potential Available Market of AR users at 3 billion. With an ASP of $100 for the glasses in the near-ish future, that’s a hardware market of $300 billion, plus the commercial market (probably at least another $1 billion) and the software application and toolset segments. It wouldn’t be difficult to imagine a PAM of over half a trillion dollars by 2020. It’s not even totally crazy, since populations keep growing, and we can hope conditions improve for people around the world, allowing them to think about technology that makes their lives easier, safer, or more fun.
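The sizing arithmetic above can be laid out as a short back-of-the-envelope sketch. The constants are the figures quoted in the paragraph; the variable and function names are illustrative, and the software and toolset segments are left out because no figure is given for them.

```python
# Back-of-the-envelope market sizing using the figures quoted in the text.
POTENTIAL_USERS = 3_000_000_000        # potential AR users assumed in the text
ASP_USD = 100                          # assumed average selling price of the glasses
COMMERCIAL_MARKET_USD = 1_000_000_000  # "at least another $1 billion"

def hardware_market(users, asp):
    """Consumer hardware market: one pair of glasses per potential user."""
    return users * asp

consumer_hw = hardware_market(POTENTIAL_USERS, ASP_USD)
hw_plus_commercial = consumer_hw + COMMERCIAL_MARKET_USD

print(f"Consumer hardware: ${consumer_hw / 1e9:,.0f} billion")
print(f"With commercial:   ${hw_plus_commercial / 1e9:,.0f} billion")
```

The estimate is most sensitive to the ASP and to the assumption that every potential user buys one pair, so either constant can be changed to test a more conservative scenario.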

It’s no wonder so many companies have entered, or said they would enter, the market. And like all nascent markets there will be some broken hearts, smashed dreams, and aggressive acquisitions.

The perfect consumer AR glasses haven’t been built yet, but there are some very promising early examples. The number and complexity of the challenges in building AR spectacles that would be no more embarrassing or uncomfortable to wear than a pair of sunglasses are huge, but the job is doable. The glasses will be an accessory to your smartphone, and as such you can guess who will be the ultimate winners in the market.

Regardless of the machinations of the market’s development, I personally can’t wait to get some AR glasses—the more you can see, the more you can do.