More than Moore’s Law is at play.

A workstation can be anything from a remote workstation running in a virtual machine to an ultra-powerful machine that is effectively a small supercomputer. Moore’s law is the basic transistor-level enabler of processor advancements, but the processors themselves gain new features and functions with every generation. When Intel x86 processors were first deployed in a workstation with Windows, back in 1997, one of the salient features was an integrated floating-point processor. Since then, expanded memory managers, security, and communications features have been added, and the processor has gone from one 32-bit core to 28 64-bit cores, plus a 512-bit SIMD processor and transcoder engines.

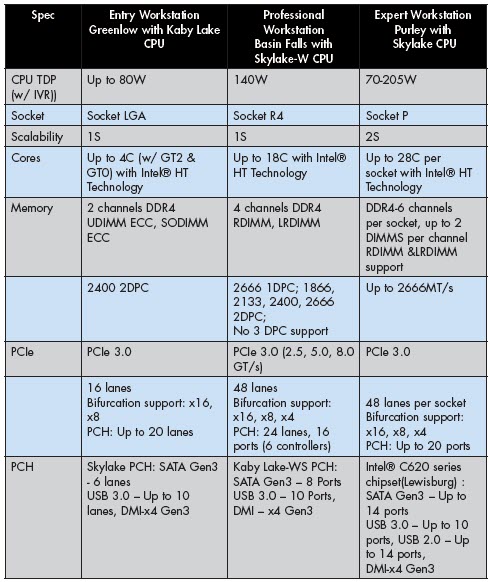

Intel has been leading the workstation CPU market for years, and the previous generation processor, the Broadwell, was a performance leader that delighted millions of workstation users. Intel continued with its processor developments and introduced the next generation of Intel Xeon processors for workstations—the Intel Xeon Scalable processor (Dual Socket capable) and the Intel Xeon W processor (Single Socket capable). Both processors are built on the Skylake architecture, with more cores, higher frequency, more cache, and expanded memory management and PCIe lanes. To differentiate the workstation-class Xeon processors from the server-class processors, Intel has added the “W” designation to the name.

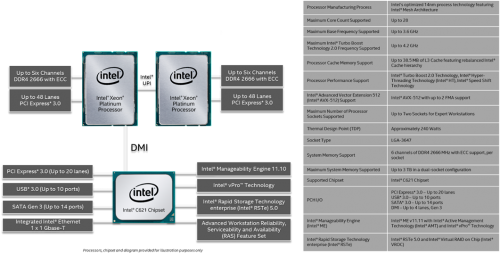

Intel designs its CPU architecture and gives it a code name; in this case it is Skylake. That design can find its way into many different form factors, from laptops to supercomputers. The processor is also named, for example the Intel Xeon Platinum processor. Those processors are then used in a platform that is specifically designed for them. The platform includes supporting chips that provide USB 3.1 type A to C, Thunderbolt 3, gigabit Ethernet, SATA, and other ports. In the case of the Skylake platform, the supporting chip for the Intel Xeon Scalable processor is known as the Lewisburg PCH, and for the Intel Xeon W processor it is the Kaby Lake WS PCH. It can get confusing at times because various people use the different names interchangeably. It gets even more complicated because the processor, supporting chip, and platform all have arcane part numbers too, which denote frequency, core count, and other esoteric elements. And, just to make you even crazier, things are expressed in acronyms.

However, you can get a better sense of the relationships through a block diagram like the following.

The bottom line is that the 28-core Skylake processor is coming to the workstation market, and things will never be the same. With Intel’s launch, these are the new Intel Xeon processors:

- The E5-1600 product family is now the new Intel Xeon W processor (Single Socket). This is the first product designed with a “W” for workstations in its name.

- The E5-2600 product family is now the new Intel Xeon Scalable processor (Dual Socket).

We’ve come a long way from that first Windows-Intel-based workstation in 1997. It came with a 266-MHz Pentium II processor and could, on a good day, hit 48.3 MFLOPS. The top-of-the-line workstations of the day had a 300-MHz Intel Pentium II processor that could deliver 62.1 MFLOPS.

By comparison, in June 1997, the fastest supercomputer in the world was ASCI Red at Sandia National Laboratories, which supported stewardship of the US nuclear arsenal, and it could do 1.3 TFLOPS. It occupied 1,600 ft², with 104 racks holding 9,298 CPUs and 1.2 TB of RAM, needed 850 kW of power, and cost $46 million ($67 million today).

Today you can have a supercomputer small enough to sit under your desk that beats it. For example, Dell just announced the Precision 7920 Tower with dual Intel Xeon Platinum processors, each with up to 28 physical cores, running at up to 4.2 GHz Turbo frequency, capable of 4 TFLOPS across 112 threads. That is about three times as fast as the fastest supercomputer of just 20 years ago, for a few ten-thousandths of the cost and on the order of one thousandth of the power. Not only that, almost anyone can use it, whereas very few people could use that magnificent ASCI Red, which, by the way, gave U.S. taxpayers a pretty good ROI over its years of service.
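A rough check of these ratios takes only a few lines of arithmetic. The sketch below uses the ASCI Red figures and the 4 TFLOPS workstation figure quoted in this article. The workstation price and power draw are assumptions: the price borrows the under-$15,000 system figure mentioned elsewhere in this piece, and the 1,400 W draw is a hypothetical full-load value chosen only for illustration.

```python
# Figures quoted in the article for ASCI Red (June 1997).
ASCI_RED_TFLOPS = 1.3
ASCI_RED_POWER_W = 850_000       # 850 kW
ASCI_RED_COST_USD = 46_000_000   # 1997 dollars

# Workstation: 4 TFLOPS is quoted in the article.
WS_TFLOPS = 4.0
WS_COST_USD = 15_000   # ASSUMPTION: upper bound on system price
WS_POWER_W = 1_400     # ASSUMPTION: hypothetical full-load draw


def ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, rejecting a zero denominator."""
    if denominator == 0:
        raise ValueError("denominator must be non-zero")
    return numerator / denominator


speedup = ratio(WS_TFLOPS, ASCI_RED_TFLOPS)
cost_fraction = ratio(WS_COST_USD, ASCI_RED_COST_USD)
power_fraction = ratio(WS_POWER_W, ASCI_RED_POWER_W)

print(f"Throughput: {speedup:.1f}x ASCI Red")
print(f"Cost:       about 1/{1 / cost_fraction:,.0f} of ASCI Red")
print(f"Power:      about 1/{1 / power_fraction:,.0f} of ASCI Red")
```

Changing the two assumed constants shifts the cost and power ratios directly, so they are best read as orders of magnitude rather than precise claims.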

What could you do with such a machine?

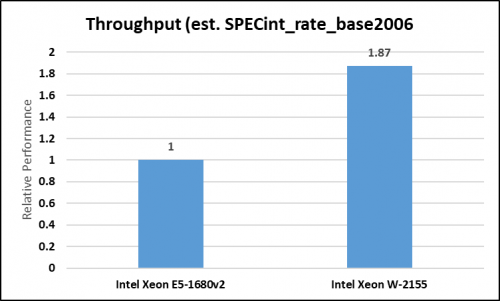

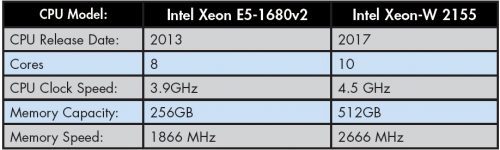

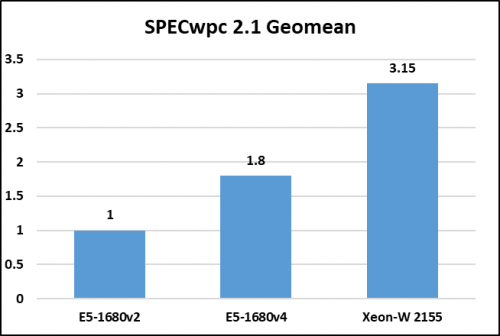

We compared professional workstation workloads. Compared to a four-year-old workstation based on the 3.9 GHz E5-1680 v2, the new Skylake-based Xeon W delivers an average of 87% more performance.

An 87% improvement in performance would be fine if all you wanted to do was render faster, or maybe load files faster, but the real payoff comes in being able to do what you couldn’t do before.

Famous computer graphics scientist Jim Blinn has an adage: as technology advances, rendering time remains constant. The point is that artists and directors, for example, aren’t trying to reduce the time it takes to produce a movie (although their bosses tell them that should be their goal); they want to make the most beautiful movie they can. The same is true for engineers who are running simulations on ever more complex parts. Each time Intel raises the performance, and the number of threads that can be processed simultaneously, simulation users celebrate. Why? Because they can make their models more fine-grained. Finer granularity in an FEA simulation makes for a more reliable, more efficient, and more finely tuned final product. Consider what that means when designing an airplane wing strut: a lighter and stronger airplane is not only more durable, it is also more fuel efficient!

The age of threads

It’s taken a long time, but the benefit of parallel processing is undeniable: performing processes simultaneously provides huge gains in productivity and accuracy. The problem has been the legacy apps that simply couldn’t be threaded and recompiled. Slowly, the industry has built new apps with threading as an integral part. Ironically, the ISVs doing that haven’t made any fanfare about it; it has simply been a given that any new app would naturally be multi-threaded.

The good news/bad news is that if a user is stuck with old-fashioned, single-threaded legacy software, the gains from a new processor and GPU are going to be slight, and will come primarily from clock speeds (of the CPU, GPU, and memory). But very few users rely on only one app, and most apps (except some developed in-house) have been upgraded or replaced completely with new versions.
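One common way to reason about why single-threaded software gains so little from extra cores is Amdahl's law, which this article does not name but which fits the argument. The sketch below is illustrative only: the function name `amdahl_speedup` and the parallel fractions are assumptions, and the 56-thread count is borrowed from the single-socket figure quoted in this piece.

```python
def amdahl_speedup(parallel_fraction: float, threads: int) -> float:
    """Upper bound on speedup when only part of a workload can run in parallel.

    parallel_fraction: share of the run time that can be threaded (0.0 to 1.0).
    threads: number of hardware threads available.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if threads < 1:
        raise ValueError("threads must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / threads)


THREADS = 56  # single-socket thread count quoted in the article

# Hypothetical workloads, from fully serial legacy code to well-threaded code.
for fraction in (0.0, 0.5, 0.9, 0.99):
    bound = amdahl_speedup(fraction, THREADS)
    print(f"{fraction:.0%} parallel -> at most {bound:.1f}x on {THREADS} threads")
```

A fully serial app gets no benefit from the extra threads under this model, which is why its gains come down to clock speed alone.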

Consider the new Intel Xeon Scalable (SP) Platinum series Skylake processors: 28 physical cores running at up to 3.8 GHz Turbo frequency, capable of 2 TFLOPS across 56 threads. Double that in a dual-socket system and you have 112 threads at 3.8 GHz approaching 4 TFLOPS. It’s almost unbelievable. Drop a modern add-in board into the system, such as a GPU designed for compute, and you have a theoretical 16 TFLOPS in a system that can fit under your desk, use conventional wall-socket power, and doesn’t require any extra air-conditioning. Oh, and the whole thing would be under $15,000.
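The thread and TFLOPS totals in this paragraph are simple sums and products, sketched below. The `Workstation` class and its field names are hypothetical. The per-socket figures come from the text, and the 12 TFLOPS accelerator figure is an assumption inferred by subtracting the roughly 4 TFLOPS of CPU throughput from the 16 TFLOPS system total.

```python
from dataclasses import dataclass


@dataclass
class Workstation:
    """Hypothetical container for the headline figures quoted in the article."""
    sockets: int
    cores_per_socket: int
    threads_per_core: int          # 2 matches the 28-core / 56-thread figure
    tflops_per_socket: float
    accelerator_tflops: float = 0.0

    @property
    def threads(self) -> int:
        return self.sockets * self.cores_per_socket * self.threads_per_core

    @property
    def cpu_tflops(self) -> float:
        return self.sockets * self.tflops_per_socket

    @property
    def total_tflops(self) -> float:
        return self.cpu_tflops + self.accelerator_tflops


single = Workstation(sockets=1, cores_per_socket=28, threads_per_core=2,
                     tflops_per_socket=2.0)
dual_with_gpu = Workstation(sockets=2, cores_per_socket=28, threads_per_core=2,
                            tflops_per_socket=2.0,
                            accelerator_tflops=12.0)  # ASSUMPTION: 16 - 4

print(single.threads, single.cpu_tflops)
print(dual_with_gpu.threads, dual_with_gpu.cpu_tflops, dual_with_gpu.total_tflops)
```

These are theoretical peaks added together. Real applications rarely keep the CPUs and an accelerator saturated at the same time.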

Benchmarks tell part of the story. Intel will tell you one can get a 300% performance improvement over a machine that is four years old (Xeon E5 v2). We think it is only 215%, because the improvement is the performance ratio n minus 1, or an 80% improvement from the last generation to this one, based on the same data. Both readings can be true. They just may not apply to you. Every user has his or her own workload, so the best a benchmark can do is give you an indication of what you might achieve. However, over the years I have yet to hear of one person saying they didn’t get their money’s worth by getting a new workstation. The math is simple: do more, or better, work in the same time, and weigh that against the cost of an engineer doing the work.
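The "simple math" can be sketched as follows. The helper names are hypothetical, and the engineer's hourly cost and the hours per day spent waiting on compute are invented purely for illustration. Only the 87% average improvement and the under-$15,000 system price come from this article.

```python
def improvement_pct(speed_ratio: float) -> float:
    """Convert a performance ratio n into a percent improvement, (n - 1) * 100."""
    return (speed_ratio - 1.0) * 100.0


def hours_saved_per_day(speed_ratio: float, compute_bound_hours: float) -> float:
    """Hours recovered per day when compute-bound work runs speed_ratio times faster."""
    if speed_ratio <= 0:
        raise ValueError("speed_ratio must be positive")
    return compute_bound_hours * (1.0 - 1.0 / speed_ratio)


def payback_days(workstation_cost: float, hourly_cost: float,
                 speed_ratio: float, compute_bound_hours: float) -> float:
    """Working days until the recovered engineer time equals the purchase price."""
    daily_saving = hourly_cost * hours_saved_per_day(speed_ratio, compute_bound_hours)
    if daily_saving <= 0:
        raise ValueError("no time is saved, so the purchase never pays back")
    return workstation_cost / daily_saving


SPEED_RATIO = 1.87          # 87% more performance, as quoted in the article
WORKSTATION_COST = 15_000   # upper bound quoted in the article
HOURLY_COST = 75.0          # ASSUMPTION: hypothetical loaded cost of an engineer
COMPUTE_BOUND_HOURS = 2.0   # ASSUMPTION: hypothetical hours/day waiting on compute

days = payback_days(WORKSTATION_COST, HOURLY_COST, SPEED_RATIO, COMPUTE_BOUND_HOURS)
print(f"A {SPEED_RATIO}x ratio is a {improvement_pct(SPEED_RATIO):.0f}% improvement")
print(f"Payback in roughly {days:.0f} working days under these assumptions")
```

The result is sensitive to the two assumed inputs, so anyone making a purchasing case should substitute figures measured on their own workloads.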

A quick comparison

The generational differences are impressive, and illustrate what you can do when you make billions of tiny transistors available to computer architects.

However, as mentioned above, it’s the application of all those speedy little transistors that is the real magic, and primary benefit to users and organizations.

What do we think?

Workstations don’t break, and aren’t cheap, so they don’t get replaced every year, or even every other year. In fact, they seldom get replaced more often than every three or four years, and only then if there is a significant improvement in an application and/or the hardware. Although Moore’s law has been fairly predictable over the last forty years, with the move to 14 nm processes there is more being accomplished than just clock speedups. With a smaller feature size, more transistors can be packed into a chip, and when that is done, more functions and faster, wider communications are realized, along with specialized capabilities such as security, AI, and power management.

Intel has always been a leader in process technology and is therefore in a perfect place to recognize and exploit the inherent opportunities of compute density and throughput. The Skylake processor is the latest instantiation of that skill, and the users are the beneficiaries.