Facial animation technology is advancing on several fronts. We profile several teams and products on the cutting edge.

By Kathleen Maher

Facial animation is a very tough business for a number of reasons. When we talk to each other we watch each other’s faces, and when we watch animated characters we do the same. There’s a lot going on in faces: we watch the eyes, the mouth, and everything in between. We’ve been trained to look for cues: Is this person lying? Does this person like me? Is this person good? Does this person need my help?

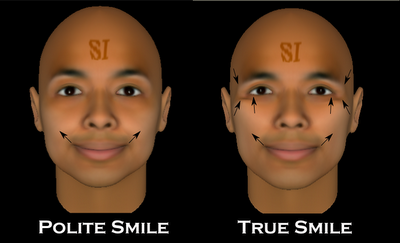

In 1978, Paul Ekman and Wallace V. Friesen of the University of California at San Francisco developed the Facial Action Coding System (FACS), which breaks facial expression down into basic units they call action units. Their work was based on a system originally developed by Swedish anatomist Carl-Herman Hjortsjö. One of Ekman’s major contributions was to establish the universality of human expressions. He traveled the world with photos of faces and asked people from a variety of cultures, including people in remote areas far removed from the influences of modern culture, to interpret the expressions they saw. Everyone knows how to read a face.

Ekman and Friesen then went on to develop FACS, and the system has value in numerous contexts. It can be used, for instance, to teach law enforcement professionals how to recognize danger in a face, to help psychologists better understand their clients, and to show animators how to animate a dragon’s face. Ekman and Friesen continue to refine their system; their work has had an influence on animators as well as in the field of psychology. Malcolm Gladwell has written a fascinating article on the subject, The Naked Face, in the New Yorker.
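To make the idea of action units concrete, here is a minimal sketch of how a few of them might be stored and combined. The action unit numbers and names follow the published FACS numbering, and the three combinations are commonly cited prototypes; the table layout, the function names, and the overlap score are assumptions made for illustration, not part of FACS itself.

```python
# Illustrative only: a handful of FACS action units (AUs) by number.
ACTION_UNITS = {
    1: "inner brow raiser",
    2: "outer brow raiser",
    4: "brow lowerer",
    5: "upper lid raiser",
    6: "cheek raiser",
    12: "lip corner puller",
    15: "lip corner depressor",
    26: "jaw drop",
}

# Hypothetical lookup table of prototype expressions. Real FACS coding
# also records an intensity for each action unit, which is omitted here.
EXPRESSIONS = {
    "happiness": frozenset({6, 12}),
    "sadness": frozenset({1, 4, 15}),
    "surprise": frozenset({1, 2, 5, 26}),
}


def describe(expression):
    """Return the names of the action units that make up an expression."""
    return [ACTION_UNITS[au] for au in sorted(EXPRESSIONS[expression])]


def best_match(active_aus):
    """Pick the expression whose AU set overlaps most with the active AUs.

    Overlap is measured as intersection size divided by union size.
    """
    active = set(active_aus)

    def score(name):
        target = EXPRESSIONS[name]
        return len(active & target) / len(active | target)

    return max(EXPRESSIONS, key=score)
```

Calling `describe("happiness")` lists the cheek raiser and the lip corner puller, the pairing usually associated with a genuine smile. An animator's rig would drive a control for each unit rather than look up names, but the bookkeeping is similar.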

Too close to realism

The subject of facial animation comes up routinely, every time there is a new animated title which pushes the bounds of animation. Good facial animation can sell a movie and it can certainly kill a movie. Mark Sagar is credited with pioneering the application of Ekman and Friesen’s work in animation. Early examples include Toy Story, Monster House, and King Kong. But FACS isn’t the solution; it’s another tool. FACS is designed to help people read faces, not necessarily to create them, even though it does provide a roadmap to expressions. Probably what’s most seductive about FACS is the ability to codify something as mysterious as human expression.

New breakthroughs in facial animation have come in the work of Sagar and others to animate the faces in Avatar. There was frustrated outrage when the actors Zoe Saldana, Sam Worthington, and Sigourney Weaver were not mentioned for Oscar consideration. It was almost as if their work was so good that people judging the performances praised the animation without recognizing that it was being driven by human performance. It will get harder to draw the lines between actor performance and animation as new tools emerge.

There is another aspect to the challenge: our natural aversion to the nearly human. We humans are pretty fussy. Put us face-to-face with something that might be human and all our sensors go off. It is as if we’ve been hard-wired to watch out for zombies and alien invasions from the very start. Animated human characters bother us if anything tips us off that what we’re watching isn’t human.

For that reason, some of the best examples of facial animation are for characters that are non-human or stylized humans, which one supposes covers 9 ft. creatures from Pandora as well as negligent children in Toy Story and various fish, squids, ants, and animals cavorting through modern animation. Weta Digital’s work on Gollum continues to push the envelope with a character that is increasingly believable with every film outing.

Nonetheless, animators will never give up the desire to create utterly realistic human creatures, and 2013 will provide new opportunities for more advances. New game platforms are coming with more compute power, and the power of PC graphics is always growing.

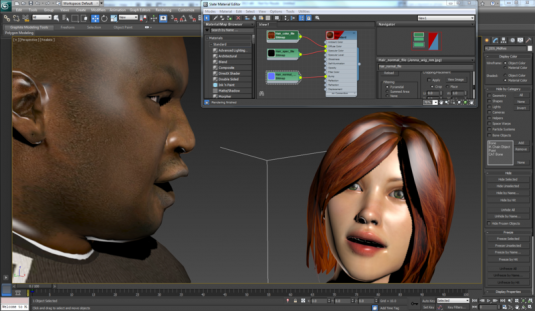

Introducing Digital Emily

When it comes to realistic human faces, one part of the equation is probably done: the ability to create a realistic human face. Paul Debevec and his team at the University of Southern California did an astounding job of recreating actress Emily O’Brien’s face for the Digital Emily Project. They used an array of cameras on the USC Light Stage to capture a 3D model of the face and used the photos as a surface map. The light stage has been used to create many realistic faces, but there are many other examples of realistic human faces including the work done by Luminous Studio for Square Enix on their games. It’s possible to put all the tools available to the digital artist to work to create a realistic face, but it’s when the faces start talking that the trouble starts.

Ironically, gaming is the most difficult and most alluring realm for facial animation. Players using interactive games want an immersive feel and they would love the idea of being able to watch a face for cues. For now most of the realism comes from the cinematics, the videos created around and in between the game play. The real-time rendering required in gaming is yet another hurdle for facial animation, but this is where a great deal of innovative work is going on.

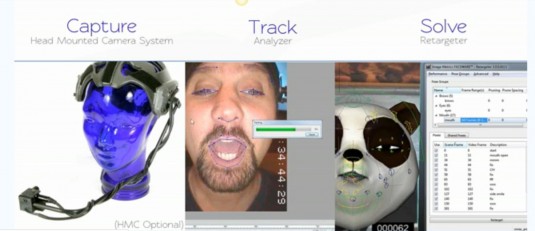

Faceware Technologies

Faceware Technologies is one of a few companies building professional-level facial animation software. It came out of the game developer world, and it first showed its new technology last week at the Game Developers Conference. Faceware began life as Image Metrics, which was founded in 2000 by a team coming out of the University of Manchester, England. The team developed a system of markerless facial motion capture and used the technology to do facial animation for game companies. For much of its life, the company has operated as a service operation.

One of their earliest customers is Rockstar Games, maker of Grand Theft Auto, Max Payne, and Red Dead Redemption. They also did work on the movie The Curious Case of Benjamin Button, which won an Academy Award for its breakthroughs in facial animation. The company’s technology has been used in more than 80 games, movies, and commercials. Most recently they’re talking about the work they’ve done for Crytek’s Crysis 3 and Square Enix’s Sleeping Dogs.

In 2010 the company introduced its first standalone products for facial animation. Faceware was spun out of Image Metrics in 2012. Faceware has moved to Sherman Oaks and Image Metrics has moved to El Segundo. The two companies still share resources including R&D, which is in El Segundo.

Retargeter and AutoSolve

At GDC 2013, the company announced its latest product, Retargeter 4.0 with AutoSolve. Pete Busch, VP of Business Development at Faceware Technologies, says Retargeter represents a key piece of the puzzle for animators because it can cut down the amount of work necessary to animate faces by a factor of 10 or more. Faceware’s core technology allows them to capture facial movements with a camera and no markers. The company has its own head-mounted camera system, but the performance can be captured by any camera, including a phone. The software identifies key facial features such as the mouth and the eyes, and tracks movements to create an animation capture file. As Busch says, once you know where the eyes and the mouth are, you know where the rest of the face is.

Retargeter is Faceware’s tool for applying motion capture data to a model. Retargeter 4.0 is a breakthrough thanks to the new AutoSolve feature, says Busch, because it gives animators the ability to automatically assign animations so they can quickly get to a starting point to animate the face. Animators can define their characters by classifying expressions used frequently and linking them to expressions on their characters. As a result their character will have characteristic looks as identifiable as Clark Gable’s smile or Christine Baranski’s smirk. The latest Retargeter has a new Expression Set feature that gives animators a quick tool to match a character’s face with the proper expression. It’s interesting to watch the software in action because once the character’s expressions are defined, the characters can take on a life of their own independent of the actor playing them.

Key features

Faceware is offering its tools as plug-ins for Autodesk products for free. The catch is that, to use the animation, you have to create Faceware IMPD motion files, and that’s what’s going to cost you money. The company charges by the amount of footage processed; depending on the project, charges may be gratis or a flat rate per project.

Faceware has nailed down the complex task of facial animation with several key features including:

Expression Sets: A group of pre-defined, standard facial poses unique to each character in a project. Faceware says they derived the standard poses from their experience in creating facial animations for their clients and benefitted from the research in facial animation. Faceware’s Expression Sets help create a character as animators define the style and look of the universal shapes and movements of a character to drive AutoSolve.

AutoSolve: Combining the data from IMPD files generated from Faceware Analyzer along with pre-defined facial poses or Expression Sets, Retargeter’s AutoSolve can automatically create animation on a video performance, giving artists a great first pass in a matter of minutes. Artists can get to work faster, fine-tuning the animation through the Retargeter pose-driven workflow.

Tracking Visualization: Retargeter 4.0 makes use of an improved IMPD file structure that contains the complex data needed for AutoSolve—it also contains a helpful way to see the tracking information that comes out of Faceware Analyzer. This feature enables artists to understand better what is going on in the facial tracking process to better utilize Retargeter.

Batch Functionality: The command line abilities built into Retargeter 4.0 allow any function within Retargeter to be scripted, letting artists create a complete batch pipeline for facial animation. Hundreds of shots can be set up overnight. Retargeter 4.0 will support a library of MEL, Python, and MAXScript commands.

Shared Pose Thumbnails: Retargeter 4.0 gives artists the ability to add thumbnail images for every pose, so that other artists on the team have a reference for every shared pose and can find what they need faster. Artists have the ability to custom define each thumbnail, such as focusing on a particular area of a pose or showing the entire facial pose.
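The AutoSolve idea described above, combining tracked data with a character’s Expression Set to produce a first pass, can be illustrated with a toy sketch. This is not Faceware’s algorithm, which the company has not described here; it swaps in simple inverse-distance weighting, and every name and number in it (the two tracked measurements, the pose table, the rig controls) is hypothetical. It does not read IMPD files.

```python
import math

# Hypothetical expression set: each named pose pairs what a tracker might
# measure on the actor (two made-up values: mouth width, jaw opening) with
# the rig control values an animator chose for the character in that pose.
EXPRESSION_SET = {
    "neutral": {"actor": (0.50, 0.00), "rig": {"smile": 0.0, "jaw_open": 0.0}},
    "smile":   {"actor": (0.80, 0.05), "rig": {"smile": 1.0, "jaw_open": 0.1}},
    "shout":   {"actor": (0.55, 0.90), "rig": {"smile": 0.0, "jaw_open": 1.0}},
}


def solve_frame(tracked, expression_set=EXPRESSION_SET, eps=1e-9):
    """Blend the rig values of all poses, weighted by closeness to the frame."""
    weights = {}
    for name, pose in expression_set.items():
        dist = math.dist(tracked, pose["actor"])
        if dist < eps:
            # The frame lands exactly on a defined pose: use it directly.
            return dict(pose["rig"])
        weights[name] = 1.0 / dist ** 2  # closer poses count for more

    total = sum(weights.values())
    controls = {}
    for name, weight in weights.items():
        for control, value in expression_set[name]["rig"].items():
            controls[control] = controls.get(control, 0.0) + value * weight / total
    return controls


def solve_shot(frames):
    """First-pass animation: one dict of rig controls per tracked frame."""
    return [solve_frame(frame) for frame in frames]
```

Because the animator chooses the rig values for each pose, the same tracked performance produces a different look on each character, which is the sense in which the poses define the character. An artist would still refine the result by hand.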

Peter Busch says that the early response to Retargeter 4.0 has been really enthusiastic and he’s seeing it as a “game changer” for the creative community. “You can get to an animation in a matter of minutes rather than a matter of hours,” says Busch.

Tools for the future

During an interview, we talked with Faceware a bit about the companies struggling in the VFX world; Busch observed the companies having the most problems are older companies who have invented their own tools and their own ways of doing things. He, like many other people involved in animation and effects, says tools that can standardize some processes such as facial animation can save valuable time.

In the months since Faceware has been on its own, independent of Image Metrics, Busch says the pace of development has been much faster. Retargeter is the result of 12 years of development, he says, but they’ve made tremendous strides in the last year. The challenge was making the big transition from service to product. There were probably some hard lessons about the difficulty of building tools and interfaces for strangers compared to rolling their own tools for in-house use. Busch says it took the team about two and a half years to get from Retargeter 2 to Retargeter 3. They were able to get to Retargeter 4 within four or five months.

On the one hand, we talk about the crisis in the VFX community as established companies have shut down, but in crisis, there is opportunity. New companies are starting up worldwide to handle animation and effects jobs. Faceware is seeing its potential market expand worldwide. Busch is looking at a future where products like Faceware will be available in the cloud. He says a game called Republique from Kickstarter developer Camouflaj is coming in the fall. The iPad title is a first for Faceware, signifying the toolset is finally affordable for even the smallest of independent developers.

Faceware is another example of an off-the-shelf tool that automates difficult tasks in digital content creation. It doesn’t replace talent. In the Faceware workflow, there are actors behind the faces. And there are artists in front, fine tuning the facial movements to enhance the characters. Not only does technology like this enable smaller companies to do professional work, but it has the potential to expand the tools to more people. Figuring out what people will actually want to do with this technology is tricky, but the idea of making a character talk like you do is pretty cool, even if it is simply a joy ride through the uncanny valley.

The back story

Since their early days in Manchester, the Image Metrics team long harbored the ambition to create standalone tools for facial animation. And, as the company developed its standalone technology, it saw a wider use for it in consumer-based applications, avatars, game player pieces, etc. That market has been notoriously slow to materialize. Image Metrics went public through a reverse merger with ICLA, International Cellular Technologies, in 2010 to raise capital to pursue these new opportunities. The company raised money from Acorn Capital, Axiomlab, Close Brothers Investment, Digital Animation Group, MC Capital Europe and Saffron Hill Ventures. Ranjeet Bhatia of Saffron Hill Ventures is a director of Image Metrics and also of Faceware. In fact, the Faceware board is a subset of the Image Metrics board.

Now that Faceware has spun out, Image Metrics is continuing forward as a public company, IMGX, albeit one that’s not making much money right now. In 2011, IMGX issued its last SEC 10-K before spinning out its breadwinner Faceware. The company reported revenue of $7.02 million, up 17% from 2010 when the company reported $5.95 million. At the time, the company said the increase was due to sales of its new release of a standalone product. The funders have stayed with the company, demonstrating their faith in both Faceware and the future consumer uses of the technology.

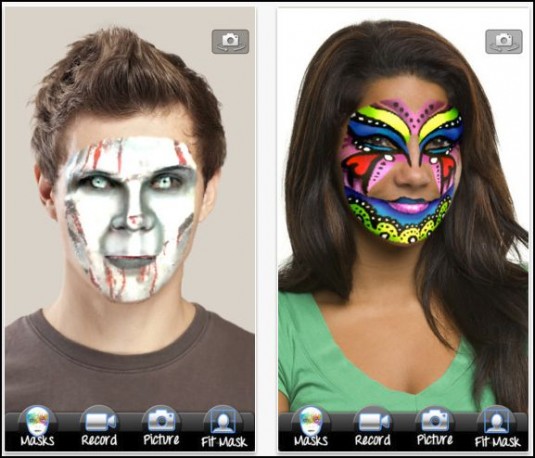

Now Image Metrics is selling software for the iPad including Mojo Masks, which lets users make masks to add to their faces. It’s not breakout stuff, but the company sells packs of masks for 99 cents and promises more apps based on their technology, which can map expressions for avatars, games, and other possible consumer applications.

Runtime faces

In spite of great tools, faces are still a major challenge for computer graphics. Nvidia CEO Jen-Hsun Huang regularly cites the work of Japanese robotics professor Masahiro Mori, who coined the term “Uncanny Valley” to describe the region where artificial human faces look realistic, but not real. The effect causes revulsion in humans. Avoiding the uncanny valley has led animators to create stylized human characters that don’t look real or, in the case of gaming, to limit face-to-face encounters and hide characters’ faces. But the search goes on for better tools to create faces.

To demonstrate the power of its latest graphics board, the Titan, Nvidia introduced its runtime facial animation tool, FaceWorks, and a new character named Digital Ira at the recent GPU Technology Conference. In collaboration with the ICT (Institute for Creative Technologies) at USC and Paul Debevec, the team created a realistic face using the Light Stage, a spherical array of cameras that can capture a full head or body image and, using photogrammetry, create a 3D model.

But first, to demonstrate how difficult facial animation actually is, Huang dragged out poor old simple Dawn, Nvidia’s buxom digital fairy; she smiled and posed on screen, and the wind blew through her hair. Nvidia was able to demonstrate great skin, fabulous hair, and nice teeth, but an overall picture that’s not really convincing, and “just a little bit creepy,” said Huang. He then described other efforts including Angelina Jolie’s character in Beowulf, which Huang described as creepy beyond belief. Huang told the audience at GTC, we try character animation every generation and although it’s difficult, it’s worth it.

Enter Ira. Digital Ira is the result of creating a model from a real person. So, he is inherently more realistic than Dawn, an animation created using 3D animation software. The team then went to work animating, capturing, and creating expressions for Ira. The team captured 30 expressions. They came up with 32 GB of data, which they were able to compress into 400 MB, which the new Titan graphics board can manage. The expressions were stored almost like shaders, which are called up programmatically. An 8K instruction set puts Ira’s geometry through its paces. Running through some quick math, Huang said, running Digital Ira could be done within two teraflops—half the capability of Nvidia’s Titan, which has 2,600 cores.
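The figures quoted for Digital Ira are easier to appreciate with the arithmetic written out. The sketch below uses only the numbers reported above; the choice of decimal units and the assumption that the 400 MB is spread evenly over the 30 expressions are ours, and the variable names are illustrative.

```python
# Figures quoted in the keynote, as reported in this article.
RAW_GB = 32          # captured expression data
COMPRESSED_MB = 400  # size after compression
EXPRESSIONS = 30     # number of captured expressions
IRA_TFLOPS = 2.0     # stated cost of running Digital Ira

# Assumption: decimal units (1 GB = 1000 MB). With binary units
# (1 GB = 1024 MB) the ratio comes out closer to 82:1.
compression_ratio = RAW_GB * 1000 / COMPRESSED_MB

# Assumption: the compressed data is divided evenly among expressions.
mb_per_expression = COMPRESSED_MB / EXPRESSIONS

# "Half the capability" implies a total budget of twice Ira's cost.
implied_gpu_tflops = IRA_TFLOPS / 0.5

print(f"compression ratio: {compression_ratio:.0f}:1")
print(f"average per expression: {mb_per_expression:.1f} MB")
print(f"implied GPU budget: {implied_gpu_tflops:.0f} teraflops")
```

On these assumptions the compression is roughly 80 to 1, each expression averages a little over 13 MB, and the implied budget for the board is about four teraflops.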

Digital Ira looks good. There’s still some creep factor when he talks, but he’s an obvious advance thanks to the realistic image capture tools used in his creation. After all, he is created from a real person.

As an aside, Beowulf, featuring a digital Angelina Jolie, was directed by Robert Zemeckis, an effects enthusiast who has been an explorer in the uncanny valley since making The Polar Express, with Tom Hanks playing a conductor with none of the charm of Tom Hanks. He also made A Christmas Carol in 2009 with Jim Carrey. Audiences have basically rejected Zemeckis’ digital characters even though he has pushed the science of performance capture forward with each film. Zemeckis has taken a break from performance capture and directed the live action film Flight with Denzel Washington. When a movie director finally gets performance capture right, it might well be Zemeckis who does it. He has the advantage of respecting the use of effects in service of the story rather than the other way around.

FaceFX at GDC

Now that Sony is gradually releasing details about its upcoming PlayStation 4 machine, middleware partners are also stepping forward. OC3 Entertainment makes FaceFX, a Windows-based facial animation system for game developers. The system includes lip-synching tools, a curve editor, and a GUI featuring sliders for fine-tuning animation. It also has scripting tools. Artists working with FaceFX work from sound files to animate their models.

Just before GDC, OC3 announced a brand new animation runtime component optimized for the PlayStation 4. The company says the increased performance of the PlayStation 4 will put the spotlight on characters and facial animation for this generation. More characters can be on screen at the same time, characters can be more complex, and faces can have more polygons and bones.

The product is sold primarily as a standalone product, FaceFX Studio Professional for $899 or in an unlimited version for projects, which gives companies access to as many seats as they need. The $899 price is a reduction from earlier pricing at $1,995. OC3 has had plug-ins for Autodesk products—Max, Maya, Softimage, and MotionBuilder—and they can be used to import animations created in FaceFX Studio and to define bone poses but they’re not necessary because the same thing can be accomplished using the FBX pipeline.

OC3 has announced support for Autodesk’s new Project Pinocchio, which will make digital faces available to animators for free; they can then use FaceFX to create content.