The creative community comes out in big numbers to see what magic Adobe has cooked up for them. This year’s conference in San Diego saw the largest attendance yet and the best weather imaginable. During the conference, users learned how Adobe is using AI, extending the use of 3D to more people, leveraging mobile and the cloud, and making it easy for everyone to create.

Adobe Max 2016 is becoming a new conference for a new company. That may be difficult to see sometimes because so many people seem to live and toil in their own separate neighborhoods of Adobe’s Creative Cloud. In fact, one of the company’s long-term challenges has been to make it easier to wander between the Adobe applications and through the alleys connecting them. This year, Adobe Max featured some upcoming products that help provide a little glue between different types of creative work.

One phrase that was often repeated was “we’re doing this completely in the open.” It underlines Adobe’s practice of releasing new technology in beta for people to play with and comment on, but it is also a comment on the company’s release of its underlying APIs to developers for add-on products and to enable exchange between products.

An extension of that idea of openness is Adobe’s drive to the cloud. CEO Shantanu Narayen said several times that the company is driving towards “cloud native” applications and that we’d see an increasing amount of cloud integration. The advantage of the cloud is openness on a level that’s not possible when content, assets, and ideas are all captive on different machines and drives.

New technology for 2017

Adobe unveiled several new apps and technologies that will be coming to Creative Cloud 2017. Most are still under development, but if there is a common theme, it is that Adobe is trying to make the creative process easier. Probably what impressed us the most was Project Felix from the Photoshop group, but it’s hard to say because the company showcased so many significant capabilities.

Photoshop has been incorporating 3D elements for quite a while now, and so has After Effects, but Project Felix addresses a very specific task that challenges illustrators and designers: incorporating 3D images into Photoshop compositions for publications, illustrations, advertising, and artwork. Product Manager Zorana Gee said that artists who have spent their lives creating gorgeous compositions using Adobe’s tools often have little or no experience with the language of 3D. For many people, maybe most people, 3D tools can be difficult to learn. With Project Felix, artists can bring in 3D objects from other tools (as .obj files), select from a palette of pre-made objects, or buy objects from Adobe Stock (which just happens to have added 3D objects to the products it now offers). Project Felix has push-button align-to-horizon and environmental lighting features to make situating a 3D model within a photo background easy. The company is using V-Ray technology to provide fast rendering. For now, rendering uses the CPU, but Gee said the team will be hooking up GPUs soon. In addition, the company is using technology from Allegorithmic to enable textures for the 3D models.

Project Felix will be available as a Beta application at the end of the year.

Adobe has been showing XD (Experience Design) for over a year. It’s a clever tool that allows content creators, including web designers and application designers, to wireframe an interactive project, collaborate, and produce a working prototype so a team and clients can see how everything will work together. This has been a tough nut for many companies – how to enable designers and developers to communicate effectively. At the conference we heard customers worry that once they had done all the work of creating a prototype in XD, they weren’t sure how to transfer that work to their development environment. In a chat with people working on XD, we were told that this is an area of ongoing development, and that the goal is to create a tool that can fit comfortably into development environments. No one wants to redo work. Now, people can share a cloud-based version of the prototype to see how everything will work.

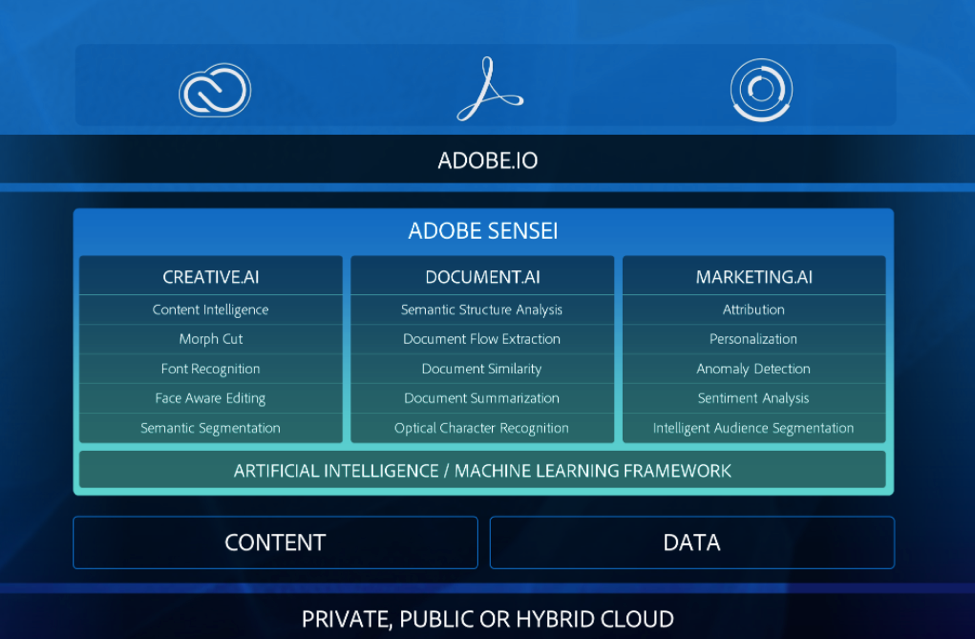

Perhaps the most groundbreaking technology showcased at Adobe Max this year is one many people won’t even notice: Adobe Sensei. Sensei is the brainchild of Abhay Parasnis, Adobe’s Executive Vice President and Chief Technology Officer, who came to Adobe in July 2015 after having worked at IBM, Microsoft, and Oracle. Most recently he was COO at software application developer Kony. Narayen told an audience of press and analysts at a Q&A session that Parasnis’ charter is to weave all the disparate technologies in Adobe together into an ecosystem that can share and thrive on its developments. Sensei is a good example of how this could happen.

Using AI and machine learning, applications can learn to recognize similarities, user habits, and preferences, and to identify stuff, for lack of a better word. Sensei is first showing up in Adobe products as a tool that can search through the millions of items in Adobe Stock and identify similar items. The example used is finding a picture on Instagram, coveting it, and instead of outright stealing it, searching Adobe Stock for something similar that is licensable. Sensei has been used to improve facial recognition and automatically smooth wrinkles. It can do intelligent compositing. No, you don’t have to take the computer’s advice, but it certainly provides a useful starting point.

Sensei can also interpret and compare documents, and it can be used with Adobe’s marketing tools to understand user responses or preferences.

Sensei is being built by drawing on all the information coming through Adobe’s various knowledge inputs, from questions to user behavior and preferences, to build a knowledge base. What distinguishes Adobe’s approach to machine learning and AI is that it is trying to find the keys to creativity, make it easier for creators to get to work faster, and reduce the need to stop, shift applications, search images, etc.

In March 2016 Adobe announced that it had passed the 15 million mark for images. It has far surpassed that number since then. At this year’s Max the company also announced an alliance with Reuters to provide access to that company’s huge stockpile of photography. In addition, the company announced the addition of 3D models that people can use for Project Felix, After Effects, and Photoshop.

Summary

So, how is Adobe changing? Narayen told press and analysts that the days of siloed companies trying to keep customers penned into their products through proprietary formats and closed pipelines are over. The next step on this journey is a cloud-based infrastructure that helps eliminate the boundaries between hardware platforms, file formats, and client machines, and that can make assets, communications and collaboration, and immense processor resources available to everyone. Adobe isn’t racing for a finish line; it’s taking a long journey and bringing its customers and partners along for what will hopefully be a very productive ride.