No manual needed for voice queries.

As we confidently tell ourselves, technology works when it disappears.

Perhaps the biggest AI shift will slip in right under our noses. In fact, maybe it already has, in the form of voice interaction. As evidenced by fruitless arguments with Siri, voice can be among the most frustrating interfaces when it doesn’t work. But several long-term relationships with Alexa prove that we can learn to love a machine that talks to us. Lord, it’s a rocky dating phase, though.

Voice is at the forefront of AI development across all platforms for some really simple reasons. The number one reason is that we all know how to use our voices. Developers tell us voice is also powerful because it creates a relationship that companies can further exploit, whether to advertise goods or just to strengthen their brand relationship with customers.

At Transform 2018, a recent conference discussing voice and AI, VentureBeat founder Matt Marshall said over 41% of businesses will be using AI to work with customers. I’m not sure where Marshall got his stat, but a recent Harvard Business Review study found that only 20% of the more than 3,000 executives surveyed are currently using AI in their businesses, while 41% said they are planning to implement AI or have a pilot program in progress. So, let’s go with that.

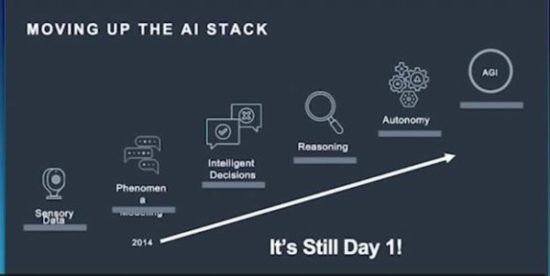

The point is that chat and voice are evolving rapidly thanks to a number of factors, not the least of which is the advancement in AI driven by cloud-based processing.

Throughout this fall, we have seen companies including Google, Amazon, Microsoft, and Adobe address the importance of voice. Earlier this year at Google I/O, Google’s Scott Huffman outlined the basic changes Google is making to voice input to make it more natural, including some context awareness that allows follow-on questions without having to keep using the signal “Hey Google.” In addition, Google has enabled multiple requests such as “play the movie and turn out the lights in the living room.” The trick, says Huffman, is that the AI has to be able to tell a sentence that contains separate requests from one that simply contains a compound phrase. He uses the example, “who won the game between the Warriors and the Pelicans,” versus “what is the roster of the Warriors and the Patriots.” The first is a single question; the second is actually two requests: what is the roster of the Warriors, and what is the roster of the Patriots. At the Transform conference, he said voice makes AI accessible to more people.
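To make the distinction concrete, here is a toy sketch of that sorting problem. It is not how Google does it; a real assistant would rely on trained language models. The verb list, the question pattern, and the function name `split_requests` are all made up for illustration.

```python
# Toy heuristic only: hypothetical word lists stand in for a trained model.
ACTION_VERBS = {"play", "turn", "set", "stop", "open"}   # assumed command verbs
DISTRIBUTIVE_PREFIXES = ("what is the roster of ",)      # assumed question pattern


def split_requests(utterance: str) -> list[str]:
    """Split an utterance into separate requests using simple rules."""
    text = utterance.strip().rstrip("?.").lower()

    # Case 1: a question that applies to each item in an "X and Y" list.
    for prefix in DISTRIBUTIVE_PREFIXES:
        if text.startswith(prefix):
            items = text[len(prefix):].split(" and ")
            return [prefix + item for item in items]

    # Case 2: "and" followed by a command verb starts a new request;
    # otherwise the "and" is part of a compound phrase and is kept.
    parts = text.split(" and ")
    requests = [parts[0]]
    for part in parts[1:]:
        words = part.split()
        if words and words[0] in ACTION_VERBS:
            requests.append(part)
        else:
            requests[-1] += " and " + part
    return requests


print(split_requests("Play the movie and turn out the lights in the living room"))
print(split_requests("Who won the game between the Warriors and the Pelicans?"))
print(split_requests("What is the roster of the Warriors and the Patriots?"))
```

Hard-coded rules like these break down quickly, which is exactly why the problem gets handed to AI.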

Amazon’s Alexa has a head start in the voice sweepstakes, but she’s not so good with context or multiple requests. At Micron’s Insight ’18 conference, Prem Natarajan, Amazon President for Alexa and NLU, talked about the ways in which Amazon is also enabling more conversational interactions with customers. His focus at Insight ’18, though, was on how AI is helping speed development as a critical mass of information is built. Amazon is building an informational lake through its stores (the sales and reviews), AWS services, the queries that come to Alexa, and so on.

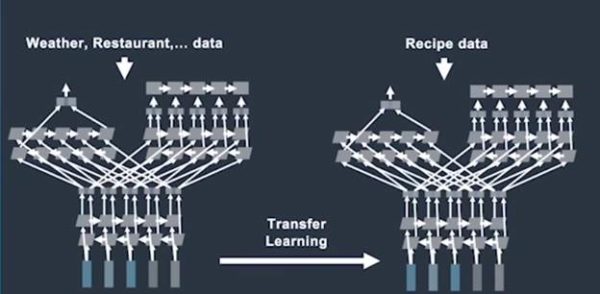

As a result, says Natarajan, the data that was mined and developed for informational systems can make it much easier to build new applications. Natarajan uses the example of a recipe application that can answer the question “what should I make for dinner tonight?” because it already has information about restaurants, the weather, preferences, etc. Also, can Alexa learn from the experiences of others? If a customer corrects Alexa about the name of a TV show, or the channel information, can that learning carry over to other users asking similar questions? Techniques such as active learning and transfer learning can reduce the amount of work programmers have to do to create applications, and the applications can be better.

Natarajan also talked about the use of context to build more productive interactions between Alexa and her customers. Knowing where a person is can give Alexa more information about the query. For instance, if a person asks for Hunger Games, Alexa might be able to make a better choice about whether the person wants to see a movie (because they’re in the living room) or read one of the books (because they’re in the bedroom). Likewise, if Alexa knows who is asking, she can make more informed choices about books, music, calendars, etc.
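As a rough illustration of the Hunger Games example, the sketch below picks a format based on the room the request comes from. The room-to-format table, the `Context` class, and `resolve_title` are hypothetical names invented for this example; nothing here reflects how Alexa actually works.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Context:
    """What the assistant knows about the request (hypothetical)."""
    room: Optional[str] = None


# Assumed mapping from room to the format a person most likely wants there.
ROOM_PREFERENCE = {"living room": "movie", "bedroom": "book"}


def resolve_title(title: str, available_formats: list[str], context: Context) -> str:
    """Choose which format of a title to offer, using the room as a hint."""
    preferred = ROOM_PREFERENCE.get(context.room)
    if preferred in available_formats:
        return preferred
    # No usable hint: fall back to the first available format.
    return available_formats[0]


formats = ["movie", "book"]
print(resolve_title("Hunger Games", formats, Context(room="living room")))
print(resolve_title("Hunger Games", formats, Context(room="bedroom")))
print(resolve_title("Hunger Games", formats, Context()))
```

Knowing who is asking could be folded in the same way, as one more field on the context.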

Voice has the advantage of being direct. It doesn’t require a manual, but as one speaker at VentureBeat’s Transform conference said, there is no page 2 with voice. At Insight ’18, Natarajan said that screens are a superpower for voice input because the ability to show content may clarify a query or enable a final choice for shopping. The one thing we have to learn over and over is that voice is not precise. It’s open ended.

Microsoft VP Lili Cheng is leading research into voice interaction at Microsoft, and she also talked at Insight ’18 about the work she is doing. Cheng and her team at Microsoft Search Technology Center Asia (STCA) are working on understanding conversation. As an experiment, Microsoft created Xiaoice in 2014, a bot who lives in China and has a huge following of over 600 million people. She is not just an answer machine; she’s a conversationalist, and people in China spend a lot of time with her. Cheng says that Xiaoice is called on to write poetry, tell stories to children, offer relationship advice, etc. She’s invited to parties to join the conversation and she’s been a guest on TV shows.

Li Di, one of the early developers of Xiaoice in China, says that Xiaoice was created as an EQ-based bot, suggesting that emotional quotient is as important to the functioning of a successful bot as IQ. Di says, “while IQ is to response user’s demand about task completion, EQ is to keep and care about the relationship during interaction.”

Di says in the process of evolution, Xiaoice began by using retrieval mode, meaning the bot answers using a set selection of responses, but the STCA team has been able to add generative capabilities, which is how Xiaoice manages to create stories or write poems. As Di says, “Xiaoice is also a content provider.”
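For readers curious what “retrieval mode” means in practice, here is a bare-bones sketch: the bot scores a fixed set of canned responses against the user’s words and returns the best match. The responses, keywords, and the function name `retrieve_response` are invented for illustration and have nothing to do with Xiaoice’s actual implementation. A generative bot, by contrast, composes new text rather than picking from a list.

```python
import re

# Hypothetical canned responses, keyed by the words that should trigger them.
CANNED_RESPONSES = {
    "hello hi hey": "Hi there! How is your day going?",
    "sad upset lonely": "I'm sorry to hear that. Do you want to talk about it?",
    "poem poetry verse": "I'd love to share a poem with you.",
}
FALLBACK = "Tell me more."


def retrieve_response(message: str) -> str:
    """Return the canned response whose keywords best overlap the message."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    best, best_score = FALLBACK, 0
    for keywords, response in CANNED_RESPONSES.items():
        score = len(words & set(keywords.split()))
        if score > best_score:
            best, best_score = response, score
    return best


print(retrieve_response("I feel sad and lonely today"))
print(retrieve_response("What's the weather?"))
```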

At Insight ’18, Cheng outlined several key findings Microsoft has taken away from its work with Xiaoice, which inform the group’s future development:

- AI should be able to keep a conversation going

- AI should have social skills and a decent EQ (emotional quotient)

- AI should contribute to positive relationships with people

- New technologies being developed need to be added to a developer ecosystem to empower businesses to prosper, and for everyone to create solutions for their customers.

Cheng said the last point became evident as Microsoft worked with their enterprise customers to develop applications for AI. She says Microsoft has a division of developers that works with customers who may not have the resources to do their own research and development work around these emerging technologies. She said that they were surprised to discover that many of their enterprise customers want to take advantage of AI and voice to establish a relationship with their customers. But, she says, “people need to have control.” And, she says they need to own the interaction. One of the really interesting observations Cheng made was that companies are seeing voice AI as an opportunity to fill in some of the blanks in their relationships with their customers. For instance, most companies’ web pages were never designed to interface with people. And we all know how miserable current phone or web systems are. Most of all, companies want to create an experience that is a reflection of the company, its culture, and they want it to be unique.

People also need tools, says Cheng, so they can create their own applications, but those tools have to enable people to personalize interaction and add distinct personalities. To that end, Microsoft has gathered up the tools and learnings its teams have put together through working with customers and made them available as an open resource on GitHub under Microsoft/AI.

In an online chat with Lili Cheng, she said, “Our goal with the Cognitive Services (AI services for vision, speech, language and knowledge) and Bot Framework is to democratize AI development, so more people can create their own products with AI. The Bot Framework is available opensource on GitHub. You can download the tools, samples, and SDK (software development kit).”

If you’re interested in trying out Microsoft’s AI EQ, the company is experimenting with a salient bot called Zo in the U.S. You can talk to her via text in Skype. She soon will be able to speak, and VR (voice rec) is next. However, at this stage she can be a tad irritating as she tries a little too hard to be funny and hip.

It’ll get better. Microsoft has over 300,000 developers using the Bot Framework to build conversational apps.

Cognitive Services, which include speech, vision, language, and knowledge development, are services for Microsoft’s Azure cloud. They are not open sourced but can be used by accessing the APIs.

As this is being written, Microsoft has closed its deal to acquire GitHub, which it pledges to keep open as a resource for developers.

Tool kits

VentureBeat’s Transform conference highlighted companies building tools to help companies connect differently with their customers. For instance, Hyper Giant worked with TGI Fridays to create a bartender bot named Flanagan, after the Tom Cruise character in the movie Cocktail (remembering that movie was probably quite an AI feat in itself). People can talk to Flanagan and tell him how they’re feeling and what they might like, and he can whip up a personalized cocktail from the TGI Fridays list of ingredients. He can also remember you and what your tastes are. It’s a far cry from the generative abilities of Xiaoice, but Sherif Mityas, Chief Experience Officer at TGI Fridays, says Flanagan is helping reconnect a new generation to TGI Fridays and give the company’s brand a personality.

In another example, Reply.IO worked with the Cosmopolitan hotel in Las Vegas to create Rose, an agent who can take care of concierge tasks, provide tips on hotel activities, and alert customers to discounts. Cosmopolitan director of digital marketing, Mamie Peers, says that Rose has been a huge hit, and she believes Rose has helped increase the number of customers who return to the Cosmopolitan instead of trying out some other Vegas hotel.

Interestingly enough, at Adobe Max this year, the company showed off its new XD development tool with capabilities for voice. Right now, it’s the most rudimentary implementation, but XD is actually a pretty interesting tool for building something like a voice application since it’s a node-based environment that lets users wire content together to see what they can come up with. At Max, Adobe CEO Shantanu Narayen said Adobe’s apps are being voice enabled.

There are a myriad of tools out now to enable companies to implement some kind of voice interaction, as you’ll see if you try Googling tools for voice interaction or voice AI. Companies are building stockpiles of tools for bot and voice development on every major platform. IBM has tools and tutorials for development on Watson. Google has a range of freely available tools for its Tensor AI platform. At Google I/O last spring, the company also introduced Google Duplex, a voice assistant that can make phone calls for you. We do sort of love the idea of a robot working its way through a phone response tree. Amazon, of course, also offers tools for the development of voice apps. The better to grow Alexa with. This fall, we’re seeing a real upswell in the development of voice applications that we can only hope will keep us from throwing the phone out of the window one more time.