Frame.io opens its capabilities to photographers.

Adobe releases updates to its video products as NAB 2023 is about to start. The company focuses on the theme of working together, but its AI integration through Sensei gives Adobe Premiere Pro a valuable gift: the capability of text-based editing.

NAB 2023 is just days away, and Adobe is getting a jump on things by announcing video-related updates that are pertinent to conference-goers. The big news, however, is the addition of the Text-Based Editing feature, achieved through Adobe Sensei, in Adobe Premiere Pro, part of the Creative Cloud suite. Also, After Effects, which is celebrating its 30th anniversary, now includes a context-sensitive Properties Panel and other workflow improvements, among them native support for ACES and OpenColorIO. And Frame.io celebrates Camera to Cloud’s second birthday with new security features, native Camera to Cloud partners, and new cloud workflows, expanding from its previous video-only platform to photography and PDF documents.

It’s clear that these recent changes, particularly those pertaining to Sensei, Adobe’s machine learning and AI framework, are designed to save creatives precious time by automating and streamlining certain tasks. This is especially important today, as demands to meet the need for standout video content continue to increase. According to Adobe, the demand for content has doubled over the last two years and is expected to grow by five times over the next two years.

While AI is the headliner in this year’s Adobe announcements, there is a wider underlying focus. For that reason, this year Adobe has homed in on the theme “Create your best work all together now.”

“I really love this phrase because it has so many meanings, at least to me. It’s about collaborative editing and working together, no matter where you are. It could be about our apps, and even our partner solutions, all working together in concert to help you get the job done. Finally, it’s about being back together in person at shows like NAB,” said Paul Saccone, senior director of pro video marketing at Adobe.

What do users want? According to Saccone, Adobe questioned the pro community, and what matters most to them is quality, stability, and performance. “We believe that if we enable every creative stakeholder to participate in the process earlier and more often, all together, they’ll be able to create their best work,” Saccone added. And that is what Adobe is working to achieve with its products.

Premiere Pro

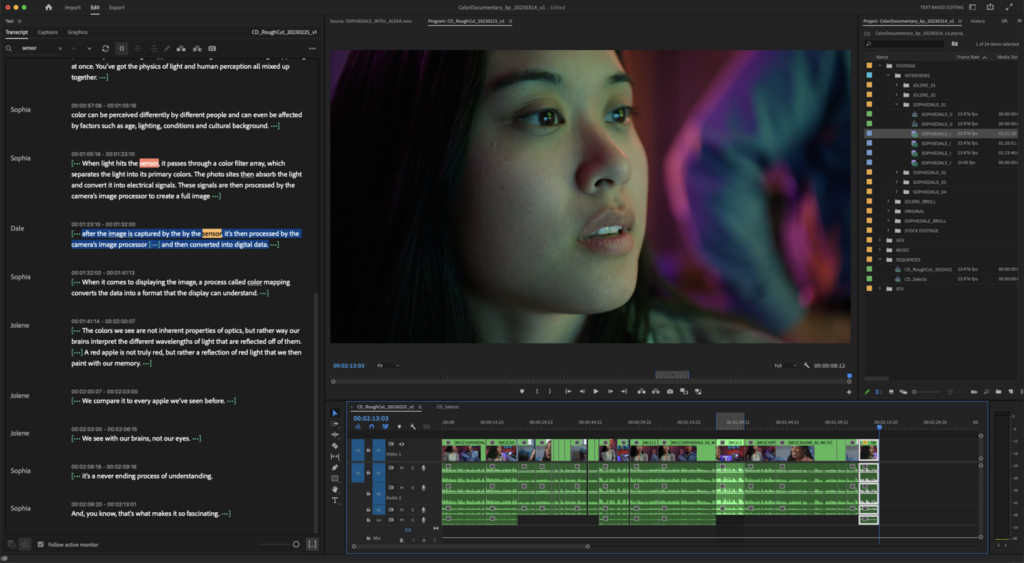

Premiere Pro is Adobe’s video editing solution, and with the new addition of the Text-Based Editing feature, users now have an entirely new way to edit in the software. Francis Crossman, senior product manager of Premiere Pro, notes that with this integrated capability, building a rough cut is as simple as copying and pasting text, “and there’s no way to get started [on a project] faster.”

As Crossman pointed out, Premiere Pro is the only NLE with this integrated technology. The Text-Based Editing feature, which is powered by Sensei, utilizes speech-to-text technology. It automatically analyzes and transcribes video clips directly on the device being used, so no Internet connection is required to perform the transcription, which can be done in the background. The process runs at roughly 10 times real-time speed, so the feature can transcribe a 30-minute interview in about 3 minutes, with accuracy in the upper 90 percent range, according to Adobe. Moreover, it uses keyboard shortcuts already familiar to the user. The software also automatically detects the language being spoken, and the various speakers can be separated and identified. Plus, the transcripts are searchable for single words or phrases. Editors can then copy and paste sentences into any order they want and see them appear instantly on the Premiere Pro timeline.
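For readers curious about the general idea behind editing from a transcript, here is a minimal sketch in Python. It is not Adobe's implementation or any Premiere Pro API; the `Segment` type and the `search` and `rough_cut` functions are hypothetical names made up for illustration. The sketch assumes each transcribed sentence carries the in and out times of its source footage, so that reordering sentences amounts to reordering clip ranges on a timeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One transcribed sentence tied to its source footage (hypothetical type)."""
    speaker: str
    text: str
    start: float  # source in-point, in seconds
    end: float    # source out-point, in seconds


def search(transcript, phrase):
    """Return the segments whose text contains the phrase (case-insensitive)."""
    needle = phrase.lower()
    return [seg for seg in transcript if needle in seg.text.lower()]


def rough_cut(selection):
    """Place the selected segments back to back, in the order given.

    Returns a list of (timeline_in, source_in, source_out) tuples.
    """
    timeline = []
    cursor = 0.0
    for seg in selection:
        timeline.append((cursor, seg.start, seg.end))
        cursor += seg.end - seg.start
    return timeline


# Illustrative data only, not taken from any real transcript.
transcript = [
    Segment("Interviewer", "How did the project start?", 0.0, 4.0),
    Segment("Guest", "It started in a garage.", 4.0, 9.5),
    Segment("Guest", "We shipped the first version in May.", 9.5, 15.0),
]

# "Pasting" the third sentence ahead of the second reorders the footage.
picked = [transcript[2], transcript[1]]
cut = rough_cut(picked)
```

In this toy model, the pasted order of sentences alone determines the order of footage on the timeline, which is the essence of a text-driven rough cut.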

Premiere Pro has also made color management much easier. New to the software are automatic tone mapping and log color detection, which maintain great-looking and consistent color when mixing and matching HDR footage from different sources in the same SDR project. As a result, users no longer have to use LUTs or balance the images manually.

New to Premiere Pro: the AI-driven Text-Based Editing feature, for an all-new way to edit.

Adobe has also added features to bolster collaborative editing. These include Sequence Locking, which lets active editors make a sequence view-only while they work; Presence Indicators, which show who else is working in shared files; and Work While Offline, which enables editors to keep working offline and publish changes without overwriting others’ work when they return online.

Also included are a background autosave capability, system reset options, more GPU acceleration, and other improvements requested by users. Crossman noted this is the fastest and most stable version of Premiere Pro that has ever shipped.

This latest release of Premiere Pro, including the beta versions of the Text-Based Editing and Automatic Tone Mapping features, will be available in May.

After Effects

After Effects, now 30 years old, is Adobe’s visual effects, motion graphics, and compositing application, and it continues to be widely used throughout the video industry. Users now can gain fast, easy access to the most important animation settings in the new Properties Panel. Because the panel is context-sensitive, it shows key controls based on selections, so editors no longer have to navigate through the timeline searching for them.

Color management functions have also been improved, including native support for ACES and OpenColorIO, giving users consistent colors when sharing assets across other post-production applications. Numerous other user-requested features have been integrated as well.

Like Premiere Pro, this updated version of After Effects will ship in May.

Frame.io

Ever since Adobe acquired Frame.io in the fall of 2021, the product has become central to the company’s cloud-based video collaboration platform for sharing media and tracking review and feedback. Now, Adobe has extended Frame.io’s Camera to Cloud platform from video production to include photos and PDF documents. As a result, photographers can transfer photos right from their cameras to the Frame.io cloud platform without having to remove memory cards or download their contents to hard drives.

This update broadens the cloud-based offering’s appeal in the creative sphere beyond the reported 3 million creatives currently using Frame.io. Now, Frame.io offers users an end-to-end workflow from content capture to edit, review, and approval through one centralized cloud-based hub.

In related news, Adobe has natively integrated Frame.io’s Camera to Cloud capabilities in Fujifilm X-H2 and X-H2S cameras for instant photo capture and cloud collaboration, enabling instant sharing of RAW photos from shoot locations. Frame.io Camera to Cloud integration for the Fujifilm cameras is available now.

In the area of security, Adobe is previewing Forensic Watermarking, which is an invisible watermark that’s undetectable to the human eye and embedded in video, thereby strengthening cloud security for enterprises, particularly when working on sensitive pre-release material.

According to Charlie Anderson, senior partnerships, cloud devices manager, the forensic watermarked video assets can survive file copy, screen recording, and even external recording from cameras and mobile devices to provide account owners with information associated with the last known stakeholder who had custody of the video assets in question. The invisible watermarks emit over a dozen one-frame, pixel-level bits at various coordinates in a video asset that can be as short as 30 seconds in length. (Most similar video services require an asset that is at least 120 seconds in length.) Notifications are generated in the video player every time an asset with forensic watermarking is selected for playback.

Firefly

OK, rewind back to the subject of AI. Last month at Adobe Summit 2023, Adobe talked about the Enterprise version of Express, which includes Firefly, a new family of creative generative AI tools and models. While no further updates were on tap at the NAB pre-briefing concerning Express or Firefly, Adobe did a quick recap of this impressive offering, mostly addressing the elephant in the room: the concern over generative AI as it relates to artists’ ownership of their work. Adobe reiterated that it trains its models using hundreds of millions of Adobe Stock images, along with openly licensed and public domain content. That means the content being generated is safe for commercial use, Adobe said, and the resulting assets are of really high quality.

“We’ve taken great care to ensure that Firefly won’t generate content based on other people’s brands or IP,” said Saccone.

Generative AI can be an incredible tool to help editors visualize creative intention, Saccone noted. And no one can argue that. A script or even natural language text templates can be used to generate storyboards, to add an effect that changes day to night, or to change seasons by adding snow, simply by typing text into a prompt. AI also can be used to organize footage into bins and string-outs, automatically matching location and camera metadata, analyzing speech, and then creating a rough cut based on the character names and lines that are in the script.

“The point is not to edit for you. It’s to act as your creative assistant, so you have more time to work on your craft. So, we’re thinking really deeply about how Adobe Firefly can help editors and motion designers be more excited about its potential,” Saccone added.