You can’t just draw bigger boxes to describe better resolution.

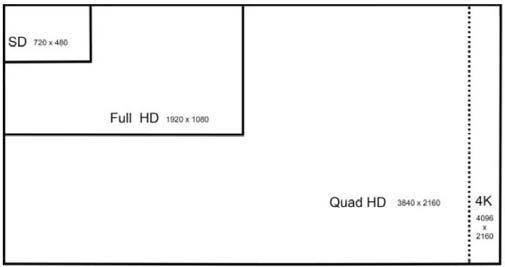

Some people compare resolutions by drawing boxes, like the ones above.

The above diagram is a mathematical representation of resolution, showing more pixels per line and more lines while holding the pixel size constant. Another misrepresentation of resolution is to suggest you’ll see a wider and taller point of view. You don’t.

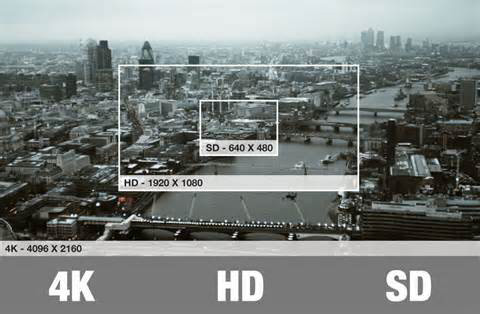

Monitors don’t change the field of view (FOV) as resolution changes; that is a function of the app. The pixels get smaller in the same size monitor.
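To put numbers on "the pixels get smaller," here is a minimal sketch that computes pixel density and pixel pitch for two resolutions on the same panel. The 27-inch diagonal and the function name `pixels_per_inch` are assumptions chosen for illustration; they do not come from any particular monitor.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density of a panel with the given resolution and diagonal size."""
    diagonal_px = math.hypot(width_px, height_px)  # diagonal length in pixels
    return diagonal_px / diagonal_in

DIAGONAL_IN = 27.0  # hypothetical monitor size, held constant for both cases

resolutions = {"1080p": (1920, 1080), "4K UHD": (3840, 2160)}
for name, (w, h) in resolutions.items():
    ppi = pixels_per_inch(w, h, DIAGONAL_IN)
    pitch_mm = 25.4 / ppi  # width of one pixel in millimeters
    print(f"{name}: {w * h:,} pixels, {ppi:.1f} ppi, pixel pitch {pitch_mm:.3f} mm")
```

With the diagonal held fixed, doubling the pixel count in each direction doubles the density and halves the pixel pitch. The screen covers the same area; it is simply sampled more finely.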

The following images are a more accurate way to show it.

And if you drill down, you can get an even better understanding of the benefit of higher resolution.

How you get the signals to that 4K screen you’re thinking of buying is another issue to consider. HDMI, you say, no doubt. But not all HDMI is created equal. Most people assume that a device with HDMI 2.0 supports 4K at 60 frames per second and includes HDCP (High-bandwidth Digital Content Protection) 2.2. It turns out that, just as with previous generations of the standard, some items are optional and may or may not be included. Yet all of these devices are marketed as HDMI 2.0.

HDCP is the content protection scheme that Hollywood insists on to protect against piracy. In the consumer space, most TVs, STBs, AVRs, and other related equipment will have HDCP 2.2 installed. However, equipment sold in the Chinese consumer market will probably not have HDCP 2.2 built in. That means upcoming Hollywood movies won’t play on those TVs.

Security aside, the most troubling aspect is the data-rate capability of an HDMI 2.0 product. There are essentially two levels of HDMI. Level A is the one most consumers expect to get when they buy a product. It supports 18 Gbps with a 600-MHz chipset. But the current HDMI chips run off a 300-MHz clock and offer 10.2 Gbps. This is actually fast enough to support 4K/UHD at 60 fps, but only with 4:2:0 color sampling and 8 bits per color. This is the capability that probably every TV out there today supports.
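The arithmetic behind those figures can be sketched roughly as follows. This is an illustration under stated assumptions, not a statement of what any given chip does: it assumes the common 2160p60 timing of 4400 × 2250 total pixels per frame (including blanking), three TMDS data channels carrying 10 bits on the wire per 8-bit value, and a link clock that is halved for 4:2:0. None of those constants appear in the text above, and the function name `tmds_rate_gbps` is hypothetical.

```python
TMDS_CHANNELS = 3          # assumed: three TMDS data channels
TMDS_BITS_PER_SYMBOL = 10  # assumed: 10 bits on the wire per 8-bit value

def tmds_rate_gbps(h_total, v_total, fps, bits_per_component=8, chroma="4:4:4"):
    """Approximate aggregate TMDS bit rate in Gbps for a video format."""
    pixel_clock_hz = h_total * v_total * fps
    if chroma == "4:4:4":
        factor = bits_per_component / 8
    elif chroma == "4:2:0":
        # Assumed: 4:2:0 halves the link clock relative to 4:4:4.
        factor = 0.5 * bits_per_component / 8
    else:
        raise ValueError(f"unsupported chroma format: {chroma}")
    tmds_clock_hz = pixel_clock_hz * factor
    return tmds_clock_hz * TMDS_CHANNELS * TMDS_BITS_PER_SYMBOL / 1e9

# Assumed 2160p60 timing: 4400 x 2250 total pixels per frame, blanking included.
H_TOTAL, V_TOTAL, FPS = 4400, 2250, 60

for chroma in ("4:2:0", "4:4:4"):
    rate = tmds_rate_gbps(H_TOTAL, V_TOTAL, FPS, 8, chroma)
    for limit in (10.2, 18.0):  # link rates quoted in the text, in Gbps
        verdict = "fits" if rate <= limit else "does not fit"
        print(f"4K60 8-bit {chroma}: {rate:.2f} Gbps {verdict} in {limit} Gbps")
```

Under these assumptions, 8-bit 4:2:0 at 4K60 comes in below the 10.2 Gbps that the 300-MHz parts offer, while 8-bit 4:4:4 needs the 18 Gbps link.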

The current Blu-ray spec outputs content at 8 bits per color with 4:2:0 color subsampling, so that may indeed be sufficient for most consumer needs.

When 600-MHz chips arrive in TVs next year, what will they be called: HDMI 2.0, or something else to differentiate them from the current crop of 300-MHz transceivers? And what happens when the new Blu-ray spec is finalized? We’ll have to wait and find out.