Nvidia adds a wealth of features for gamers to the GeForce GTX including an expanded VRWorks, the Ansel camera, multi-GPU support with expanded over-clocking options, and optimized views for VR, curved screens, and multiple monitors.

By Jon Peddie

Nvidia announced its GeForce GTX 1080, the first gaming add-in board based on the company’s new Pascal architecture. Nvidia says the new board will provide up to twice the virtual reality performance of the GeForce GTX Titan X. The company said Pascal is optimized for performance per watt, and that the GTX 1080 is three times more power efficient than its Maxwell-architecture predecessor.

Based on TSMC’s 16-nm FinFET process, the GTX 1080 has 7.2 billion transistors. It comes with 8GB of Micron’s GDDR5X memory and can deliver up to 9 teraflops of performance. The 256-bit memory interface runs at 10 Gb/s per pin, contributing to the company’s claim that it offers 1.7x the effective memory bandwidth of regular GDDR5. Nvidia claims it is the first (and currently only) company to have a 16-nm product in production.
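To put the memory numbers in perspective, peak bandwidth is simply bus width times per-pin data rate, divided by eight bits per byte. The sketch below does that arithmetic; the 7 Gb/s GDDR5 comparison point is our assumption (a common GDDR5 speed at the time), not a figure from Nvidia, and the function name is hypothetical.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: every pin moves data_rate_gbps gigabits per
    second, and there are bus_width_bits pins; divide by 8 for bytes."""
    return bus_width_bits * data_rate_gbps / 8


gtx1080 = peak_bandwidth_gb_s(256, 10.0)  # GDDR5X at 10 Gb/s per pin
gddr5 = peak_bandwidth_gb_s(256, 7.0)     # assumption: 256-bit GDDR5 at 7 Gb/s

print(f"GTX 1080 raw bandwidth: {gtx1080:.0f} GB/s")
print(f"256-bit GDDR5 at 7 Gb/s: {gddr5:.0f} GB/s")
print(f"raw ratio: {gtx1080 / gddr5:.2f}x")
```

The raw ratio comes out at roughly 1.4x, so the rest of Nvidia’s 1.7x "effective" figure presumably comes from memory compression.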

That was all we could tell you a couple of weeks ago. Then Nvidia held a Dream Hackathon in Austin, Texas, and invited bloggers, press, and a couple of analysts to a big rollout and deep dive on the GP104 chip, the GTX 1080 board, and a bunch of supporting software.

At that time everything was still under NDA. Even the screen saying it was under NDA was under NDA. And to my great surprise, the press, bloggers, and analysts all honored it. There was one little leak, which got shut down ASAP.

And now that we can finally tell you more, there’s a lot to tell.

The GTX 1080 AIB, based on the GP104 GPU, has 2560 CUDA cores packed into 20 streaming multiprocessors (128 cores each), with 20 geometry units, 160 texture units, and 64 raster operators (ROPs). The chip uses fourth-generation delta color compression, which, combined with the faster GDDR5X memory, Nvidia credits with a 1.7X improvement in effective bandwidth over the GTX 980. That is pretty phenomenal, but also necessary at these elevated clock rates and higher memory bandwidth.
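Delta color compression exploits the fact that neighboring pixels are usually similar: store one anchor value per tile and encode the rest as small differences from it. The sketch below is a generic, single-channel illustration of that idea; Nvidia’s actual tile sizes, compression modes, and bit layouts are not described here, and all names are hypothetical.

```python
from typing import List, Tuple


def bits_for_signed(value: int) -> int:
    """Smallest two's-complement width (in bits) that can hold value."""
    bits = 1
    while not (-(1 << (bits - 1)) <= value < (1 << (bits - 1))):
        bits += 1
    return bits


def delta_encode_tile(pixels: List[int]) -> Tuple[int, List[int], int]:
    """Encode a tile of 8-bit samples as an anchor plus fixed-width deltas.

    Returns (anchor, deltas, size_in_bits). If the deltas would not save
    space, the tile is counted as stored raw at 8 bits per sample.
    """
    anchor = pixels[0]
    deltas = [p - anchor for p in pixels[1:]]
    delta_bits = max((bits_for_signed(d) for d in deltas), default=1)
    compressed = 8 + len(deltas) * delta_bits  # 8-bit anchor + deltas
    raw = len(pixels) * 8
    return anchor, deltas, min(compressed, raw)


def delta_decode_tile(anchor: int, deltas: List[int]) -> List[int]:
    """Lossless inverse: add every delta back to the anchor."""
    return [anchor] + [anchor + d for d in deltas]


smooth = [100, 101, 102, 101, 100, 99, 100, 101]  # gentle gradient
noisy = [0, 255, 0, 255]                           # high-contrast detail
for tile in (smooth, noisy):
    anchor, deltas, size = delta_encode_tile(tile)
    assert delta_decode_tile(anchor, deltas) == tile  # nothing is lost
    print(f"{len(tile) * 8} raw bits -> {size} bits")
```

Smooth tiles shrink by more than half, while high-contrast tiles fall back to raw storage; that is why real-world savings depend on image content.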

The GPU base clock is 1.6 GHz (may vary a bit by vendor), but most importantly it can be overclocked above 2 GHz (mileage may vary. . . )

Big headroom, designed for overclocking

The GPU’s boost clock speed is over 1700 MHz, and it is overclockable. Nvidia says it achieved these high clock rates, with signal timing measured in picoseconds, by designing a highly tuned, tightly laid-out circuit board, something it credits to its craftsmanship and years of experience. Furthermore, Nvidia says the new AIB consumes only 180 watts of power.
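The 9-teraflop figure follows from the core count and the clock: each CUDA core can retire one fused multiply-add, counted as two floating-point operations, per clock. A quick check, using Nvidia’s published 1607 MHz base and 1733 MHz boost clocks plus a hypothetical 2 GHz overclock (the function name is ours):

```python
def peak_fp32_tflops(cuda_cores: int, clock_ghz: float) -> float:
    """Peak single-precision throughput: one FMA (2 FLOPs) per core per clock."""
    return cuda_cores * 2 * clock_ghz / 1000.0


for label, clock_ghz in [("base", 1.607), ("boost", 1.733), ("2 GHz overclock", 2.0)]:
    print(f"{label}: {peak_fp32_tflops(2560, clock_ghz):.2f} TFLOPS")
```

At the boost clock this lands just under 9 TFLOPS; a 2 GHz overclock would push the theoretical peak past 10. Real workloads rarely issue an FMA on every core every cycle, so these are ceilings, not benchmarks.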

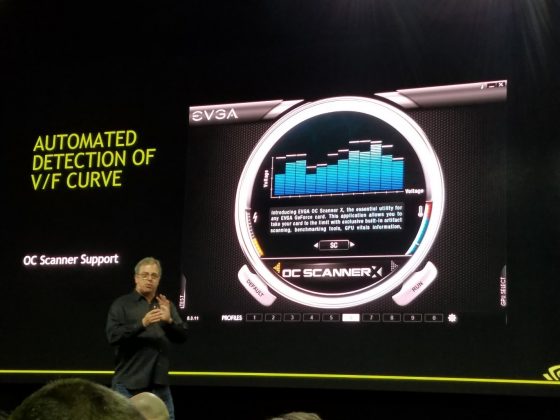

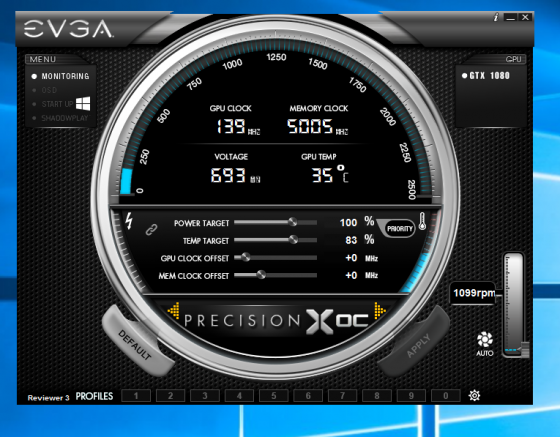

With Pascal, the company has exposed more of the chip’s clock domains, allowing up to 12 different, independent voltage adjustments.

EVGA has built a Precision OC tool for this, and it will be available for download after the 27th of May (just in time for Computex, where EVGA plans to show it off along with other new features and products). Notice, by the way, that the scale in the picture of EVGA’s control panel goes up to 2.5 GHz. I’m just sayin’ . . . a little dry ice and who knows . . .

It may come to pass that eSports contests require each contestant to set their EVGA controls to the same point, as is done in F1 racing.

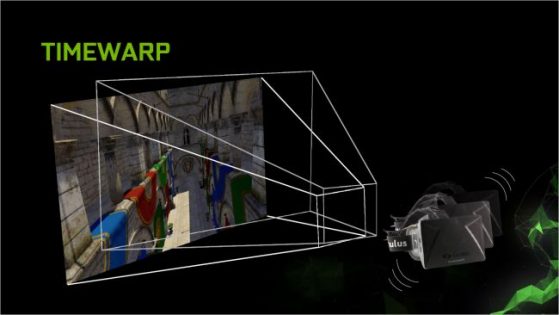

Remembering state—preemptive interrupt

SIMD processors are great for rapidly processing datasets that are massively (some say embarrassingly) parallel, as long as the processing (the function, the kernel) is consistent (the “S” in SIMD). When there’s a branch or an interrupt, the SIMD has to be flushed, reloaded, and restarted. At 2 GHz that wouldn’t seem like a big deal, but when dealing with megatons of data and complex 32-bit algorithms, it can take time to get everything settled in and ready to go to work. And the GPU is being tasked with ever more functions, such as audio, physics, post-processing and rendering, and VR timewarp, so it increasingly has to switch between jobs quickly.

Compute Preemption and Graphics Preemption are new hardware and software features added to Pascal GPUs that allow compute and graphics tasks to be preempted at instruction level granularity, rather than thread block granularity as in prior Maxwell and Kepler GPU architectures. Both technologies can improve task responsiveness and user interactivity when multiple tasks are running.
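Why granularity matters: a VR timewarp has to land before the next display refresh, so any time spent waiting for the GPU to become preemptible eats into that budget. The toy model below (hypothetical names, times in nanoseconds) compares the wait for one long-running thread block under the two granularities; it ignores the cost of saving and restoring context, which is real but not covered here.

```python
def preemption_wait_ns(request_ns: int, block_start_ns: int, block_len_ns: int,
                       instr_ns: int, granularity: str) -> int:
    """How long a high-priority request waits before it can take over.

    Toy model: one thread block started at block_start_ns and runs for
    block_len_ns; each instruction takes instr_ns.
    """
    elapsed = request_ns - block_start_ns
    if granularity == "thread_block":
        # Maxwell/Kepler style: the running block must drain completely.
        return max(block_len_ns - elapsed, 0)
    if granularity == "instruction":
        # Pascal compute preemption: stop at the next instruction boundary.
        remainder = elapsed % instr_ns
        return 0 if remainder == 0 else instr_ns - remainder
    raise ValueError(f"unknown granularity: {granularity}")


# A 10 ms thread block; the timewarp request arrives 2 ms (plus 1 ns) in.
for g in ("thread_block", "instruction"):
    print(g, preemption_wait_ns(2_000_001, 0, 10_000_000, 2, g), "ns")
```

With block-level preemption the request waits about 8 ms, most of a 90 Hz frame; at instruction level it waits a nanosecond.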

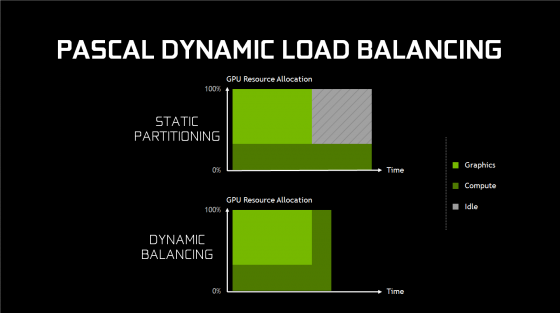

Certain workloads don’t completely fill, or use all of, the GPU. In those cases, there is an opportunity to get more performance by running two workloads at the same time on the GPU—for example a PhysX workload running concurrently with graphics rendering, which is not an unusual occurrence. For such overlapping workloads, Nvidia added dynamic load balancing in the Pascal GPU design.

In Nvidia’s previous-generation GPU, Maxwell, overlapping workloads were assigned to static partitions of the GPU, one for graphics and one for compute, which assumed the work between the two loads roughly matched the partitioning ratio. If one workload took longer than the other, and both had to be completed before a new job could be started, then one of the pipelines would fall idle, reducing performance. Hardware dynamic load balancing addresses this issue by allowing either workload to fill the rest of the machine when idle resources are available.
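A back-of-the-envelope model shows why static partitioning hurts. Work is measured in hypothetical SM-milliseconds, the 20 SMs match the GTX 1080, and the dynamic case is idealized: it assumes the work can be spread perfectly across whatever SMs are idle.

```python
def static_partition_time(gfx_work: float, compute_work: float,
                          gfx_sms: int, compute_sms: int) -> float:
    """Each workload is confined to its own fixed slice of SMs; the job ends
    when the slower slice finishes while the faster slice sits idle."""
    return max(gfx_work / gfx_sms, compute_work / compute_sms)


def dynamic_balance_time(gfx_work: float, compute_work: float,
                         total_sms: int) -> float:
    """Idealized dynamic balancing: when one workload runs out, the other
    expands into the freed SMs, so the whole machine stays busy."""
    return (gfx_work + compute_work) / total_sms


# Hypothetical frame: 150 SM-ms of graphics, 50 SM-ms of PhysX compute,
# with a 10/10 static split of the 20 SMs.
print(static_partition_time(150, 50, 10, 10), "ms static")  # 15.0 ms static
print(dynamic_balance_time(150, 50, 20), "ms dynamic")      # 10.0 ms dynamic
```

The mismatch between the 50/50 split and the 75/25 workload is what leaves SMs idle in the static case.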

With dynamic load balancing, together with the new pixel-level preemption capability, the overall job (compute and graphics) gets finished sooner, increasing performance.

That just don’t look right

You know the old saying: when all you have is a hammer, every problem (or opportunity) looks like a nail. Well, in CG, if your hammer is a flat 2D screen, every opportunity looks flat and 2D. Render faster: flat 2D. Tessellate: flat 2D. Bump-map: flat 2D. And so on.

From a rendering point of view, the world gets unflat when you use multiple monitors and angle them so they are no longer in the same plane. It gets really unflat when you warp the image to compensate for the fat, distorting lenses in VR headsets, and, depending on its size, it gets unflat when you use a curved screen. Flat is so, so, so yesterday; it’s like 8-bit color and VGA.

But getting unflat requires the GPU to do (yet more) math as it twists, stretches, and squeezes the image’s mesh. The GPU already does that when it distorts the image displayed in a VR HMD so that it looks correct after it has passed through the lenses. The lenses are there because your eyes can’t focus on a screen an inch away; without them, most, if not all, of the image would be a blur.
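The pre-warp applied before the image reaches the lenses is commonly modeled as a radial polynomial: each output pixel samples the rendered image at a radius scaled by 1 + k1*r^2 + k2*r^4. This is a generic sketch of that model, not Nvidia’s implementation; the coefficients are hypothetical and in practice come from the headset’s lens profile.

```python
from typing import Tuple


def barrel_sample_coord(x: float, y: float, k1: float, k2: float) -> Tuple[float, float]:
    """For an output pixel at lens-centered coordinates (x, y), return where
    to sample the undistorted rendered image.

    Sampling further out as the radius grows squeezes the periphery into a
    barrel shape, which the lens's pincushion distortion then cancels.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


K1, K2 = 0.2, 0.1  # hypothetical lens coefficients
for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(x, barrel_sample_coord(x, 0.0, K1, K2))
```

The center is untouched and the correction grows with radius, which is also why the two views look barrel-shaped when mirrored to a monitor.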

That is why, when a VR presentation is shown simultaneously on a monitor, it appears as two fish-eye or barrel-shaped views. Your brain is clever enough to untwist that and let you get the idea of what’s going on, but you can’t discern proper detail or depth cues.

So Nvidia decided to do some extra geometric math to lessen distortion in VR, and while at it, they fixed the perspective for multiple monitors.

Perspective Surround

If you have ever set up Nvidia’s Surround view on three landscape monitors to get a wider FOV in a game, you’ll have noticed that the left and right monitors are warped with a perspective error proportional to the angle at which the monitors are turned.

Perspective Surround knows that there are three active projections, one for each monitor, and applies each of them to every primitive on the fly. The result, Nvidia says, is a correctly rendered surround view with no loss of performance.
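What Perspective Surround does per primitive amounts to the projection below: each monitor gets its own view direction, rotated by the angle at which that monitor is turned. The sketch is our own illustration, with hypothetical names and a hypothetical 30-degree angle: a point lying straight out from the right monitor’s center lands at the center of that monitor’s image, whereas a single flat projection places it well off-center.

```python
import math
from typing import Optional, Tuple


def project_for_monitor(point: Tuple[float, float, float], yaw_deg: float,
                        focal: float = 1.0) -> Optional[Tuple[float, float]]:
    """Project a camera-space point (x right, y up, z forward) onto the image
    plane of a monitor whose facing direction is rotated yaw_deg toward +x.

    Returns (u, v) on that monitor's plane, or None if the point is behind it.
    """
    yaw = math.radians(yaw_deg)
    x, y, z = point
    # Rotate the point into the monitor's frame (the inverse of its yaw).
    xr = x * math.cos(yaw) - z * math.sin(yaw)
    zr = x * math.sin(yaw) + z * math.cos(yaw)
    if zr <= 0:
        return None
    return focal * xr / zr, focal * y / zr


angle = math.radians(30)
p = (math.sin(angle), 0.0, math.cos(angle))  # straight out from the right monitor
print("right monitor:", project_for_monitor(p, 30.0))  # centered
print("single flat view:", project_for_monitor(p, 0.0))  # pushed off-center
```

Using one projection per monitor is what removes the stretched-out look on the side screens.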

Simultaneous multi-projection to the rescue

One of the requirements of VR rendering is that two projections have to be generated, rendering to each eye separately, which results in twice the amount of work for the entire pipeline, starting from the driver and the OS, all the way through to the GPU’s raster back end.

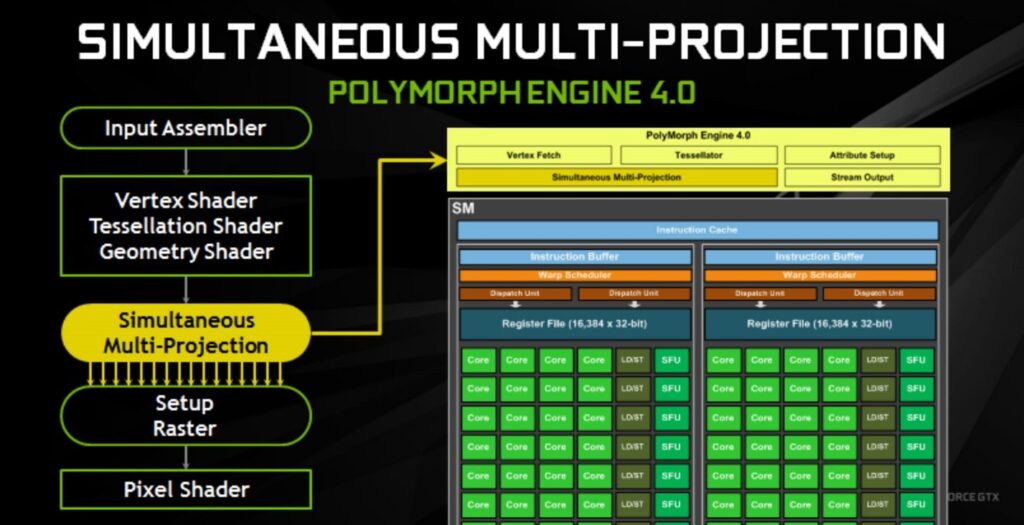

Nvidia’s fix for this is called Simultaneous Multi-Projection (SMP). The name says a lot, but not everything, because it does a lot more.

Nvidia actually put a special projection engine in Pascal to solve this problem, and do it at speed. The SMP engine supports two separate projection centers, rendering the stereo projections directly in a single rendering pass. This SMP capability is known as Single Pass Stereo; with it, all the pipeline work, including scene submission, driver and OS scheduling, and geometry processing on the GPU, only has to be performed once, which results in substantial performance gains.

In Single Pass Stereo mode, the application runs vertex processing only once, and outputs two positions for each vertex being processed. The two positions represent locations of the vertex as viewed from the left and from the right eye. The SMP hardware supplies a number of projections to the current primitive, which is important for combining the Single Pass Stereo with Lens Matched Shading, which we will discuss next.
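A sketch of the single-pass idea: the expensive per-vertex work runs once, and two positions are emitted, one per eye, offset by half the interpupillary distance (IPD). The 64 mm IPD, the stand-in vertex function, and the simple pinhole projection are assumptions for illustration, not Nvidia’s API.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


def expensive_vertex_work(v: Vec3) -> Vec3:
    """Hypothetical stand-in for skinning/animation: place the model 5 units
    in front of the viewer (view space, +z forward)."""
    x, y, z = v
    return (x, y, z + 5.0)


def project(p: Vec3) -> Vec2:
    """Simple pinhole projection onto the z = 1 plane."""
    x, y, z = p
    return (x / z, y / z)


def single_pass_stereo(vertices: List[Vec3], ipd: float = 0.064):
    left: List[Vec2] = []
    right: List[Vec2] = []
    work_calls = 0
    for v in vertices:
        x, y, z = expensive_vertex_work(v)  # runs once per vertex, not twice
        work_calls += 1
        # The left eye sits at x = -ipd/2, so relative to it the point shifts
        # by +ipd/2; the right eye mirrors that. Only x differs between eyes.
        left.append(project((x + ipd / 2, y, z)))
        right.append(project((x - ipd / 2, y, z)))
    return left, right, work_calls


left, right, calls = single_pass_stereo([(0.0, 0.0, 0.0), (1.0, 1.0, 0.0)])
print(calls, "vertex-processing calls for 2 vertices and 2 eyes")
```

A conventional two-pass renderer would have called the vertex work four times here; the saving scales with scene complexity.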

Lens matched shading

Leveraging SMP’s ability to use multiple projection planes for a single viewpoint, you can attempt to approximate the shape of the lens-distorted projection. This feature is known as Lens Matched Shading.

With Lens Matched Shading, the SMP engine subdivides the display region into four quadrants, each with its own projection plane. The parameters can be adjusted to approximate the shape of the VR lens distortion as closely as possible. As a result, the source image can contain as many as 66% fewer pixels per eye, a great reduction in shading rate that translates into a significant increase in the throughput available for pixel shading. Developers will have the option to use different settings; for example, one could use a setting that is higher resolution in the center and undersampled in the periphery, to maximize frame rate without significant visual quality degradation.
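One way to see where the savings come from, assuming the modified-w formulation Nvidia describes for Lens Matched Shading (w' = w + Ax + By, with A and B chosen per quadrant): dividing by a w that grows toward the edges squeezes the periphery while leaving the pixel density at the center unchanged, so the render target can shrink. The coefficient value and function names are hypothetical, and the result is not meant to reproduce the 66% figure above.

```python
from typing import Tuple


def lms_map(x_ndc: float, y_ndc: float, a: float) -> Tuple[float, float]:
    """Map standard normalized device coordinates into the lens-matched target.

    Per-quadrant coefficients are chosen so that A*x = a*|x| and B*y = a*|y|,
    i.e. w grows toward every edge of the view.
    """
    w_prime = 1.0 + a * abs(x_ndc) + a * abs(y_ndc)
    return x_ndc / w_prime, y_ndc / w_prime


def shaded_pixel_fraction(a: float) -> float:
    """The view's edge at (1, 0) maps to 1 / (1 + a), and the slope at the
    center is still 1, so a target 1 / (1 + a) as wide and as tall keeps
    full resolution in the middle."""
    edge = lms_map(1.0, 0.0, a)[0]
    return edge * edge


for a in (0.0, 0.25, 0.5):
    print(f"a = {a}: {shaded_pixel_fraction(a):.1%} of the pixels")
```

With a = 0.5 the target needs about 44% of the original pixels while the center stays at full density; the periphery, where the lens compresses the image anyway, gets fewer samples.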

VRWorks SDK

Nvidia has also introduced an expanded version of VRWorks, an SDK that the company says lets game developers bring new features and performance to gaming environments. Included in the SDK is a new in-game image-capturing technology they call Ansel, which, if implemented by a game developer, will allow gamers to share their gaming experiences and edit the games’ artwork.

With Ansel, gamers can compose the gameplay shots they want, pointing the camera in any direction and from any vantage point within a gaming world. They can capture screenshots at up to 32x screen resolution, and then zoom in where they choose without losing fidelity. With photo filters, they can add effects in real time before taking the perfect shot. And they can capture 360-degree stereo photo spheres for viewing in a VR headset or Google Cardboard. Ansel will be available in upcoming releases and patches of games such as Tom Clancy’s The Division, The Witness, Lawbreakers, The Witcher 3, Paragon, No Man’s Sky, Obduction, Fortnite, and Unreal Tournament.
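We don’t know how Ansel produces its super-resolution shots internally, but a common technique is tiled rendering: split the camera’s near-plane window into an n x n grid of off-axis sub-frustums, render each at normal screen resolution, and stitch the tiles. A minimal sketch of the tiling step, with hypothetical names:

```python
from typing import List, Tuple

Window = Tuple[float, float, float, float]  # (left, right, bottom, top)


def tile_frusta(left: float, right: float, bottom: float, top: float,
                n: int) -> List[Window]:
    """Split an off-axis near-plane window into n x n sub-windows.

    Rendering each sub-window at screen resolution and stitching the results
    yields an image with n times the resolution along each axis.
    """
    dx = (right - left) / n
    dy = (top - bottom) / n
    tiles = []
    for row in range(n):
        for col in range(n):
            tiles.append((left + col * dx, left + (col + 1) * dx,
                          bottom + row * dy, bottom + (row + 1) * dy))
    return tiles


tiles = tile_frusta(-1.0, 1.0, -1.0, 1.0, 4)
print(len(tiles), "renders for a 4x-per-axis capture; first tile:", tiles[0])
```

Each window would feed an off-axis projection matrix for its render. Screen-space effects such as bloom or vignetting need extra care at the tile seams.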

Ansel is part of an SDK and as such has to be embedded by the game developer. That’s important because some game developers and artists may not appreciate you modifying their artwork, or copying it.

Ansel can also grab the raw buffers in order to capture a Raw or EXR picture. ILM developed the OpenEXR format in response to the demand for higher color fidelity in the visual effects industry.
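The reason EXR matters for captures: its common "half" pixel type is a 16-bit floating-point number, so values brighter than display white survive, whereas an 8-bit channel clips them. Python’s struct module packs IEEE half floats with the "e" format code, which makes the difference easy to see. This is a sketch of the pixel type only; real EXR files also carry headers, channel lists, and compression.

```python
import struct


def roundtrip_half(value: float) -> float:
    """Store a value as a 16-bit IEEE half float and read it back.
    (Half floats top out at 65504, so far larger values would overflow.)"""
    return struct.unpack("<e", struct.pack("<e", value))[0]


def roundtrip_8bit(value: float) -> float:
    """Quantize a display value in [0, 1] to an 8-bit code; brighter clips."""
    code = round(min(max(value, 0.0), 1.0) * 255)
    return code / 255


for radiance in (0.18, 1.0, 4.5, 100.0):
    print(f"{radiance:>6}: half -> {roundtrip_half(radiance):.5g}, "
          f"8-bit -> {roundtrip_8bit(radiance):.5g}")
```

A highlight at 4.5 or 100 times display white comes back intact from the half float but collapses to 1.0 in 8 bits, which is exactly the information a later exposure or grading pass needs.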

VRWorks SDK, says Nvidia, offers developers a new level of “VR presence.” It combines what users see, hear, and touch with the physical behavior of the environment to convince them that their virtual experience is real.

With VRWorks, Nvidia also introduces a simultaneous multi-projection capability that renders natively to the unique dimensions of VR displays instead of traditional 2D monitors. It also renders geometry for the left and right eyes simultaneously in a single pass.

Included with the SDK is VRWorks Audio, which the company says uses Nvidia’s OptiX ray-tracing engine to trace the path of sounds across an environment in real time, fully reflecting the size, shape, and material of the virtual world, to generate physically based spatial (3D) audio. In other words, it’s following the bounces of sound rather than the bounces of light.
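VRWorks Audio traces sound paths with OptiX; a much simpler classic technique, the image-source method, shows what a single one of those bounces means for the listener. Mirror the source across a wall, and the straight-line distance from the mirrored source equals the length of the reflected path, which sets the echo’s delay. This is a 2D sketch with hypothetical positions, not how VRWorks Audio is implemented.

```python
import math
from typing import Tuple

SPEED_OF_SOUND_M_S = 343.0  # in air at roughly 20 degrees C

Point = Tuple[float, float]


def direct_and_echo_delay(src: Point, listener: Point,
                          wall_x: float) -> Tuple[float, float]:
    """Delays (seconds) of the direct sound and of one reflection off a wall
    at x = wall_x, using the image-source method. Assumes source and listener
    are on the same side of the wall."""
    direct = math.dist(src, listener)
    mirrored = (2.0 * wall_x - src[0], src[1])  # source reflected across wall
    reflected = math.dist(mirrored, listener)   # equals the bounced path length
    return direct / SPEED_OF_SOUND_M_S, reflected / SPEED_OF_SOUND_M_S


d, r = direct_and_echo_delay((1.0, 0.0), (4.0, 0.0), wall_x=0.0)
print(f"direct: {d * 1000:.2f} ms, wall echo: {r * 1000:.2f} ms")
```

A ray tracer generalizes this to many bounces, arbitrary geometry, and frequency-dependent material absorption, which is where the GPU’s horsepower comes in.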

And the SDK will, according to Nvidia, incorporate Interactive Touch and Physics: Nvidia PhysX for VR detects when a hand controller interacts with a virtual object, which enables the game engine to provide a physically accurate visual and haptic response. It also models the physical behavior of the virtual world around the user so that all interactions—whether an explosion or a hand splashing through water—behave as if in the real world.

Nvidia has integrated these technologies into a new VR experience called VR Funhouse, which will be available on Steam.

New, faster, smaller SLI

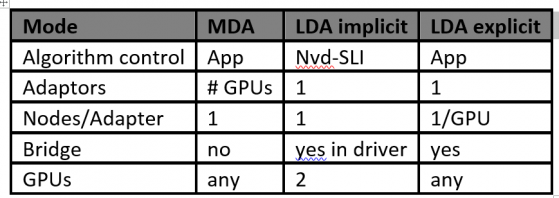

Microsoft made several changes in DirectX 12 that impact multi-GPU functionality. At the developer level, there are two options for use of multi-GPU on Nvidia AIBs: Multi Display Adapter (MDA) Mode, and Linked Display Adapter (LDA) mode.

LDA Mode has two forms: Implicit LDA Mode which Nvidia uses for SLI, and Explicit LDA Mode where game developers handle the responsibility for multi-GPU operation. Microsoft says MDA and LDA Explicit Mode were developed to give game developers more control.

In LDA Mode, each GPU’s memory can be linked together to appear as one large pool of memory to the developer (although there are certain corner-case exceptions regarding peer-to-peer memory); however, there is a performance penalty if the data needed resides in the other GPU’s memory, since that memory is accessed through inter-GPU peer-to-peer communication (like PCIe). In MDA Mode, each GPU’s memory is allocated independently of the other GPU’s, and neither GPU can directly access the other’s memory.

LDA is intended for multi-GPU systems that have GPUs that are similar to each other, while MDA Mode has fewer restrictions—discrete GPUs can be paired with integrated GPUs, or with discrete GPUs from another manufacturer—but MDA Mode requires the developer to more carefully manage all of the operations that are needed for the GPUs to communicate with each other.

By default, GeForce GTX 1080 SLI supports up to two GPUs. 3-Way and 4-Way SLI modes are no longer recommended: as games have evolved, it has become increasingly difficult for these modes to provide beneficial performance scaling for end users. For instance, many games become bottlenecked by the CPU when running 3-Way and 4-Way SLI, and games increasingly use techniques that make it very difficult to extract frame-to-frame parallelism. Of course, systems will still be built targeting other multi-GPU software models, including:

- MDA or LDA Explicit targeted

- 2-Way SLI + dedicated PhysX GPU
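A toy model of alternate-frame rendering (AFR), the frame-to-frame parallelism SLI relies on, shows why extra GPUs stop paying off once the CPU becomes the bottleneck. The frame rates, the 90% per-GPU scaling efficiency, and the function name are hypothetical.

```python
def afr_fps(n_gpus: int, gpu_fps_single: float, cpu_fps_cap: float,
            scaling_efficiency: float = 1.0) -> float:
    """Toy AFR model: each added GPU contributes scaling_efficiency of one
    GPU's frame rate, but the CPU can only prepare cpu_fps_cap frames per
    second, which becomes the ceiling."""
    gpu_bound = gpu_fps_single * (1 + (n_gpus - 1) * scaling_efficiency)
    return min(gpu_bound, cpu_fps_cap)


for n in range(1, 5):
    print(n, "GPU(s):", round(afr_fps(n, 60.0, 130.0, 0.9)), "fps")
```

In this example the second GPU nearly doubles the frame rate, while the third and fourth add nothing because the CPU tops out at 130 fps. The model ignores frame pacing and the extra latency of queuing more frames in flight, both of which also count against 3- and 4-Way setups.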

Even though Nvidia no longer recommends 3- or 4-Way SLI, some gamers will do it anyway, and some games do offer great scaling beyond two GPUs. Therefore, the company developed an Enthusiast Key that can be downloaded from Nvidia’s website and loaded into an individual’s GPU to enable those modes.

HDR too

The GeForce GTX 1080 supports all of the HDR display capabilities of the Maxwell GPU found previously in the GeForce GTX 980 – with the display controller capable of 12b color, BT.2020 wide color gamut, SMPTE 2084 (Perceptual Quantization), and HDMI 2.0b 10/12b for 4K HDR. In addition, Pascal introduces new features such as:

- 4K@60 10/12b HEVC Decode (for HDR Video)

- 4K@60 10b HEVC Encode (for HDR recording or streaming)

- DP 1.4-Ready HDR Metadata Transport (to connect to HDR displays using DisplayPort)
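SMPTE 2084 (Perceptual Quantization), mentioned above, defines a transfer curve that maps absolute luminance, up to 10,000 cd/m², onto a 0 to 1 signal. The constants below are those of the standard; the function names are ours, and the sketch covers only the curve, not HDMI or DisplayPort signaling.

```python
# SMPTE ST 2084 (PQ) constants, as defined in the standard.
M1 = 2610 / 16384       # 0.1593017578125
M2 = 2523 / 4096 * 128  # 78.84375
C1 = 3424 / 4096        # 0.8359375
C2 = 2413 / 4096 * 32   # 18.8515625
C3 = 2392 / 4096 * 32   # 18.6875


def pq_encode(nits: float) -> float:
    """Inverse EOTF: absolute luminance (0 to 10,000 cd/m^2) -> signal (0 to 1)."""
    ym = (max(nits, 0.0) / 10000.0) ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2


def pq_decode(signal: float) -> float:
    """EOTF: signal (0 to 1) -> absolute luminance in cd/m^2."""
    e = signal ** (1 / M2)
    y = (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1 / M1)
    return y * 10000.0


for nits in (1.0, 100.0, 1000.0, 10000.0):
    print(f"{nits:>7.0f} nits -> signal {pq_encode(nits):.4f}")
```

A 100-nit level lands at roughly 0.51, so the upper half of the code range is spent on 100 to 10,000 nits, the highlights that SDR simply clips.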

Nvidia says it is working with game developers to bring HDR to PC games and is providing developers with the API and driver support, as well as the guidance needed to properly render an HDR image that is compatible with new HDR displays. Forthcoming games featuring HDR capability include The Talos Principle, The Witness, Rise of the Tomb Raider, and Shadow Warrior 2; others are in the pipeline, so to speak.

Syncing the screen quickly

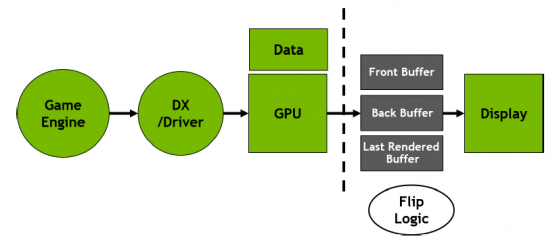

Fast Sync is a latency-conscious alternative to traditional Vertical Sync (VSync) that eliminates tearing, while allowing the GPU to render unrestrained by the refresh rate to reduce input latency.

The game engine is responsible for generating the frames that are sent to DirectX. The game engine also calculates animation time, which is encoded in the frame that eventually gets rendered. The draw calls and information are passed forward; the Nvidia driver and GPU convert them into actual rendering and then spit out a rendered frame to the GPU frame buffer. The last step is to scan the frame out to the display.

If you use V-SYNC ON, the pipeline gets “back-pressured” all the way to the game engine, and the entire pipeline slows down to the refresh rate of the display. With V-SYNC ON, the display is essentially telling the game engine to slow down, because only one frame can be effectively generated for every display refresh interval. The upside of V-SYNC ON is the elimination of frame tearing, but the downside is high input latency.

When using V-SYNC OFF, the pipeline is told to ignore the display refresh rate, and to deliver game frames as fast as possible. The upside of V-SYNC OFF is low input latency (as there is no back pressure), but the downside is frame tearing.

Nvidia has decoupled the front end of the render pipeline from the back end display hardware. This allows different ways to manipulate the display that can deliver new benefits to gamers. Fast Sync is one of the first applications of this new approach.

With Fast Sync, there is no flow control. The game engine works as if V-SYNC is OFF. And because there is no back pressure, input latency is almost as low as with V-SYNC OFF. Best of all, there is no tearing because Fast Sync chooses which of the rendered frames to scan to the display. Fast Sync allows the front of the pipeline to run as fast as it can, and it determines which frames to scan out to the display, while simultaneously preserving entire frames so they are displayed without tearing.

Fast Sync basically reintroduces the notion of front and back buffers between the GPU and the display, plus a third, “last rendered” buffer that holds the newest completed frame.
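A toy model of the scan-out decision, with hypothetical integer timings (real refresh intervals are fractional, about 16.7 ms at 60 Hz) and a hypothetical function name: the renderer never waits, and at each refresh the display takes whichever frame finished most recently.

```python
from typing import List, Optional


def fast_sync_scanout(render_interval_ms: int, refresh_interval_ms: int,
                      n_refreshes: int) -> List[Optional[int]]:
    """Return the index of the frame scanned out at each refresh.

    The GPU renders unthrottled, finishing frame i at
    (i + 1) * render_interval_ms. At every refresh the most recently
    completed frame is shown; a frame still being drawn is never shown, so
    there is no tearing, and superseded frames are simply dropped.
    None means no frame had completed yet.
    """
    shown: List[Optional[int]] = []
    for k in range(1, n_refreshes + 1):
        vblank = k * refresh_interval_ms
        completed = vblank // render_interval_ms  # frames finished by now
        shown.append(completed - 1 if completed > 0 else None)
    return shown


print(fast_sync_scanout(5, 16, 3))  # [2, 5, 8]
```

With a 5 ms render time and a 16 ms refresh, frames 2, 5, and 8 are displayed and the others are discarded; the renderer is never back-pressured, and no partially rendered frame is ever scanned out.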

Founders Edition: a collector’s item

The Nvidia GeForce GTX 1080 “Founders Edition” will be available May 27 for $699. It will be available from Asus, Colorful, EVGA, Gainward, Galaxy, Gigabyte, Innovision 3D, MSI, Nvidia, Palit, PNY, and Zotac. Custom boards from partners will vary by region, and pricing is expected to start at $599.

The GeForce GTX 1080 will also be sold in fully configured systems from system builders, including AVADirect, Cyberpower, Digital Storm, Falcon Northwest, Geekbox, Ibuypower, Maingear, Origin PC, Puget Systems, V3 Gaming, and Velocity Micro, as well as system integrators outside North America.

The Nvidia GeForce GTX 1070 Founders Edition with 6.5 teraflops performance will be available on June 10 for $449. Custom boards from partners are expected to start at $379.

What do we think?

Nvidia has really raised the bar on what a GPU can do and deliver with more new features and capabilities than we can remember being introduced in a new chip in a very long time. That’s the good news, no, the great news. The bad news is that as consumers we won’t see the benefits of these new features for some time. Although Nvidia works well in advance with game developers, exposing its roadmap and advance code samples, it still takes time for the game developers to assimilate all the functionality and find ways to incorporate it.

That also means the consumer will get a great, long term investment with the GTX 1080, the gift that keeps on giving so to speak.