Solving the decades-old problem of video lag between refresh rate and image quality.

By Jon Peddie

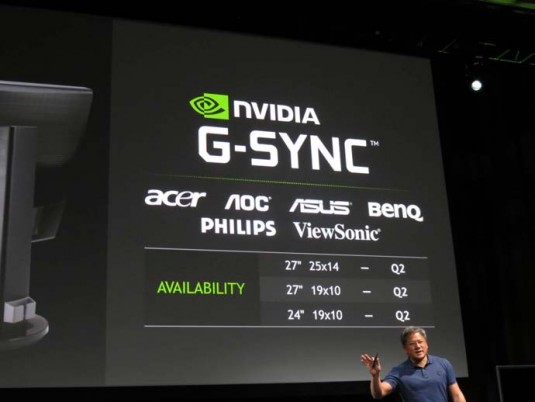

Last October, at an event in Montreal organized by Nvidia, the company introduced its “G-Sync” technology, solving what Nvidia says is the decades-old problem of onscreen tearing, stuttering, and lag. G-Sync is a variable refresh rate technology for displays, which, the company claims, enables perfect synchronization between the GPU and the display. It’s realized on a small circuit board that gets mounted in the monitor. The board will add about $100 to $120 to a monitor’s end-user price now, and will presumably drop in price as (if) volume builds. At CES, Nvidia showed working prototypes of production monitors from Asus, BenQ, and others (BenQ just announced the XL2720Z, which has G-Sync in it). They should be on the market in Q2. Nvidia also gave a sneak peek at G-Sync for 4K, showing live demos.

The problem Nvidia proposes to solve with G-Sync is the conflict between refresh rate and image quality. LCDs are often described as being refreshed at the power line mains frequency, which is 60 Hz in North America, most of South America, Taiwan, Japan, and other parts of Asia. However, power frequency is a red herring. Although power frequency was used to determine the initial frequencies chosen for TV, to avoid beating between the TV and lighting, the circuitry for vertical scanning is independent of mains. Furthermore, monitor refresh rates have always been 60 Hz or higher.

Avoiding tear

Most games allow the user to force GPU render updates to synchronize with the monitor’s 60 Hz refresh using V-Sync. However, depending on scene complexity, this can cause lag and stutter whenever the game’s render rate fails to keep pace with the monitor refresh. The alternative is to turn off V-Sync and allow the GPU to update the image being scanned out as soon as it has finished rendering, instead of waiting to sync with a monitor refresh. In this case, the image shown on the monitor is typically a composite of different rendered frames, the oldest on the top and the newest on the bottom, which produces a tearing effect between the images.
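The trade-off between V-Sync on and V-Sync off can be illustrated with a small timing model. This is only a sketch under simplifying assumptions that are ours, not Nvidia’s: a fixed 60 Hz display, a constant render time per frame, plain double buffering, and scan-out that occupies the whole refresh interval (vertical blanking is ignored). The function names are hypothetical.

```python
import math

REFRESH_HZ = 60.0
REFRESH_MS = 1000.0 / REFRESH_HZ  # about 16.67 ms per refresh (assumed fixed)


def vsync_present_times(render_ms, frames):
    """V-Sync on, double buffered: a finished frame waits for the next
    refresh boundary, and the GPU starts the next frame only after the swap."""
    times = []
    t = 0.0
    for _ in range(frames):
        done = t + render_ms
        # Round up to the next refresh boundary (small epsilon guards
        # against floating-point noise when 'done' sits on a boundary).
        shown = math.ceil(done / REFRESH_MS - 1e-9) * REFRESH_MS
        times.append(shown)
        t = shown
    return times


def tear_positions(render_ms, frames):
    """V-Sync off: the swap happens the moment rendering finishes, so the
    tear lands wherever scan-out is at that instant.
    Returns fractions of screen height (0.0 = top, 1.0 = bottom)."""
    return [((i + 1) * render_ms % REFRESH_MS) / REFRESH_MS
            for i in range(frames)]


if __name__ == "__main__":
    # A GPU that needs 20 ms per frame (50 frames/s) on a 60 Hz monitor.
    shown = vsync_present_times(20.0, 4)
    gaps = [round(b - a, 2) for a, b in zip(shown, shown[1:])]
    print("V-Sync on, ms between new frames:", gaps)
    print("V-Sync off, tear positions:",
          [round(p, 2) for p in tear_positions(20.0, 4)])
```

In this model, a GPU capable of 50 frames per second delivers a new image only every other refresh with V-Sync on, an effective 30 frames per second. With V-Sync off, the tear line wanders down the screen from one refresh to the next.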

So Nvidia added some memory and sync circuits to keep the images running as fast as they can without lag, frame drops, stuttering, or tearing, and calls it G-Sync, borrowing the “G” of GPU. So far so good.

Of course this technology is available only on Nvidia GPUs, so we were curious: what is AMD’s plan?

At CES 2014, AMD gave private demos to the press and analysts in its hotel meeting room. AMD showed two Toshiba laptops and said that their displays support a feature AMD has had in its last three generations of GPUs, which it calls “dynamic refresh rate.” AMD explained that it built this capability into its GPUs for power saving, since unnecessary vertical refresh cycles use power with no benefit.

Reducing the number of frame write cycles per second saves power consumed by the timing controller (TCON), the DisplayPort receiver (in the monitor), and especially the downstream source row and column drivers that drive the pixel gates. There is also a benefit on the GPU side, where the number of memory-read cycles is reduced, yet another optimization opportunity.

AMD presented this concept of dynamic variable refresh rate (by adjusting the vertical blank time) to the display standards body VESA, and it has been incorporated into the next generation of the VESA DisplayPort specification, DP 1.3. AMD says it has been adopted by some panel makers, but not on a widespread basis. AMD says their Catalyst drivers already support it.
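The arithmetic behind adjusting the vertical blank time can be sketched as follows. With the pixel clock and line length held constant, the refresh rate is the pixel clock divided by the total number of pixels per frame, blanking included, so adding blank lines stretches each frame and lowers the rate. The timing numbers are the common 1080p60 values (148.5 MHz pixel clock, 2,200 pixels per line, 1,125 lines per frame) and are used purely for illustration. They are our assumption, not figures from AMD or VESA, and the function names are hypothetical.

```python
import math

# Common 1080p60 timing, assumed here for illustration only.
PIXEL_CLOCK_HZ = 148_500_000   # pixels per second
H_TOTAL = 2200                 # pixels per line, including horizontal blanking
V_ACTIVE = 1080                # visible lines
V_BLANK_NOMINAL = 45           # blank lines at 60 Hz (1125 total lines)


def refresh_hz(v_blank_lines):
    """Refresh rate for a given number of vertical blank lines."""
    return PIXEL_CLOCK_HZ / (H_TOTAL * (V_ACTIVE + v_blank_lines))


def blank_lines_for(target_hz):
    """Smallest whole number of blank lines that brings the refresh rate
    down to target_hz or just below it."""
    line_rate = PIXEL_CLOCK_HZ / H_TOTAL  # lines per second
    return math.ceil(line_rate / target_hz) - V_ACTIVE


if __name__ == "__main__":
    print("nominal refresh:", refresh_hz(V_BLANK_NOMINAL), "Hz")
    for hz in (48, 40, 30):
        lines = blank_lines_for(hz)
        print(f"{hz} Hz target: {lines} blank lines,",
              f"actual {refresh_hz(lines):.2f} Hz")
```

Under these assumptions, stretching the vertical blank from 45 lines to 608 lines drops the panel from 60 Hz to just under 40 Hz without touching the pixel clock.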

However, the way AMD supports dynamic refresh rate has already been added to the Embedded DisplayPort (eDP) spec (since version 1.0; VESA is currently at version 1.4) and to the eDP compliance spec. AMD says it has verified this with many TCON vendors and panel vendors. eDP is used in notebooks.

AMD believes that several monitor suppliers are going to be ready with hardware to support DP 1.3 when it comes out, which is expected later this year. However, previous releases of DP specifications have not been smooth or universally accepted by the monitor suppliers and have not even been accepted by the AIB suppliers immediately.

Nonetheless, AMD says that by exploiting the inherent variable frame rate of modern monitors, via the VESA specifications, they can accomplish the same results as Nvidia’s G-Sync, for free. Well, at least in a notebook.

It turns out that both vendors could be correct. The reduction of frame rate has been used with embedded notebook displays for quite some time to conserve power, particularly for static images. The concepts can be found in the first generation of the VESA DP spec for eDP used to connect notebook displays directly to GPUs.

What do we think?

Variable refresh rate is a great thing. The resulting effect in games is stunning. We commend Nvidia for leading and bringing it to market. However, let’s just admit that this is kind of a high-class problem for a select set of gamers using desktops and monitors. Probably most of these people do have Nvidia GPUs in their systems. The first wave of users will be the high-end gamers, Nvidia’s core customer base. This will likely become a standard feature in displays in the future, especially in UHD and higher, but the cost of implementation needs to come down. As always, healthy competition will drive this … but for now, kudos to Nvidia for making the investment to bring this to market.