Less than a year after its release, the Kinect has drawn a flood of researchers and tinkerers who are finding ways to repurpose it that have nothing to do with games. Two UCSD researchers are adapting the inexpensive, popular motion sensor to gather archaeological data.

The November 2010 launch of the Microsoft Kinect for Xbox 360 immediately unleashed a tidal wave of tinkerers who found clever ways to extend the capabilities of the 3D motion-sensing device. At first Microsoft sought to protect access to the Kinect’s inner workings, but it then realized it had a gold mine on its hands and released a free software development kit. Now serious researchers are joining the ranks of Kinect modders, with projects currently underway ranging from robotic surgery to navigation for the blind.

As intended by Microsoft, the Kinect “reads” body movement with an onboard color camera and an infrared sensor to control game action. One of the most promising adaptations of the Kinect in recent months uses the captured streaming data to create 3D scans of small objects and people. A professor/student research team at the University of California, San Diego (UCSD) is now preparing a modified Kinect for use as a data collection tool on archaeological expeditions.

Jürgen Schulze, a research scientist at UCSD’s division of the California Institute for Telecommunications and Information Technology, and his master’s student Daniel Tenedorio are training UCSD engineering and archaeology students to use their modified Kinect to collect data on an upcoming expedition to Jordan.

The modified Kinect, dubbed ArKinect, will be used to collect data at various sites, and the data will then be converted into 3D models. These can be explored in UCSD’s StarCAVE, a 360-degree, 16-panel immersive virtual reality room in which researchers interact with visual data. Three-dimensional models of artifacts reveal more than 2D photographs do about properties such as symmetry (and hence, for example, the quality of craftsmanship), and 3D models of the dig sites can help archaeologists keep track of exactly where each artifact was found.

“We are hoping that by using the Kinect we can create a mobile scanning system that is accurate enough to get fairly realistic 3D models of ancient excavation sites,” says Schulze, whose lab specializes in developing 3D visualization technology.

The modified Kinect system currently relies on an overhead video tracking system, limiting its range to relatively small indoor spaces. However, Schulze notes, “In the future, we would like to make this device independent of the tracking system, which would allow us to take the system outside into the field, where we could scan arbitrarily large environments. We can then use the 3D model, walk around it, we can move it around, we can look at it from all sides.

“There may be experts off-site that have access to a CAVE system,” Schulze adds, “and they could collaborate remotely with researchers in the field. This technology could also potentially be used in a disaster site, like an earthquake, where the scene can be digitized and viewed remotely to help direct search and rescue operations.”

According to Schulze, it may even be possible to stream live reconstructions of objects or scenes scanned by the Kinect in the field to the StarCAVE over a standard 3G or wireless broadband connection.

The Kinect was originally intended to sit atop a television and sense the movements of users playing video games. UCSD’s Tenedorio modified a Kinect to capture 3D maps of stationary objects. The scanning process requires moving the device by hand over all surfaces of an object. Schulze says the ability to operate a Kinect freehand is a huge advantage over other scanning systems such as LIDAR (light detection and ranging), which produces more accurate scans but must be kept stationary in order to be aimed precisely.

How it works

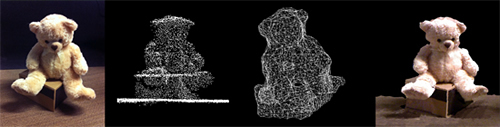

The Kinect projects a pattern of infrared dots (invisible to the human eye) onto an object; the dots reflect off the object and are captured by the device’s infrared sensor, and from the reflected pattern the device builds a 3D depth map. Nearby points in the depth map are linked together to form a triangular mesh of the object’s surface. Each triangle in the mesh is then filled in with texture and color information from the Kinect’s color camera. A scan is taken 10 times per second, and data from thousands of scans are combined in real time, yielding a 3D model of the original object or person.
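The mesh-building step can be sketched in Python. This is a minimal illustration under stated assumptions, not the ArKinect code: the camera intrinsics (`FX`, `FY`, `CX`, `CY`), the depth-jump threshold `MAX_JUMP`, and the function name `depth_to_mesh` are hypothetical. Each valid depth pixel is back-projected to a 3D point with the pinhole camera model, and each 2×2 block of neighboring pixels becomes two triangles, skipping corners that have no reading or that straddle a large depth jump (likely an edge between separate surfaces).

```python
# Minimal sketch: turn a depth map into a triangle mesh by linking
# neighboring pixels. The intrinsics below are hypothetical placeholder
# values, not calibrated Kinect parameters.
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]
Triangle = Tuple[int, int, int]

FX, FY = 580.0, 580.0  # assumed focal lengths in pixels
CX, CY = 320.0, 240.0  # assumed principal point (image center)
MAX_JUMP = 0.05        # assumed max depth difference (m) within one triangle


def depth_to_mesh(depth: List[List[float]]) -> Tuple[List[Point], List[Triangle]]:
    """Back-project valid pixels to 3D and join 2x2 pixel blocks into triangles.

    depth[v][u] is the distance in meters along the optical axis; 0 means no reading.
    """
    rows, cols = len(depth), len(depth[0])
    vertices: List[Point] = []
    index: Dict[Tuple[int, int], int] = {}  # (v, u) -> vertex index
    for v in range(rows):
        for u in range(cols):
            z = depth[v][u]
            if z > 0:
                index[(v, u)] = len(vertices)
                vertices.append(((u - CX) * z / FX, (v - CY) * z / FY, z))

    def ok(*pixels: Tuple[int, int]) -> bool:
        # Keep a triangle only if every corner has depth and all lie on one surface.
        if not all(p in index for p in pixels):
            return False
        zs = [depth[v][u] for v, u in pixels]
        return max(zs) - min(zs) <= MAX_JUMP

    triangles: List[Triangle] = []
    for v in range(rows - 1):
        for u in range(cols - 1):
            a, b, c, d = (v, u), (v, u + 1), (v + 1, u), (v + 1, u + 1)
            # Split each 2x2 block of pixels into two triangles.
            if ok(a, b, c):
                triangles.append((index[a], index[b], index[c]))
            if ok(b, d, c):
                triangles.append((index[b], index[d], index[c]))
    return vertices, triangles
```

For a 2×3 depth map with one missing reading, `depth_to_mesh([[1.0, 1.0, 1.0], [1.0, 1.0, 0.0]])` returns five vertices and three triangles. Attaching color from the RGB camera to each triangle would be a separate step not shown here.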

One challenge Schulze and his team faced was spatially aligning all the scans. Because the ArKinect scans are done freehand, each scan is taken at a slightly different position and orientation. Without a mechanism for spatially aligning the scans, the 3D model created would be a discontinuous jumble of images. To compensate, Tenedorio outfitted the ArKinect with a five-pronged infrared sensor attached to its top surface. The overhead video cameras track this sensor in space, thereby tagging each of the ArKinect’s scans with its exact position and orientation. This tracking makes it possible to seamlessly stitch together information from the scans, resulting in a stable 3D image.
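The alignment step amounts to applying a rigid transform (a rotation followed by a translation) to every point of a scan. The sketch below is an illustration under assumptions: it takes the tracker’s orientation as a unit quaternion in `(w, x, y, z)` order plus a position vector, and the names `rotate` and `scan_to_world` are hypothetical. It also ignores the fixed offset between the tracked marker and the depth camera, which a real system would need to calibrate.

```python
# Minimal sketch: place one freehand scan into a shared world frame using the
# position and orientation reported by an external tracker. The quaternion
# convention (w, x, y, z) and the function names are assumptions.
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z), unit length


def rotate(q: Quat, p: Vec3) -> Vec3:
    """Rotate point p by unit quaternion q (equivalent to q * p * conj(q))."""
    w, x, y, z = q
    px, py, pz = p
    # t = 2 * cross(q_vec, p)
    tx = 2 * (y * pz - z * py)
    ty = 2 * (z * px - x * pz)
    tz = 2 * (x * py - y * px)
    # p' = p + w * t + cross(q_vec, t)
    return (
        px + w * tx + (y * tz - z * ty),
        py + w * ty + (z * tx - x * tz),
        pz + w * tz + (x * ty - y * tx),
    )


def scan_to_world(points: List[Vec3], orientation: Quat, position: Vec3) -> List[Vec3]:
    """Apply the tracked pose (rotation, then translation) to every point of a scan."""
    ox, oy, oz = position
    out: List[Vec3] = []
    for p in points:
        rx, ry, rz = rotate(orientation, p)
        out.append((rx + ox, ry + oy, rz + oz))
    return out
```

Rotating the point (1, 0, 0) by 90 degrees about the z axis and shifting it by (10, 0, 0) yields (10, 1, 0), up to floating-point rounding. Once every scan is expressed in the same world frame this way, overlapping scans line up and can be merged.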

The team is working on a tracking algorithm that incorporates smartphone sensors, such as an accelerometer, a gyroscope, and GPS (Global Positioning System). Combined with the existing approach for stitching scan data together, this algorithm would eliminate the need to obtain position and orientation information from the overhead tracking cameras.
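The article does not describe the team’s tracking algorithm. As a hedged illustration of how smartphone-style sensors are commonly fused, the sketch below is a standard complementary filter for a single tilt angle: the integrated gyroscope rate is smooth but drifts over time, while the accelerometer’s gravity reading does not drift but is noisy. The weight `ALPHA`, the sample tuple layout, the axis convention, and the name `fuse_pitch` are assumptions. Full six-degree-of-freedom tracking, with GPS constraining position, would need considerably more than this.

```python
# Illustrative complementary filter for one tilt (pitch) angle. This is a
# standard textbook technique, not the UCSD team's algorithm; ALPHA, the
# sample layout, and the axis convention are assumed.
import math
from typing import Iterable, Tuple

ALPHA = 0.98  # assumed weight on the integrated gyroscope estimate


def fuse_pitch(samples: Iterable[Tuple[float, float, float, float]], dt: float,
               pitch: float = 0.0) -> float:
    """Estimate pitch (radians) from (gyro_rate, ax, ay, az) samples taken every dt seconds.

    gyro_rate is the angular rate about the pitch axis in rad/s; ax, ay, az are
    accelerometer readings in any consistent unit.
    """
    for gyro_rate, ax, ay, az in samples:
        # Gyroscope: smooth over short intervals, but integration drifts.
        gyro_pitch = pitch + gyro_rate * dt
        # Accelerometer: absolute tilt from the direction of gravity, but noisy.
        accel_pitch = math.atan2(-ax, math.hypot(ay, az))
        # Blend: trust the gyroscope short-term and the accelerometer long-term.
        pitch = ALPHA * gyro_pitch + (1 - ALPHA) * accel_pitch
    return pitch
```

With the device held still, a wrong starting estimate decays toward the accelerometer’s tilt reading by a factor of `ALPHA` per sample, so the filter corrects gyroscope drift without passing the accelerometer’s jitter straight through.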

ArKinect scan progress can be monitored on a computer screen in real time. Notes Schulze, “You can see right away what you are scanning. That allows you to find holes so that when there is occlusion, you can just move the Kinect over it and fill it in.” Conventional scanning devices, by contrast, collect data that is analyzed offline, often at a separate location, so holes may not be discovered until the scanning session is over.

Discarding redundant data turned out to be the research team’s most daunting challenge. “Simply adding each frame into a global model adds too much data; within seconds, the computer cannot render the model and the system breaks,” says Tenedorio, who is joining Google as a software engineer after completing his master’s degree this summer. “One of the primary thrusts of our research is to discover how to throw away duplicate points in the model and retain only the unique ones.”
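One common way to throw away duplicate points, shown here as a sketch rather than the team’s actual method (which the article does not specify), is a voxel grid: space is divided into small cubes, and only the first point that lands in each cube is kept. The cell size `VOXEL_SIZE` and the class name `DedupModel` are hypothetical.

```python
# Minimal sketch of voxel-grid deduplication: snap each incoming point to a
# grid cell and keep only the first point per cell. VOXEL_SIZE and the class
# name are hypothetical; this is not necessarily the ArKinect method.
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
VOXEL_SIZE = 0.005  # assumed 5 mm cells


class DedupModel:
    """Global point model that stores at most one point per voxel."""

    def __init__(self, voxel_size: float = VOXEL_SIZE) -> None:
        self.voxel_size = voxel_size
        self.voxels: Dict[Tuple[int, int, int], Vec3] = {}

    def add_frame(self, points: List[Vec3]) -> int:
        """Merge one frame into the model and return how many points were new."""
        added = 0
        s = self.voxel_size
        for x, y, z in points:
            key = (int(x // s), int(y // s), int(z // s))
            # Skip the point if this cell is already occupied (a duplicate).
            if key not in self.voxels:
                self.voxels[key] = (x, y, z)
                added += 1
        return added

    def __len__(self) -> int:
        return len(self.voxels)
```

Because a later frame that sees the same surface adds nothing new, the model grows with the area scanned rather than with the number of frames, which is the property the quote above calls for.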

The Kinect streams data at 40 megabytes per second—enough to fill an entire DVD every two minutes. Keeping the amount of stored data to a minimum will allow a scan of a person to occupy only a few hundred kilobytes of storage, about the same as a picture taken with a digital camera.
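The DVD comparison holds up under simple arithmetic, using decimal units (1 GB = 1000 MB, 1 MB = 1000 kB). Two minutes of streaming comes to slightly more than the 4.7 GB that a single-layer DVD holds:

$$
40\ \text{MB/s} \times 120\ \text{s} = 4800\ \text{MB} = 4.8\ \text{GB}
$$

Taking 300 kB as an illustrative value for “a few hundred kilobytes,” a stored scan of a person would hold about as much data as the sensor streams in under a hundredth of a second:

$$
\frac{300\ \text{kB}}{40\,000\ \text{kB/s}} = 0.0075\ \text{s}
$$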

A Kinect retails for about $150. This low price, coupled with Schulze’s efforts to turn it into a portable, self-contained, battery-powered instrument with an onboard screen for monitoring scan progress, makes it feasible to send an ArKinect to Jordan with the students.
