The Virtual Windscreen uses the entire windshield as a head-up display.

Jaguar Land Rover (JLR) is in the “performance driving” segment of the auto market, a competitive space where vendors are always looking for an edge. JLR thinks part of that edge will come from offering its customers an augmented reality dashboard projected onto the windshield (“windscreen” in Britspeak) and tied to gesture-based controls.

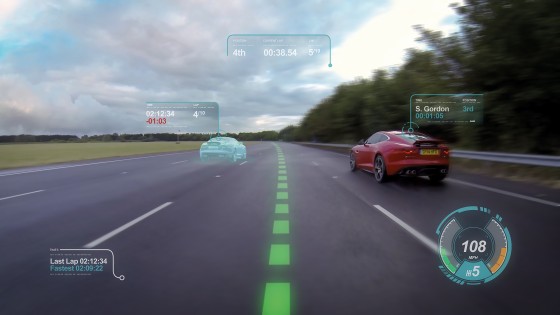

The Jaguar Virtual Windscreen concept, announced last week, uses the entire windshield as a display so the driver’s eyes need never leave the road. High-quality hazard, speed, and navigation icons are all projected onto the screen together. For performance drivers, imagery that could aid track driving includes:

- Racing line and braking guidance: Virtual racing lines on the windshield appear to be marked on the track ahead, with changes in color to indicate braking guidance.

- Ghost car racing: Drive against a simulated opponent that appears as a second car in the head-up display. The ghost car could replay the driver’s own previous lap or be built from data provided by another driver.

- Virtual cones: Cones can be laid out on the track ahead for driver training and moved as the driver’s ability improves.
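As a rough illustration of how braking guidance might pick a color for the racing line, the sketch below computes the constant deceleration needed to reach a corner's entry speed over the remaining distance, using a = (v² − v_corner²) / (2d). JLR has not published how its concept works, so the function names, the 10 m/s² braking limit, and the color thresholds are all assumptions made up for this example.

```python
def required_deceleration(speed, corner_speed, distance):
    """Constant deceleration (m/s^2) needed to slow from `speed` to
    `corner_speed` (both m/s) within `distance` metres."""
    if distance <= 0:
        raise ValueError("distance to the corner must be positive")
    if speed <= corner_speed:
        return 0.0  # already slow enough, no braking needed
    return (speed ** 2 - corner_speed ** 2) / (2 * distance)


def line_color(speed, corner_speed, distance, max_decel=10.0):
    """Map braking demand to a racing-line color.

    `max_decel` and the 0.3 / 0.7 thresholds are hypothetical values
    chosen for illustration, not figures from JLR.
    """
    demand = required_deceleration(speed, corner_speed, distance) / max_decel
    if demand < 0.3:
        return "green"   # light or no braking
    if demand < 0.7:
        return "amber"   # firm braking
    return "red"         # near the assumed limit: brake now


if __name__ == "__main__":
    # Approaching a 30 m/s corner at 50 m/s from three distances.
    for d in (800, 200, 100):
        print(d, line_color(50, 30, d))
```

Under these assumptions the line would shift from green to amber to red as the car closes on the corner without slowing.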

JLR’s gesture control research relies on e-field sensing, which builds on the latest generation of capacitive discharge touch screens. A smartphone today detects a user’s finger from 5 mm away. The JLR system extends the range of the sensing field to around 15 cm, which means it can accurately track a user’s hand and any gestures it makes inside the car.

The Jaguar research team is also looking at technology to replace rearview and external mirrors with cameras and virtual displays. Two-dimensional imaging is a limited substitute for mirrors because single-plane images on a screen do not let the driver accurately judge the distance or speed of other road users. So the team is working on a 3D instrument cluster that uses head and eye-tracking technology to create a natural-looking, specs-free 3D image on the instrument panel. Cameras will be positioned to track the position of the user’s head and eyes. Software then adjusts the image projection to create a 3D effect by feeding each eye a slightly different angle of the same image. This creates the perception of depth, which allows the driver to judge distance.
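The geometry behind feeding each eye a different view can be sketched with similar triangles. The example below is a minimal illustration, not JLR's method: it assumes a flat screen, a tracked head position, and a typical eye separation of 63 mm, and every name in it is hypothetical. It computes where a virtual point behind the screen has to be drawn for each eye.

```python
def project_to_screen(eye_x, eye_dist, point_x, point_depth):
    """Horizontal screen position (m) where one eye sees a virtual point.

    The screen is the plane z = 0. The eye sits `eye_dist` metres in
    front of it at lateral offset `eye_x`; the virtual point sits
    `point_depth` metres behind it at lateral offset `point_x`.
    """
    if eye_dist <= 0 or point_depth < 0:
        raise ValueError("eye must be in front of the screen, point at or behind it")
    # Fraction of the eye-to-point ray travelled when it crosses the screen.
    t = eye_dist / (eye_dist + point_depth)
    return eye_x + (point_x - eye_x) * t


def stereo_pair(head_x, eye_dist, point_x, point_depth, eye_separation=0.063):
    """Screen positions for the left and right eye, given the tracked
    head centre `head_x`. The 63 mm default separation is an assumption."""
    half = eye_separation / 2
    left = project_to_screen(head_x - half, eye_dist, point_x, point_depth)
    right = project_to_screen(head_x + half, eye_dist, point_x, point_depth)
    return left, right


if __name__ == "__main__":
    # Head centred, 0.7 m from the screen; point 0.7 m behind the screen.
    left, right = stereo_pair(0.0, 0.7, 0.0, 0.7)
    print(f"left={left:.5f} m, right={right:.5f} m, parallax={right - left:.5f} m")
```

The gap between the two drawn positions (the parallax) grows as the virtual point moves farther behind the screen and drops to zero for a point on the screen itself, which is the cue the brain reads as depth. Moving the head shifts both positions, which is why the display has to track the head and eyes.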

Gesture control is starting to find a market in consumer technologies, and augmented displays are finding use on mobile devices, but JLR notes that the whole package it envisions is not ready for market yet. “It has the potential to be on sale within the next few years,” says Dr. Wolfgang Epple, JLR director of research.