Do you measure to get better workstation performance or choose ignorance as bliss?

Special to GraphicSpeak by Bob Cramblitt

When it comes to measuring workstation performance, clichés spring to mind: Faster is better. You get what you pay for. Mileage may vary.

There is a kernel of truth in all of these sayings or they wouldn’t be clichés. But if it’s so simple, why benchmark performance at all? Just buy the most expensive machine and be secure in knowing that your investment is delivering the absolute best in performance.

You know better, of course. Especially if your work isn’t as simple as blasting pixels onto a display as quickly as possible or processing repetitious instructions.

Dramatically different use cases

If you’re a CAD/CAM engineer, a data visualization expert, or a 3D animator, you know that performance is an ever-shifting terrain, defined by myriad factors within an application and a particular task. The model you’re working with, the display mode and resolution, the type of operations you’re performing, how well the developer has optimized the software for certain functionality: they all play a role in whether your machine is Usain Bolt or a mall walker.

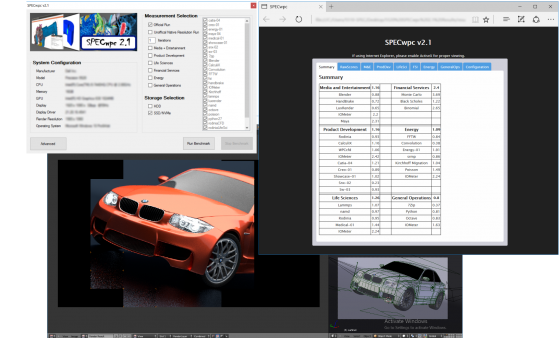

Professional workstations can span a wide range of dramatically different use cases, according to Alex Shows, chair of the SPEC graphics performance characterization (SPECgpc) group that develops the SPECviewperf benchmark.

“Interactive cases often require high-frequency, low-core-count CPUs, fast (but perhaps not high capacity) memory, very fast GPUs for real-time rendering of complex scenes, and storage with very high and random input/output (I/O) processing,” he says. “Contrast that with computational usages that require high core counts—even to the point of sacrificing frequency for more cores—high-capacity memory, and in some cases storage with high sequential throughput.”

Staggering number of choices

Then there’s the workstation marketplace to consider. It’s a jungle out there.

“While the number of competitors in the workstation market is fairly limited, and everyone has access to the same technologies, the number of choices within vendors’ product lines is staggering,” says Tom Fisher, chair of the SPEC workstation performance characterization (SPECwpc) group. “Figuring out the best configuration is very challenging, and benchmarking is the only real way to figure it out.”

To spin another cliché, one size doesn’t fit all. The workstation that screams while playing games might grind to a crawl during a seemingly simple operation on a production-sized model within SolidWorks, PTC Creo or Siemens NX.

Know thy applications and patterns

The first step is understanding your applications and use patterns. If you have the technical acumen to benchmark your most widely used applications, and the functionality you use most frequently within them, that’s the best way to truly assess workstation performance.
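For readers who want to try the do-it-yourself route, here is a minimal sketch of what timing a repeatable operation might look like in Python. It is not a SPEC tool or any vendor's method. The function names (`time_operation`, `summarize`, `placeholder_workload`) are hypothetical, and the placeholder workload is an assumption standing in for a scripted operation in your own application.

```python
import statistics
import time


def time_operation(operation, repeats=5, warmup=1):
    """Return wall-clock timings in seconds for repeated runs of `operation`."""
    for _ in range(warmup):
        operation()  # unmeasured run, so one-time startup costs do not skew results
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        operation()
        timings.append(time.perf_counter() - start)
    return timings


def summarize(timings):
    """Report the median plus the spread, since a single run can mislead."""
    return {
        "median_s": statistics.median(timings),
        "min_s": min(timings),
        "max_s": max(timings),
    }


def placeholder_workload():
    # Hypothetical stand-in: replace with a scripted call into your own
    # application, such as opening and regenerating a production-sized model.
    sum(i * i for i in range(200_000))


if __name__ == "__main__":
    print(summarize(time_operation(placeholder_workload)))
```

Running the same operation several times and comparing the median across machines or configurations is generally more telling than a single stopwatch reading, though a sketch like this only captures elapsed time for one task, not the full range of display modes and operations a real workload covers.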

Second best—and probably the option for the more mortal engineer—is to find a commercial benchmark that closely profiles your application and the work you do on a daily basis and run it on your workstation.

A third option is to look at workstation performance results that are posted publicly in places such as the SPEC website. Ideally, these should undergo a peer review process before publication.

After that, it’s probably a matter of asking yourself how much you really care about whether your workstation is delivering the performance you think it should based on your investment. This is widely known as the “ignorance is bliss” approach.

If you truly care about productivity for some of the most valuable people in your organization—those using workstations for tasks critical to your bottom line—measuring performance matters; it’s the first step in improving performance.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies.

To find out more about graphics and workstation benchmarking, visit the SPEC/GWPG website, subscribe to this enewsletter or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.