SPECapc benchmark results are available online.

By Bob Cramblitt

Performance evaluation is complex.

There’s no silver bullet and no one-size-fits-all answer. Performance depends on many factors that change from application to application, model to model, computing path to computing path, and display mode to display mode.

Nowhere is that complexity more evident than in the benchmarks from the SPEC Application Performance Characterization (SPECapc) group.

SPECapc provides methods for evaluating and comparing the performance of computers across vendor platforms and configurations. SPECapc benchmarks are application-based, representative of end user needs, and characterize total system performance—graphics, I/O, and CPU. They require that users have a licensed or demo version of the application on which the benchmark is based.

An alternative way

If the SPECapc requirements seem too rigorous for the average user who wants to evaluate a professional workstation running a frequently used application, there is fortunately an alternative: the results published on the SPECapc website.

Currently, there are SPECapc benchmark results online for Autodesk 3ds Max and Maya, PTC Creo, Siemens NX, and Dassault Systemes Solidworks. If you cannot find results for a configuration that interests you, contact a workstation vendor and ask if SPECapc results are available. Results are also frequently published in reviews from media outlets such as Jon Peddie Research’s TechWatch.

SPECapc results posted on the SPEC website come from vendors and other parties that run the benchmark under run rules established by SPECapc. The results undergo peer review by SPECapc members and, if accepted, are posted on the website along with detailed information on the system configurations that were tested. That configuration detail helps others reproduce the performance documented on the SPEC website.

How to use the results

Step 1

Go to the SPEC/GWPG website and select the benchmark that reflects your most frequently used CAD/CAM or Digital Content Creation (DCC) application.

Step 2

Click on the “Current Results” link at the top of the left-hand menu. This will take you to the results summary page for the benchmark. There you will see information on the configuration, the testing date and composite results for graphics and CPU tests. In some cases, you’ll also see composite numbers for I/O or various rendering modes.

Step 3

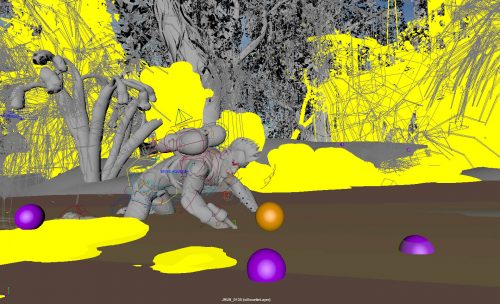

Click on the link for the company/product that interests you. You’ll be taken to a page with more detailed system configuration and benchmark information. A sample from SPECapc for Siemens NX 10, which will become the GUI model for future results pages across all SPEC/GWPG benchmarks, is shown below with vendor names pixelated out.


Step 4

Dive deeper into the results.

- Take a close look at the scores, both composites and subcomposites (subtests). The scores will give you an idea about what is happening at various operational levels. Results for SPECapc for Siemens NX 10, for example, provide a detailed look at performance for different display modes. The results for SPECapc for 3ds Max detail scores for subtests characterizing performance for different-sized models and forms of computing, rendering and modeling.

- Composite scores follow a “bigger is better” convention. Each individual test result starts as the total number of seconds needed to run that test. That time is then normalized against a reference machine, so a higher composite score indicates better performance.
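
To make the normalization idea concrete, here is a minimal Python sketch. It is not the official SPECapc scoring method, whose exact formula is not given here. The sketch assumes that each subtest score is the reference machine's time divided by the measured time, and that a composite is the geometric mean of those scores. The function names and all timings are hypothetical.

```python
from math import prod


def normalized_score(measured_seconds, reference_seconds):
    """Turn a run time into a "bigger is better" score.

    Assumption: score = reference time / measured time, so a system
    that finishes in half the reference time scores 2.0.
    """
    if measured_seconds <= 0 or reference_seconds <= 0:
        raise ValueError("times must be positive")
    return reference_seconds / measured_seconds


def composite_score(scores):
    """Combine subtest scores into one number.

    Assumption: an unweighted geometric mean, chosen here only for
    illustration.
    """
    if not scores:
        raise ValueError("at least one score is required")
    return prod(scores) ** (1.0 / len(scores))


# Hypothetical timings in seconds: (measured, reference) per subtest.
timings = [(60.0, 120.0), (60.0, 60.0)]
subtest_scores = [normalized_score(m, r) for m, r in timings]
composite = composite_score(subtest_scores)

print(subtest_scores)
print(round(composite, 3))
```

In this made-up example, the first subtest runs twice as fast as the reference machine and the second matches it, so the subtest scores are 2.0 and 1.0 and the composite falls between them.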

Keep up to date

To find out more about graphics and workstation benchmarking, visit the SPEC/GWPG website, subscribe to the SPEC/GWPG enewsletter or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies.