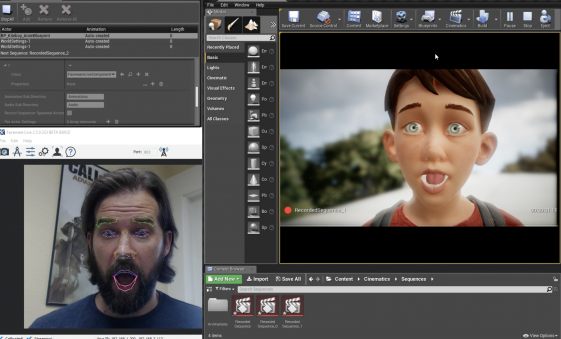

Producing facial animation in real time allows digital characters to interact with live audiences.

Faceware Technologies has made a significant under-the-hood upgrade to Faceware Live, its real-time motion capture (mocap) and animation software. Much of the new technology comes from its parent company, Image Metrics, which is creating commercial/consumer apps including L’Oreal’s MakeupGenius and Nissan’s Die Hard Fan.

Faceware Live produces facial animation in real time by automatically tracking a performer’s face and instantly applying that performance to a facial model. It needs only one camera, which can be a computer’s built-in camera, a webcam, the Faceware GoPro or Pro HD Headcam Systems, or any other video capture device.

Specific updates or new features include:

Advancements to face tracking technology: Tracking is now more stable across different types of faces (different skin tones, heavy facial hair, glasses, etc.). Faceware says that once a face is detected, Live 2.5 captures 180 degrees of motion with significantly less jitter than before.

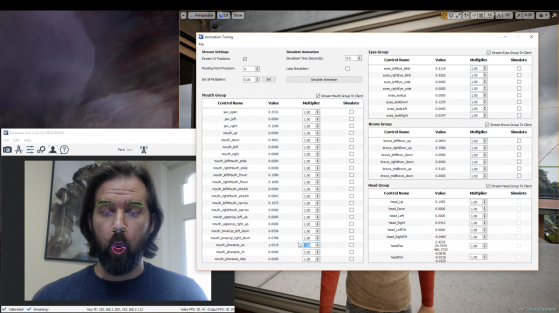

New animation tuning workflow: Animators can customize the live streaming animation to, for example, amplify or suppress a specific motion, such as an eyebrow raise or a smile. These fine-tuning adjustments are made on a pose-by-pose basis, and the adjustments (along with all other settings) can be saved to a profile. Animators can also isolate controls to pinpoint areas of the face that require extra attention, and they can simulate data without the need for a live camera feed, which simplifies the character setup process.
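As a rough illustration of what pose-by-pose tuning with a saved profile could look like in principle, here is a minimal Python sketch. It is not Faceware's API or profile format: the pose names, the gain values, the function names and the JSON layout are all hypothetical, and the hard-coded frame stands in for simulated data with no camera attached.

```python
import json
from pathlib import Path


def apply_profile(frame, gains):
    """Scale each pose weight by its gain and clamp the result to [0, 1].

    Poses without an entry in `gains` pass through unchanged (gain 1.0).
    """
    return {
        pose: min(1.0, max(0.0, weight * gains.get(pose, 1.0)))
        for pose, weight in frame.items()
    }


def save_profile(gains, path):
    """Write the per-pose gains to a JSON file (hypothetical profile format)."""
    Path(path).write_text(json.dumps(gains, indent=2))


def load_profile(path):
    """Read per-pose gains back from a JSON profile file."""
    return json.loads(Path(path).read_text())


# Simulated frame standing in for live tracking output (hypothetical pose names).
simulated_frame = {"brow_raise": 0.5, "smile": 0.8, "jaw_open": 0.25}

# Amplify the eyebrow raise, suppress the smile, leave the jaw untouched.
gains = {"brow_raise": 1.5, "smile": 0.5}

tuned = apply_profile(simulated_frame, gains)
```

Keeping the gains in a plain dictionary means a profile can be saved once and reapplied to any later frame, whether that frame comes from a camera or from simulated data.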

Command line calibration: Animators can now trigger the calibration and toggle from the command line, which makes setup easier to automate.

Animation preview characters: Live 2.5 now includes preview characters that animators can use to see how certain types of motion might look on various facial structures.

A full list of feature improvements can be found on the Faceware website.

Use cases

Faceware suggests a number of new use cases for its software, based on the potential of the upgrade. These include:

- Live performances that incorporate digital characters. Digital characters can be “puppeted” in real time, allowing them to interact with live audiences.

- Digital characters interacting in real time on kiosk screens in theme parks and shopping malls.

- Creating facial animation content instantly for previz purposes.

- Person-driven avatars in VR and AR. Users can stream their own personas into digital and virtual worlds—perfect for training applications as well as interactive chat functionality.

What do we think?

The pressure is on to deliver ever more captivating forms of video and animation for commercial purposes. Face tracking in real time opens the door to a more complete merger of interactive marketing with the latest visual technologies. Today, digital characters are driven in real time for the flat screen; soon they will be driven for virtual and augmented reality environments as well.

It was not that long ago that face tracking software required markers, rigs, all sorts of cumbersome procedures, and vast amounts of processing time. Faceware’s markerless technology consigns all that to the dustbin of graphics history.