Standard tests don’t tell the whole story. The best-use scenario for the W9000 is in the most demanding of graphics tasks.

By Alex Herrera

The AMD FirePro W9000, one of the first members in a full family of new professional-caliber graphics cards from AMD, is now available. Jon Peddie Research got ahold of one and ran it through our standard battery of workstation-relevant tests. We’ve got numbers to review not only stand-alone, but in context with results from benchmarking the company’s previous family of cards.

Gamers might view AMD’s “Southern Islands”, a.k.a. “Graphics Core Next”, as old news, since it launched under the company’s Radeon brand in the first quarter of 2012. The consumer ranks tend to see the newest technology first, while professional/workstation-caliber products based on the same technology might appear three to nine months later. And so it was with the first round of Southern Islands FirePro, released around two quarters after Radeon.

Why two quarters later? At first glance, the lag would seem counter-intuitive. After all, workstation applications demand the maximum graphics performance available. Absolutely, but while performance is critical, so is reliability, a feature which carries far less weight among gamers. So while overclocking bleeding-edge technology with beta drivers is OK, even desirable, for some LAN gaming party, it doesn’t cut it for professional applications.

Workstation products take more time to get to market to allow adequate testing, additional driver development and both system- and application-level certification. End-user hardware life cycles are also longer in this market, which reduces the urgency to get brand new technology out as fast as humanly possible.

In any case, AMD’s Southern Islands has arrived to serve workstation applications, as the first salvo of products was launched at the end of summer: the mid-range W5000, the high end W7000 and W8000, and the ultra-high end W9000. The top two leverage the highest-performing of the Southern Islands trio of GPUs, Tahiti, while the lower two tap the family’s middle child, Pitcairn.

| | FirePro W9000 | FirePro W8000 | FirePro W7000 | FirePro W5000 |
|---|---|---|---|---|
| MSRP | $3,999 | $1,599 | $899 | $599 |
| GCN GPU | “Tahiti” | “Tahiti” (slower clock) | “Pitcairn” | “Pitcairn” (slower clock) |
| Memory size | 6 GB GDDR5 | 4 GB GDDR5 | 4 GB GDDR5 | 2 GB GDDR5 |
| Memory BW (peak) | 264 GB/s | 176 GB/s | 154 GB/s | 102.4 GB/s |
| Display output | (6) Mini DP, 3D stereo, Framelock / genlock | (4) DP, 3D stereo, Framelock / genlock | (4) DP, 3D stereo (optional bracket), Framelock / genlock | (2) DP, (1) dual-link DVI, 3D stereo (optional bracket) |
| Max power | 274 W | 189 W | 150 W | 75 W |
| Form factor | Dual slot | Dual slot | Single slot | Single slot |

The new top-half of the FirePro line-up, courtesy of Graphics Core Next (a.k.a. Southern Islands). (Source: AMD)

| | Tahiti | Pitcairn | Cape Verde |
|---|---|---|---|
| Stream processors | 2048 | 1280 | 640 |
| Transistor count | 4.3 billion | 2.8 billion | 1.5 billion |
| Aggregate floating-point throughput | 3.79 TFLOPS (single precision, DP at 1/4 rate) | | |
| Memory bus width | 384-bit | 256-bit | 128-bit |

The core Southern Islands GPU trio of Cape Verde, Pitcairn and Tahiti. (Source: AMD)

Getting a taste of the first FirePro card tapping Southern Islands raises the obvious question … how much improvement does it offer over the previous Northern Islands GPU generation? Well, thanks to AMD, we already had the opportunity to review four Northern Islands FirePro cards: the high end FirePro V7900, the mid-range V5900 and the entry-class V4900 and V3900. We benchmarked the four cards shortly after their releases, but not simultaneously. We tested the V5900 and V7900 in August 2011, the V4900 in November 2011, and the V3900 in March 2012.

Even better, we benchmarked them all on the same testbench that we used for the W9000 review, an HP Z800 with a 6-core Intel Xeon X5670 CPU (Westmere generation, running at 2.93 GHz), paired with 12 GB of 1333 MHz DDR3 memory. With the base system identical, any differences are directly attributable to the swapped-in graphics card and its driver.

| Component | Specification |
|---|---|
| CPU | Intel Xeon X5670, 6-core @ 2.93 GHz |
| Memory | 12 GB of 1333 MHz DDR3 |
| Disk | 2 x 500 GB (7200 rpm SATA) |
| OS | Windows 7, 64-bit |

Configuration specifications for our HP Z800 review machine. (Source: Jon Peddie Research)

Not only was our test workstation the same, but so was our test suite. We tapped SPEC’s Viewperf to isolate the stress on the graphics cards, and we used SPECapc, Cadalyst and Cinebench tests to get a handle on how well the cards support whole-system performance for key verticals like Digital Content Creation (DCC) and CAD. From the SPECapc suite of benchmarks, we chose Lightwave and 3ds max, representing two popular applications used in those spaces.

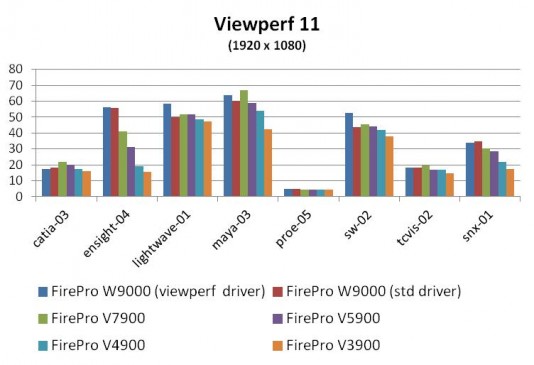

Viewperf 11

First up was Viewperf 11, the most recent revision of the long-time graphics benchmark. As we did with the W9000’s Northern Islands predecessors, we installed the W9000, making sure to download the latest-and-greatest drivers from AMD’s support site. With the W9000, we used three drivers: a 3ds max-specific driver, one optimized for Viewperf, and a general-purpose version (8.14.01.6288).

Where 1280 x 1024 used to be a reasonable common denominator for setting resolution, we’re now pushing to 1920 x 1080, as the resolution is now generally pervasive and supported by even the most modestly priced monitors. We ran Viewperf 11 on each card with three iterations.

As one would expect, the W9000 with the Viewperf-optimized driver led the pack, followed by the W9000 with the standard driver, followed by the previous-generation cards. Now, we include the Viewperf-specific driver results for completeness, but we generally defer to the standard-driver results. Why? Because the vast majority of professionals would be using the latter, making it the more relevant and comparable metric (which is also why we’ve avoided focusing much on any Viewperf-specific driver in the past, regardless of whether the vendor was Nvidia or AMD).

But while the order of results was what we’d expect, the magnitude of incremental performance from one product to the next was not. The W9000’s standard-driver edge over the V7900 was very small (1.4%, averaged across datasets, and for what it’s worth, the Viewperf-optimized driver fared little better).

| | FirePro W9000 (Viewperf) over W9000 (std) | FirePro W9000 (std) over FirePro V7900 | V7900 over FirePro V5900 | V5900 over FirePro V4900 | V4900 over V3900 |
|---|---|---|---|---|---|
| catia-03 | -5.0% | -15.3% | 9.2% | 13.6% | 9.4% |
| ensight-04 | 0.1% | 35.9% | 31.0% | 64.6% | 22.2% |
| lightwave-01 | 16.8% | -3.3% | -0.2% | 6.6% | 2.8% |
| maya-03 | 5.7% | -10.2% | 13.6% | 9.2% | 27.8% |
| proe-05 | 1.1% | 0.6% | 0.2% | 2.2% | 1.8% |
| sw-02 | 21.2% | -4.7% | 3.4% | 4.8% | 11.2% |
| tcvis-02 | -0.3% | -6.7% | 15.1% | 1.0% | 13.9% |
| snx-01 | -3.1% | 14.6% | 7.1% | 28.6% | 27.8% |
| Average speedup | 4.6% | 1.4% | 9.9% | 16.3% | 14.6% |

FirePro W9000 Viewperf performance, relative to the previous generation. (Source: Jon Peddie Research)
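The “Average speedup” row is consistent with a plain arithmetic mean of the eight per-viewset percentages. The short Python sketch below recomputes it for each column from the values in the table. The dictionary layout and the function name are illustrative choices, not part of Viewperf or any SPEC tooling.

```python
from statistics import mean

# Column order, as in the Viewperf table of this review.
COLUMNS = [
    "W9000 (Viewperf driver) over W9000 (std)",
    "W9000 (std) over V7900",
    "V7900 over V5900",
    "V5900 over V4900",
    "V4900 over V3900",
]

# Per-viewset speedups in percent, copied from the table.
SPEEDUPS = {
    "catia-03":     [-5.0, -15.3,  9.2, 13.6,  9.4],
    "ensight-04":   [ 0.1,  35.9, 31.0, 64.6, 22.2],
    "lightwave-01": [16.8,  -3.3, -0.2,  6.6,  2.8],
    "maya-03":      [ 5.7, -10.2, 13.6,  9.2, 27.8],
    "proe-05":      [ 1.1,   0.6,  0.2,  2.2,  1.8],
    "sw-02":        [21.2,  -4.7,  3.4,  4.8, 11.2],
    "tcvis-02":     [-0.3,  -6.7, 15.1,  1.0, 13.9],
    "snx-01":       [-3.1,  14.6,  7.1, 28.6, 27.8],
}


def average_speedups(table):
    """Return the arithmetic mean of each column, rounded to one decimal."""
    per_column = zip(*table.values())  # transpose rows into columns
    return [round(mean(col), 1) for col in per_column]


if __name__ == "__main__":
    for name, avg in zip(COLUMNS, average_speedups(SPEEDUPS)):
        print(f"{name}: {avg:+.1f}%")
```

Run as a script, this reproduces the table’s averages of 4.6%, 1.4%, 9.9%, 16.3% and 14.6%. It also shows how much a single viewset can move the mean: without ensight-04, the W9000’s standard-driver average over the V7900 would be negative.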

Now factor in that the W9000 retails for $4,000, while the V7900 can be purchased (street prices) for under $700. That raises some concern about the W9000’s competitiveness. However, benchmarks as a whole can tell an incomplete story, especially when looking at one in isolation. For example, there are surely high-demand usage models where the W9000’s 6 GB frame buffer would mean huge performance advantages over the V7900’s 2 GB memory. But if the benchmark doesn’t touch such cases (and Viewperf generally doesn’t), we won’t see it in the results.

So let’s take the Viewperf results with a grain of salt (at least for now) and move on to some application-level benchmarks to get a broader picture of where the W9000 might stand.

SPECapc: Lightwave and 3ds max 2011

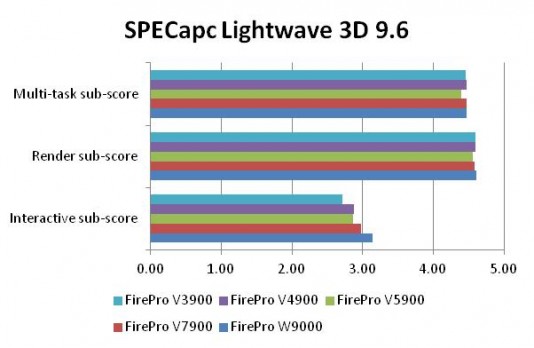

To evaluate overall system performance, we employed two SPECapc tests, for 3ds max and Lightwave, along with Cadalyst 2011 and Cinebench. While 3ds max is increasingly used in CAD spaces like architecture, engineering and construction (AEC), both have primarily been used by digital content creators.

Now with SPECapc, neither the graphics card nor the rest of the system is solely responsible for scores. Rather, it’s the sum of the parts that is being tested, to give an idea of how the system might perform in a real-world environment. Where GPU-accelerated rendering is the bottleneck, throughput will vary by graphics card. But where it’s not (CPU, memory or I/O limitations), the test won’t show a difference. Average it all out, and the results for different cards in the same system will usually show substantially smaller differences than they do for Viewperf.

For our purposes of stressing the graphics, SPECapc for Lightwave’s Multi-task and Render sub-scores are of little interest. Both components are notoriously CPU-bound (ironic for something named “Render”, but in this case rendering is performed in software on the CPU, not via GPU-accelerated APIs like OpenGL or DirectX), so it is no big surprise that the results for all five cards were very close.

By contrast, the Interactive sub-test does more GPU-based rendering than the others, and the results were more in line with expectations: no huge differences, but meaningful ones as one moves up the price points.

The W9000 fared a bit better here, and results were especially interesting in that the performance delta over the V7900 was bigger than it was for Viewperf, something we don’t see very often. The W9000 outpaced the V7900 by about 5.5%, a number that may reflect the former’s ability to deal with larger datasets effectively.

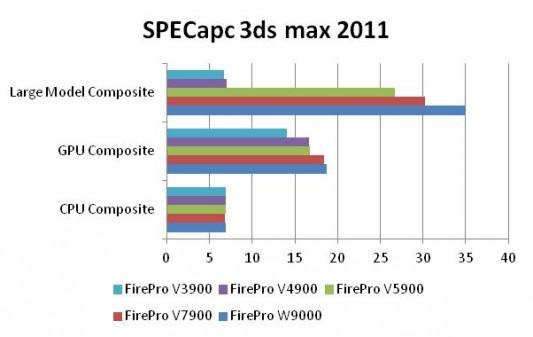

That ability looks to have delivered a more pronounced edge for the W9000 in our next application-level test, SPECapc for 3ds max 2011. As with the test for Lightwave, not all components of SPECapc for 3ds max 2011 scale strongly or directly with the GPU.

As the name would suggest, the CPU composite score does not vary by graphics card. The GPU composite may or may not, depending on where other bottlenecks in the system might be. In the case of the W9000, if it could have outperformed the V7900 substantially on the GPU composite, we might not know, since the system overall topped out just 1.3% faster. That’s certainly not a positive for the W9000, but it may not be negative, either.

Fortunately for cases like this, where we’re trying to sort out bottlenecks, the relatively new SPECapc for 3ds max 2011 has a third component called Large Model Composite. The overall Large Model Composite is a weighted blend of test results from two sequences: the CPU creating a large scene, and the GPU viewing the scene (with varying viewpoints/ports). The former should put trivial stress on the GPU, and the latter should so overwhelm the GPU that any CPU overhead should be effectively hidden.

Exercising the GPU with bigger render viewsets should open up a great opportunity for the W9000 to shine. And it did, not dramatically, but significantly more than it did in the Viewperf or SPECapc for Lightwave benchmarks. The W9000 delivered a 15.8% bump over the V7900.

Cadalyst

The CAD space represents the largest chunk of workstation and professional graphics users. But while 3ds max gets a share of work in CAD as well as DCC, there’s one application that dominates the former space: AutoCAD. And when it comes to getting a feel for how well a card or system can handle AutoCAD, we fortunately still have the mainstay Cadalyst benchmark (2011 version).

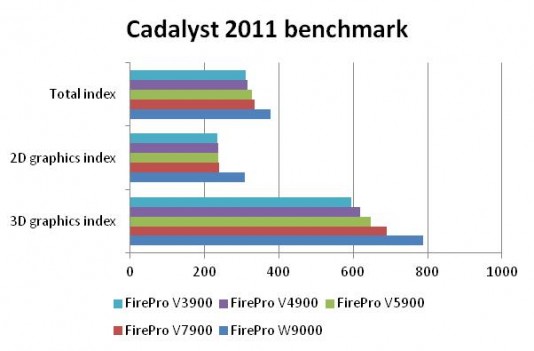

Results from the Cadalyst 2011 tests are mostly in agreement with the cards’ relative pricing and positioning. The more the test relies on the GPU, the bigger the performance delta as we step up the range of FirePro SKUs. And the less the test or sequence relies on GPU horsepower (that is, execution ends up being CPU or memory bound), the smaller the difference measured.

But again, while the order of results was in line with expectations, the magnitude of the differences was not. Specifically, we would not have expected the W9000 to surpass the V7900 by very much. But in actuality, it scored substantial incremental performance bumps: 14.4% for 3D, 29.4% for 2D, and 12.8% overall.

We wouldn’t have expected that for technical reasons, nor would we have for marketing reasons. A $3K+ card like the W9000 is not being positioned to lure AutoCAD users, and due to the nature of the benchmark, high-end cards don’t typically show a significantly higher score.

Cinebench

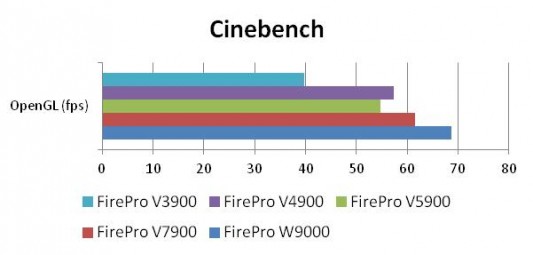

Last but not least, we have Cinebench. Cinebench renders a 3D sequence (currently, a car chase through town) utilizing both CPU and GPU resources. It reports a composite performance number for both CPU (CPU) and graphics (OpenGL). Since we’re benchmarking graphics cards, and comparing two cards on the same Z800 test bench, we focus on the OpenGL component.

Again the W9000 does fairly well, offering a significant—if not spectacular—performance edge over the V7900 and its Northern Islands brethren.

The performance rewards reaped by moving up the FirePro line

Of interest to both a graphics card supplier and its customers is how its products are positioned with respect to one another and from generation to generation. The customer of course cares, because there is no point in spending more for a trivial return (or even no return at all) on the incremental dollars of buying a new or higher-end model. And accordingly, the vendor cares, because it must show customers, especially the biggest ones, the workstation OEMs, that there’s a sensible progression of performance and capability as one considers the merits of upgrading from the previous generation to the current one. And that goes double when we’re talking about a card carrying a $3K+ price tag, like the W9000.

| Benchmark | Test / Metric | FirePro W9000 performance edge over V7900 |
|---|---|---|
| SPECapc for 3ds max 2011 | Large Model | 15.8% |
| | GPU | 1.3% |
| SPECapc for Lightwave 9.6 | Interactive | 5.5% |
| Cadalyst | 3D Graphics Index | 14.4% |
| | Total index | 12.8% |
| Cinebench | OpenGL | 11.5% |
| Viewperf 11.0 | Average speed-up across viewsets | 1.4% |

Performance gains stepping up to Southern Islands FirePro W9000 versus Northern Islands FirePro V7900. (Source: Jon Peddie Research)
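To set those gains against the price gap, here is a minimal Python sketch using only figures quoted in this review: the W9000’s $3,999 MSRP, the V7900’s street price of under $700, and the speedups from the summary table. The $700 value is treated as an approximate upper bound, so the true price ratio is at least as large as computed. Function and variable names are illustrative.

```python
# Prices quoted in this review (USD). The V7900 figure is the "under $700"
# street price, used here as an approximate upper bound (an assumption).
W9000_PRICE = 3999
V7900_PRICE = 700

# W9000 performance edge over the V7900 in percent, from the summary table.
GAINS = {
    "SPECapc 3ds max 2011, Large Model": 15.8,
    "SPECapc 3ds max 2011, GPU": 1.3,
    "SPECapc Lightwave 9.6, Interactive": 5.5,
    "Cadalyst, 3D Graphics Index": 14.4,
    "Cadalyst, Total index": 12.8,
    "Cinebench, OpenGL": 11.5,
    "Viewperf 11.0, average across viewsets": 1.4,
}


def price_ratio(new_price, old_price):
    """How many times more the new card costs than the old one."""
    return new_price / old_price


def relative_perf_per_dollar(gain_pct, ratio):
    """Benchmark score per dollar of the new card relative to the old (1.0 = parity)."""
    return (1 + gain_pct / 100) / ratio


if __name__ == "__main__":
    ratio = price_ratio(W9000_PRICE, V7900_PRICE)
    print(f"Price ratio: {ratio:.1f}x")
    for test, gain in GAINS.items():
        rel = relative_perf_per_dollar(gain, ratio)
        print(f"{test}: +{gain:.1f}% -> {rel:.2f}x score per dollar")
```

On these numbers the W9000 costs about 5.7 times as much as the V7900, so even the best result here (15.8%) works out to roughly a fifth of the older card’s benchmark score per dollar. This naive measure says nothing about workloads that need the 6 GB of memory, which these tests barely exercise.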

The final verdict

Commensurate with its higher price, the W9000 shows consistent performance superiority over the previous generation (and much less expensive) V7900, but that superiority varies considerably from test to test, and viewset to viewset. Among the application-level tests, the W9000 does deliver substantial performance speedups, with percentage gains in the teens. But on Viewperf, the differences were negligible.

It’s unfortunate for AMD that the numbers don’t jump from last generation’s $700 card to this generation’s near-$4K card, especially for Viewperf. It would make for a lot less explaining and much better prospects at stealing back high-end market share from Nvidia, which dominates the segment (as well as the overall market).

But in this case, there is an argument to be made that these otherwise very reasonable benchmarks do not tell the whole story, especially for certain corners of the end-user community. And that’s because this card’s big advantage, its 6 GB memory, is less likely to be stressed by any of these more mainstream tests. It is in the few places where the tests brush up against bigger datasets that the W9000 starts to shine. But we don’t know the extent to which it would outperform (or not), because the tests themselves appear limiting for a card with this much memory.

As a result, while we cannot enthusiastically recommend the card based on the results of our testing, we’d also caution against ruling it out. The bottom line is this: if you’re running AutoCAD or on a remotely stringent budget, this isn’t a card you were likely to consider, regardless. But if you’re a high-demand user dealing with large, complex models, and you can consider a price tag like this one, it might be worth doing some real evaluation of this card running your own workflow and models, to see whether you find any substantial benefits in its supersized memory, benefits the typical benchmarks are less likely to reflect.

Alex Herrera is Senior Analyst at Jon Peddie Research.