And finds its targets in the places its competitors can’t or won’t go.

AMD has its own kind of quiet, competent glamor, which means that the company isn’t always appreciated in between its big announcements or stock market leaps. With its latest announcements, the company has brought the spotlight back around to its significant achievements and its plans for the future.

At a recent event named AMD Next Horizon, the focus was clearly on the data center with three major product announcements:

- AMD announced significant deals in the data center, including three new instances available through Amazon and deals with Cray, Microsoft, and Baidu.

- AMD is launching the 7 nm AMD Radeon Instinct, based on the Vega 20 GPU, as a data center GPU accelerator later this year.

- AMD introduced the next in line in the Zen family, Zen 2, which underpins the Epyc processor code-named Rome.

AMD introduced the first Zen processor in February 2017, not that long ago. Forrest Norrod joined the company as SVP and general manager of AMD’s data center and embedded solutions business group. At that time, we wrote that he had affected a fairly jaunty, what-in-the-world-have-we-got-to-lose attitude about AMD’s plan to re-enter the data center. He was right on that front. AMD had squandered whatever position it had won from Intel through years of hard work on Opteron. By the time Zen came along, AMD really didn’t have a market position. However, just saying you’re ready to retake the market doesn’t mean the clients will come along. The enterprise players AMD was courting needed to see that AMD had the goods and the stamina for the long game.

This year AMD was able to announce the launch of Zen 2 and provide some detail about its design and evolution. More important than all that, AMD is coming to the table with significant wins in its pocket. At the event, AMD’s CEO Lisa Su highlighted deals between AMD and AWS and the rest of the HPC heavyweight community, showing two “usual suspects” slides listing the major OEMs and ODMs. In a financial call, Su said that sales of the company’s first-generation Zen processors have gotten it to mid-single-digit market share, which is coming out of Intel’s battered hide. She’s confident the company will build on those gains in 2019.

Su said AWS will be announcing the immediate availability of three machine instances on Amazon Elastic Compute Cloud (EC2) using AMD Zen cores: the R5a, M5a, and T3a. AWS’ Matt Garman, VP of Compute Services, commented that these three products are the most popular options in the company’s portfolio. The little “a” on the end signifies that the processor is from AMD rather than Intel. In other words, customers can sign up for the AMD-based instances in exactly the same way they currently sign up for Intel-based instances, which adds a nice bit of equivalency.
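The naming equivalency can be shown with a few lines of Python. This is only an illustrative sketch: the three family names come from the announcement, while the helper functions, the lookup table, and the example sizes (`large`, `micro`) are assumptions made for the example and are not part of any AWS tool.

```python
# Families named in the announcement, mapped to the Intel-based family
# each one mirrors. The table and helpers are hypothetical.
INTEL_COUNTERPART = {"r5a": "r5", "m5a": "m5", "t3a": "t3"}


def is_amd_instance(instance_type: str) -> bool:
    """Return True if the instance family is one of the AMD-based families above."""
    family, _, _ = instance_type.partition(".")
    return family in INTEL_COUNTERPART


def intel_counterpart(instance_type: str):
    """Return the matching Intel-based instance type, or None if not in the table."""
    family, _, size = instance_type.partition(".")
    if family not in INTEL_COUNTERPART:
        return None
    return f"{INTEL_COUNTERPART[family]}.{size}"


for name in ("m5a.large", "t3a.micro", "m5.large"):
    print(name, is_amd_instance(name), intel_counterpart(name))
```

The only difference a customer sees is the extra letter in the family name; the size suffix and the way the instance is requested stay the same.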

Cray also announced a new deal with AMD at the event. The company says it has added Epyc processors to its Cray CS500 product line, Shasta. The company said, “the combination of AMD Epyc 7000 processors with the Cray CS500 cluster systems offers Cray customers a flexible, high-density system tuned for their demanding environments.” The combination enables organizations to take on HPC workloads without having to rebuild and recompile their x86 applications.

Both Cray and AWS emphasized the value of giving their customers choices. And, said AWS’ Garman, “never have I met a customer who wasn’t interested in a lower price.”

It’s all about the architecture

AMD’s CTO Mark Papermaster said AMD made its choices over two years ago, as the realities of physics make negotiating shifts to new process technologies ever more expensive and complicated. AMD has invested in its Infinity Fabric for faster interconnects. Papermaster said the company decided to leapfrog 10 nm because AMD engineers recognized that “10 nm was not what our customers needed.” The Infinity Fabric technology is as much an ace in the hole as process technologies because it gives the company architectural flexibility. The decision to skip 10 nm has given AMD the opportunity to beat Intel to 7 nm, which Papermaster describes as a heavy lift that owes a great deal to the long working relationship AMD has enjoyed with fab partner TSMC.

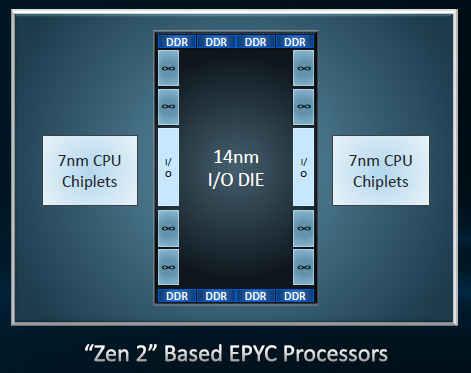

It’s part of AMD’s DNA to find clever architectural solutions to the manufacturing challenges of advanced process technologies, and Zen 2 is an example: AMD manages to get the best of two process technologies with its “chiplet” approach. AMD is combining Zen 2 chiplets built on TSMC’s 7-nm process, connected via an enhanced Infinity Fabric link, with a 14-nm I/O core. The design benefits from the cost savings of a mature process for the I/O while getting the advantages of 7 nm for the CPUs. The 14-nm core includes the memory controller and eight DDR DRAM interfaces, enabling equal memory access for the CPUs.

Generational updates to the Zen core include:

- An improved execution pipeline, feeding its compute engines more efficiently.

- Improved branch predictor, better instruction pre-fetching, re-optimized instruction cache, and larger OPcache.

- Floating-point enhancements: doubled floating-point width to 256 bits, doubled load/store bandwidth, increased dispatch/retire bandwidth, and maintained high throughput for all modes.

- Advanced security features: hardware-enhanced Spectre mitigations (taking the software mitigation and hardening it into the design) and increased flexibility of memory encryption.

GPUs computing in the data center

AMD’s David Wang made what was, for many of the attendees, his first appearance as the company’s senior VP of engineering for the Radeon group. Wang is succeeding Raja Koduri, who left AMD for Intel. Wang has returned to AMD after a trip through Synaptics that several AMD colleagues made when former AMD VP Rick Bergman left the company to become Synaptics’ CEO. Wang made it back just in time to announce the new 7-nm products, the MI50 and MI60, both based on Vega 20 and designed for the data center as accelerators. The top levels of the semiconductor engineering community are a pretty small club. It’s interesting to see how the doors revolve, and probably useful to remember that company culture is preserved even as titles change hands.

These new GPUs feature ultra-fast floating-point performance and HBM2 (second-generation high-bandwidth memory) with up to 1 TB/s memory speeds. In addition, AMD says, they are the first GPUs capable of supporting next-generation PCIe 4.0, which gives them a 2× faster connection to the CPU. The new GPUs also take advantage of AMD’s Infinity Fabric Link for GPU-to-GPU connections that are 6× faster than PCIe 3.

The feature list for the MI50 and MI60 looks like this:

- Deep learning operations: The new Instinct GPUs provide mixed-precision FP16, FP32 and INT4/INT8 capabilities for training complex neural networks and running inference against those trained networks.

- Double-precision PCIe 4.0 capable accelerator: The AMD Radeon Instinct MI60 offers up to 7.4 TFLOPS peak FP64 performance, while the Radeon Instinct MI50 delivers up to 6.7 TFLOPS peak FP64 performance and can support VDI and desktop as a service as well as a variety of deep learning workloads.

- Up to 6× faster data transfer via two Infinity Fabric Links per GPU, capable of up to 200 GB/s of peer-to-peer bandwidth and enabling the connection of up to 4 GPUs in a hive ring configuration (2 hives in 8-GPU servers).

- HBM2 memory: The AMD Radeon Instinct MI60 provides 32 GB of HBM2 error-correcting code (ECC) memory, and the Radeon Instinct MI50 provides 16 GB of HBM2 ECC memory.

- Secure virtualized workload support: AMD MxGPU Technology, a hardware-based GPU virtualization solution, is built on the industry-standard SR-IOV (Single Root I/O Virtualization) technology and is designed to provide security at the hardware level, making virtualized cloud deployments more secure.
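The 2× and 6× bandwidth figures quoted above can be sanity-checked with some quick arithmetic. The sketch below assumes a x16 slot and uses the published PCIe signaling rates (8 GT/s per lane for PCIe 3.0, 16 GT/s for PCIe 4.0, both with 128b/130b encoding). It also assumes the 6× comparison is made against bidirectional PCIe 3.0 x16 bandwidth, a baseline that is not spelled out here.

```python
def pcie_gbytes_per_s(gt_per_s: float, lanes: int = 16) -> float:
    """Nominal one-direction bandwidth in GB/s for a PCIe 3.0/4.0 link.

    Both generations use 128b/130b encoding, so 128 of every 130
    transferred bits carry payload.
    """
    payload_fraction = 128 / 130
    return gt_per_s * lanes * payload_fraction / 8  # bits -> bytes


pcie3 = pcie_gbytes_per_s(8.0)    # PCIe 3.0 x16, one direction
pcie4 = pcie_gbytes_per_s(16.0)   # PCIe 4.0 x16, one direction

# Peer-to-peer figure quoted for two Infinity Fabric Links per GPU.
infinity_fabric_gbytes_per_s = 200.0

# Assumption: the baseline is PCIe 3.0 x16 counted in both directions.
pcie3_bidirectional = 2 * pcie3

print(f"PCIe 3.0 x16: {pcie3:.1f} GB/s per direction")
print(f"PCIe 4.0 x16: {pcie4:.1f} GB/s per direction")
print(f"PCIe 4.0 vs 3.0: {pcie4 / pcie3:.1f}x")
print(f"Infinity Fabric vs PCIe 3.0: {infinity_fabric_gbytes_per_s / pcie3_bidirectional:.1f}x")
```

Under those assumptions the PCIe 4.0 link comes out at twice the PCIe 3.0 rate, and the 200 GB/s peer-to-peer figure lands a little above 6× the PCIe 3.0 baseline, which is consistent with the rounded claims.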

The one more thing

Lisa Su took the stage to do the official unveiling of Rome, the next Epyc processor based on Zen 2. It is designed especially for the data center and will up the ante with 64 cores and 128 threads. This is the real new horizon for AMD, says Su. All of Zen 2’s features, PCIe 4.0, the updated Infinity Fabric, HBM2, add up to a processor with double the density and double the bandwidth per channel, resulting in 2× the performance per socket and 4× the floating-point performance compared to Naples. Rome will support PCI Express 4.0, with twice the bandwidth per channel, the better to connect with AMD’s new Instinct GPUs.
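One plausible way to read the 4× floating-point figure is as the product of two doublings: twice the cores per socket (64 versus Naples’ 32) and twice the floating-point width per core (256 bits versus 128 bits, per the Zen 2 core updates listed earlier). The sketch below works through that arithmetic. It assumes equal clock speeds and the same number of floating-point pipes per core in both generations, which is a simplification and not a claim made by AMD.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Socket:
    name: str
    cores: int
    fp_width_bits: int


def relative_fp_throughput(new: Socket, old: Socket) -> float:
    """Peak FP throughput ratio, assuming equal clocks and FP pipes per core."""
    core_ratio = new.cores / old.cores
    width_ratio = new.fp_width_bits / old.fp_width_bits
    return core_ratio * width_ratio


naples = Socket("Naples", cores=32, fp_width_bits=128)
rome = Socket("Rome", cores=64, fp_width_bits=256)

speedup = relative_fp_throughput(rome, naples)
print(f"{rome.name} vs {naples.name}: {speedup:.0f}x peak floating-point per socket")
```

Real workloads will not scale this cleanly, since memory bandwidth and clock speeds also matter, but the back-of-the-envelope product matches the headline number.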

And, says Su, “the road to Rome leads through Naples and on to Milan.” AMD pledges socket compatibility through Zen 3 (Milan), providing flexibility and improved TCO for the data center.

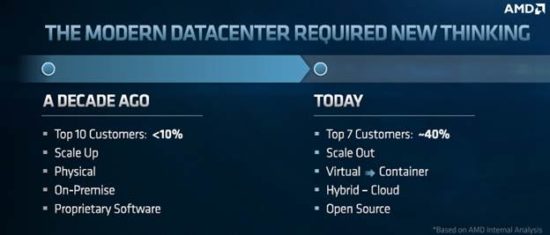

So, this year Norrod is back on stage with a new generation of Zen. “We could not be happier with the first year and a half of Epyc,” he said, adding that AMD’s Epyc and data center GPUs are well suited for the dramatic changes affecting the data center over the last decade. He noted that there are more large companies [in that segment now] and they represent 40% of the market. IT is looking at hybrid and cloud systems, and organizations are scaling out. They’re building on to what they have and everyone is looking for a TCO edge, said the VP.

Norrod believes AMD’s single-socket and multi-core combination gives AMD a unique TCO advantage. To back up a bit (even though readers probably know this), AMD outflanked Intel by offering its server CPUs with a single-socket option as well as dual-socket. At the time, Su said this one decision was likely to help AMD gain market share over Intel. AMD jumped first to 32 cores and now to 64 cores, and the equation is pretty simple: 1 socket + a whole bunch of cores = cheaper than Intel.

Now, says Norrod, this bet is panning out.

Norrod said he’s proud of how AMD’s customers are building on Epyc in unique and differentiated ways.

For instance, as we mentioned, AWS has put AMD processors into its Amazon Elastic Compute Cloud so customers can choose them or Intel through the same process. Microsoft is building differentiated instances using Epyc family products that are focused and specific. Oracle has remote computing options with VMs and also offers “bare metal” options, which Norrod says are 66% lower in cost per core than competitive options. Baidu is offering a range of data center services for AI, big data, and cloud compute, while Tencent is using Epyc for its infrastructure as a service. Also, AMD is signing up partners to provide hosting services, including Packet, Hivelocity, and Hetzner.

AMD’s executives, including Lisa Su, Mark Papermaster, David Wang, and Forrest Norrod, are united with one message: “we’re just getting started.”