By Bob Cramblitt

An in-depth look at SPEC’s latest.

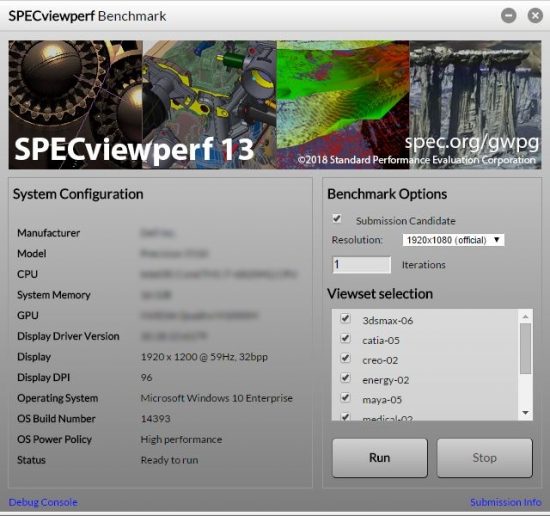

The SPEC Graphics Performance Characterization (SPECgpc) subcommittee recently released SPECviewperf 13, a major revision of its software for measuring the graphics performance of workstations running professional applications.

SPECviewperf 13 includes new volume visualization viewsets for energy (oil and gas) and medical applications, a redesigned GUI, improved scoring and reporting methods, support for 4K-resolution displays, and updated viewsets for Maya and Creo to support the latest versions of the applications on which they are based.

Available for free download

SPEC offers SPECviewperf 13 under a two-tiered licensing structure: It’s free of charge to the user community and available for a $2,500 fee to vendors of computer-related products and services.

The two-tiered arrangement gives users free access to the benchmark, while providing a way for vendors who are not SPEC/GWPG members to take advantage of a valuable tool and contribute to ongoing benchmark development efforts. The bulk of the funding for SPEC/GWPG benchmarks comes from its members, which currently include AMD, Dell, Fujitsu, HP, Intel, Lenovo, and Nvidia.

Internal and external improvements

The internal improvements in SPECviewperf 13 are part of ongoing efforts to make the benchmark easier to use for performance comparisons. They include new reporting methods, such as JSON output that enables more flexible result parsing; a new user interface that is being standardized across all SPEC/GWPG benchmarks; and various bug fixes and performance improvements.
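As a rough illustration of what JSON output makes possible, the Python sketch below parses two result documents and compares composite scores per viewset. The field names (`viewsets`, `name`, `composite`) and the sample numbers are hypothetical, chosen only for this example; the actual SPECviewperf 13 result schema is not described in this article and may differ.

```python
import json

# Hypothetical result documents; the real SPECviewperf 13 JSON layout may differ.
BASELINE = (
    '{"viewsets": [{"name": "energy-02", "composite": 100.0},'
    ' {"name": "medical-02", "composite": 20.0}]}'
)
CANDIDATE = (
    '{"viewsets": [{"name": "energy-02", "composite": 125.0},'
    ' {"name": "medical-02", "composite": 19.0}]}'
)


def composite_scores(result_text):
    """Return {viewset name: composite score} from a JSON result string."""
    doc = json.loads(result_text)
    return {entry["name"]: float(entry["composite"]) for entry in doc["viewsets"]}


def relative_change(baseline, candidate):
    """Return {viewset name: candidate / baseline} for viewsets present in both."""
    return {
        name: candidate[name] / baseline[name]
        for name in baseline
        if name in candidate and baseline[name] > 0
    }


if __name__ == "__main__":
    ratios = relative_change(composite_scores(BASELINE), composite_scores(CANDIDATE))
    for name, ratio in sorted(ratios.items()):
        print(f"{name}: {ratio:.2f}x")
```

Because the results arrive as structured data rather than free-form text, a comparison like this needs no custom text scraping, only a mapping from the real field names to the ones assumed here.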

The changes that have the most impact on performance measurement are those to the SPECviewperf viewsets. Viewsets are workloads based on real-world models performing the same type of graphics activities that users employ in their day-to-day work.

Applications represented by viewsets in SPECviewperf 13 include 3ds Max and Maya for media and entertainment; Catia, Creo, NX and Solidworks for CAD/CAM; Showcase for visualization; and two datasets representing professional energy and medical applications.

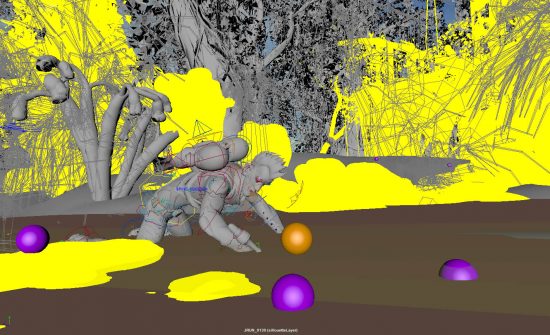

The Creo, Maya, energy and medical viewsets underwent the greatest changes in SPECviewperf 13, ranging from new models to tests representing new application functionality.

The art of application tracing

SPECviewperf 13 can be considered a synthetic benchmark because it does not run on top of a real application as SPECapc benchmarks do. But it separates itself from other synthetic benchmarks by capturing graphics API calls directly from the application being tested. Except for the energy and medical viewsets, application data is captured by a process called tracing.

Tracing for the new SPECviewperf 13 Maya and Creo viewsets was done with internal tools from SPEC/GWPG members AMD and Nvidia.

Richard Geslot of AMD performed the tracing for all of the Maya viewset tests and three of the Creo tests. Ross Cunniff of Nvidia traced the remaining Creo viewset tests and integrated both the Creo and Maya viewset traces into the SPECviewperf 13 framework.

The tracing tools are proprietary to AMD and Nvidia, but the basic process is the same: The tracing tool is attached to the application, and the tool captures the graphics calls while the application runs the desired workload. The tool then generates the test DLL from the captured workload. DLL stands for dynamic link library, the term Microsoft uses for a file containing code that other programs can call to perform certain operations.
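The capture-then-replay idea can be sketched in a few lines of Python. This is only a conceptual illustration, not how the AMD or Nvidia tools work: those are proprietary and emit a compiled DLL, whereas this sketch records calls in memory. `FakeGraphicsAPI`, `Tracer`, and `replay` are hypothetical names invented for the example.

```python
class FakeGraphicsAPI:
    """Hypothetical stand-in for a graphics API; it only counts what it is asked to do."""

    def __init__(self):
        self.draw_calls = 0
        self.bound_texture = None

    def bind_texture(self, texture_id):
        self.bound_texture = texture_id

    def draw(self, vertex_count):
        self.draw_calls += 1
        return vertex_count


class Tracer:
    """Forwards each method call to the wrapped API and records (name, args, kwargs)."""

    def __init__(self, target):
        self._target = target
        self.calls = []

    def __getattr__(self, name):
        # Only reached for names not defined on Tracer itself, i.e. API methods.
        method = getattr(self._target, name)

        def recorded(*args, **kwargs):
            self.calls.append((name, args, kwargs))
            return method(*args, **kwargs)

        return recorded


def replay(calls, target):
    """Issue a captured call list against another API object, with no application present."""
    for name, args, kwargs in calls:
        getattr(target, name)(*args, **kwargs)


if __name__ == "__main__":
    # "Application" session: the workload runs through the tracer.
    traced = Tracer(FakeGraphicsAPI())
    traced.bind_texture(7)
    for _ in range(3):
        traced.draw(vertex_count=36)

    # "Benchmark" session: the capture is replayed on a fresh API object.
    fresh = FakeGraphicsAPI()
    replay(traced.calls, fresh)
    print(len(traced.calls), fresh.draw_calls, fresh.bound_texture)
```

The replayed session issues the same calls in the same order as the original one, which is the property that lets a trace-based test stand in for the application.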

Although the term tracing suggests a childlike operation, it’s far from a simple procedure. Tracing an application accurately requires intimate knowledge of the API calls to make sure the captured functionality performs as it does on a user’s workstation in the real world.

Porting the traces to the SPECviewperf framework provides additional challenges, especially in sorting out the proper levels of API overhead and determining what calls are involved in the initiating portion of an activity versus those in the steady-state interactive session you want to measure.
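To make the setup-versus-steady-state distinction concrete, here is a deliberately simplistic Python sketch that splits a flat list of captured call names into a one-time setup phase and a series of frames. The call names and the splitting rule are assumptions made up for this example; as the article notes, sorting real traces takes considerably more judgment than a name lookup.

```python
# Hypothetical call names; a real trace holds actual graphics API calls.
SETUP_CALLS = {"create_buffer", "upload_texture", "compile_shader"}
FRAME_END = "swap_buffers"


def split_trace(calls):
    """Split a flat call list into (setup calls, list of frames).

    Simplistic heuristic: the leading run of calls whose names are in
    SETUP_CALLS is the setup phase. Everything after it is grouped into
    frames, each ending at FRAME_END. A trailing partial frame is dropped.
    """
    index = 0
    while index < len(calls) and calls[index] in SETUP_CALLS:
        index += 1

    setup, frames, current = calls[:index], [], []
    for call in calls[index:]:
        current.append(call)
        if call == FRAME_END:
            frames.append(current)
            current = []
    return setup, frames


if __name__ == "__main__":
    trace = [
        "compile_shader", "upload_texture",
        "draw", "swap_buffers",
        "upload_texture", "draw", "swap_buffers",
    ]
    setup, frames = split_trace(trace)
    print(len(setup), "setup calls,", len(frames), "frames")
```

In a benchmark built this way, the setup calls would run once outside the timed region and only the frames would be measured. Note that the second frame in the usage example contains an `upload_texture` call: deciding whether such mid-session work belongs in the measurement is exactly the kind of question the porting step has to settle.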

Like most aspects of software development, tracing a graphics-intensive application is a mix of programming know-how, application awareness, performance expertise, and a massive amount of trial and error. But when done right, it results in a benchmark that much more closely represents the performance application users experience.

New models and methodologies

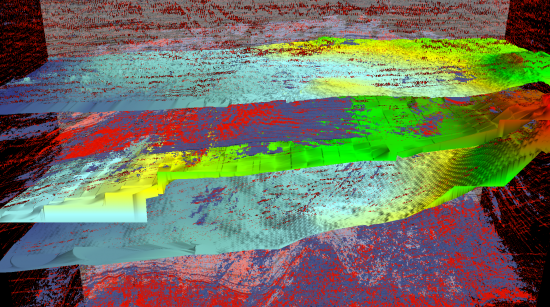

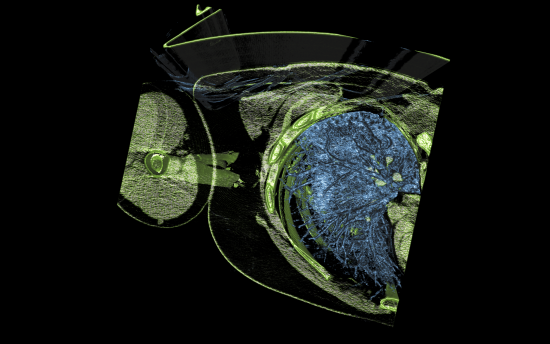

The biggest SPECviewperf 13 changes can be found in the energy and medical viewsets, which represent the latest methodologies found in modern applications within those fields.

Unlike the rest of the SPECviewperf 13 viewsets, neither the energy-02 nor medical-02 viewset is based on an application trace, since static traces cannot capture the dynamic nature of volume visualization.

In energy-02, the all-new volume tests are based on seismic data from the field, with accompanying “horizons” showing boundary layers of interest. The viewset has transitioned completely to a ray-casting volume visualization technique, which casts rays from the eye through the volume, accumulating density and shading at each volume sample.
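For readers unfamiliar with ray casting, the Python sketch below shows the core accumulation step for a single ray using standard front-to-back compositing. It is a generic textbook-style illustration, not code from the energy-02 viewset: the transfer function is hypothetical, lighting is omitted, and a real renderer runs this per pixel on the GPU.

```python
def cast_ray(samples, transfer, early_exit=0.99):
    """Composite density samples front to back along one ray.

    samples: density values met by the ray, ordered from the eye outward.
    transfer: function mapping a density to (intensity, opacity), both in [0, 1].
    Returns (accumulated intensity, accumulated opacity).
    """
    color, alpha = 0.0, 0.0
    for density in samples:
        intensity, opacity = transfer(density)
        # A sample contributes only through what is still transparent in front of it.
        weight = (1.0 - alpha) * opacity
        color += weight * intensity
        alpha += weight
        if alpha >= early_exit:  # early ray termination: the rest is hidden
            break
    return color, alpha


def simple_transfer(density):
    """Hypothetical transfer function: denser material is brighter and more opaque."""
    d = min(max(density, 0.0), 1.0)
    return d, 0.5 * d


if __name__ == "__main__":
    # One ray passing through empty space, then progressively denser material.
    color, alpha = cast_ray([0.0, 0.2, 0.6, 1.0, 1.0], simple_transfer)
    print(f"intensity={color:.3f} opacity={alpha:.3f}")
```

Because every pixel needs its own ray and every ray takes many samples, the cost scales with both screen resolution and volume depth, which helps explain why this technique is a demanding test of graphics hardware.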

In medical-02, medical data from magnetic resonance imaging (MRI) and computerized axial tomography (CAT) scans have been added. In a literal example of “skin in the game” devotion, the scans contain real data from long-time SPEC/GWPG representative Ross Cunniff.

The vast majority of the tests in the new medical viewset use ray-casting, but two of them continue to use slice-based rendering to represent parts of the medical imaging market still using this technique.

The medical-02 viewset also uses a novel 2D transfer function and a volume visualization technique called “bricking,” where the volume data is loaded into the GPU as smaller 3D “bricks” rather than as a single monolithic volume.
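The partitioning behind bricking can be sketched as follows. This Python example only computes the index ranges of each brick for a given volume and brick size; the function name and the sizes are assumptions for illustration, and the actual brick dimensions and upload strategy used by medical-02 are not given here.

```python
def brick_extents(volume_shape, brick_size):
    """Yield ((x0, x1), (y0, y1), (z0, z1)) index ranges that cover the volume.

    Ranges are half-open. Bricks on the far edges are clipped to the volume,
    so they may be smaller than brick_size.
    """
    nx, ny, nz = volume_shape
    for z0 in range(0, nz, brick_size):
        for y0 in range(0, ny, brick_size):
            for x0 in range(0, nx, brick_size):
                yield (
                    (x0, min(x0 + brick_size, nx)),
                    (y0, min(y0 + brick_size, ny)),
                    (z0, min(z0 + brick_size, nz)),
                )


def voxel_count(extent):
    """Number of voxels inside one brick extent."""
    (x0, x1), (y0, y1), (z0, z1) = extent
    return (x1 - x0) * (y1 - y0) * (z1 - z0)


if __name__ == "__main__":
    # Hypothetical scan dimensions and brick size.
    bricks = list(brick_extents((512, 512, 300), 128))
    print(len(bricks), "bricks covering", sum(voxel_count(b) for b in bricks), "voxels")
```

Each brick can then be uploaded and rendered as its own small 3D texture. Practical implementations often add a border of overlapping voxels around each brick so that interpolation stays seamless across brick boundaries; that refinement is left out of this sketch.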

A call for participation

SPECviewperf 13 is the result of thousands of hours of work since SPECviewperf 12 was released in late February of 2015 and updated in August 2016. With the exception of input from some ISVs, the vast majority of that work was done by SPEC/GWPG members.

Tens of thousands of people download and use SPEC/GWPG benchmarks, so they are obviously important to graphics and workstation users and vendors. So, in the interest of greater participation in the benchmark development process and more timely updates, here are some ways different constituencies can help:

- ISVs—Help SPEC/GWPG create new and updated benchmarks by providing real-world models, workloads, and input into user practices.

- Users—Offer models that can be freely distributed and help with guidance into how you exercise applications in the real world.

- R&D and academia—Join SPEC/GWPG as an associate member or provide models or input into performance characterization.

- Vendors that are not current members—Join SPEC/GWPG to contribute to benchmarking development or ensure that your company pays for properly licensed benchmarks.

As with most endeavors, the more involvement, the better and faster the yield.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies. To find out more about graphics and workstation benchmarking, visit the SPEC/GWPG website, subscribe to the SPEC/GWPG enewsletter or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.