Machines are getting smarter and can work more efficiently in carrying out routine jobs.

You may have been noticing some pretty cool videos out there demonstrating fast rendering, image reconstruction, facial recognition, and so much more. A great benefit of relatively recent breakthroughs of machine learning is that the rate of advances is accelerating. Machines are becoming able to take advantage of what they already know and get smarter. This was a focus at the CVPR conference, which took place this year in June in Salt Lake City.

Nvidia and its competitors have been highlighting the papers that have been presented at AI and computer vision conferences over the past year and they’re all racing to make practical use of the discoveries that have come tumbling out of well-funded research centers. Nvidia is concentrating a great deal on AI and machine learning with its own research because this technology is hungry for processing power. But it isn’t all about supercomputers churning away at big discoveries, what we’re going to see a lot more of is routine tasks or commonplace imaging jobs getting a giant boost.

One of the great examples of this is the ability to create beautiful slow-motion videos. There were multiple papers on this subject at CVPR. People love slow-motion and cameras are increasingly able to capture slow-motion video … to a point.

Filming at high frame rates for slow-motion is limited but effective using traditional film technology. Digital video can create a better slow-motion video but the complexity is compounded by the amount of data required to create compelling content.

AI researchers have taken a completely different approach. They’re creating slow-motion video from existing video captured at normal rates. The approach is outlined in a paper, “Super SloMo: High Quality Estimation of Multiple Intermediate Frames for Video Interpolation” available from the Cornell library. In the current work being done to create slow-motion video from scientists from Nvidia, the University of Massachusetts Amherst, and the University of California Merced engineered an unsupervised, end-to-end neural network that can generate an arbitrary number of intermediate frames to create smooth slow-motion footage. They call the technique “variable-length multi-frame interpolation.”

This work is an example of a GAN, a generative adversarial network, which was first described by Ian Goodfellow, a Ph.D. at Université de Montréal in 2014. So far, said Goodfellow, AI work was enabling identification and classification, but it was not enabling creative work. To enable creativity, Goodfellow proposed pitting two neural networks against each other: a generator DNN (deep neural net) and a discriminator DNN. The generator works backward from the output and tries to create data for input that would lead to the output. The discriminator rates the work of the generator with a 0 or 1. Responding to the discriminator, the generator adapts and resubmits its data. Scores get higher as the generator gets closer to the desired result. See TechTalks.com for a clear description of Goodfellow’s idea.

Creating slow-motion video from a video that has already been captured is a good task for a GAN because the problem is that there is not enough information to fill in the additional frames needed for smooth slow motion.

The researchers trained their Convolutional Neural Network (CNN) with more than 11K video clips with 240 frame-per-second videos. The CNN was then able to fill in the additional frames. The team used content created by the Slow Mo Guys, Gavin Free and Daniel Gruchy, who explore slow-motion photography. Their videos are fun and fascinating and the slow-motion AI technique described here makes their videos even slower.

Connect the dots

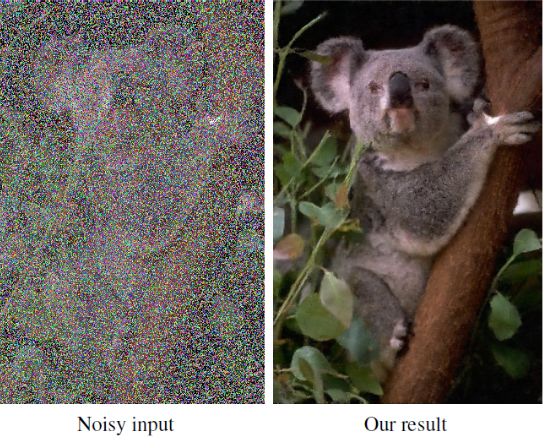

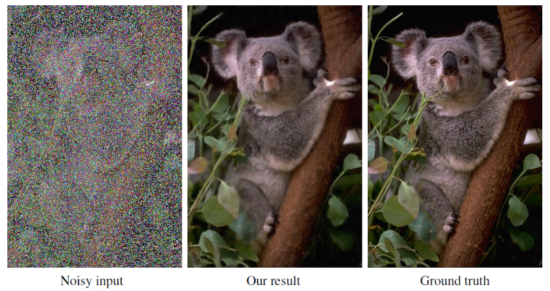

In another paper at another conference, Nvidia’s researchers demonstrated work reconstructing photographs that are grainy and blurry because they were taken without enough light. Something everyone has done. It’s better to have the photo than miss the moment.

What if, asks Nvidia in their developer’s blog, you could take your photos that were originally taken in low light and automatically remove the noise and artifacts?

The company is showcasing a tool takes advantage of the research being done in deep learning. In this case, researchers are training a neural network to restore images by showing example pairs of noisy and clean images. The AI then learns how to make up the difference. This method differs from earlier approaches such as the render speed-ups because it only requires two input images with the noise or grain.

The researchers applied basic statistical reasoning to signal reconstruction by machine learning—learning to map corrupted observations to clean signals—with a simple and powerful conclusion: under certain common circumstances, it is possible to learn to restore signals without ever observing clean ones, at performance close or equal to training using clean exemplars. The team showed applications in photographic noise removal, denoising of synthetic Monte Carlo images, and reconstruction of MRI scans from under-sampled inputs, all based on only observing corrupted data.

Without ever being shown what a noise-free image looks like, the AI can remove artifacts, noise, grain, and automatically enhance photos.

The work was developed by Jaakko Lehtinen, Jacob Munkberg, Jon Hasselgren, Samuli Laine, Tero Karras, Miika Aittala, and Timo Aila from Nvidia, Aalto University, and MIT, and was presented at the International Conference on Machine Learning in Stockholm, Sweden.

The researchers used eight Nvidia Tesla P100 GPUs with the cuDNN-accelerated TensorFlow deep learning framework, and a 40-core Xeon CPU. The team trained their system on 50,000 images in the ImageNet validation set.

They have shown that simple statistical arguments lead to surprising new capabilities in learned signal recovery and that it is possible to recover signals under complex corruptions without observing clean signals, at performance levels equal or close to using clean target data.

There are several real-world situations where obtaining clean training data is difficult: low-light photography (e.g., astronomical imaging), physically based image synthesis, and magnetic resonance imaging. The researcher’s proof-of-concept demonstrations point the way to significant potential benefits in these applications by removing the need for potentially strenuous collection of clean data. Of course, there is no free lunch—the machine cannot learn to pick up features that are not there in the input data—but this applies equally to training with clean targets.

The techniques used are a further elaboration on the idea of a GAN described above. An AmbientGAN as described by Ashish Bora in 2018 allows principled training of generative adversarial networks (Goodfellow et al., 2014) using corrupted observations. In stark contrast to the researchers’ approach, the others needed a perfect, explicit computational model of the corruption; the researchers’ requirement of the knowledge of the appropriate statistical summary (mean, median) is significantly less limiting. They found combining ideas along both paths intriguing.

Summary

We’ll be seeing further examples of this work at Siggraph. As mentioned at the top of this article, as these machines get smarter they can be significantly more efficient, and their work can be used to create programs and algorithms to improve the work we do and our lousy photographs.

Although Nvidia is helping to create public awareness of this work and the technology behind it, it does not belong to Nvidia alone. However, the company is finding practical ways to put research to work. Perhaps because it is Siggraph season we are seeing an intense interest in imaging tasks but you can expect to see quite a bit of this work going into the automotive field as well because that is another particular interest of Nvidia.

The prize-winning talk at the CVPR was “Taskonomy: Disentangling Task Transfer Learning” by Amir R. Zamir, Alexander Sax, William Shen, Leonidas J. Guibas, Jitendra Malik, and Silvio Savarese. The premise of the paper was to accept the sort of obvious proposal that imaging tasks are interrelated and also the logical corollary that works done to solve one task could be used to solve a related task—but not always. The work described in this paper was a description of ways to determine where the relationships between tasks actually are and how the work can be used and augmented to accomplish new tasks more efficiently. Amir Zamir presented the paper at CVPR. His presentation shows how practical this work can be for future AI explorations.

The paper describes another way machines are getting smarter and points the way to letting the machines help us get smarter. We’re fast getting to a point where this work is going to show up in our lives and increasingly we won’t need a supercomputer to do it. It’s a good thing because those supercomputers are going to be busy finding new problems.