Some of the best-looking models, such as those you see spinning at warp speed in hardware demos, may not tax the system realistically enough for benchmarks.

by Bob Cramblitt

Models, models, everywhere, but few suitable for a good benchmark.

That statement pretty much sums up the conundrum faced by the SPEC Graphics and Workstation Performance Group (SPEC/GWPG), the international benchmarking organization.

Do a search for 3D models and all kinds of options, free and paid, crop up. But very few of these models are representative of work done in the real world, and if they are, they often cannot be distributed freely to the public.

“We work closely with ISVs to get models that best represent customers’ work, but that can sometimes be difficult due to proprietary concerns,” says Ashley Cowart, SPEC/GWPG chair.

“In the past, we’ve had a few benefactors who have donated large and complex models that we can use in our benchmarks and sometimes we’ve paid for good models, but they are still hard to find. We’re constantly looking for new model sources.”

Looks are deceiving

Some of the best-looking models, those you see spinning at warp speed in demos, don’t represent models used in real work.

“A good graphics benchmark model includes all the detail, texture, rigging, parts, and interactions that an engineer or artist uses to do their job,” says Allen Jensen, vice chair of the SPEC Application Performance Characterization (SPECapc) subcommittee.

“Some models used in benchmarks are deceiving,” he says. “There might be an outer shell of a car that looks like the real thing, but the model uses huge surfaces with few attribute changes and doesn’t include all the smaller bits that sit inside the body of the car. If you tuned hardware for that car, you would end up focusing too much on large surfaces without considering all the other factors that have an impact on performance.”

Attributes are critical to performance because they define the look and position of every part that goes into a model. That information is used by the GPU to calculate reflections and shadows. Attributes also include matrices, which define a part’s position as the model is rotated, zoomed in on, or animated within an environment.

“In a fake CAD model,” says Jensen, “there are multiple bolts prepositioned within the model so only a single matrix change is required to rotate the car. In a real CAD model, lots of individual matrix changes are needed to reposition functional parts. Matrix changes introduce bubbles into what would otherwise be a smoothly flowing graphics pipeline. These bubbles can dominate the work done by highly parallelized GPUs. It’s not the pretty picture the vendor might want to paint, but it’s a performance reality, and that’s what we want to capture when we benchmark.”
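Jensen's contrast between a prepositioned model and a real assembly can be illustrated with a toy count of matrix changes. This is only a sketch under stated assumptions: the draw list, the `transform_id` field, and the `count_matrix_changes` helper are hypothetical names made up for illustration, not part of any SPEC benchmark or graphics API.

```python
from typing import List, Tuple

# Hypothetical draw record: (part_name, transform_id).
Draw = Tuple[str, int]


def count_matrix_changes(draws: List[Draw]) -> int:
    """Count how often the active transform changes across a draw list."""
    changes = 0
    current = None
    for _, transform_id in draws:
        if transform_id != current:
            changes += 1
            current = transform_id
    return changes


# "Fake" model: all bolts are prepositioned and share one transform.
baked_model = [(f"bolt_{i}", 0) for i in range(100)]

# More realistic assembly: each functional part carries its own transform.
assembly_model = [(f"bolt_{i}", i) for i in range(100)]

print(count_matrix_changes(baked_model))     # 1
print(count_matrix_changes(assembly_model))  # 100
```

The geometry is the same in both lists; only the number of matrix changes differs, and that count is the thing Jensen says a good benchmark model has to capture.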

Three marks of a good model

A good benchmark model meets three major criteria, according to Ross Cunniff, chair of the SPEC Graphics Performance Characterization (SPECgpc) subcommittee.

- It represents real end-user work. A CAD model within a benchmark workload should represent something that can be built and used; a media and entertainment (M+E) model should be an asset that could be used in a game or movie production; a seismic model should contain actual ground density data used to explore for oil; and a medical model should use real scan data as the basis for volume rendering as it’s done in an actual application.

- It represents real end-user complexity. A CAD model should be a working part or assembly, with all the appropriate features and attributes; an M+E model should be a full scene, with animation, texture and shader components; a seismic model should be the actual size used by exploration geologists; and a medical model should include both slice-based and ray-casting rendering modes and all the variations of real-world voxel state changes.

- It is in the native modeling language of the application. Creo, for example, uses parametric operations, while other programs use NURBS patches. Very few CAD applications process the piles of primitive triangles that sometimes pass for a CAD performance test.

Memory and frame rate

Two other factors to consider are the amount of video memory the model uses and the average frame rate.

The model should have a memory footprint that is representative of a real-world model for a particular application. As for the frame rate, if it is 0.5 fps or lower, updating the model display is slow and halting, creating a situation that an engineer or artist would not tolerate in the real world. At the other end of the spectrum, if the frame rate is greater than 300 fps on a high-end GPU, you are probably not measuring the performance that matters, according to Jensen.

“Once the frame rate gets too high, performance boils down to how fast you can swap buffers and clear frames within the Microsoft OS. What the user cares about is the stuff in the middle, the model and all the bits associated with it. If you have a good model that measures the middle part, you can improve overall performance and deliver a better experience for an even bigger model.”
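The frame-rate guidance above can be expressed as a simple sanity check. The sketch below assumes you already have per-frame render times in seconds. The function name, the labels, and the treatment of the 0.5 fps and 300 fps figures as hard cutoffs are illustrative assumptions, not a SPEC/GWPG rule.

```python
from typing import Sequence

LOW_FPS = 0.5     # at or below this, display updates are slow and halting
HIGH_FPS = 300.0  # above this, buffer swaps and frame clears tend to dominate


def classify_frame_rate(frame_times_s: Sequence[float]) -> str:
    """Label a run by its average frame rate (frames / total seconds)."""
    if not frame_times_s:
        raise ValueError("need at least one frame time")
    total_time = sum(frame_times_s)
    if total_time <= 0:
        raise ValueError("frame times must sum to a positive duration")
    avg_fps = len(frame_times_s) / total_time
    if avg_fps <= LOW_FPS:
        return "too_slow"
    if avg_fps > HIGH_FPS:
        return "too_fast"
    return "representative"


print(classify_frame_rate([4.0, 4.0, 4.0]))  # too_slow (0.25 fps)
print(classify_frame_rate([0.02] * 10))      # representative (about 50 fps)
print(classify_frame_rate([0.001] * 10))     # too_fast (about 1000 fps)
```

A check like this only flags runs that fall outside the useful middle range. It says nothing about whether the model itself is realistic, which is the harder question the article is concerned with.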

One model fits all?

Although it is tempting to benchmark graphics performance by selecting a few models that represent a range of those used within CAD or M+E applications, that approach is rife with problems.

The universal model approach doesn’t work because each application processes model data in its own way. The differences include operations such as external referencing, modeling, preparation for downstream work, and threading, as well as the final destination for the model, whether that is building an engine assembly or maximizing realtime performance for an online game.

“These types of details are crucial for accurately measuring performance based on the real work performed by users,” says Jensen. “As benchmark developers, the easy answer is usually not a good one. Our goal is to measure performance in a way that will give users information they need to run their applications faster and smarter.”

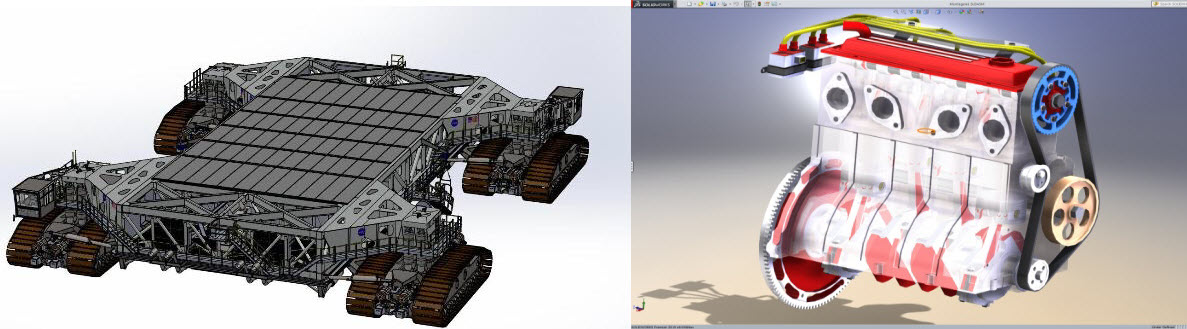

Even within the same application, model sizes vary according to the end product. A CAD model of an engine within the SPECapc for Solidworks benchmark, for example, takes up 392 MB in memory, while a complete NASA Crawler Transporter model uses a whopping 2.3 GB of memory. Likewise, a realtime game model that moves quickly across the screen requires very little of the resolution or detail needed for a realistic movie model that is seen in a stationary set-up for more than a few seconds.

Call for models

The scarcity of real-world models not only leads to benchmarks that poorly reflect what users deal with in their everyday work, but can also be an obstacle to updating good benchmarks.

An organization such as SPEC/GWPG will not pursue an application benchmark or a specific workload without representative models, so a shortage can slow development or stop it before it starts.

“Having representational models is mandatory for us,” says Cowart, the SPEC/GWPG chair. “That’s why we are always on the hunt for native application models of varying sizes and complexity that enable us to build a relevant benchmark. If you have a realistic model for one of the professional applications we benchmark and that model can be distributed freely in the public domain, we’d love to hear about it.”

Anyone interested in submitting models for use in graphics and workstation benchmarks (SPECapc, SPECviewperf, and/or SPECworkstation) can contact SPEC/GWPG at [email protected]. Professionals who donate models will be making a major contribution to the performance evaluation community and will be recognized on the SPEC website and other communications outlets.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies.