Game studios are using real-time lighting to improve realism, but it will take a graphics ecosystem to take VR content to the next level.

A driving goal for the visual motion arts (video, cinema, games) has always been the creation of an immersive experience. Compelling stories can suspend disbelief, and so can compelling images. Virtual reality, the newest wave of graphics technology, adds more to the creative toolbox for building that experience.

In 2016 the first truly comfortable VR headsets arrived. Frame rates and graphical fidelity are now high enough to help overcome the nausea factor for some users. New technology always creates new challenges, and practical VR headsets are no exception.

In a VR game, the user can “roam” freely in a much larger space than in a game played on a flat screen. The desire to roam is also higher in VR: a player at a flat screen can’t twist their neck to see what might be behind them, but a player in a headset can. That freedom means the game maker has to work harder to guide the player with certainty.

Game developers want to save memory and processing power by providing the most realistic experience only where it is needed. But having less control over the user experience makes game environment design more expensive in terms of memory, storage, processing, and creative overhead.

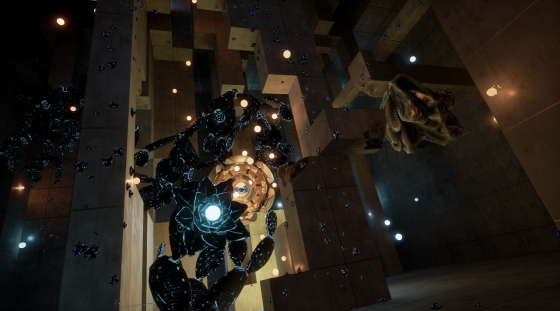

One technology that can help is real-time lighting. Traditionally in game development, a scene is created and then given “baked in” lighting. Background areas the user is not likely to view closely can be given less detail and less physically accurate lighting. Real-time lighting saves on the baking process, rendering scenes on an as-needed basis.
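The difference between the two approaches can be sketched in a few lines. This is an illustrative toy, not how any particular engine or Enlighten itself works: it assumes simple Lambertian (diffuse) shading, and the scene data and the names `lightmap`, `shade_baked`, and `shade_realtime` are hypothetical.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def lambert(normal, light_dir):
    """Diffuse intensity: clamped dot product of two unit vectors."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


# Hypothetical scene: unit normals of three surface points.
normals = [(0.0, 1.0, 0.0), (1.0, 0.0, 0.0), normalize((1.0, 1.0, 0.0))]

# Baked lighting: evaluated once, ahead of time, for a fixed light direction.
baked_light = normalize((0.0, 1.0, 0.0))
lightmap = [lambert(n, baked_light) for n in normals]


def shade_baked(index):
    """Cheap per-frame lookup; cannot react if the light moves later."""
    return lightmap[index]


def shade_realtime(index, light_dir):
    """Recomputed every frame, so it follows a moving light at a per-frame cost."""
    return lambert(normals[index], normalize(light_dir))
```

With the light moved to point along the x axis, `shade_realtime(1, (1.0, 0.0, 0.0))` brightens the second surface point, while `shade_baked(1)` still returns the value stored at bake time.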

“It is possible to deploy dynamic light effects without impacting frame rates,” says Chris Porthouse, general manager of Enlighten, an ARM company. “Virtual reality experiences will be all the richer if developers design with this in mind.” Even this technique has limits, especially because a VR headset needs a new frame every 11 milliseconds, while a flat-screen game has the luxury of 33 milliseconds per refresh. “When you have only 11 milliseconds you have to make some really hard choices,” says Max Planck, technical founder at Oculus Story Studio. “[It is a trade-off] between the number of triangles you put on a screen and the lighting model you employ. It’s a trade-off with everything we do.”
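The two time budgets follow from simple arithmetic on refresh rate. The sketch below assumes, though the article does not say so, that the 11-millisecond figure corresponds to a headset refreshing at 90 Hz and the 33-millisecond figure to a game running at 30 frames per second; the function name `frame_budget_ms` is made up for illustration.

```python
def frame_budget_ms(refresh_hz: float) -> float:
    """Return the time available to render one frame, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


# Assumed rates, not stated in the article.
VR_HZ = 90.0    # typical 2016-era VR headset refresh rate
FLAT_HZ = 30.0  # flat-screen game running at 30 frames per second

vr_budget = frame_budget_ms(VR_HZ)
flat_budget = frame_budget_ms(FLAT_HZ)

print(f"VR frame budget:          {vr_budget:.1f} ms")
print(f"Flat-screen frame budget: {flat_budget:.1f} ms")
print(f"VR share of flat-screen budget: {vr_budget / flat_budget:.0%}")
```

Under these assumptions the VR renderer gets roughly a third of the time per frame, which is the squeeze behind the trade-offs between triangle count and lighting model.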

“The last time I checked, the real world was lit with effectively real-time lighting,” says Jamie Holding, a senior programmer at VR studio nDreams. “Dynamic lighting is incredibly important; however, for the most part you have to cheat, otherwise it simply isn’t achievable within VR.”

“At the moment we have game engines that are purpose-built for 2D,” notes Steven Cannavan, also a senior programmer at nDreams. “There are lots of techniques being used to reduce latency. Our GPUs today are all focused on throughput that has nothing to do with latency. Now we have to be much more specific and tell the GPU that it has to have certain tasks completed within a short time frame.” This transition to smarter rendering will require new game engines, Cannavan adds: “For the first few years at least, we’re going to be creating VR experiences using hybrid game engines and just muddling through.”

What do we think?

ARM is betting big on its Enlighten real-time lighting technology as a forerunner in making VR more immersive, but it will take the entire graphics ecosystem working in tandem to bring VR games and entertainment to the next level of immersive realism.