SPECwpc is likely to find wide use testing workstations.

By Kathleen Maher

There is no more contentious battlefield in the high-tech landscape than benchmarks. It was ever true for PCs and workstations, and the fight has moved on to mobile, but what is everyone fighting over? Bragging rights, mostly. Performance at gaming or on a particular game, supposedly, though in most cases no sentient human can tell the difference without the aid of a benchmark. In almost all cases it can be difficult to tell which components in the device are having the most influence. The problem for workstations can be a little more straightforward than for general-purpose computers or mobile devices, simply because workstation users often have very specific requirements. They need to know how well a particular machine can handle certain tasks and particular applications. So SPEC (Standard Performance Evaluation Corporation), a non-profit industry organization, was formed so hardware OEMs, graphics companies, software companies, and customers could characterize and compare performance on a level playing field.

Introducing SPECwpc

Now, to kick off 2014, SPEC has introduced two new benchmarks. One, SPECviewperf 12, is a logical evolutionary step from SPECviewperf 11 (and all the previous versions). The other, SPECwpc, is designed to evaluate how well workstations perform in the most popular professional-computing verticals. Jon Peddie Research is going to be using these benchmarks in its evaluation of workstations for its Workstation Report, and in fact, Alex Herrera has already put several machines through their paces. You can see some of his results in this hardware review published at GfxSpeak and in TechWatch.

Over the past couple of years, JPR has been happy to work with the SPEC group to discuss ways to encourage the use of its benchmarks. In the past, benchmarks from SPEC/GWPG (the Graphics and Workstation Performance Group) have focused either on graphics functionality alone (SPECviewperf) or on specific applications that required licenses (SPECapc). SPECwpc’s whole-system testing gives a clearer idea of how the fully configured system, including graphics, memory, OS, I/O, and CPU, will perform for a wide range of applications. And it does not require licensed applications in order to run.

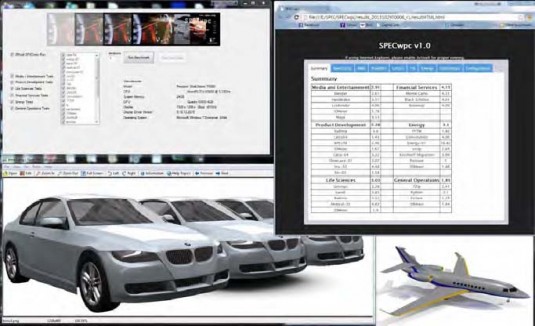

It’s got a clean, easy run-time interface, and it performs the same automatic extraction of the system configuration as SPECviewperf 12. Results come in simple, organized, easy-to-process HTML and XML. SPEC even provides a converter to translate to XLS.

SPEC says SPECwpc is the “first benchmark to measure all key aspects of workstation performance based on diverse professional applications.” It’s designed to be comprehensive and, as such, comprises six separate suites, one for each of six verticals: Media and Entertainment, Product Development, Energy, Life Sciences, Financial Services, and General Operations. Each suite includes anywhere from five to nine workloads relevant to that specific space.

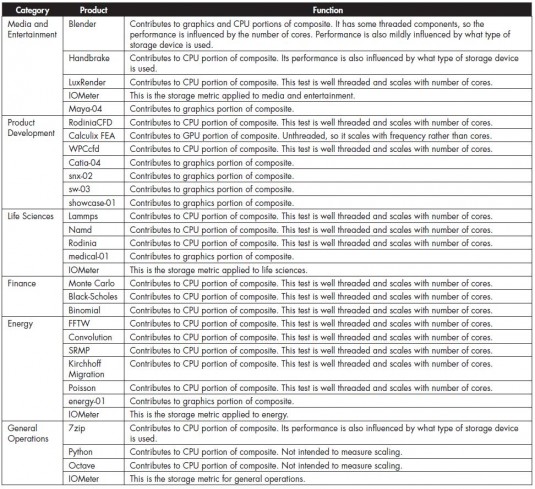

The chart below lists the tests included in SPECwpc and highlights the emphasis of each test. Primarily, testing looks at CPU performance. Most applications lean more heavily on the CPU than on the GPU, but there is strong graphics representation in the viewsets brought over from SPECviewperf 12. These viewsets represent the graphics pipelines for Autodesk Maya, Dassault Systèmes Catia, Siemens NX, Dassault Systèmes SolidWorks, and Autodesk Showcase, along with two new viewsets based on energy and medical applications.

SPECwpc members (AMD, Dell, Fujitsu, HP, Intel, Lenovo, NEC, and Nvidia) have enthusiastically participated in the work of SPEC to help their customers understand the differences between system configurations and to appreciate the value of a workstation over an enthusiast PC. For instance, in the latest round of testing, Herrera was able to see where a solid-state drive made a significant contribution and to gauge price-performance trade-offs. Over the very chaotic past two years in the computer industry, workstation sales have remained consistent while PC sales have fluctuated wildly as customers experiment with new form factors.

When it comes to workstations, typical customers already know they want a workstation-class product, and they’re just trying to understand where to put their money as far as performance goes. As time goes by, SPECwpc will build up more data because more people are going to be able to perform these tests across applications, which will help companies, IT managers, and individual customers make choices and spot trends. It’s also going to help OEMs build machines that meet the demands of their customers. That’s why the participants in SPEC have contributed their work so tirelessly and generously.

SPECwpc V1.0 is available under a two-tiered pricing structure. It is free for non-commercial users and $5,000 for commercial entities. SPEC defines commercial entities as organizations using the benchmarks for the purpose of marketing, developing, testing, consulting for and/or selling computers, computer services, graphics devices, drivers, or other systems in the computer marketplace.

There’s an interesting collateral benefit that’s likely to follow, too. Right now the loud and contentious marketing around products used for gaming has contributed to some confusion about where to truly get the most bang for the buck. The SPECwpc benchmarks are more straightforward. We expect to see the hard-core benchmarkers try out SPECwpc alongside their usual menu of game and productivity benchmarks, and as a result, we’ll see more nuanced results for the different machines out there. A larger pool of benchmark information can only add to the understanding of the interplay of CPU, GPU, types of memory, I/O, hard drive, etc. Testing results are available at the SPEC website: http://www.spec.org/gwpg/wpc.static/wpcv1info.html