Users can run synthetic benchmarks without having the actual application installed and still get rigorous results.

By Bob Cramblitt

In ancient times when I was in college, there was a faddish rash of imitation leather jackets. Some looked authentic and others looked like a baggie with a zipper and pockets. It led to the inevitable question whenever anyone sported a new jacket: “Is that leather or pleather?”

A similar question pops up whenever benchmarks are discussed: “Is that a real or synthetic benchmark?”

The question was once a big concern.

In the early days of computer graphics, vendors would use all kinds of apples-to-oranges measurements to market their performance. Pixels per second was a popular one. But, what size were those pixels? How complex were they? What resolution are we talking about?

Performance results from these types of tests might have a correlation to how a graphics workstation would perform under a real application-based workload. Or they might not.

Keeping it real

Today, there are benchmarks that are real, benchmarks that are synthetic, and benchmarks somewhere in between. The question of real vs. synthetic isn’t black and white.

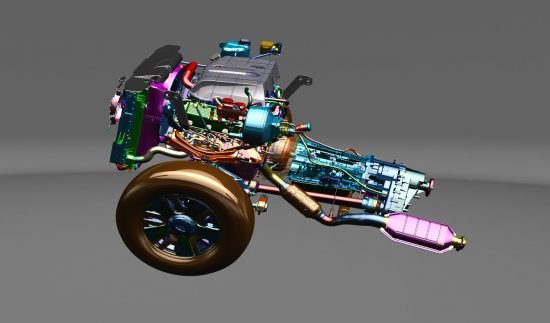

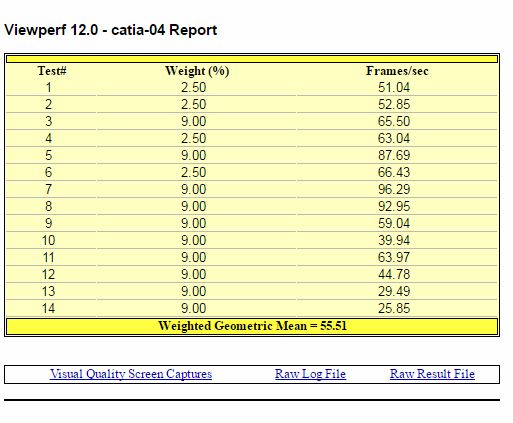

The graphics performance benchmark SPECviewperf and the workstation benchmark SPECwpc are prime examples of synthetic benchmarks that come very close to being real but don’t require users to have the actual application installed. They are simpler to install and run, yet still provide much of the rigor of benchmarks such as those from SPECapc, which require the actual application to be installed and running.

When it comes to synthetic benchmarks, it’s all in the design. SPEC graphics and workstation benchmarks use actual applications or traces from actual applications. The models come from real applications and are of the same size and complexity. As much as possible, test weightings are derived from the way users exercise the application in their day-to-day work.
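To make the idea of usage-derived test weightings concrete, here is a minimal sketch of how per-test results might be rolled up into one composite score. It assumes a weighted geometric mean, which is a common way to combine benchmark results; the article does not state the formula, and the function name, test names, frame rates and weights below are all hypothetical.

```python
import math


def weighted_composite(scores, weights):
    """Combine per-test scores into one number with a weighted geometric mean.

    scores  -- per-test results (e.g. frames per second), all > 0
    weights -- relative importance of each test, e.g. the share of time
               users spend in that mode of the application
    """
    if not scores or len(scores) != len(weights):
        raise ValueError("scores and weights must be non-empty and equal length")
    if any(s <= 0 for s in scores):
        raise ValueError("scores must be positive")
    if any(w < 0 for w in weights):
        raise ValueError("weights must be non-negative")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    # Average the logs, weighted, then exponentiate back.
    log_sum = sum(w * math.log(s) for s, w in zip(scores, weights))
    return math.exp(log_sum / total_weight)


# Hypothetical example: three tests traced from one application.
tests = {
    "shaded": (120.0, 0.5),      # (frames per second, weight)
    "wireframe": (300.0, 0.3),
    "shaded_edges": (80.0, 0.2),
}
fps = [v[0] for v in tests.values()]
wts = [v[1] for v in tests.values()]
print(f"composite: {weighted_composite(fps, wts):.1f}")
```

The weights are what tie a score like this back to real use: a test that mirrors what users do most of the day moves the composite more than one they rarely touch.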

Synthetic isn’t good or bad

Like clothing material, not all synthetic benchmarks are created equal. The ultimate judgment is based not only on looking like the real thing, but on performing like the real thing: improvements in benchmark results should yield the same type of improvements when running an application in the real world.

As for those jackets I wore in college, they were the real deal, man. But I’ve since bought jackets made with synthetic material. They look nice and perform as well as or better than the real thing.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies. To find out more about graphics and workstation benchmarking, visit the SPEC/GWPG website, subscribe to the SPEC/GWPG enewsletter or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.