The varied aspects of GPU performance.

By Bob Cramblitt

It seems to be human nature to place things into large buckets that fit our preconceptions or simplify our day-to-day lives. But it very rarely works. That one-size-fits-all sweater ends up fitting nobody the way it should, and the food in that everything-for-everybody buffet just isn’t the quality you want when dining out.

So it is with benchmarks that try to categorize performance under a single metric or use simple models that don’t represent work done in the real world. But the alternative isn’t easy.

To that point, Jon Konieczny, vice chair of the SPEC Graphics Performance Characterization (SPECgpc) subcommittee and an AMD representative in the SPEC Graphics and Workstation Performance Group (SPEC/GWPG), said, “Applications behave very differently, so producing a benchmark that measures a variety of application behaviors and runs in a reasonable amount of time presents difficulties.”

“Even within a given application, different models and modes can produce very different GPU behavior, so ensuring sufficient test coverage is a key to producing a comprehensive performance picture.”

Embracing data diversity

Everybody wants simplicity, but in workstation performance benchmarking that often comes at the cost of accurately measuring performance as it pertains to specific applications. An average frame rate, for example, is not indicative of how a specific application, data set, or rendering mode performs.

“While measuring average frame rates might be good for getting a general sense of performance, it’s the variations by application, usage model, data set, and rendering mode that provide much more informative detail,” says Alex Shows, chair of the SPEC Workstation Performance Characterization (SPECwpc) subcommittee and a Dell representative to SPEC/GWPG.
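The arithmetic behind that point can be shown with a minimal Python sketch. The GPU names, workload names, and frame rates below are hypothetical values chosen for illustration only; they are not measurements from any SPEC benchmark or real hardware. The sketch shows two made-up GPUs that share the same average frame rate yet lead on different workloads.

```python
from statistics import mean

# Hypothetical frame rates (frames per second), invented for illustration.
# They are NOT results from any real GPU or SPEC benchmark.
frame_rates = {
    "gpu_a": {"dcc_shaded": 120.0, "cad_wireframe": 40.0, "volume_render": 50.0},
    "gpu_b": {"dcc_shaded": 60.0, "cad_wireframe": 90.0, "volume_render": 60.0},
}


def average_fps(results):
    """Collapse one GPU's per-workload results into a single average."""
    return mean(results.values())


def best_per_workload(all_results):
    """Return, for each workload, the name of the GPU with the highest frame rate."""
    workloads = next(iter(all_results.values())).keys()
    return {
        workload: max(all_results, key=lambda gpu: all_results[gpu][workload])
        for workload in workloads
    }


# The single-number view: both hypothetical GPUs look identical.
averages = {gpu: average_fps(results) for gpu, results in frame_rates.items()}

# The per-workload view: the leader changes with the type of work.
winners = best_per_workload(frame_rates)

print(averages)
print(winners)
```

In this made-up case, a buyer looking only at the averages would see no difference between the two GPUs, while a user working mostly in one rendering mode would see a clear one.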

Different as apples and pineapples

The SPEC/GWPG approach measures performance based on applications, whether the benchmarks run on top of actual applications, as SPECapc benchmarks do, or use traces of applications, as SPECviewperf and SPECworkstation do. This reflects the fact that GPU use varies significantly among industries according to the type of data represented.

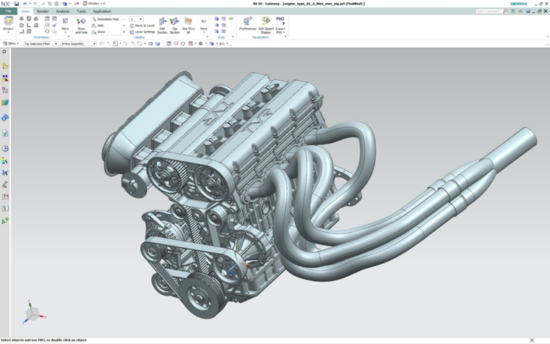

“A user working in a digital content creation (DCC) application such as Autodesk Maya might create a car model that looks similar to one from a CAD/CAM application, but to the GPU these two types of models are as different as apples and pineapples,” says Shows.

The DCC car will likely contain much simpler geometry to enable interactivity, but it will incorporate a wide array of sophisticated shaders, textures, and lighting to produce a photorealistic rendering. In contrast, the CAD/CAM car will likely have far more complex geometry, as it is important to portray the exact dimensions, thicknesses, materials, and other mechanical attributes of an actual working vehicle.

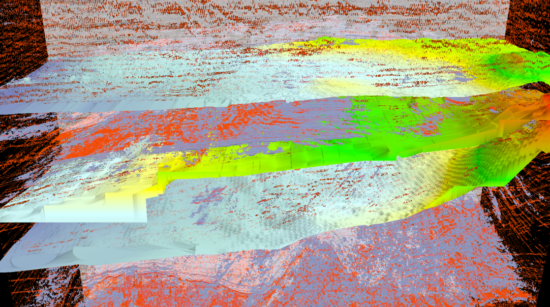

Volume visualization, as used in the energy (oil & gas) and medical viewsets within the SPECviewperf and SPECworkstation benchmarks, poses the unique challenge of rendering an entire volume of data on the GPU. This is distinct from how GPU computation is performed in areas such as financial modeling and AI.

“Every model, every mode, is different,” says Allen Jensen from Nvidia, who serves as vice chair for both SPEC/GWPG and SPECapc. “Performance can be affected by primitive length, triangle size, shaders used, and geometric complexity, among other aspects. Different attributes are important to different sets of users.”

The challenge, according to SPEC/GWPG representatives, is creating a workload whose performance is as comparable as possible across different types of GPUs, as not all languages are supported in the same way within applications. That’s where having a committee representing a range of industry vendors is valuable.

“Picking a single, unified workload for a benchmark can sometimes be problematic,” says Konieczny, “but one of the advantages of SPEC/GWPG is that no single vendor is selecting what models and tests go into the benchmark.”

CPU versus GPU

Another ongoing challenge is the ever-evolving difference in what is processed on the CPU versus the GPU.

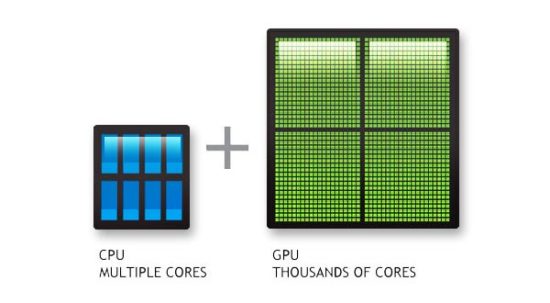

Alex Shows compares the CPU to a rally car that has to perform in a wide variety of conditions, while the GPU is like a drag racer designed for speed on a straight, paved track.

“The rally car can race in virtually any environment, but it’s not going to outperform that dragster roaring down a straight track.”

Allen Jensen sees CPU performance as more static within a particular version of an application, while GPU performance is a constantly moving target.

“Application code is compiled before delivery to the customer, so CPU performance is sometimes frozen in time until a new version of the application is compiled. The application’s use of the GPU is likewise frozen at delivery, but because the application writes to an API such as OpenGL or D3D, there is more room for innovation and improvement within the application cycle.”

The restless GPU

Given the inexorable march toward better performance through innovative technologies and new ways of configuring drivers for different applications, the traditional roles of CPUs and GPUs are continuously being redefined by new developments, such as specialized computational blocks for AI and the use of GPUs for non-graphics applications.

Another factor cited by Konieczny is the rise of virtualization, which alters how applications are being run, particularly when GPUs are shared among multiple users.

“As these new GPU workloads make their way into the workstation market, SPEC/GWPG will need to design benchmarks to measure them,” he says, “particularly targeting the exact way specific applications are applying those workloads.”

To find out more about graphics and workstation benchmarking and the availability of free benchmarks, visit the SPEC/GWPG website, subscribe to the SPEC/GWPG enewsletter, or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.

Bob Cramblitt is communications director for SPEC. He writes frequently about performance issues and digital design, engineering and manufacturing technologies.