The true benefit of a hardware upgrade can be difficult to quantify using standard benchmarks and specs, but underestimating the benefits could be costly.

By Brad Holtz, President & CEO, Cyon Research

After four years, I recently upgraded my desktop. What has been most notable to me is its impact on my interaction with my clients, resulting in a top-line revenue increase. This latest upgrade has enabled me to engage with my customers in real time and get them the answers they need while we are on the phone together. It has resulted in surprised and delighted customers, and it has resulted in new work. At the core, the benefit of this latest upgrade is my ability to do things that were previously not possible. It’s not that the computer is a lot faster – it’s that the speed has crossed some imaginary barrier that has greatly expanded what I can practically do. For most of the things I do on a daily basis, the speed is nice, but has no impact. How fast Microsoft Word or a browser runs has long since become irrelevant – it’s fast enough that I don’t care anymore.

But when I am on a call with a client, every second counts. Because of this, there are some things that are just off limits in a call. In my case, that may mean attempting to slice data in a way that might take too long to process. In your case, you might not be able to run an analysis of a design alternative – because neither of us can afford the dead-air time with our client while waiting for our computers to come back with results.

Domains of Improvement

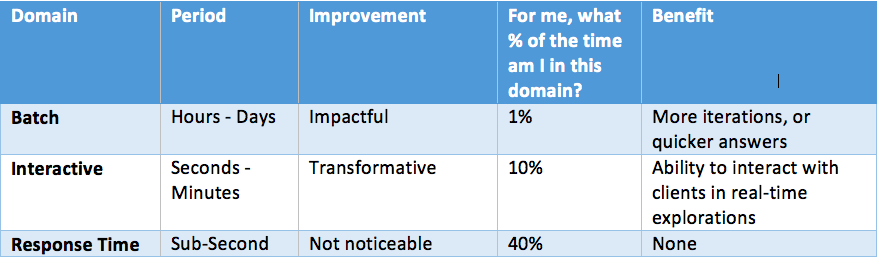

I like to think about the impact of hardware speed in three separate time domains: Batch, Interactive, and Response Time.

Depending on what you do, significant improvements in each of these domains may be notable, impactful, or transformative.

The Batch domain is dominated by processes that take a long time, typically hours or days. Improvements in this domain may be impactful without necessarily hitting your bottom line directly. It depends on how much of your time is spent waiting for results. For me, it’s not that often. For you, it might be more. The real question is this: is what you are doing in the Batch domain on your critical path? If it is, you can justify spending a great deal for small improvements. Otherwise, you might benefit more from the ability to run more iterations in the same amount of time, or from being able to run larger or more complex analyses in the same amount of time. In short, the benefits of speeding up batch processes may be real, but they can be difficult to quantify.

Most of us no longer need to pay attention to the Response Time domain, because for the past decade or so our computers have been fast enough for most of what we do that response time is no longer an issue.

That was not always the case. Slow response time can have a dramatic impact on our ability to achieve a flow state of work, by interrupting our train of thought. In 1982, IBM released a paper by Doherty and Thadiani on the economic value of rapid response time (http://www.vm.ibm.com/devpages/jelliott/evrrt.html). The net of the paper is that when a computer’s response time drops below a critical figure (around half a second), the computer stops getting in the way of a user’s thought process, allowing significantly higher productivity. In other words, the work can “flow.” In the early days of computing, even word processors were slow enough that the response time would get in our way. With today’s processors, it’s rare for this to be an issue other than for the most demanding applications.

Interactive processes sit between the Batch and Response Time domains. They take long enough to be measured with a second hand, but are short enough, and occur often enough, that switching tasks while you wait for the system to respond doesn’t make sense. When we run into performance issues that affect us in the Interactive domain, we have a strong tendency to find a way to avoid the activity. More often than not, entire categories of interactions are never even considered. This is a limitation we aren’t even aware of most of the time. It is only after we’ve experienced a significant improvement in speed that we begin to explore these areas. Prior to Windows 8, would you ever have considered rebooting your computer while on a call with a client? You just wouldn’t. Now, with faster computers and reworked software, you might.
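To make the three domains concrete, here is a minimal sketch that sorts a task into a domain by how long it takes. The half-second limit comes from the response-time figure cited above; the one-hour cutoff for Batch is purely an assumption for illustration (the article only says "typically hours or days"), and the names `Domain`, `classify`, `RESPONSE_LIMIT_S`, and `BATCH_START_S` are hypothetical.

```python
from enum import Enum


class Domain(Enum):
    RESPONSE_TIME = "Response Time"
    INTERACTIVE = "Interactive"
    BATCH = "Batch"


# "Around half a second" is the figure quoted from the IBM paper.
RESPONSE_LIMIT_S = 0.5
# Assumption for illustration only: treat anything of an hour or more as Batch.
BATCH_START_S = 60 * 60


def classify(duration_s: float) -> Domain:
    """Place a task in a time domain based on its duration in seconds."""
    if duration_s < 0:
        raise ValueError("duration must be non-negative")
    if duration_s <= RESPONSE_LIMIT_S:
        return Domain.RESPONSE_TIME
    if duration_s < BATCH_START_S:
        return Domain.INTERACTIVE
    return Domain.BATCH


if __name__ == "__main__":
    # A keystroke echo, a two-minute query, and a four-hour calculation.
    for seconds in (0.1, 2 * 60, 4 * 60 * 60):
        print(f"{seconds:>8.1f} s -> {classify(seconds).value}")
```

Where exactly the Interactive domain ends is a judgment call that depends on the work. The useful question is whether a task is short enough that you would wait for it rather than switch to something else.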

It is in this domain of interactive processes that I have been most surprised by the impact my latest hardware refresh has had on my work. I had expected improvements in the first domain, but for my own work, not much of my time is spent there. Even with a 4x+ improvement, it’s hard to justify the expense when you don’t do something that often.

Client Interaction

I run Cyon Research. We are an industry analyst firm with a think-tank business model. That means that we fund our own research, which our customers (mostly CAD industry software vendors) subscribe to. (We also run COFES.) Part of our engagement with our customers is diving into our data with them and exploring it in real time.

Our research data sits in a Microsoft SQL Server database on my workstation, and I explore that data with Tableau Software while on one-on-one calls with our clients, via screen sharing. The data is quite complex and we often want to do deep slicing and dicing of the data. Tableau makes that easy, for the most part, particularly if the worksheets we use have been prepared in advance. In the past, if a client wanted to explore something that was more challenging to prepare, it was just not possible to do on a call. When exploring correlations in the data, the difference between something that takes seven minutes to return a result and something that takes less than two minutes is huge. Now, we can actively explore correlations in the data while on the call. It no longer requires an offline effort. Nothing to schedule. The result is happier clients who are more likely to renew their subscription to our data.

Survey Results

We just published our annual research report which, among other things, looks at hardware refresh rates, hardware refresh strategies, and perceptions of hardware improvements. In reviewing the data and the comments we received, I was struck by the observation that all of the data, strategies, and comments about hardware (refresh rates, strategies, and potential for improvement) focused on the bottom line.

Our annual research report includes data from well over 600 respondents from more than 50 countries. On average, they refresh their desktops every 2.8 years; slightly more than half hold on to their hardware for designers, architects, and engineers for at least three years before refreshing. When we asked about the strategies behind their refresh policy, ALL of the responses were related to bottom-line issues. Some were focused on why they were not refreshing, citing strategies like cost avoidance and cash flow management; others focused on what it would take to get them to upgrade. This latter group was split into those focused on the pain of not upgrading (with strategies like failure avoidance, compatibility, and obsolescence), and a much smaller group focused on the gain from upgrading. Strategies mentioned by those focused on gain primarily related to speed and time savings.

All of the strategies were mentioned in the context of how they impact the bottom line for the firm: by reducing annual capital expense (not upgrading), by reducing the risk of productivity problems (pain), or by reducing the time spent on projects (gain: speed and time). Not one respondent discussed the potential for building top-line revenue, or the ability to do things they could not do before.

Top-line Impact

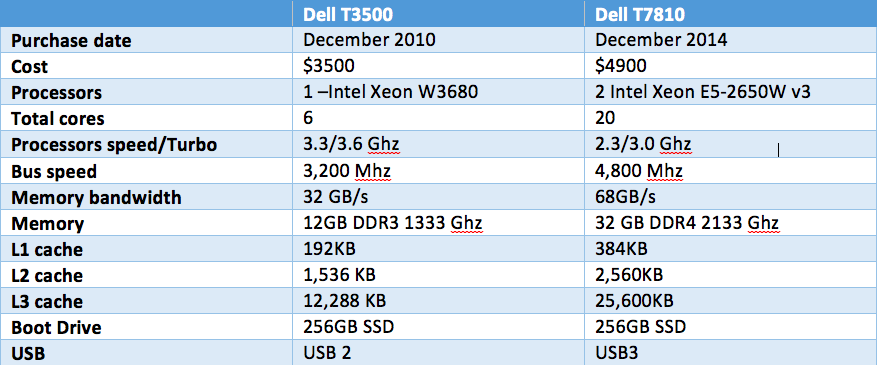

The bottom-line results we saw in our research do make sense, but they are much less important to me than the impact on my interaction with my clients. This potential for a top-line revenue increase should get you excited. My new machine runs single-core processes noticeably faster, and multi-core, multi-threaded, and hyper-threaded tasks are about 4.5x faster (in one example, a calculation that took four hours on the old machine took 53 minutes on the new one).
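The arithmetic behind that example is simple enough to check. The sketch below uses only the two run times quoted above; the function names and the eight-hour working window are assumptions added for illustration.

```python
def speedup(old_minutes: float, new_minutes: float) -> float:
    """Ratio of old run time to new run time."""
    return old_minutes / new_minutes


def runs_per_window(window_minutes: int, run_minutes: int) -> int:
    """How many complete back-to-back runs fit in a time window."""
    return window_minutes // run_minutes


OLD_RUN_MIN = 4 * 60  # four hours on the old machine
NEW_RUN_MIN = 53      # 53 minutes on the new machine
WORKDAY_MIN = 8 * 60  # assumption: an eight-hour window, for illustration

if __name__ == "__main__":
    print(f"speedup: {speedup(OLD_RUN_MIN, NEW_RUN_MIN):.2f}x")
    print(f"time saved per run: {OLD_RUN_MIN - NEW_RUN_MIN} minutes")
    print(f"runs per window, old: {runs_per_window(WORKDAY_MIN, OLD_RUN_MIN)}")
    print(f"runs per window, new: {runs_per_window(WORKDAY_MIN, NEW_RUN_MIN)}")
```

Under that assumed window, the same calculation goes from two complete runs to nine, which is the "more iterations in the same amount of time" benefit described for the Batch domain.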

It’s difficult and impractical to try to quantify the time savings for interactive processes because every situation is different, but in all cases there has been improvement. It’s easiest to understand this way: when I tried to perform some of the analysis on a live call with a client prior to this latest machine, it killed the conversation and made the firm look bad. With this upgrade, not only am I able to deal with the analysis in real time, but our clients are surprised, impressed, and looking for more. It’s not about the numbers, it’s about client impression. And that impacts the top line.

Before and After