Cray built Oak Ridge National Laboratory’s Titan supercomputer from AMD CPUs and Nvidia GPUs. Power consumption is becoming as big an issue as compute capacity.

By Alex Herrera

Has the design of supercomputers changed forever? We tend to think so, Cray and Oak Ridge National Laboratory surely think so, and Nvidia is 100% convinced and committed to the premise. The three lined up on October 25 to unveil the long-awaited Titan supercomputer, powered in part by the more conventional x86 CPUs we’re all used to seeing. But in this new machine, every x86 CPU comes paired with an Nvidia GPU (18,688 Nvidia Tesla K20 GPUs, to be exact), and that’s a match the three think is not “a” but “the” blueprint of the future.

Moore’s Law has made incredible amounts of compute capability available incredibly cheaply. Our modern smartphones have more compute power than rooms of computers could muster 30 years ago. With all that power on our desks, in the data center, on our laps, and in our hands, what could we possibly still need supercomputers for?

The answer—as Nvidia is quick to point out—is that “big problems (still) require big solutions.” While some applications can now be handled by cheap desktops, tablets, or even phones, there are plenty for which there simply is no amount of performance that will ever be “good enough.” Every last drop of incremental performance a new generation of hardware can muster gets soaked up by implementing more complex models that operate on bigger data sets and run through more refined iterations. If there is an end to the compute power these difficult problems can leverage, it’s certainly not anywhere in sight.

What kind of big problems? Weather prediction is one. Climate change is another. Both are obvious fits at Oak Ridge, which partners with NOAA (National Oceanic and Atmospheric Administration). The list goes on, including modeling biological and chemical processes to find treatments for disease and unraveling the mysteries of both cosmic and subatomic physics. In fact, there are no signs the list is getting smaller, despite the huge strides the industry has made in driving up the teraflops scale. Answering today’s questions inevitably opens a Pandora’s Box of new quandaries to explore.

1:1 ratio of CPUs and GPUs

The number and complexity of evolving models demand an incredible amount of computation. And computational demand is only half the problem; the other half is managing terabytes, even petabytes, of data. So yes, there is a good reason for Oak Ridge to upgrade its old Jaguar supercomputer, that old dog that can only deliver a measly 2.3 petaflops. With Titan, Oak Ridge scientists will have more than 20 petaflops at their beck and call. But in this era, the flops count is really not the end game. As in phones or laptops, the goal is ultimately about flops per watt.

Nvidia is quick to point out that Jaguar consumes 7.0 megawatts to achieve 2.3 petaflops, and linear extrapolation would mean a 20-petaflop Titan built by similar means would require roughly 60 megawatts, or the amount required to power about 60,000 homes. Hence, the trio realized the old solution and old technology aren’t going to work as the industry marches toward exascale computing (1 exaflop = 1,000 petaflops).
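The extrapolation is simple enough to check by hand. The sketch below reproduces it from the figures quoted above, assuming Titan’s “more than 20 petaflops” is taken as a round 20 and that power scales in direct proportion to throughput; the function and variable names are ours, for illustration only.

```python
# Figures quoted in the article.
JAGUAR_POWER_MW = 7.0     # Jaguar power draw, megawatts
JAGUAR_PETAFLOPS = 2.3    # Jaguar throughput, petaflops
TITAN_PETAFLOPS = 20.0    # assumption: "more than 20 petaflops" rounded to 20


def extrapolated_power_mw(target_petaflops, ref_power_mw, ref_petaflops):
    """Scale a reference machine's power linearly to a target throughput."""
    megawatts_per_petaflop = ref_power_mw / ref_petaflops
    return target_petaflops * megawatts_per_petaflop


jaguar_style_titan_mw = extrapolated_power_mw(
    TITAN_PETAFLOPS, JAGUAR_POWER_MW, JAGUAR_PETAFLOPS
)
print(f"{jaguar_style_titan_mw:.1f} MW")  # prints "60.9 MW"
```

That lands on the roughly 60 megawatts Nvidia cites. Real machines do not scale this cleanly, so treat the result as a back-of-the-envelope bound rather than a design estimate.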

Rather, it’s going to take a system that leverages technologies both old and new: conventional (mostly) x86 CPUs augmented with GPUs—with the GPUs harnessed for demands other than conventional 3D rendering. What started years ago as a grassroots attempt by desperate, budget-challenged academics to exploit GPUs as relatively cheap, floating-point co-processors has evolved into the blueprint for many in their exascale pursuit.

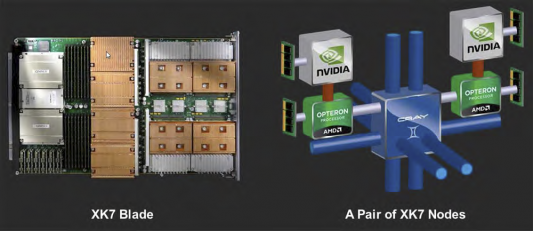

The commonality of computational demand between traditional, polygonal 3D rendering and a whole range of parallelizable, floating-point-intensive algorithms has made the GPU a component of choice for many new supercomputer architectures. Modern GPU architecture lends itself well to use as a co-processor to handle the floating-point heavy lifting, and to do so in a far more modest power budget. But of course, GPUs are not as general-purpose as some would like to think, so CPUs certainly can’t go away, even if the architect plans little in the way of heavy-duty computational load for the CPU. Titan’s system architecture clearly reflects all this rationale. It consists of 200 cabinets of Cray XK7 blade units, comprising 18,688 individual compute nodes (AMD 16-core Opteron + Nvidia Tesla K20 GPU) and delivering 20+ petaflops of aggregate throughput.

The Tesla K20 is based on the GK110, Nvidia’s Kepler-generation GPU streamlined for HPC applications. Big brother to the flagship, graphics-focused GK104 that drives GeForce and Quadro products, the K20 was built with applications like Titan in mind. It takes the baseline Kepler architecture and adds a mix of premium-performance (and premium-cost) features optimized for general-purpose GPU computing, such as high-throughput double-precision floating-point calculation, an attribute with little value in graphics rendering, but a must-have for certain HPC applications.

The choice of CPU might surprise you: it’s none other than last year’s 16-core Opteron CPU from AMD. Given that the reviews of Bulldozer were anything but super, and it’s been a year since it was introduced, is it then ironic to see it in a cutting-edge supercomputer? Well, not so much, because such assessments depend on the context. AMD definitely made some decisions that traded off some aspects of performance for this Bulldozer-class CPU, and then they delivered it late.

Worse, the company poorly set expectations for the part, because it was never destined to be a great performer for client-side computing. But GPU-accelerated supercomputing is a far different beast than typical client computing. The vast majority of floating-point computations execute on the Tesla GPUs, not the Opteron FPUs … consciously, by design. So Bulldozer’s lack of floating-point prowess is not a critical issue. But with 18,688 nodes in 200 cabinets, power density is. Hence, we can imagine Bulldozer being a reasonable fit, at least for this application.

And the fact that the part selected is essentially a year old makes sense considering the circumstances … the design of a computer of this magnitude has an extended development time, so part selections were made a long time ago. Oak Ridge’s Jaguar-to-Titan upgrade is complete and on schedule, with early results showing a 4X to 8X improvement in many scientific applications. One, the Lab’s own application calculating advanced material properties (determining the temperature above which a material loses its magnetism), delivered 10+ petaflops throughput on Titan, a rate three times higher than the Gordon Bell winner in 2011.

Our take

So of course, to Nvidia there is no question the only feasible way to build new supercomputers is to incorporate GPUs: 90% of Titan’s peak 20-petaflop performance comes from the Tesla K20s. But it’s clearly not just posturing. The company makes a great argument. Sure, watts aside, there may be other ways to go about it. But when you consider housing them in a remotely compact manner, then powering them with a practical power supply, the case for a hybrid CPU/GPU architecture to maximize flops per watt is pretty compelling.
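To put that 90% share in per-device terms, the sketch below divides the GPU portion of peak throughput across the 18,688 Tesla K20s. It assumes a round 20-petaflop peak and an exact 90/10 split, both rounded figures from this article, so the result is a rough implied average, not a published specification for either chip. The names are ours, for illustration.

```python
# Rounded figures from the article; the outputs are implied averages only.
PEAK_PETAFLOPS = 20.0   # assumption: peak taken as a round 20 petaflops
GPU_SHARE = 0.90        # share of peak attributed to the Tesla K20s
NODE_COUNT = 18_688     # one Opteron CPU and one Tesla K20 per node


def per_device_teraflops(peak_petaflops, share, device_count):
    """Average teraflops per device for a given share of peak throughput."""
    share_teraflops = peak_petaflops * share * 1000.0  # petaflops -> teraflops
    return share_teraflops / device_count


gpu_tflops = per_device_teraflops(PEAK_PETAFLOPS, GPU_SHARE, NODE_COUNT)
cpu_tflops = per_device_teraflops(PEAK_PETAFLOPS, 1.0 - GPU_SHARE, NODE_COUNT)
print(f"GPU: {gpu_tflops:.2f} TF, CPU: {cpu_tflops:.2f} TF")
# prints "GPU: 0.96 TF, CPU: 0.11 TF"
```

On these numbers each GPU contributes about nine times the peak floating-point throughput of the CPU it is paired with, which is the arithmetic behind the flops-per-watt argument.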

Today GPU-enabled supercomputers still represent the exception rather than the rule. But their role is undeniably growing. Three of the top ten ranked machines (pre-Titan) in the world include Tesla GPUs, and a sizable number on the “Green 500” (which focuses on flops per watt) do as well. Will GPU-less supercomputers become a thing of the past? Not if Intel gets its way, as its recently introduced Xeon Phi co-processor (a.k.a. Knights Corner) is going after the same applications and customers with a very different—you guessed it, x86 style—solution.

There is much more news to come on the new breed of supercomputers at the Supercomputing Conference in Salt Lake City in a couple of weeks. JPR will be there to cover it all.

Alex Herrera is a senior analyst for Jon Peddie Research.
