A generation of computer and workstation reviewers in the CAD industry has relied on SPEC/GWPG's industry-standard benchmarking software.

By Bob Cramblitt

When an online or print publication reviews a professional workstation or graphics card, the reviewer is likely to quote results from a benchmark developed by the SPEC Graphics and Workstation Performance Group (SPEC/GWPG).

We recently talked to some reviewers who are long-time users of SPEC/GWPG benchmarks to find out which benchmarks they use and why they use them. Here’s what they had to say:

David Cohn, Digital Engineering: David is one of the longest-standing users of SPEC/GWPG benchmarks. His use of them dates back to when Digital Engineering (formerly Desktop Engineering) was a print-only publication.

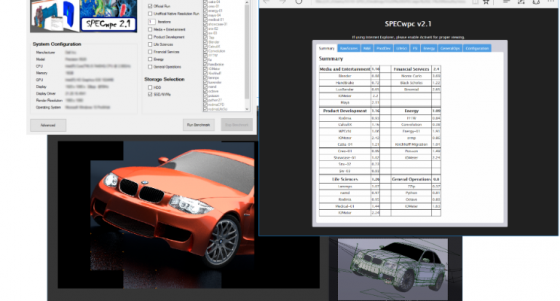

“I use SPECviewperf to test graphics performance of workstations and GPUs, SPECapc for SolidWorks to test overall performance of workstations running typical MCAD applications, and SPECwpc to test overall performance of workstations.

“I feel that SPEC/GWPG benchmarks add a greater level of detail and credibility to my reviews. As part of my review, I need to include objective performance metrics using tests that are readily available so that the same test could be run by someone else in order to validate the results I obtain.”

Greg Corke, Develop3D: As managing editor of Develop3D, Greg has used SPEC/GWPG benchmarks in reviews of hundreds of CAD/CAM workstations and graphics cards.

“At Develop3D magazine, we find the SPECapc benchmarks (both SolidWorks and PTC Creo) very useful because they provide a detailed view of graphics performance. Importantly, the results are consistent (so the benchmarks are reliable) and the benchmarks run inside actual CAD applications so they better reflect what the CAD user would experience in the real world.”

“One of the best things about the SPECapc benchmarks is they allow you to drill down into the details of 3D graphics benchmarking. The overall graphics composite scores are useful, but you can get a much better understanding of how performance relates to your specific workflows by looking at individual scores for different render modes. For example, if you only view models in ‘shaded and edges’ mode, just check out that specific score.”

Alex Herrera, Jon Peddie Research: A veteran of more than 25 years in the computer graphics industry, Alex writes Jon Peddie Research’s Workstation Report and reviews products for the TechWatch newsletter.

“The two [SPEC/GWPG benchmarks] I rely on the most are SPECwpc and SPECviewperf. I use the former as my baseline benchmark when reviewing systems. SPECwpc is especially nice if the review is for a system that is targeted at a specific vertical, as it provides composites for six different application categories [media and entertainment, product development, life sciences, financial services, energy, and general operations]. I use SPECviewperf as my standard for benchmarking workstation GPUs, as it primarily stresses the graphics subsystem.”

“Benchmarks aren’t perfect, but SPEC/GWPG does a good job of making them well-rounded. And the fact that they are maintained and published by a non-partisan consortium is critical to credibility.”

Kevin O’Brien, StorageReview.com: Kevin has spent more than a decade in editorial positions in the tech industry and is currently lab director at StorageReview.com. Despite its name, StorageReview.com covers the entire spectrum of the storage market, including computing system performance.

“We look at everything that connects into storage, so we’ll cover things from battery backups and racks, all the way out to thin clients. In the case of SPEC benchmarks, we use SPECwpc to look at the whole system — storage, CPU and GPU — and SPECviewperf to just look at GPU.”

“We’ve tried other benchmarks, but they don’t resonate as well with enterprise buyers, since many are focused on consumer applications or games. The SPEC/GWPG benchmarks incorporate workloads that resonate well with the enterprise shopper, and it’s a fairly simple process to install the benchmarks. A lot of the vendors that we work with know, understand and trust SPEC benchmarks, so for us using them is a no-brainer.”

Rob Williams, Techgage: As editor-in-chief of Techgage, Rob says his role is to make sure the online publication sticks to its mission statement: “To be an advocate of the consumer, first.”

“I take great advantage of a handful of SPEC/GWPG benchmarks, including SPECviewperf, SPECapc for 3ds Max, and, of course, SPECwpc. Each of these suites includes specific tests that would cater to a specific reader or viewer, so I like to use them all for the sake of being able to deliver important results to anyone eyeing a new workstation — regardless of their angle.

“SPEC/GWPG benchmarks allow me to test scenarios that I wouldn’t otherwise be able to test, such as viewport performance. Beyond that, even if I am able to replicate a scenario SPEC has covered, the ease of use and consistent results delivered from run to run are what keep me hooked. With the myriad SPEC/GWPG benchmarks available, I can cover a wide range of popular workstation workloads to show my readers just how current hardware scales, whether we’re looking at a CPU, GPU or an entire system.”

To find out more about SPEC/GWPG, visit the website, subscribe to the newsletter or join the Graphics and Workstation Benchmarking LinkedIn group: https://www.linkedin.com/groups/8534330.

Bob Cramblitt is Principal at Cramblitt & Company, and has been assisting SPEC/GWPG with its communications for many years.