Making people and creatures for fun and profit.

It was just a few years ago, 2015 actually, that Adobe introduced its character animation tool to the world at NAB. At that time, it was part of Adobe’s After Effects and seemed a cute, somewhat quirky addition to that program. Back then, a quick look at Character Animator made it seem similar to the facial animation apps that have come and gone in the content creation world since 2009 or so.

Obviously, that was a misperception. This year, the company returned to NAB and showcased Character Animator as a standalone product for content creation. The company says it has gone through a very active beta period, aided by its availability in After Effects. As a result, the company has been able to come to NAB with high-visibility customers and a strong ecosystem. Character Animator has been used for segments of the Stephen Colbert show, which have evolved into the Cartoon President series. It has been used in an episode of The Simpsons in which the actors live-streamed their characters’ vocal performances, and the characters were animated with keyboard-triggered motion. During the show, Homer Simpson answered questions live on-air. Other shows using Character Animator include Chelsea, Netflix’s show built around Chelsea Handler, and CNBC’s Mad Money with Jim Cramer.

Customers have been putting their own spin on creating characters and content. The Adobe-sponsored YouTube series “Character Animator Tips and Tricks” shows a wealth of content being created in different styles.

Character Animator Product Manager Sirr Less isn’t at all surprised at the acceptance of Character Animator. He sees it as further evidence for his belief that communications are rapidly shifting. He, like many of the people doing content creation work, got his start using turnkey systems like “the Avid” (Avid’s Media Composer, a software-based turnkey system sold with equipment). But when, in the 2000s, video production began to shift to the desktop, aided in no small part by Apple Final Cut Pro and Adobe Premiere, his small company doing work in video content creation and game development made the leap to the desktop and saw a business grow in short-form content and marketing. The whole industry has been changed by that transition, and Less sees more opportunity coming with social media and new tools like Character Animator. “I know the future is animation,” says Less, who sees opportunity in the disruption caused by the shift from static design to “things in motion.” Less has seen this shift affect the content creation tools and expects it to continue. Previously, he says, static content such as illustrations and images was used to generate film, video, and animation. Now, he says, static content is often inspired by or taken directly from motion content.

All of which goes a long way toward explaining why all those cute little single-function apps disappeared while Character Animator seemed to take root so easily. Timing is everything.

Integrating parts

Time sure does make a difference. Over the years, Adobe has grown to become a market leader in content creation. Adobe can claim over 90% penetration in the professional content creation market in illustration and imaging, and it has a dominant position in video and compositing. The company has finally managed to integrate its tools (more or less) to enable easier sharing between programs. Also, and just as important, Adobe has grown Adobe Stock, its content business, into a resource with benefits for all of its programs. For instance, it has materials and models for Dimension CC, the company’s evolving 3D product marketing tool, as well as stock video, templates for Photoshop content, and, of course, characters for Character Animator.

Today, it’s safe to say that Adobe products are part of the workflow for just about all animators, filmmakers, colorists, designers, and content creators in general. Aside from being a popular feature for creatives interested in working with different forms of content creation, Character Animator gives Adobe an important link in the content workflow.

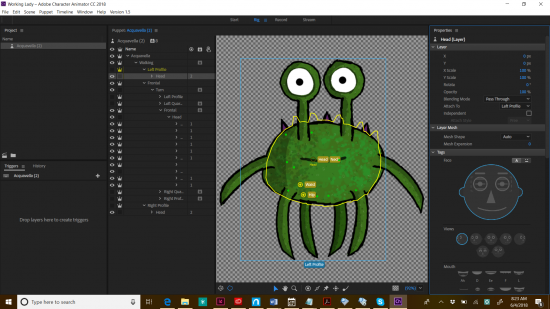

As a true Adobe product, Character Animator works the way an experienced user of Illustrator and Photoshop might expect. The trick is to put every element of the animation on a separate layer. That method of working is comfortable to users of Photoshop and Illustrator and of course layers are also the key to After Effects, as a compositor. In this sense, it’s an example of a tool that marries the video world and imaging world. It’s also an indicator of how Adobe is thinking of its products and the ways in which they will work together.

In fact, the really earth-shattering aspect of Character Animator is its most obvious feature: it marries 2D animation to video. You don’t have to draw 24 frames a second. You add motions and actions to characters, you tell them what to say, and you send them on their way.

Adobe Sensei was used in the development of Character Animator. In fact, Sensei is used in the development of every Adobe product. In this case, Sensei put machine learning to work watching people talk and tracking their lip movements to create a library of lip shapes and sounds to drive the characters. An early example of that work was the Face Tracking feature introduced at the same time as Character Animator. The role of Sensei in Adobe development is only going to increase, pledges Adobe Technology.

The next logical step in the evolution of Character Animator is the ability to act out the whole character: to walk, slouch, wave arms, and dance using motion capture techniques. Adobe agrees that this is the next step, and the company is working on it. Adobe also has a whole lot of different animation tools, some originally in After Effects, some coming from the Flash world, like Adobe Animate. At Adobe MAX 2017, the company gave a sneak peek of a new tool code-named Puppetron, which can create stylized images from photographs. As he demo’d the new tool, Jakob Fišer said he can also bring the head he designed into Character Animator, animate it, and have it talk with his voice. “Now I can play with all my toys.”

That is what Adobe ultimately wants for its customers. Character Animator will also benefit from work the research labs have done on other fronts, including non-photorealistic rendering. Puppetron takes advantage of earlier work from Adobe Labs and Cornell University called Style Transfer. Fišer was also involved in that project.

In a brief follow-up chat with Sirr Less after NAB, he said that there will be much more to come on this front. Obviously, he couldn’t say much, but it’s clear that Adobe has relevant technology scattered all through its development groups. The company has also pledged to continue integrating its technology so its applications can work more smoothly together. Animation is clearly going to be a major front in this effort.

It’s truly easy

Great, so anyone can do it. There goes the art and craft right into the grubby hands of everyone. Animation will become just another tool.

Yes, great! This is another amazing example of how the melding of the real world and the digital world in content creation is not only making creativity easier, it’s enabling new forms of personal expression. Media itself is changing.

Now for the reality part. If you get all excited and leap into Character Animator, you’ll be confronted by the utterly demoralizing Adobe interface: a black hole with menus on top and on the sides. No problem. This is sort of the Adobe way; watch a getting-started video. After downloading a character from the Creative Cloud store and bringing it into Character Animator, your work is just about done. The software separates the components into their proper places within the interface, and you can start talking for your character immediately.

Creating characters in Photoshop and Illustrator is a little more difficult for obvious reasons: talent and imagination are a big help on this front. But it’s also logical and straightforward. Think about how characters will move and speak, and then build the layers of puppet parts with their dependencies.

Adobe Character Animator has taken away quite a bit of the manual labor and makes the animator the driver. This is transformative.

What do we think?

As so often happens, the real importance of Character Animator didn’t come through immediately. It was in the act of trying to make the thing work that I appreciated what a true revolution the product can bring about. On the one hand, Character Animator works within the Adobe workflow familiar to customers; on the other, it keeps animators inside the Adobe environment and its products.

It gives a bit of a clue about Adobe’s plans for the future. It isn’t enough that Adobe is used in just about every creative workflow; Adobe wants to own the pipeline.