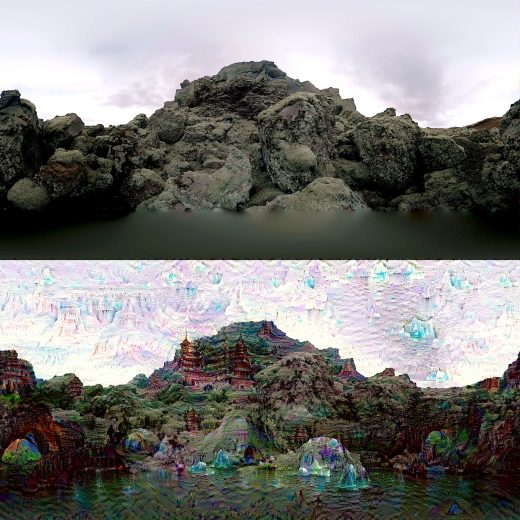

Automating the process of style transfer, asking the software to add the style of one photo to another.

Adobe has released a paper on Deep Photo Style Transfer describing work by Adobe and Cornell researchers (Fujun Luan, Cornell University; Sylvain Paris, Adobe; Eli Shechtman, Adobe; Kavita Bala, Cornell University) who tinkered with image style transfer techniques. The idea of style transfer is not all that new. It has been used to give a photo a painterly look by asking the software to apply the style of one image to another. The most common approaches produce a result that looks like a painting and, in fact, that is how the algorithms are widely used. The Prisma mobile app is a good example: users can make a photo look Picasso-esque or Van Gogh-like. The problem is that as the software tries to reconcile disparate image components, the results show imprecise edges and stray artifacts that we associate with artworks rather than photos. The commonly used algorithm is CNNMRF, a combination of Convolutional Neural Networks and Markov Random Fields, and the solution in the past has been to go all the way and embrace the painterly effect.
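Why do these methods drift toward painterly results? One way to see it is through the "style" representation used in neural style transfer. CNNMRF matches patches of neural features, which is more involved; the sketch below instead shows the simpler Gram-matrix style representation from Gatys et al.'s neural style transfer, a swapped-in technique used here only for illustration. The feature values are hypothetical toy numbers, not outputs of a real network. The Gram matrix records which feature channels fire together but discards *where* they fire, so a spatially scrambled image can have exactly the same "style".

```python
def gram_matrix(features):
    """Channel-by-channel correlations of a feature map.

    features: list of C channels, each a flat list of N activations
    (one per spatial position). Returns a C x C matrix normalized by N.
    """
    n = len(features[0])
    return [
        [sum(a * b for a, b in zip(fi, fj)) / n for fj in features]
        for fi in features
    ]


def style_loss(gen_features, style_features):
    """Mean squared difference between the two Gram matrices."""
    g_gen = gram_matrix(gen_features)
    g_sty = gram_matrix(style_features)
    c = len(g_gen)
    return sum(
        (g_gen[i][j] - g_sty[i][j]) ** 2 for i in range(c) for j in range(c)
    ) / (c * c)


# Hypothetical 2-channel, 4-position feature maps.
style = [[1.0, 2.0, 3.0, 4.0], [0.0, 1.0, 0.0, 1.0]]
shuffled = [[4.0, 3.0, 2.0, 1.0], [1.0, 0.0, 1.0, 0.0]]  # same values, positions reversed
print(style_loss(style, shuffled))  # 0.0: layout changed, "style" did not
```

Because a loss like this is blind to spatial layout, nothing in it keeps colors and textures inside object boundaries, which is one reason plain style transfer tends to smear edges.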

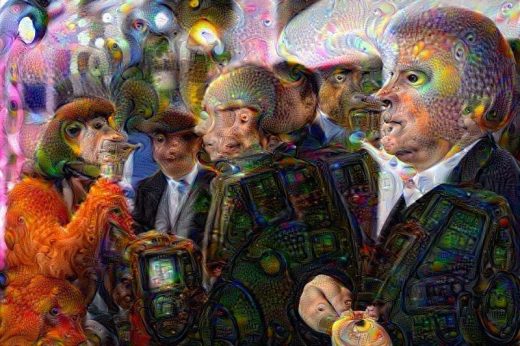

Several sites let you play with this software for hours of fun. To try it out, visit the Deep Dream Generator site, which lets you upload a picture and try out the different effects.

The alternative is to use filters or scripts in imaging programs to reproduce the look of one photo in another, but that approach is more of a blunt instrument: it requires a skilled retouching artist to bring out the details and adjust colors in certain areas to get the desired effect.

The researchers’ goal was to take the algorithms that had been used for style transfer and refine them so that the result stays within the lines, better transferring the lighting, color, and feeling of another photo while remaining photorealistic.

Google says it has been able to train image recognition systems better by understanding how they work internally. The machine-learning process produces many layers of information as it tries to find similarities between an image and other images it has been shown. However, the researchers also found that they could take the opposite approach and coax the network into recognizing elements in an image as features it had learned from other images. As a result, they could get wonderful images by exaggerating what certain layers of the process detect. There is a Google paper on the subject, and the company has also released the Deep Dream algorithm.
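"Exaggerating what a layer detects" can be read as gradient ascent on the input image: nudge the pixels in whichever direction makes a chosen layer respond more strongly. The sketch below is a toy illustration under loud assumptions: the "layer" is a hypothetical 2x3 linear map and the "image" is three numbers, whereas Deep Dream works on a trained convolutional network and real images. Only the core idea (change the input, not the weights, to increase a layer's activation) carries over.

```python
def layer(weights, x):
    """Toy linear 'layer' (hypothetical): one activation per row of weights."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def objective(weights, x):
    """How strongly the layer responds: half the squared activation norm."""
    return 0.5 * sum(a * a for a in layer(weights, x))


def dream_step(weights, x, lr=0.1):
    """One gradient-ascent step on the *input*, amplifying the layer's response.

    For objective 0.5 * ||W x||^2 the gradient with respect to x is W^T (W x).
    The weights are never changed; only the input moves.
    """
    acts = layer(weights, x)
    grad = [
        sum(weights[r][c] * acts[r] for r in range(len(weights)))
        for c in range(len(x))
    ]
    return [xi + lr * g for xi, g in zip(x, grad)]


W = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.0]]  # hypothetical layer weights
x = [0.2, 0.1, 0.0]                      # hypothetical "image"
before = objective(W, x)
for _ in range(5):
    x = dream_step(W, x)
print(objective(W, x) > before)  # True: the layer now responds more strongly
```

Whatever patterns the layer already faintly sees in the input get reinforced on every step, which is why repeated passes make those features increasingly visible.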

Another famous researcher in the field is none other than actress Kristen Stewart, who worked with Adobe researcher Bhautik J. Joshi and Starlight Studios producer David Shapiro to use style transfer techniques to redraw scenes from her short film “Come Swim” in the style of her painting. The paper is published as “Bringing Impressionism to Life with Neural Style Transfer in Come Swim.”